2026-05-13 23:30:35

AI workloads are exposing the limits of what most databases were designed to handle. Databases will need to process petabytes of data, millions of writes per second, and data types like vectors – all while delivering consistent sub-millisecond P99 latency.

Join ScyllaDB co-founders Dor Laor (CEO) and Avi Kivity (CTO) to explore what real-time AI workloads actually require, and what it takes to stay ahead.

You will learn:

How AI workloads are shifting in real-world applications

The specific pressures these new patterns place on databases

What architectural features help teams meet AI’s demands

In early 2023, the Databricks rate limiter ran on a simple architecture. An Envoy ingress gateway made calls to a Ratelimit Service, which in turn queried a single Redis instance. The setup handled the traffic it was designed for, and the per-second nature of rate limiting meant the counts could stay transient without any durability guarantee.

Then Databricks launched real-time model serving. A single customer could now generate orders of magnitude more traffic than the service was built for, and three specific cracks appeared.

Tail latency climbed sharply under load, worsened by two network hops on every request and a P99 network latency of 10 to 20 milliseconds between services in one of its cloud providers.

Adding machines and bolting on caches stopped helping past a certain point.

The single Redis instance also represented a single point of failure that the team could no longer tolerate.

The team redesigned the service, and the rebuild merits attention because the most interesting part is what they chose to give up.

Strict accuracy is expensive at scale, and Databricks traded it for a faster critical path, a horizontally scalable counter, and a rate limiter that answers as if the decision has already been made by the time the client checks.

In this article, we look at how Databricks implemented rate limiting at scale, how they shrank the critical path, and the accuracy tradeoff that shrinking usually requires.

Disclaimer: This post is based on publicly shared details from the Databricks Engineering Team. Please comment if you notice any inaccuracies.

Strip away the framing, and rate limiting reduces to a counting problem. Each request arrives, the system locates the right counter, compares it against a threshold, and either allows or rejects the request. The design question is where that counter is stored and how quickly it can be read and updated.
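Here is a minimal sketch of that counting problem. It assumes a fixed one-second window and a plain in-process dictionary, and the class and method names are hypothetical, not Databricks code. Because it keeps only the current window per key, it also shows how transient these counts are: once a window rolls over, the old value is discarded.

```python
import time


class FixedWindowLimiter:
    """Hypothetical fixed-window limiter: one in-memory counter per key."""

    def __init__(self, limit_per_window, window_seconds=1.0, clock=time.monotonic):
        self.limit = limit_per_window
        self.window = window_seconds
        self.clock = clock
        self.state = {}  # key -> (window_index, count in that window)

    def allow(self, key):
        # Locate the counter for this key in the current window.
        window_index = int(self.clock() // self.window)
        stored_window, count = self.state.get(key, (window_index, 0))
        if stored_window != window_index:
            count = 0  # the previous window rolled over; its count is irrelevant
        # Compare against the threshold, then allow (and count) or reject.
        if count >= self.limit:
            self.state[key] = (window_index, count)
            return False
        self.state[key] = (window_index, count + 1)
        return True


now = [0.0]  # fake clock so the example is deterministic
limiter = FixedWindowLimiter(limit_per_window=2, clock=lambda: now[0])
print([limiter.allow("customer-a") for _ in range(3)])  # [True, True, False]
now[0] = 1.0  # the next one-second window begins
print(limiter.allow("customer-a"))  # True
```

The whole design question lives in `self.state`. In the old architecture that dictionary was effectively a remote Redis instance, so every `allow` call paid for network round trips.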

In the old Databricks architecture, the counter was stored in Redis. See the diagram below:

A request flowed through Envoy, hit the Ratelimit Service, and triggered a call to Redis. That meant two network hops on the critical path of every request. In a cloud provider where P99 network latency sat between 10 and 20 milliseconds, those hops dominated the rate limit decision time. A check that should have cost microseconds was costing tens of milliseconds.

See the diagram below:

The team had already tried to work around this. Envoy can be configured with consistent hashing so that requests with the same key land on the same Ratelimit Service instance, which lets that instance keep a local count. The approach helped, but it hit three walls.

Non-Envoy services could not participate in the scheme, which fragmented the rate limit view.

When the service cluster scaled up or restarted, the hash assignments churned, which forced regular syncs back to Redis.

Lastly, consistent hashing can be prone to hotspotting, where one very popular key saturates a single machine while its neighbours sit idle. The only way to push through those hotspots was to over-provision the entire cluster.
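A generic consistent-hash ring makes the churn and hotspot walls easy to see. The sketch below is a textbook ring with virtual nodes, not Envoy's ring-hash implementation, and the server and key names are hypothetical. Adding one server reassigns a slice of keys, and each moved key loses its local count. Every request for a given hot key still lands on a single server, however many servers exist.

```python
import bisect
import hashlib


def stable_hash(value):
    # First 8 bytes of SHA-256: stable across processes, unlike built-in hash().
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")


class HashRing:
    """Generic consistent-hash ring with virtual nodes (illustrative only)."""

    def __init__(self, servers, vnodes=100):
        self.vnodes = vnodes
        self.ring = []  # sorted list of (hash, server)
        for server in servers:
            self.add(server)

    def add(self, server):
        for i in range(self.vnodes):
            bisect.insort(self.ring, (stable_hash(f"{server}#{i}"), server))

    def owner(self, key):
        # Walk clockwise to the first virtual node at or after the key's hash.
        idx = bisect.bisect_left(self.ring, (stable_hash(key), ""))
        return self.ring[idx % len(self.ring)][1]


ring = HashRing(["rl-0", "rl-1", "rl-2"])
keys = [f"customer-{i}" for i in range(1000)]
before = {k: ring.owner(k) for k in keys}

ring.add("rl-3")  # a scale-up event
moved = [k for k in keys if ring.owner(k) != before[k]]
print(f"{len(moved)} of {len(keys)} keys changed owner")
```

Every key that moves now belongs to the new server, so its count there starts from zero. That is why scale-ups and restarts forced syncs back to Redis.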

This is where scaling stops being additive. Adding machines stopped moving the latency numbers, and adding more caching introduced more inconsistency. The architecture itself was the ceiling, and the team had to change it.

Rate limiting is transient. A per-second count exists only as long as that second is current, and the moment it rolls over, the old value becomes irrelevant.

That property opens a door. If a count only needs to live for a second, durable storage is more than the problem requires. The count can live in memory on the server that owns it, and losing that server during a restart costs almost nothing.

The challenge is that a single server cannot hold counts for every rate limit key across the fleet. The service needs a way to partition keys across servers, and a way for any client to quickly find the server that owns a given key.

That is the problem Dicer solves at Databricks. For the purposes of this discussion, Dicer can be treated as a black box. Dicer is a routing layer that lets a service keep state in memory while remaining horizontally scalable and fault-tolerant. Clients ask Dicer which server owns a given key, and that server confirms it is the authoritative owner before handling the request.
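Dicer is treated as a black box here, but the contract just described can be sketched in a few lines: look up the owner, then have the owner confirm before serving. Everything below is hypothetical. The slice count, the round-robin slice assignment, and the class names are illustrative assumptions, not Dicer's API.

```python
import hashlib


class Router:
    """Hypothetical stand-in for the routing layer: key -> owning server."""

    def __init__(self, servers, num_slices=16):
        self.num_slices = num_slices
        self.assign(servers)

    def assign(self, servers):
        # Round-robin slices across servers; a real auto-sharder would also
        # balance load and move slices gradually.
        self.owners = {s: servers[s % len(servers)] for s in range(self.num_slices)}

    def owner_of(self, key):
        digest = hashlib.sha256(key.encode()).digest()
        return self.owners[int.from_bytes(digest[:8], "big") % self.num_slices]


class CounterServer:
    """Keeps counts in memory only for the keys it currently owns."""

    def __init__(self, name, router):
        self.name = name
        self.router = router
        self.counts = {}

    def increment(self, key):
        # Confirm authoritative ownership before touching the counter.
        if self.router.owner_of(key) != self.name:
            raise LookupError(f"{self.name} does not own {key!r}")
        self.counts[key] = self.counts.get(key, 0) + 1
        return self.counts[key]


router = Router(["rl-0", "rl-1"])
servers = {name: CounterServer(name, router) for name in ("rl-0", "rl-1")}
owner = router.owner_of("customer-42")
print(owner, servers[owner].increment("customer-42"))
```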

See the diagram below:

With Dicer in place, the Ratelimit Service could move every counter in-memory. The network hop to Redis disappeared. Server-side tail latency came down sharply, and the team could scale horizontally by adding replicas to Dicer’s assignment pool. The single point of failure went away as well, because each replica became the authoritative store for its own slice of keys. Restarts and scale events redistributed ownership without any external coordination, and the churn that had forced Redis syncs in the old consistent hashing setup stopped having an impact.

This solved one problem and exposed another.

The server side was now fast, but the client side still made a synchronous call across the network for every single request. A rate limit check that had been waiting on Redis was now waiting on the Ratelimit Service. The P99 came down, but the shape of the problem remained. The critical path still had a round trip on it.

This is where the team made its most consequential move.

Millions of client requests per second were still translating into millions of synchronous calls to the Ratelimit Service. Even with the server answering in memory, that represented significant network traffic, significant server capacity, and significant client-side waiting. The team asked a harder question.

Does every request truly need to wait for a rate limit decision before proceeding?

They considered three alternatives:

The first was prefetching tokens on the client, where the client pulls a block of capacity and answers rate limit checks locally.

The second was batching requests on the client and waiting for a response before releasing them.

The third was sampling, where only a fraction of requests get checked.

Each had problems. Prefetching carries messy edge cases during startup, expiry, and token exhaustion. Batching adds delay and memory pressure. Sampling works for high-QPS limits but falls apart when the limit itself is small.

What the team built is called batch-reporting, and it rests on two ideas:

The first is that clients make no remote calls on the rate limit path.

The second is optimistic rate limiting, where the default is to allow the request and reject only when the client already has a reason to reject from an earlier report.

The client counts how many requests it let through and how many it rejected, grouped by rate limit key. Every 100 milliseconds or so, a background thread packages those counts and reports them to the Ratelimit Service. The server responds with instructions telling the client which keys should be rejected, until which timestamp, and at what rejection rate.
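Below is a minimal client-side sketch of batch-reporting. It assumes the server replies with a mapping from rate limit key to a (reject-until timestamp, rejection rate) pair, and that each reply carries the full current set of instructions. The class name, message shapes, and `send_report` callable are hypothetical, since the wire format isn't described. `check` never makes a remote call, and a background thread calls `report_once` roughly every 100 ms.

```python
import random
import threading
import time
from collections import Counter


class BatchReportingClient:
    """Optimistic client: allow by default, report counts in the background."""

    def __init__(self, send_report, interval_s=0.1, clock=time.time, rng=random.random):
        self.send_report = send_report  # callable(report dict) -> instructions dict
        self.interval_s = interval_s
        self.clock = clock
        self.rng = rng
        self.lock = threading.Lock()
        self.allowed = Counter()
        self.rejected = Counter()
        self.instructions = {}  # key -> (reject_until_ts, rejection_rate)

    def check(self, key):
        """Local decision, no network call: reject only if told to earlier."""
        with self.lock:
            until, rate = self.instructions.get(key, (0.0, 0.0))
            reject = self.clock() < until and self.rng() < rate
            (self.rejected if reject else self.allowed)[key] += 1
            return not reject

    def report_once(self):
        # Swap the counters out under the lock, then talk to the server without it.
        with self.lock:
            report = {"allowed": dict(self.allowed), "rejected": dict(self.rejected)}
            self.allowed.clear()
            self.rejected.clear()
        instructions = self.send_report(report)
        with self.lock:
            self.instructions = dict(instructions)

    def start(self):
        """Report every interval on a daemon thread; call .set() on the result to stop."""
        stop = threading.Event()

        def loop():
            while not stop.wait(self.interval_s):
                self.report_once()

        threading.Thread(target=loop, daemon=True).start()
        return stop


now = [100.0]
reports = []


def fake_server(report):
    # Pretend the server decided "hot-key" is over its limit for one second.
    reports.append(report)
    return {"hot-key": (now[0] + 1.0, 1.0)}


client = BatchReportingClient(fake_server, clock=lambda: now[0])
print(client.check("hot-key"))  # True: nothing known yet, so allow
client.report_once()
print(client.check("hot-key"))  # False: rejection rate 1.0 until t=101
```

The first `check` is allowed even though the key is already "over the limit" from the server's point of view. That is the optimistic default, and it is exactly where overshoot comes from.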

The diagram below shows the three architectures side by side:

The impact was substantial:

Tail latency on rate limit calls fell by roughly a factor of ten, because the calls were effectively free for the client.

Spiky inbound traffic turned into constant outbound reports because the reporting cadence is fixed regardless of how bursty the underlying traffic becomes.

Server-side load became predictable for the first time.

The inversion merits emphasis. The rate limiter used to be asked before each decision. Now it is told after. That inversion sits at the core of the redesign, and the tradeoff is explicit. Databricks accepts that some requests over the limit will slip through between reports, and their backends are built to tolerate that overshoot.

Batch-reporting introduced a problem of its own.

Between the moment a client starts exceeding a limit and the moment the server tells it to reject, traffic can leak through. A hundred milliseconds of overshoot at high QPS amounts to a lot of requests. The team wanted to keep overshoot within roughly 5 percent of the policy, and reaching that target took three layers of fixes.

The first was a rejection rate returned by the server. The idea is to use the past to predict the near future. If the last second’s traffic exceeded the policy by some amount, the formula rejectionRate = (estimatedQps - rateLimitPolicy) / estimatedQps tells the client what fraction of upcoming requests to drop. This assumes that the next second’s traffic resembles the last second’s, which often holds well enough to be useful.
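As a worked example of that formula, take a last-second estimate of 1,250 QPS against a 1,000 QPS policy. The client should drop (1250 - 1000) / 1250 = 20 percent of upcoming requests. If the next second looks like the last, that leaves about 1,000 QPS admitted. The function name is hypothetical, and clamping to zero when traffic is under the limit is an added assumption.

```python
def rejection_rate(estimated_qps, rate_limit_policy):
    """Fraction of upcoming requests to drop, per the formula in the text."""
    if estimated_qps <= rate_limit_policy:
        return 0.0  # at or under the limit: drop nothing (clamp is an assumption)
    return (estimated_qps - rate_limit_policy) / estimated_qps


rate = rejection_rate(estimated_qps=1250, rate_limit_policy=1000)
print(rate)  # 0.2
print(1250 * (1 - rate))  # roughly 1000 requests per second still admitted
```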

The second was a client-side local rate limiter as a defense in depth. When traffic spikes so obviously that the batch cycle has no chance of catching up, the client starts rejecting locally based on its own counts. This catches the extreme cases immediately rather than waiting for a round trip.

The third was the algorithm itself. Once autosharding lets the service hold counts in memory, the token bucket becomes feasible. The token bucket has a useful property that a fixed window lacks. Fixed window resets to zero at the end of every interval, so a customer can blast traffic right at a window boundary and technically stay within the policy while sending double the intended rate during the crossover. The token bucket continuously fills and drains, and it can go negative. When a customer sends too many requests, the bucket remembers and stays empty until the refill catches up. The reset problem disappears. Token bucket also approximates a sliding window when configured without extra burst capacity, which produces a stricter shape than a fixed window for most limits.
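The sketch below contrasts the two behaviours under an assumed policy of 100 requests per second, with bucket capacity equal to the per-second rate (no extra burst). Debiting already-admitted requests unconditionally is our reading of "it can go negative" in a batch-reported setting. It is not a confirmed detail of Databricks' implementation, and the names are hypothetical.

```python
class TokenBucket:
    """Token bucket whose balance may go negative (assumed semantics).

    Tokens refill continuously at `rate` per second, capped at `capacity`.
    Requests that optimistic clients already let through are debited
    unconditionally, so a burst can push the balance below zero; the key
    then stays throttled until the refill pays off the debt.
    """

    def __init__(self, rate, capacity, now=0.0):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.last = now

    def _refill(self, now):
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = max(self.last, now)

    def debit(self, n, now):
        self._refill(now)
        self.tokens -= n

    def is_throttled(self, now):
        self._refill(now)
        return self.tokens < 0


def fixed_window_admitted(timestamps, limit, window=1.0):
    """How many requests a fixed-window limiter admits for these arrival times."""
    counts, admitted = {}, 0
    for ts in timestamps:
        w = int(ts // window)
        if counts.get(w, 0) < limit:
            counts[w] = counts.get(w, 0) + 1
            admitted += 1
    return admitted


# Policy: 100 requests/second. A customer bursts right across the boundary.
burst = [0.99] * 100 + [1.00] * 100
print(fixed_window_admitted(burst, limit=100))  # 200 admitted within ~10 ms

bucket = TokenBucket(rate=100, capacity=100)
bucket.debit(100, now=0.99)
bucket.debit(100, now=1.00)
print(bucket.is_throttled(1.00), bucket.is_throttled(1.50), bucket.is_throttled(2.00))
# True True False: the debt is remembered until the refill catches up
```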

This is where the algorithm choice was gated by the storage choice. Token bucket needs compare-and-set style logic on every increment, which was slow in Redis. In-memory, the same operation is close to free. Once the token bucket was viable, the earlier rejection rate mechanism became unnecessary, and the team eventually converted every rate limit in the system to a token bucket.

The Databricks story resolves into three decisions that depend on each other:

The first is the algorithm, which determines how the counter behaves at the boundaries of time intervals. Fixed window, sliding window, and token bucket each produce different behaviour in that regard.

The second is where the state lives, whether in a shared external store like Redis, in an in-memory counter on a single server, or in an in-memory counter sharded across a cluster of servers through some routing layer.

The third is the sync model, which can either require every request to wait for a synchronous decision or allow clients to make local decisions and reconcile through asynchronous reports.

The old Databricks architecture sat at one corner of that space, combining a fixed window, shared Redis, and synchronous per-request checks. The new architecture sits at a different corner, combining token bucket, sharded in-memory storage, and asynchronous batch reports.

The dependency chain is worth understanding. Token bucket needs cheap compare-and-set semantics, which rules out Redis at the QPS Databricks sees, which forces in-memory state. In-memory state across many counters forces sharding, because one server cannot hold everything. Sharding with authoritative per-key ownership is what enables batch-reporting, because each shard can act as the source of truth for its keys without coordinating with peers.

These constraints explain the order of the rollout. Sharded in-memory came first, batch-reporting followed on top of it, and token bucket replaced the algorithm once the state architecture could support it.

The end result is a rate limiter that is faster, more resilient, and more scalable than what came before, at the cost of strict accuracy. That tradeoff is the foundation of the design.

A system that has to enforce limits exactly, because each request over the limit costs real money or violates a contract, would have to pick a different architecture. Databricks could afford this one because their backends tolerate roughly 5 percent overshoot.

A few smaller details from the rollout are worth noting. The team built a localhost sidecar next to the Envoy ingress to host the batch-reporting logic, because Envoy is third-party code they could not change directly. Before in-memory counting was ready, a Lua script on Redis batched writes together to keep batch-reporting latency manageable during the migration.

The rebuild reframes what rate limiting is as a system problem.

The algorithm tends to get the attention, but the storage and sync model determine whether the algorithm can run at scale. Distributed counting is a single design problem with three coupled aspects rather than three independent ones.


2026-05-12 23:31:03

Underneath every review sits a purpose-built, independent context engine. It’s the layer that decides what the agent actually sees before a single token of generation happens.

Purpose-built because code review demands different context than chat or autocomplete: the relevant context fragments are assembled specifically for each review.

The engine assembles inputs across four planes:

Sandbox. Cloned repo, dependency analysis, multi-repo context and linters/SAST (ESLint, Semgrep) running on the change.

Review instructions. Your coding guidelines, AGENTS.md, path- and AST-scoped rules, tone, and learnings from past reviews.

Integrations. MCP tools, issue trackers (Jira, Linear), CI/CD failures, and web search.

LLMs. Routing across OpenAI and Anthropic.

In 2020, Figma’s data synchronization architecture was about five lines of logic. A cron job ran once a day, queried every row from a database table, dumped it into S3, and loaded it into Snowflake.

It was straightforward, easy to reason about, and it worked.

Three years later, that same simplicity was costing Figma millions of dollars a year and leaving their analytics team looking at data that was already days old by the time they could query it.

For reference, Figma is a collaborative design platform where teams create, prototype, and iterate on user interfaces together in real time. If you’ve used a modern app or website, there’s a high chance the screens were designed in Figma or that Figma was part of the workflow.

Since its early days, the product has expanded well beyond its core design tool. FigJam added collaborative whiteboarding in 2021. Dev Mode launched in 2023 to bridge the gap between designers and developers. Figma Make brought AI-powered app prototyping into the mix. The company also localized for the Brazilian, Japanese, Spanish, and Korean markets.

All of that growth meant an explosion in the volume and complexity of data flowing through Figma’s systems every day.

In this article, we look at what happened to Figma’s data pipeline as the company grew and how its engineering team rebuilt it to keep up.

Disclaimer: This post is based on publicly shared details from the Figma Engineering Team. Please comment if you notice any inaccuracies.

Figma’s original data pipeline did what’s called a full sync. Every run copied the entire contents of a database table, regardless of how much had actually changed since the last run. If a table had ten million rows and only fifty changed that day, the pipeline still copied all ten million. When tables are small, this is fast and cheap.

To start with, Figma’s production databases were hosted on Amazon RDS PostgreSQL and served live user traffic. Every time someone opens a file, saves a change, or loads a project, those databases handle the request. Running heavy analytical queries on these same databases, things like computing company-wide KPIs or analyzing usage trends across millions of users, would compete with live traffic and slow down the product. So like most companies at this scale, Figma maintains a separate analytics warehouse in Snowflake, a database built specifically for these kinds of large, complex queries. The catch is that data has to get from one to the other. That transfer is the synchronization pipeline.

But Figma’s tables didn’t stay small.

As mentioned, between 2021 and 2025, they launched FigJam, Dev Mode, Figma Make, and expanded localization to serve the Brazilian, Japanese, Spanish, and Korean markets. The user base grew rapidly, and so did the data.

By 2023, daily synchronization tasks were taking around six hours to complete. The largest tables took several days. To make things worse, the pipeline required dedicated database replicas just to handle the export load without affecting production traffic. Those replicas alone cost millions of dollars annually.

Figma evaluated three options to handle this:

They could keep the existing system, but sync delays and replica costs made that untenable.

They could add parallelism to speed up the full copies, but this was a band-aid that wouldn’t scale as tables continued to grow.

Or they could overhaul the pipeline entirely.

They chose the overhaul, committing to incremental synchronization. Instead of copying entire tables every run, they’d capture only what changed and apply those changes to the destination. The concept is simple, but the execution is not.

Incremental synchronization flips the model. Rather than asking “what does the whole table look like right now?” it asks “what changed since last time?” Only the inserts, updates, and deletes since the last sync get transferred and applied. For a table with ten million rows where fifty changed, you’re now moving fifty rows instead of ten million.

The mechanism that makes this possible is called Change Data Capture, or CDC. Relational databases such as PostgreSQL keep an internal log of every write operation, known as the write-ahead log, for their own crash-recovery purposes. CDC reads that log and converts it into a stream of change events. Because it piggybacks on bookkeeping the database is already doing, CDC adds relatively little overhead to the source database.

The diagram below shows how CDC works on a high-level:

Those change events need somewhere to go. Figma uses Kafka, a distributed streaming platform that acts as a buffer between the production database and Snowflake.

As CDC captures changes, it publishes them to Kafka topics, one topic per table. Snowflake then consumes from those topics at its own pace. This decoupling ensures that the production database doesn’t need to know or care whether Snowflake is online, busy, or behind. It just writes events to Kafka, and Kafka holds onto them until the consumer is ready. If Snowflake goes down for maintenance, no data is lost. The events queue up in Kafka and get processed once Snowflake comes back.

One point to note, however, is that the stream only captures changes from the moment you start listening. It doesn’t contain the full history of the table. So on day one, the destination table is empty, and the change stream only knows about changes happening right now. The pipeline needs a starting point.

That starting point is a snapshot. In this approach, we take a full copy of the table at a specific moment in time, then apply changes from just before that moment forward. Here’s why the timing matters. Say Figma kicks off a snapshot export at 2:00 AM, and the export takes two hours to complete. During those two hours, users are still active. Records are being created, updated, and deleted. The snapshot finishes at 4:00 AM, but it only reflects the state of the table as of 2:00 AM. If the change stream starts capturing events at 4:00 AM, every change between 2:00 and 4:00 AM is lost. The destination table will be missing two hours of data, with no error to flag the gap. To avoid this, Figma ensures the Kafka CDC stream’s start offset precedes the snapshot timestamp. That overlap means some events will be duplicates of what’s already in the snapshot, but duplicates can be handled during the merge step. Missing data cannot.
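Here is a minimal sketch of the overlap rule. It assumes every change event carries a log sequence number (LSN) and that the snapshot records the LSN it is consistent with. Figma's actual deduplication logic isn't described at this level, and the names here are hypothetical. The two pieces that matter are the check that refuses to start when the stream begins after the snapshot point, and the skipping of overlapping events.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    lsn: int             # position in the source's write-ahead log (assumed)
    op: str              # "insert", "update", or "delete"
    key: int             # primary key of the affected row
    row: Optional[dict]  # full new row image; None for deletes


def bootstrap(snapshot_rows, snapshot_lsn, stream_start_lsn, events):
    """Seed a destination table from a snapshot plus an overlapping CDC stream."""
    if stream_start_lsn > snapshot_lsn:
        # Changes between the snapshot point and the stream start would be lost silently.
        raise ValueError("CDC stream starts after the snapshot point: data gap")
    table = dict(snapshot_rows)
    for ev in sorted(events, key=lambda e: e.lsn):
        if ev.lsn <= snapshot_lsn:
            continue  # overlap: already reflected in the snapshot, skip the duplicate
        if ev.op == "delete":
            table.pop(ev.key, None)
        else:
            table[ev.key] = ev.row
    return table


snapshot = {1: {"name": "a"}, 2: {"name": "b"}}  # consistent as of LSN 10
events = [
    ChangeEvent(9, "update", 1, {"name": "a"}),   # before the snapshot point
    ChangeEvent(11, "insert", 3, {"name": "c"}),  # after it
    ChangeEvent(12, "delete", 2, None),
]
print(bootstrap(snapshot, snapshot_lsn=10, stream_start_lsn=8, events=events))
# {1: {'name': 'a'}, 3: {'name': 'c'}}
```

With full row images, replaying an overlapping event would usually be harmless anyway. Skipping by LSN simply makes the duplicate handling explicit.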

Figma also had to decide whether to buy an off-the-shelf solution or build its own setup. They evaluated vendor options seriously and found three problems:

Generic CDC tools couldn’t leverage Amazon RDS-specific APIs, like the ability to export snapshots directly to S3 without maintaining a separate database replica.

Vendor pricing at Figma’s scale came out to five to ten times more than an in-house build.

The tools they evaluated couldn’t reliably handle Figma’s data volume, which was still growing.

Therefore, they assembled their pipeline from lower-level components:

Amazon RDS handles snapshot exports to S3.

Kafka streams the CDC events.

Snowflake stored procedures perform the incremental merge, applying the stream of changes to bring the destination tables up to date.

Merge jobs run on a configurable schedule, defaulting to every three hours.
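The merge step can be sketched as follows, assuming each change event carries an ordering position and a full row image. Upserting a batch generally needs at most one source change per target key, because otherwise the result depends on which duplicate wins. The sketch therefore first collapses the batch to the latest change per key. In Snowflake this would typically be a MERGE over a deduplicated change set. The Python below only models that logic, and its names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    lsn: int             # ordering position from the source log (assumed)
    op: str              # "insert", "update", or "delete"
    key: int             # primary key
    row: Optional[dict]  # full new row image; None for deletes


def merge_batch(table, events):
    """One merge cycle: collapse to the latest change per key, then upsert/delete."""
    latest = {}
    for ev in sorted(events, key=lambda e: e.lsn):
        latest[ev.key] = ev  # a later change to the same key replaces an earlier one
    for key, ev in latest.items():
        if ev.op == "delete":
            table.pop(key, None)
        else:
            table[key] = ev.row
    return table


table = {1: {"plan": "free"}, 2: {"plan": "pro"}}
batch = [  # one merge window's worth of changes, listed out of order
    ChangeEvent(7, "update", 1, {"plan": "org"}),
    ChangeEvent(5, "update", 1, {"plan": "pro"}),
    ChangeEvent(6, "delete", 2, None),
    ChangeEvent(8, "insert", 3, {"plan": "free"}),
]
print(merge_batch(table, batch))
# {1: {'plan': 'org'}, 3: {'plan': 'free'}}
```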

See the diagram below:

That three-hour default is a deliberate design choice, not a limitation. More frequent merges mean fresher data but higher Snowflake compute costs. Figma lets teams override the default where it matters. Their billing pipeline, for example, runs on 30-minute merge cycles. Each team pays only for the freshness they actually need.

Building the pipeline is half the job. The other half is knowing whether it’s actually working correctly.

Data pipelines can fail in multiple ways that don’t produce errors. For example:

A partial failure during a snapshot export

A misconfigured CDC connector

An unexpected data format from the source

These issues don’t crash the pipeline. They just produce wrong data. And wrong data in an analytics warehouse leads to wrong KPIs, wrong business decisions, and a slow erosion of trust in the entire data platform.

Figma’s answer to this scenario is quite rigorous. They built a dedicated validation workflow that clones the live base table, runs the entire bootstrap process independently into a temporary schema, aligns both copies to the same point in time using CDC data, and then compares them cell by cell. This runs weekly for every table in the pipeline. Most teams settle for row-count checks or sampling. Figma treats its analytical warehouse with the same correctness guarantees you’d expect from a production database.
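The comparison step can be sketched as below. It assumes both copies have already been aligned to the same point in time and fit in memory as dictionaries keyed by primary key. This illustrates a cell-by-cell diff, not Figma's validation code; at warehouse scale the same comparison would more plausibly run as a query inside the warehouse.

```python
def diff_tables(expected, actual):
    """Compare two tables keyed by primary key, cell by cell.

    Returns (key, column, expected_value, actual_value) tuples; a row missing
    from one side is reported once with the column name "<row>".
    """
    mismatches = []
    for key in sorted(expected.keys() | actual.keys()):
        exp_row, act_row = expected.get(key), actual.get(key)
        if exp_row is None or act_row is None:
            mismatches.append((key, "<row>", exp_row, act_row))
            continue
        for col in sorted(exp_row.keys() | act_row.keys()):
            if exp_row.get(col) != act_row.get(col):
                mismatches.append((key, col, exp_row.get(col), act_row.get(col)))
    return mismatches


live = {1: {"name": "a", "plan": "pro"}, 2: {"name": "b", "plan": "free"}}
rebuilt = {1: {"name": "a", "plan": "free"}, 3: {"name": "c", "plan": "free"}}
for mismatch in diff_tables(live, rebuilt):
    print(mismatch)
```

A row-count check would pass on this example, because both tables have two rows. The cell-level diff catches the wrong `plan` value and the swapped rows.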

The reason this approach works is independence. If a bug exists somewhere in the main pipeline, say a CDC connector silently drops certain types of update events, any validation that reuses the same pipeline path would inherit the same bug. The corrupted data would match the corrupted check, and everything would look fine. By bootstrapping a completely separate copy through an independent process and comparing the two, Figma guarantees that an error in one path can’t silently pass the other’s checks.

Figma also built a zero-downtime re-bootstrap capability by versioning all bootstrap artifacts except the final user-facing view. When schemas evolve, or a full re-bootstrap is needed, the new version is built in parallel and promoted via an atomic view update. Live queries are never disrupted.

The other piece that holds it all together is automation. Figma structured its automation into two tiers:

First-level automations handle the actual execution. You give them a table name, and they run the bootstrap or validation and alert if something goes wrong.

Second-level automations sit above and decide when to trigger the first tier. A controller workflow checks every few hours for new tables that need onboarding. A dispatcher workflow kicks off validation for each table weekly.

The system is largely self-operating, and developers get involved only when alerts fire.

The payoff came early. One week into testing in their staging environment, the automated re-bootstrap routine caught a severe failure mode. Had it reached production, it would have triggered a site-wide outage lasting at least twenty minutes. The old system, a daily cron job with no automated validation, could never have caught this.

The numbers tell the story quite clearly:

Data freshness went from 30-plus hours to under three hours, with the option to configure it down to minutes.

The pipeline handles tables over ten times larger than the old system could manage, with consistent and predictable performance.

Eliminating the dedicated export replicas produced multimillion-dollar annual savings.

Operations have seen zero major incidents during and after launch.

Beyond raw performance, the rebuild unlocked new capabilities.

For example, a sync-on-demand CLI tool lets developers trigger immediate synchronization outside the regular schedule. CDC data is now exposed to end users, so developers can query the full change history of any entity and not just its current state. During incident response, this means questions like “show me every change to this user’s record in the last 48 hours” get answered in minutes.

However, this project took a significant investment of time, effort, and resources.

The new system is much more complex than a daily cron job. Figma compensated with aggressive automation and validation, but that’s additional complexity layered on top. Ultimately, this tradeoff was worth it at Figma’s scale.


2026-05-11 23:31:17

The context that actually matters isn't in your database. It's in the tools your users live in every day. Multi-stage agents stall the moment they hit a step they can't see. And every missing integration is a different OAuth flow, a different token lifecycle, weeks of plumbing before the agent reads a single record.

WorkOS Pipes connects your agent to the tools your users live in. Pre-built connectors for GitHub, Slack, Salesforce, Google Drive, and more. Pipes handles OAuth, token refresh, and credential storage. You call the real provider API with a fresh token, every time. Your agent pulls context at every step, for as long as the task runs.

Engineers at Pinterest work across a sprawling set of internal systems every day. They query data through Presto, debug batch jobs in Spark, manage workflows in Airflow, search internal documentation, and track bugs in ticketing platforms.

When Pinterest started building AI agents, they wanted those agents to do more than answer questions. They wanted agents that could reach into these systems directly, pulling logs, investigating bug tickets, querying databases, and proposing fixes, all within the surfaces engineers already use.

The challenge came down to simple multiplication. If you have five AI-powered surfaces (an internal chat app, IDE plugins, chatbots, CLI agents, and other autonomous agents) and ten internal tools, you’d need fifty bespoke integrations without a shared protocol. In other words, every new surface or tool multiplies the work.

The Model Context Protocol (MCP) promised to collapse that multiplication into addition. Build one MCP client per surface and one MCP server per tool, and they all speak the same language.

Pinterest adopted MCP as the foundation for this vision. However, implementing the protocol turned out to be the easy part. The real engineering effort went into everything around it, such as a central registry, a two-layer auth system, a unified deployment pipeline, and observability baked in from day one.

In this article, we look at how Pinterest designed that ecosystem and what they had to get right beyond the protocol itself.

Disclaimer: This post is based on publicly shared details from the Pinterest Engineering Team. Please comment if you notice any inaccuracies.

Model Context Protocol (MCP) is an open-source standard that gives large language models a unified way to talk to external tools and data sources.

Instead of writing custom glue code between every AI application and every tool it needs to access, MCP defines a shared client-server protocol. An AI surface acts as the client, an MCP server wraps a tool or data source, and they communicate using a standardized format for discovering tools, invoking them, and returning structured results.
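On the wire, MCP messages are JSON-RPC 2.0. The sketch below builds a `tools/list` and a `tools/call` request and answers them from a toy in-process server. It deliberately omits the `initialize` handshake, the transports (stdio or HTTP), and the error handling that a real client or server SDK provides. The `run_query` tool is hypothetical.

```python
import json
from itertools import count

_next_id = count(1)


def make_request(method, params=None):
    """Serialize a JSON-RPC 2.0 request as an MCP client would send it."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


# One hypothetical tool, described with a JSON Schema for its arguments.
TOOLS = [{
    "name": "run_query",
    "description": "Run a read-only SQL query.",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}},
        "required": ["sql"],
    },
}]


def handle(raw):
    """Toy server: answer tools/list and tools/call, reject other methods."""
    msg = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": msg["id"]}
    if msg["method"] == "tools/list":
        reply["result"] = {"tools": TOOLS}
    elif msg["method"] == "tools/call":
        params = msg["params"]
        if params["name"] != "run_query":
            reply["error"] = {"code": -32602, "message": f"Unknown tool: {params['name']}"}
        else:
            sql = params["arguments"]["sql"]
            reply["result"] = {
                "content": [{"type": "text", "text": f"would run: {sql}"}],
                "isError": False,
            }
    else:
        reply["error"] = {"code": -32601, "message": "Method not found"}
    return json.dumps(reply)


listing = json.loads(handle(make_request("tools/list")))
print([tool["name"] for tool in listing["result"]["tools"]])  # ['run_query']

call = make_request("tools/call", {"name": "run_query", "arguments": {"sql": "SELECT 1"}})
print(json.loads(handle(call))["result"]["content"][0]["text"])  # would run: SELECT 1
```

Every tool description returned by `tools/list` ends up in the model's context, which matters for the server-granularity decision discussed below.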

Before MCP, connecting AI surfaces to internal tools was an N x M problem. Five surfaces times ten tools equals fifty custom integrations to build and maintain. MCP turns that into an N+M problem. You build five clients and ten servers, and any client can talk to any server. That is fifteen pieces of work instead of fifty, and the gap widens as you add more surfaces or tools.

But MCP only defines the communication protocol. It does not handle authentication, authorization, deployment, service discovery, or governance.

Those are the problems Pinterest had to solve on its own. In other words, the MCP spec provides the grammar, and Pinterest had to build the entire school system around it.

When Pinterest decided to adopt MCP, three early decisions shaped the entire ecosystem. Each involved a genuine tradeoff, and understanding those tradeoffs helps us make sense of why the architecture looks the way it does.

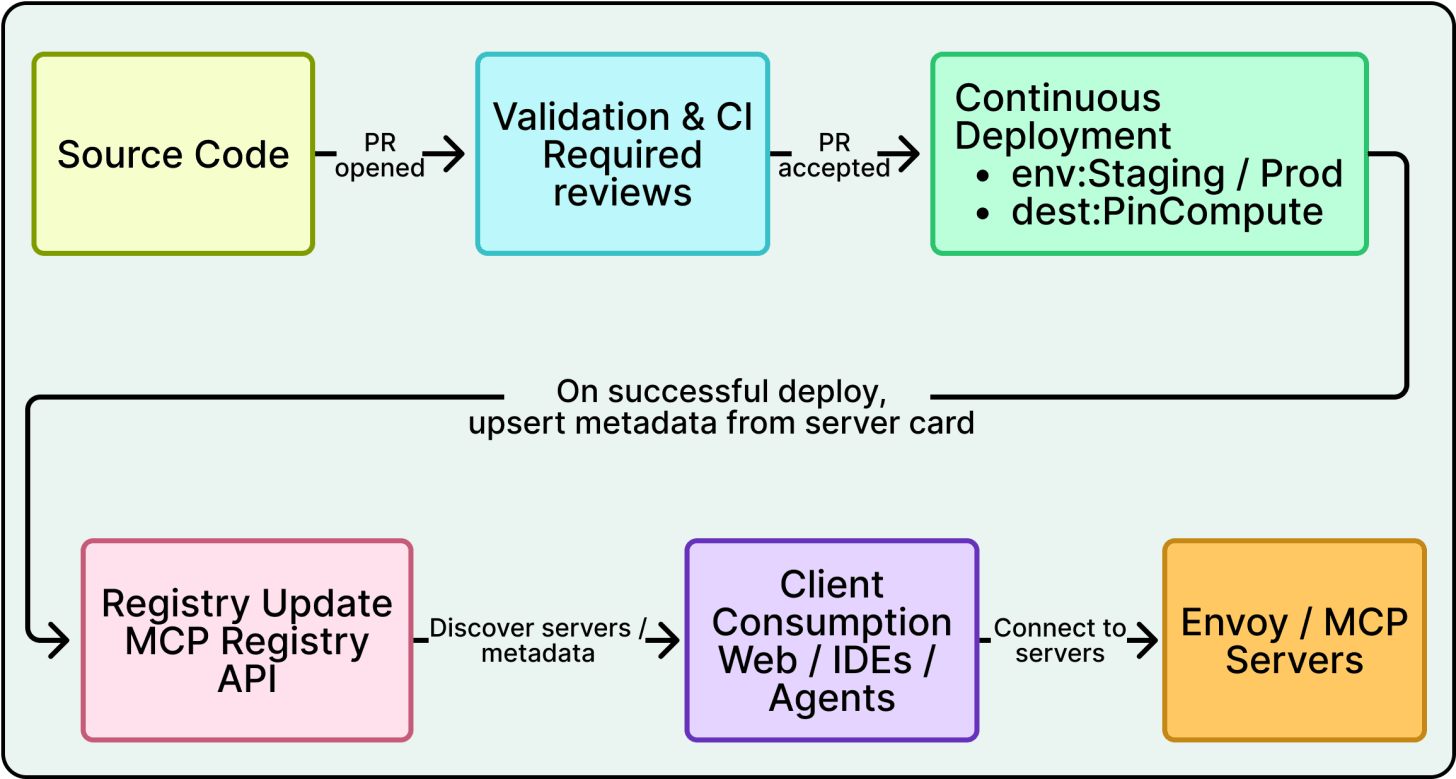

See the diagram below that shows the overall architecture:

MCP supports local servers that run on a developer’s laptop and communicate over standard input/output. Many individual developers use MCP this way with tools like Claude or Cursor.

Pinterest went the opposite direction.

They explicitly optimized for internal cloud-hosted MCP servers, where their routing and security infrastructure could be applied consistently. Local servers are still allowed for experimentation, but the so-called paved path at Pinterest is to write a server, deploy it to their cloud compute environment, and register it in their central catalog. Every tool call becomes a network request, which adds latency compared to a local server.

However, centralizing servers in the cloud meant that Pinterest could apply consistent authentication, authorization, logging, and monitoring across every server without relying on individual developers to configure those things correctly on their own machines.

Pinterest debated building a single monolithic MCP server that exposed every tool versus building multiple domain-specific servers. They chose the latter.

For example, the Presto MCP server handles data queries. The Spark MCP server handles job debugging. The Knowledge MCP server handles documentation and institutional Q&A. Each server owns a small, coherent set of tools.

Two forces drove this decision.

First, different servers need different access controls. The Presto server touches sensitive business data and requires strict group-based gating. A documentation server is lower risk and can be more broadly accessible. Bundling them into one server would force a single access policy across tools with very different sensitivity levels.

Second, every tool description consumes tokens in the AI model’s context window, which is the limited amount of text the model can process in a single interaction. A monolithic server with fifty tools would stuff the model’s prompt with tool descriptions it does not need for the current task, crowding out space for the actual conversation.

Domain-specific servers keep the tool list small and relevant. This context-window constraint is specific to AI systems; in a traditional microservices setup, you would not worry about your service catalog consuming tokens.

The tradeoff here is more operational overhead per server, since each one needs deployment, monitoring, and ownership. This cost led directly to the third bet.

Early feedback from teams was clear. Spinning up a new MCP server required too much boilerplate, including deployment pipelines, service configuration, and operational setup, all before anyone could write a single line of business logic.

The Pinterest engineering team responded by building a unified deployment pipeline. Teams define their tools, and the platform handles deployment, scaling, and infrastructure. This turned what had been a multi-day setup process into something where domain experts could focus entirely on their business logic. Without this investment, the bet around many small servers would have collapsed under its own operational weight.

Sitting beneath all of this is the MCP registry, a central catalog that serves as the source of truth for which servers exist, who owns them, and how to connect to them. It has two faces.

A web UI lets humans browse available servers, see their live status, find the owning team and support channels, and inspect visible tools.

An API lets AI clients programmatically discover servers, validate them, and check whether a given user is authorized to access a given server.

Only servers registered here count as approved for production use. In other words, the registry is not just a phone book, but the governance backbone of the entire ecosystem.
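The following is a minimal, in-memory sketch of the registry's API role. The `ServerEntry` fields, the `Registry` class, its `discover`/`is_authorized` methods, and the example URLs are hypothetical names chosen for illustration, not Pinterest's actual API. The sketch only illustrates two ideas: unregistered or unapproved servers are invisible to clients, and authorization questions are answered in one central place.

```python
from dataclasses import dataclass, field


@dataclass
class ServerEntry:
    """One registered MCP server (all fields are illustrative)."""
    name: str
    owner_team: str
    url: str
    allowed_groups: set = field(default_factory=set)  # empty set = open to all
    approved_for_production: bool = False


class Registry:
    """Hypothetical in-memory stand-in for a central MCP registry."""

    def __init__(self) -> None:
        self._servers = {}

    def register(self, entry: ServerEntry) -> None:
        self._servers[entry.name] = entry

    def discover(self) -> list:
        """Servers a client may use in production: registered and approved."""
        return [s for s in self._servers.values() if s.approved_for_production]

    def is_authorized(self, server_name: str, user_groups: set) -> bool:
        """Deny unknown or unapproved servers; otherwise check group overlap."""
        entry = self._servers.get(server_name)
        if entry is None or not entry.approved_for_production:
            return False
        return not entry.allowed_groups or bool(entry.allowed_groups & user_groups)


registry = Registry()
registry.register(ServerEntry("presto", "data-platform", "https://presto-mcp.example.internal",
                              {"ads", "finance"}, approved_for_production=True))
registry.register(ServerEntry("knowledge", "docs", "https://knowledge-mcp.example.internal",
                              approved_for_production=True))
registry.register(ServerEntry("scratch", "alice", "https://scratch-mcp.dev.example.internal"))

print([s.name for s in registry.discover()])  # the unapproved "scratch" server is hidden
```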

Giving AI agents access to tools that touch real production systems and sensitive data raises immediate security concerns.

Pinterest treated MCP as a joint project with their security team from day one, and the result is a two-layer authorization model that deserves careful attention.

See the diagram below:

Consider what happens when an engineer opens Pinterest’s internal AI chat and asks the agent to query revenue data from the data warehouse. That single request crosses multiple systems:

The chat frontend talks to the MCP registry to find available servers.

The request gets routed to the Presto MCP server, which runs a query against a real database containing business-sensitive information.

At every hop, the system needs to answer two questions. Who is this person? And are they allowed to do this specific thing?

Layer 1 handles coarse-grained checks at the network edge.

When an engineer opens any AI surface at Pinterest, they go through an OAuth flow, which is the standard process for logging in with a company account and granting the application permission to act on the user’s behalf. This produces a JWT (JSON Web Token), a small signed token that encodes the user’s identity and group memberships. That JWT travels with every subsequent request.

Before a request reaches any MCP server, it passes through Envoy, a network proxy that sits in front of every service in Pinterest’s infrastructure. Envoy validates the JWT by checking the signature and expiration, then converts it into standard headers like X-Forwarded-User and X-Forwarded-Groups.
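The following plain-Python sketch illustrates what "check the signature and expiration, then convert to headers" means. It is not Envoy's implementation or configuration (Envoy provides a JWT authentication filter for this job). To run with only the standard library, the sketch assumes an HS256 shared secret. It also assumes the identity lives in the `sub` claim and group memberships in a `groups` claim. Production setups more commonly verify asymmetric signatures against published keys.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url; restore padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def make_token(claims: dict, secret: bytes) -> str:
    """Demo helper: sign `claims` as an HS256 JWT."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(sig)}"


def jwt_to_headers(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Check signature and expiry, then map claims to identity headers.

    Raises ValueError for malformed, tampered, or expired tokens.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, sig_b64 = parts
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")  # refuse 'none' and alg swaps
    expected = hmac.new(
        secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256
    ).digest()
    if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(payload_b64))
    if claims.get("exp", 0) <= (time.time() if now is None else now):
        raise ValueError("token expired")
    return {
        "X-Forwarded-User": claims["sub"],
        "X-Forwarded-Groups": ",".join(claims.get("groups", [])),
    }


SECRET = b"demo-secret"  # hypothetical; production setups typically use asymmetric keys
demo_jwt = make_token({"sub": "alice", "groups": ["ads", "eng"], "exp": time.time() + 3600}, SECRET)
print(jwt_to_headers(demo_jwt, SECRET))
# {'X-Forwarded-User': 'alice', 'X-Forwarded-Groups': 'ads,eng'}
```

Downstream servers then only need to trust the headers. This is safe only if every request is forced through the proxy.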

Envoy also enforces coarse-grained access policies. These are broad rules like “the production AI chat application may talk to the Presto MCP server, but experimental servers running in the dev namespace are off-limits.” If the request violates these rules, it gets rejected before the MCP server ever sees it. Think of Envoy as the building security desk. It checks your badge and makes sure you are supposed to be in the building at all.

Layer 2 handles fine-grained checks inside each server.

Even if Envoy lets a request through, the MCP server applies a second layer of authorization at the individual tool level. Pinterest uses a decorator pattern on tool functions (@authorize_tool(policy='...')) that checks whether the specific user is allowed to invoke that specific tool.

For example, the Presto MCP server might be reachable by many teams, but only the Ads engineering group can call a tool like get_revenue_metrics. This is like the difference between being allowed into the building and being allowed into a specific room.
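The source does not describe the decorator's internals. The following hypothetical Python sketch shows one way an `@authorize_tool(policy=...)` decorator could work. The `POLICIES` table, the group names, the `current_groups` context variable, and `PermissionDenied` are all assumptions. The sketch also assumes the caller's groups were already parsed from the `X-Forwarded-Groups` header set at Layer 1.

```python
import contextvars
import functools

# Hypothetical policy table: policy name -> groups allowed to use the tool.
POLICIES = {
    "ads_only": {"ads-eng"},
    "any_employee": {"employees"},
}

# Groups of the current caller, e.g. parsed from the X-Forwarded-Groups
# header that the proxy set after validating the JWT.
current_groups = contextvars.ContextVar("current_groups", default=frozenset())


class PermissionDenied(Exception):
    pass


def authorize_tool(policy: str):
    """Allow the call only if the caller is in one of the policy's groups."""
    allowed = POLICIES[policy]  # unknown policy names fail at import time

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            if not allowed & current_groups.get():
                raise PermissionDenied(f"{fn.__name__} requires policy {policy!r}")
            return fn(*args, **kwargs)
        return wrapper
    return decorator


@authorize_tool(policy="ads_only")
def get_revenue_metrics(quarter: str) -> dict:
    return {"quarter": quarter, "revenue": None}  # placeholder body


reset_token = current_groups.set(frozenset({"ads-eng", "employees"}))
print(get_revenue_metrics("Q1"))  # allowed: caller is in ads-eng
current_groups.reset(reset_token)
```

With this design, the policy lives next to the tool it protects, so a reviewer can see a tool's access rule without consulting proxy configuration.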

For servers that handle particularly sensitive data, Pinterest adds business-group gating. The server extracts the user’s business group membership from their JWT and checks it against an approved list before even establishing a session. This list of approved groups is set during the initial security review when the server is first registered.

For example, even though the Presto MCP server is technically reachable from Pinterest’s broadly used AI chat interface, only specific groups like Ads, Finance, or certain infrastructure teams can actually connect and run queries. This means that turning on a powerful, data-heavy server in a popular surface does not silently expand who can see sensitive data.
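Business-group gating differs from the per-tool check in where it runs: it applies before a session exists at all. The following is a minimal sketch. It assumes the approved list is a static set recorded at security review and that the JWT carries a hypothetical `business_group` claim.

```python
# Hypothetical approved list captured during the server's security review.
APPROVED_BUSINESS_GROUPS = frozenset({"Ads", "Finance", "Infra"})


class SessionRejected(Exception):
    pass


def open_session(claims: dict) -> dict:
    """Refuse to create a session unless the caller's business group is approved.

    `claims` are the already-validated JWT claims; the claim name
    'business_group' is an assumption for this sketch.
    """
    group = claims.get("business_group")
    if group not in APPROVED_BUSINESS_GROUPS:
        raise SessionRejected(f"business group {group!r} not approved")
    return {"user": claims["sub"], "business_group": group}


print(open_session({"sub": "alice", "business_group": "Ads"}))
```

Because the check keys on the user rather than on the surface, exposing the server in a new, popular AI surface does not change who can open a session.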

Why two layers instead of one?

Envoy’s policies are fast, network-level checks that block obviously unauthorized traffic before it reaches any application code. The tool-level decorators handle nuanced, business-logic-specific permissions that a network proxy is not equipped to reason about. Together, they provide defense in depth. Even if one layer has a misconfiguration, the other still catches unauthorized access.

The official MCP specification defines an OAuth 2.0 authorization flow where users authenticate with each MCP server individually, typically involving consent screens and per-server token management. Pinterest skipped this entirely. Since they control the entire internal environment, they piggyback on the auth session the user already has when they open an AI surface. There is no additional login prompt or consent dialog when a user invokes an MCP tool.

This is simpler for end users, but only works because Pinterest owns every piece of the stack. A company relying on third-party MCP servers would likely need the per-server OAuth approach described in the spec.

Lastly, for automated service-to-service calls where there is no human in the loop, Pinterest uses SPIFFE-based authentication. In this pattern, the calling service proves its identity through a cryptographic certificate issued by the service mesh rather than presenting a human’s JWT. Pinterest reserves this for low-risk, read-only scenarios where the blast radius is tightly constrained.
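The following is a rough sketch of the policy side of service-to-service auth. It assumes the service mesh has already verified the caller's mTLS certificate and extracted its SPIFFE ID, a URI of the form `spiffe://<trust-domain>/<path>`. The trust domain, workload paths, and tool names are hypothetical. The check permits only allowlisted, read-only tools, reflecting the tightly constrained blast radius described above.

```python
from urllib.parse import urlparse

TRUST_DOMAIN = "example.internal"  # hypothetical trust domain

# Hypothetical allowlist: which workloads may call which tools.
SERVICE_ALLOWLIST = {
    "/ns/batch/sa/report-builder": {"search_docs", "get_job_status"},
}
READ_ONLY_TOOLS = {"search_docs", "get_job_status"}


def authorize_service_call(spiffe_id: str, tool: str) -> bool:
    """Authorize a machine caller by its SPIFFE ID.

    Assumes certificate verification already happened in the mesh;
    this function only applies policy to the extracted identity.
    """
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or parsed.netloc != TRUST_DOMAIN:
        return False
    allowed = SERVICE_ALLOWLIST.get(parsed.path, set())
    return tool in allowed and tool in READ_ONLY_TOOLS


print(authorize_service_call("spiffe://example.internal/ns/batch/sa/report-builder", "search_docs"))
```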

Pinterest was deliberate about one thing. MCP could not be a science project that lived in its own separate interface. It had to show up in the tools that engineers already use every day.

The diagram below shows how the MCP integration has been done across various surfaces at Pinterest.

Pinterest’s internal AI chat interface is used by the majority of employees daily. The frontend automatically handles OAuth flows and returns a list of usable MCP tools scoped to the current user’s permissions. Once connected, the AI agent binds MCP tools directly into its toolset, so invoking an MCP tool feels identical to calling any other built-in capability. From the user’s perspective, they are just asking the AI to do something, and the MCP plumbing is invisible.

Pinterest also embeds AI bots in its internal communication platform, and these bots expose MCP tools as well. Auth is handled through the registry API, just like the web interface. These bots support context-aware tool scoping, meaning certain MCP tools are restricted to certain channels. Spark MCP tools, for example, only appear in Airflow support channels. This keeps tool lists relevant to the conversation and prevents users from accidentally invoking tools that do not make sense in a given context.
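Channel-based scoping amounts to a filter applied after the permission check. The following is a minimal sketch, with hypothetical tool and channel names. Tools that are not listed in the scope map are treated as available in every channel.

```python
# Hypothetical mapping from tool name to the channels where it may appear.
# Tools absent from the map are available everywhere.
TOOL_CHANNEL_SCOPE = {
    "spark_get_job_logs": {"#airflow-support"},
    "spark_diagnose_failure": {"#airflow-support"},
}


def tools_for_channel(user_tools: list, channel: str) -> list:
    """Narrow the tools a user is already permitted to use down to this channel."""
    return [
        t for t in user_tools
        if t not in TOOL_CHANNEL_SCOPE or channel in TOOL_CHANNEL_SCOPE[t]
    ]


permitted = ["search_docs", "spark_get_job_logs", "spark_diagnose_failure"]
print(tools_for_channel(permitted, "#random"))  # only search_docs survives
```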

AI-enabled IDEs can pull data through the Presto MCP server on demand, so agents bring data directly into coding workflows instead of requiring engineers to switch to a separate dashboard. CLI agents provide similar capabilities for terminal-based workflows.

The servers that see the heaviest usage reflect the most common engineering pain points.

The Presto MCP server is consistently the highest-traffic server because data access is a universal need across teams.

The Spark MCP server underpins Pinterest’s AI-assisted debugging experience, where agents diagnose job failures, summarize logs, and help record structured root-cause analyses, turning noisy operational threads into reusable knowledge.

The Knowledge MCP server acts as a general-purpose endpoint for institutional knowledge, giving agents the ability to search documentation and answer questions across internal sources.

Since MCP servers enable automated actions, the blast radius of a mistake is larger than if a human manually performed the same steps.

Pinterest’s agent guidance mandates human-in-the-loop approval before any sensitive or expensive action. Agents propose actions, and humans approve or reject them (optionally in batches) before execution. Pinterest also uses elicitation for dangerous operations, where the AI explicitly asks the user to confirm before doing something like overwriting data in a table. This is a governance decision.
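The following hypothetical Python sketch shows the propose-then-approve flow with batched approval. `ProposedAction`, `execute_with_approval`, and the `approve` callback are illustrative names. In a real system, the callback would be a UI prompt or an elicitation request rather than a function that returns booleans.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ProposedAction:
    description: str
    run: Callable[[], str]
    sensitive: bool = True  # "sensitive or expensive" in the guidance above


def execute_with_approval(
    proposals: List[ProposedAction],
    approve: Callable[[List[ProposedAction]], List[bool]],
) -> List[str]:
    """Run safe actions directly; send sensitive ones to a human as one batch.

    `approve` returns one verdict per pending action. Rejected actions are
    skipped and never executed.
    """
    pending = [p for p in proposals if p.sensitive]
    verdicts = approve(pending) if pending else []
    if len(verdicts) != len(pending):
        raise ValueError("approver must return one verdict per pending action")
    approved = {id(p) for p, ok in zip(pending, verdicts) if ok}
    results = []
    for p in proposals:
        if not p.sensitive or id(p) in approved:
            results.append(p.run())
        else:
            results.append(f"skipped: {p.description}")
    return results


actions = [
    ProposedAction("read table stats", lambda: "stats", sensitive=False),
    ProposedAction("overwrite table ads.daily", lambda: "overwritten"),
    ProposedAction("drop table tmp.x", lambda: "dropped"),
]
print(execute_with_approval(actions, lambda batch: [True, False]))
```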

Pinterest built observability into the MCP ecosystem from the start rather than treating it as an afterthought. All MCP servers use a set of shared library functions that provide logging for inputs and outputs, invocation counts, exception tracing, and other telemetry out of the box. This is part of the server framework itself, so teams get observability for free when they use the unified deployment pipeline.
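The following is a minimal sketch of what such a shared wrapper might provide. It uses only Python's standard `logging` module and an in-process counter. The decorator name `instrumented` and the example tool are hypothetical. A real framework would presumably export these signals to a metrics and tracing backend instead of keeping them in memory.

```python
import functools
import logging
import time
from collections import Counter

logger = logging.getLogger("mcp.telemetry")
invocation_counts = Counter()


def instrumented(fn):
    """Log inputs/outputs, count invocations, and trace exceptions for a tool."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        invocation_counts[fn.__name__] += 1
        start = time.perf_counter()
        logger.info("call %s args=%r kwargs=%r", fn.__name__, args, kwargs)
        try:
            result = fn(*args, **kwargs)
        except Exception:
            logger.exception("tool %s failed", fn.__name__)  # logs the traceback
            raise
        elapsed_ms = (time.perf_counter() - start) * 1000
        logger.info("done %s result=%r in %.1f ms", fn.__name__, result, elapsed_ms)
        return result
    return wrapper


@instrumented
def search_docs(query: str) -> list:
    if not query:
        raise ValueError("empty query")
    return [f"doc about {query}"]


logging.basicConfig(level=logging.INFO)
search_docs("spark")
```

Because tool authors only add a decorator, they get consistent telemetry without writing any logging code themselves.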

At the ecosystem level, Pinterest tracks the number of registered servers and tools, invocation counts across all servers, and a north-star metric that rolls everything up into a single number. That number is the time saved. For each tool, server owners provide a “minutes saved per invocation” estimate, based on lightweight user feedback and comparison to the prior manual workflow. Multiplied by invocation counts, this gives an order-of-magnitude view of impact.

As of January 2025, MCP servers at Pinterest were handling 66,000 invocations per month across 844 monthly active users. Using the owner-provided estimates, MCP tools were saving roughly 7,000 hours per month.
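The north-star metric is simple arithmetic. For each tool, multiply invocations by the owner's minutes-saved estimate, sum the results, and convert to hours. The per-tool figures below are invented for illustration. The last lines only rework the reported totals, which imply an average of about 6.4 minutes saved per invocation.

```python
# Hypothetical per-tool numbers: (monthly invocations, owner's estimate of
# minutes saved per invocation). These are not Pinterest's data.
tool_stats = {
    "tool_a": (1_000, 5.0),
    "tool_b": (200, 30.0),
}


def hours_saved(stats: dict) -> float:
    """Sum of invocations x minutes saved per invocation, converted to hours."""
    return sum(calls * minutes for calls, minutes in stats.values()) / 60


print(f"{hours_saved(tool_stats):.1f} hours/month")  # 183.3

# Working backwards from the reported totals (7,000 hours, 66,000 calls):
implied_minutes = 7_000 * 60 / 66_000
print(f"~{implied_minutes:.1f} minutes saved per invocation on average")  # ~6.4
```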

Pinterest’s MCP ecosystem offers a clear blueprint for organizations building AI agents that need to act on internal systems. The pattern they established, a standard protocol, a central registry, layered auth, a unified deployment pipeline, and built-in observability, is transferable well beyond Pinterest’s specific context.

The most important lesson is where the effort actually went. The MCP protocol gave Pinterest a shared language between AI surfaces and tools. That was necessary but far from sufficient. The registry, auth layers, deployment pipeline, and measurement framework are what turned a promising protocol into a production system handling tens of thousands of invocations per month.

To conclude, Pinterest’s approach suggests a practical starting point: seed a small set of high-leverage MCP servers that solve real pain points, then invest in the platform work, especially the deployment pipeline, that makes it easy for other teams to build on top. Pinterest’s unified pipeline was the unlock that turned a platform-team project into an org-wide ecosystem.


2026-05-09 23:31:10

If slow QA processes bottleneck you or your software engineering team and you’re releasing slower because of it — you need to check out QA Wolf.

QA Wolf’s AI-native service supports web and mobile apps, delivering 80% automated test coverage in weeks and helping teams ship 5x faster by reducing QA cycles to minutes.

QA Wolf takes testing off your plate. They can get you:

Unlimited parallel test runs for mobile and web apps

24-hour maintenance and on-demand test creation

Human-verified bug reports sent directly to your team

Zero flakes guarantee

The benefit? No more manual E2E testing. No more slow QA cycles. No more bugs reaching production.

With QA Wolf, Drata’s team of 80+ engineers achieved 4x more test cases and 86% faster QA cycles.

This week’s system design refresher:

Claude Code vs. OpenClaw: 5 Design Dimensions

Become an AI Engineer | Enrollment Ends Soon

How AI Fakes a Human in 5 Steps

How do you know if your AI app actually works?

Why Does Git Revert Cause Conflicts?

Claude Code terminates after every task. OpenClaw never sleeps. Both are highly capable, but they have key architectural differences.

System Scope

Claude Code is a short-lived process. You launch it, it runs, it exits. OpenClaw is a long-running background daemon with a Gateway that holds open WebSocket connections to apps like Discord, Slack, and WhatsApp.

Agent Runtime

Claude Code uses a single async query loop: think, tool call, observe, repeat. OpenClaw uses per-session queues, where the Gateway routes RPCs into separate queues.
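The think, tool call, observe cycle can be sketched as a small async loop. This is a generic illustration, not Claude Code's source. The `fake_model` function stands in for an LLM: it requests one file read and then answers. The tool registry is a plain dict.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

# A model step is either ("tool", name, arg) or ("final", answer).
Step = tuple


async def agent_loop(
    model: Callable[[List[str]], Awaitable[Step]],
    tools: Dict[str, Callable[[str], Awaitable[str]]],
    task: str,
    max_turns: int = 10,
) -> str:
    """Think -> tool call -> observe -> repeat, until the model answers."""
    transcript = [f"user: {task}"]
    for _ in range(max_turns):
        step = await model(transcript)  # think
        if step[0] == "final":
            return step[1]
        _, name, arg = step
        observation = await tools[name](arg)  # tool call
        transcript.append(f"tool {name}({arg!r}) -> {observation}")  # observe
    raise RuntimeError("turn limit reached without a final answer")


async def fake_model(transcript: List[str]) -> Step:
    # Scripted stand-in for an LLM: read one file, then summarize it.
    if len(transcript) == 1:
        return ("tool", "read_file", "README.md")
    return ("final", "Summary: " + transcript[-1].split("-> ")[1])


async def read_file(path: str) -> str:
    return f"contents of {path}"


print(asyncio.run(agent_loop(fake_model, {"read_file": read_file}, "summarize the repo")))
# Summary: contents of README.md
```

When the loop returns, the process has nothing left to do. This fits the short-lived process model. A daemon like OpenClaw instead keeps accepting new work from its queues.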

Extension Architecture

Claude Code supports MCP servers, plugins, skills, and hooks, all wired directly into the agent. OpenClaw uses a manifest-first plugin system, where plugins flow through a central registry before reaching the agent.

Memory

Claude Code treats CLAUDE.md as memory. OpenClaw separates MEMORY.md from daily notes and adds hybrid vector/keyword search across structured sections.
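Hybrid vector/keyword search typically blends a semantic similarity score with a lexical match score. The sketch below uses a simple weighted sum with made-up three-dimensional embeddings and a term-overlap keyword score. OpenClaw's actual scoring is not described here; it might use BM25, reciprocal rank fusion, or another method. Treat this sketch as one possible design.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms that appear verbatim in the text."""
    q = set(query.lower().split())
    return len(q & set(text.lower().split())) / len(q) if q else 0.0


def hybrid_search(query, query_vec, sections, alpha=0.5, k=2):
    """Rank sections by alpha * vector score + (1 - alpha) * keyword score.

    `sections` holds (text, embedding) pairs; embeddings are assumed to be
    precomputed by some embedding model (not shown).
    """
    scored = [
        (alpha * cosine(query_vec, vec) + (1 - alpha) * keyword_score(query, text), text)
        for text, vec in sections
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


sections = [
    ("deploy steps for the gateway", [0.9, 0.1, 0.0]),
    ("user prefers dark mode", [0.0, 0.2, 0.9]),
    ("gateway websocket reconnect notes", [0.8, 0.3, 0.1]),
]
print(hybrid_search("gateway reconnect", [0.85, 0.25, 0.05], sections))
```

The keyword term rescues exact identifiers that embeddings tend to blur. The vector term catches paraphrases that share no words with the query.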

Multi-agent & Routing

Claude Code uses a lead-to-subagent pattern. OpenClaw uses a route-and-delegate system where inbound channels get routed to dedicated agents that hand off to shared subagents.

Over to you: which pattern do you think is the future of agents?

Our 6th cohort of Becoming an AI Engineer starts in about a week. This is a live, cohort-based course created in collaboration with best-selling author Ali Aminian and published by ByteByteGo.

Here’s what makes this cohort special:

Learn by doing: Build real-world AI applications instead of just watching videos.

Structured, systematic learning path: Follow a carefully designed curriculum that takes you step by step, from fundamentals to advanced topics.

Live feedback and mentorship: Get direct feedback from instructors and peers.

Community driven: Learning alone is hard. Learning with a community is easy!

We are focused on skill building, not just theory or passive learning. Our goal is for every participant to walk away with a strong foundation for building AI systems.

If you want to start learning AI from scratch, this is the perfect platform for you to begin.

One selfie in, one fake video out. Here's how deepfakes work at a high level.

The diagram below shows the full pipeline that turns a reference image (such as a selfie), a voice clip, and a prompt into a fake video.

Step 1: Prompt Refinement. The text prompt gets cleaned, augmented with extra detail, and paired with a negative prompt to suppress unwanted artifacts like distorted hands.

Step 2: Reference Image Prep. A single selfie of the target is passed through a VAE encoder, a neural network that compresses images into a compact latent representation.

Step 3: Diffusion Inference Engine. The engine starts from pure noise and runs a diffusion-based denoiser, conditioned on the refined prompt, the reference latent, and the audio, to produce clean video latents. A VAE decoder then converts those latents back into video frames.

Step 4: Post-Processing. The raw frames are upscaled to higher resolution, color-corrected for consistency, screened by an NSFW classifier, and stamped with a watermark.

Step 5: Multimodal Syncer. Audio is converted to phonemes (the distinct sound units of speech). A lip-sync model aligns mouth movements to those phonemes.

The output is a video of a CEO who never said those words, in a room they never entered.

Over to you: What do you look for to figure out if a video's real or made by AI?

How do you know if your AI app actually works? You evaluate it. But most teams skip this step (or do it wrong) because "eval" feels vague. It's not.

Every good eval is a 3-step recipe.

Step 1: Pick a task. AI systems have different capabilities and dimensions to evaluate. For LLMs, that might be safety or math ability; for RAG systems, it might be grounding and retrieval quality. Pick one.

Step 2: Collect eval data. For every task, gather inputs paired with the right answer or expected behavior. A safety set pairs risky prompts with "refuse."

Step 3: Develop a grader. How do you decide if the output is good?

Use code-based graders (if/else checks, unit tests) for tasks with a clear correct answer, such as whether a generated patch passes its unit tests.

Use model-based graders (LLM-as-judge) for subjective tasks like safety.

Use human graders for edge cases and anything where nuance matters more than throughput.

Most production evals combine all three. Code-based for what's cheap to check. Model-based for scale. Human-based for what matters most.
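The three-step recipe maps onto a very small harness. This Python sketch uses a hypothetical math eval set (step 2) and a code-based exact-match grader (step 3). A `toy_model` function stands in for a real model call. A model-based or human grader could be swapped in as any function with the same `(output, expected) -> bool` shape.

```python
from typing import Callable, List, Tuple

# Step 2 (hypothetical data): inputs paired with the expected answer.
EVAL_SET: List[Tuple[str, str]] = [
    ("What is 12 * 7?", "84"),
    ("What is 2 ** 10?", "1024"),
    ("What is 15 + 27?", "42"),
]


def exact_match_grader(output: str, expected: str) -> bool:
    """Step 3, code-based: pass only if the trimmed output equals the answer."""
    return output.strip() == expected


def run_eval(model: Callable[[str], str], dataset, grader) -> float:
    """Return the fraction of dataset items whose output passes the grader."""
    passed = sum(grader(model(prompt), expected) for prompt, expected in dataset)
    return passed / len(dataset)


def toy_model(prompt: str) -> str:
    # Stand-in for an LLM call; deliberately wrong on the last item.
    return {"What is 12 * 7?": "84", "What is 2 ** 10?": "1024"}.get(prompt, "41")


print(f"pass rate: {run_eval(toy_model, EVAL_SET, exact_match_grader):.2f}")  # 0.67
```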

Over to you: what's the hardest thing about your task to grade, and which grader type do you use for it?

git revert looks straightforward until it throws a conflict. Here's why that happens.

What git revert actually does: Unlike reset, a revert doesn’t rewrite history. Instead, it creates a new commit that undoes the changes from an earlier one. This keeps your history clean, traceable, and safe for shared branches.

Why revert conflicts happen: Conflicts appear when a later commit changed the same lines as the commit you're trying to undo.

Example in the diagram:

Commit C2 added a feature

Commit C3 changed those same lines

Reverting C2 now collides with changes from C3

Git can’t know which version is correct, so a revert conflict is triggered.

How to resolve it:

1. Run git revert C2

2. Git pauses when it hits the conflict

3. You manually fix the file

4. Stage it (git add <file>)

5. Continue the revert (git revert --continue)

Git then creates a new commit that cleanly undoes C2 while keeping C3 intact.

Over to you: Have you ever hit a revert conflict at the worst possible moment? How did you resolve it?

2026-05-08 23:31:40


2026-05-07 23:31:08

For most of their history, containers have been treated mainly as a deployment concern: package your code with its dependencies, ship it as one unit, and run it the same way everywhere.

That story was true, and it was also pretty useful, but it’s just one half of what containers were good for. The other half is what happens when we stop thinking of a container as a way to deliver one application and start thinking of it as a building block we can compose with others.

Software engineering has been here before. In the 1990s, object-oriented programming gave application code a clean boundary we could compose against. Out of that boundary came design patterns, the small library of standard solutions every working programmer eventually internalizes. With containers, distributed systems have gone through the same transition.

In this article, we’ll walk through the patterns that have crystallized over the past decade, organized by the scope of their coordination. Three of them describe how containers cooperate when they share a single machine. The other three describe how containers coordinate when the work spans many machines. None of these patterns is a rule. They’re answers to problems that distributed-systems engineers kept solving over and over.