2026-05-08 07:17:11

Applications vary wildly in what they demand from a system, making it difficult for a single benchmark to provide a broadly representative score. Benchmark suites try to address this by running a set of workloads that hopefully capture a range of typical application behavior. SPEC CPU2017 is an industry standard benchmark suite, part of a SPEC CPU lineage that dates back to 1989. Geekbench is another suite with a more recent history, going back to around 2010 if I remember correctly. Unlike SPEC CPU2017, Geekbench has a strong consumer focus. It’s distributed in binary form like most consumer applications, rather than source code form like SPEC CPU2017. Geekbench’s test harness and test runtimes also prioritize accessibility and ease of use.

Those differences make Geekbench 6 an interesting suite to evaluate alongside SPEC CPU2017. I looked at SPEC CPU2017 on Chips and Cheese several years ago. Now, I have a license for Geekbench 6 courtesy of Primate Labs’s founder, John Poole. It’s time to dig into the challenges Geekbench 6 workloads present for modern CPUs.

Because it’s distributed in binary form, Geekbench 6 can easily target ISA-specific features. Here, I’m running various workloads through Intel’s Software Development Emulator, which provides exact instruction counts and can emulate different ISA extensions without regard to what hardware it’s running on. With Intel’s Granite Rapids as an ISA target, AVX-512 plays a prominent role in Background Blur, Object Detection, and Structure from Motion. Granite Rapids represents Intel’s latest server platform, and comes with support for AMX for matrix multiplication acceleration. AMX shows up in Object Detection and Photo Library, where it accounts for 0.2% and 0.02% of executed instructions, respectively.

Even though AMX accounts for a small minority of executed instructions, it has an outsized impact. Ice Lake X represents an AVX-512 capable ISA target without AMX. Comparing results from Ice Lake X and Granite Rapids targets shows that AMX can dramatically drop the number of AVX2 and AVX-512 instructions required to do the same work. Other tests show little to no difference between those two ISA targets, as expected.

AVX(2) has a huge presence across Geekbench 6 workloads, to the point that it’s easier to name workloads that aren’t heavily vectorized than ones that are. Text Processing, File Compression, Clang, and PDF Renderer don’t have much vectorization. Everything else uses plenty of 128-bit or 256-bit vectors.

Moving to Haswell gives an AVX2 capable baseline with no AVX-512 support. The three heavy AVX-512 workloads see those AVX-512 instructions replaced by more AVX2 ones. Object Detection is a particularly prominent example, with 256-bit AVX2 dominating the instruction stream.

An Ivy Bridge ISA target gives an idea of what happens with AVX support, but not AVX2. AVX provides 256-bit vector registers and 256-bit load/store operations, but 256-bit vector math operations are limited to floating point. With SDE emulating an Ivy Bridge ISA target, the executed instruction stream skews heavily towards 128-bit packed SSE operations. 256-bit AVX still shows up in many workloads, but accounts for less than 1% of executed instructions across most of them. Only Background Blur makes heavy use of 256-bit AVX.

Lastly, Intel’s Pentium 4 “Prescott” represents an x86-64 baseline from long ago. It’s mostly here for curiosity, as there are likely very few systems in service limited to this level of ISA extension support. The executed instruction distribution is surprisingly close to that of Ivy Bridge. 128-bit packed SSE operations dominate across most workloads.

SPEC CPU2017 doesn’t explicitly target ISA features, including vector extensions. To maximize portability, SPEC CPU relies on compilers to find opportunities to use ISA features. With GCC 14.2.0 compiling for an AVX-512 capable target (Zen 5), vector ISA extensions do show up in a few floating point tests. 549.fotonik3d and 554.roms execute a lot of AVX-512 instructions. Elsewhere, auto-vectorization plays a comparatively minor role. It’s almost absent in SPEC CPU2017’s integer suite, except in 525.x264 and 548.exchange2. Those two workloads use some 128-bit vectors.

Instructions per cycle gives a quick overview of how “difficult” a workload is, for lack of a better word. Low IPC often indicates a workload is bound by branch mispredicts or cache misses, or less often, by hitting a particular deficiency in the core. Games for example tend to be low IPC workloads, bound primarily by the memory subsystem. High IPC in contrast points to a well fed core, with focus shifting to how fast the core can crunch through instructions.

Geekbench 6’s IPC distribution is skewed towards the medium to medium-high IPC range. Many workloads average well beyond 2 IPC on high performance cores like Intel’s Lion Cove and AMD’s Zen 5. Skymont isn’t explicitly a high performance core, but can often get close in IPC terms. However, it’s more prone to “glass jaw” cases, as can be seen in Clang, Asset Compression, Photo Library, and Structure From Motion.

Poking around with a couple of aarch64 cores tells much the same story. Arm’s Neoverse N1 and Neoverse N2 achieve reasonably good throughput considering their likely design targets. I consider anything above 2 IPC to be high for a 4-wide core, and Neoverse N1 hits plenty of those cases. Neoverse N2 is a 5-wide core, and sails past 3 IPC on several tests. Neoverse N1 does end up with somewhat low IPC on Object Remover, but I suspect that’s down to a weaker vector execution setup. Only Navigation sticks out as a persistently difficult, low IPC workload across all the CPUs I tested.

SPEC CPU2017 shows a wider IPC distribution, with more difficult low IPC workloads. That’s especially the case for SPEC’s integer suite, where 505.mcf and 520.omnetpp pose huge challenges even for sophisticated, high performance cores.

Geekbench 6’s IPC distribution lands closer to that of SPEC’s floating point suite, though SPEC still shows more IPC variation. 549.fotonik3d is a very low IPC outlier, and represents a corner case bound by how much bandwidth a single core can pull from DRAM. In general, Geekbench 6 has a much tighter IPC distribution across its workloads than SPEC does.

Branch prediction is both difficult and vital to high performance. Modern cores devote enormous resources to branch prediction. Geekbench 6 has several workloads that challenge branch predictors even on modern cores.

Navigation is by far the worst, with a high enough MPKI figure that mispredicts are likely the single biggest reason behind its low IPC. More advanced predictors help, but don’t solve the problem. That creates a curious situation where newer cores see little IPC improvement compared to older, smaller ones from over a decade ago.

Aside from Navigation, File Compression and Clang present moderate challenges for branch predictors. Newer predictors tend to help in Clang, while older ones tend to get shredded.

Asset Compression is an interesting case, because branch prediction normally isn’t a problem in that workload. MPKI is well under control on Lion Cove, Zen 5, and both Neoverse cores. Even much older predictors with less storage budget and less advanced prediction techniques do reasonably well. Piledriver gets away with 2.76 MPKI, while Jaguar is just a tad worse at 2.96 MPKI. However, Skymont’s normally competent predictor trips over itself in this workload, suffering 4.33 branch MPKI.
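For reference, IPC and MPKI are both simple ratios of performance counter values. Here is a minimal sketch of the arithmetic; the function names are my own, and the counter readings are made up for illustration rather than taken from any of the runs above.

```python
def ipc(instructions, cycles):
    """Instructions retired per core clock cycle."""
    return instructions / cycles


def mpki(mispredicts, instructions):
    """Branch mispredicts per thousand (kilo) retired instructions."""
    return mispredicts * 1000 / instructions


# Hypothetical counter readings, not measurements from this article.
instructions = 10_000_000_000
cycles = 4_000_000_000
mispredicts = 43_300_000

print(f"IPC:  {ipc(instructions, cycles):.2f}")
print(f"MPKI: {mpki(mispredicts, instructions):.2f}")
```

Normalizing mispredicts to instruction count rather than branch count makes it easier to compare workloads with different branch densities.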

Cache misses can be another major challenge for modern CPUs. On the instruction side, only Clang has a large enough code footprint to spill out of Lion Cove’s 5.2k entry op cache. On the other end of the spectrum, several Geekbench 6 workloads appear to spend much of their time executing tiny loops. In PDF Renderer, HDR, and Photo Filter, Lion Cove’s frontend is often able to feed the core out of its 192 entry loop buffer. That doesn’t improve core throughput because Lion Cove is still limited by its 8-wide rename and allocate stage downstream, but it does suggest excellent code locality in those workloads. High loop buffer usage can also help save power, because much of the frontend could be turned off when running code out of the loop buffer.

Zen 5 has an even larger op cache with 6K entries, and a well optimized one at that. Again, Clang is the only workload that offers any significant challenge to the op cache. Even then, Zen 5 can mostly feed itself from the op cache rather than the decoders. That’s very much where AMD wants to be, since Zen 5’s decoder setup is weaker than Lion Cove’s when running a single thread.

Data-side memory accesses tend to have more challenging patterns. Many of Geekbench 6’s workloads create heavy L1D miss traffic on Lion Cove. Most of these L1D misses are caught at L2. Lion Cove’s large 3 MB L2 pulls a lot of weight in making sure the core can sustain high IPC across much of Geekbench 6’s suite, despite high L3 latency. The new 192 KB L1.5D cache does well too, and occasionally captures the vast majority of L1D misses. Object Remover stands out as the only test with very high L2 miss traffic on Lion Cove.

L3 misses tend to be low, hinting at good data locality or predictable access patterns. Performance counters here specifically count the first retired load that created a miss to a 64B line. If a load requests data from a cache line that already has a miss request in progress, initiated either by a previous load or a prefetch, that counts as an FB (fill buffer) hit. I didn’t log that here. However, I did track traffic right in front of the CPU tile’s die-to-die interface, at what Intel calls the arbitration queue. I’m using that as a proxy for CPU-side L3 miss and DRAM bandwidth.

Many of Geekbench 6’s workloads request multiple gigabytes per second across the die-to-die interface, though none push the memory subsystem from a single thread as hard as SPEC CPU2017’s fotonik3d. Photo Filter is the most bandwidth heavy test in Geekbench 6, despite having a tame-looking 0.23 L3 MPKI figure. That suggests Lion Cove’s prefetcher is able to initiate many memory requests before an instruction asks for the data. Prefetching helps mitigate DRAM latency and keep IPC up. That low MPKI figure contrasts with behavior in games, which tend to miss L3 and can be sensitive to DRAM latency.
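A back-of-the-envelope calculation shows why a low L3 MPKI figure and multi-GB/s traffic point towards prefetching. The sketch below estimates the bandwidth that demand L3 misses alone would generate. The 0.23 MPKI figure comes from the text; the IPC and clock speed are placeholder assumptions, not measured values, and the estimate ignores writebacks.

```python
CACHE_LINE_BYTES = 64


def demand_miss_bandwidth_gbps(l3_mpki, ipc, clock_ghz):
    """Bandwidth in GB/s implied by demand L3 misses alone (reads only)."""
    instructions_per_sec = ipc * clock_ghz * 1e9
    misses_per_sec = instructions_per_sec * l3_mpki / 1000
    return misses_per_sec * CACHE_LINE_BYTES / 1e9


# 0.23 L3 MPKI is from the text. IPC and clock are assumed placeholders.
bw = demand_miss_bandwidth_gbps(l3_mpki=0.23, ipc=3.0, clock_ghz=5.0)
print(f"Demand miss bandwidth: {bw:.2f} GB/s")
```

Under those assumptions, demand misses account for only a fraction of a gigabyte per second, so most of the observed traffic would have to come from requests that retired loads never saw as L3 misses.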

AMD’s performance events count demand data cache refills, rather than tagging loads with data sources and counting at retirement. Demand means a refill initiated by an instruction, as opposed to the prefetcher. They’ll generally give higher counts compared to events at retirement, especially if a lot of instructions execute but are later flushed (for example from branch mispredicts).

With 96 MB of L3, the Ryzen 7 9800X3D practically eliminates L3 misses for the Navigation workload and improves in Object Remover too. Elsewhere, the difference in where events are counted and the low L3 miss activity overall makes it hard to tell if the larger cache helps compared to the 36 MB L3 in Arrow Lake. Further up in the memory subsystem, Zen 5’s smaller 1 MB L2 can still be very effective, but often suffers more misses than Lion Cove’s larger L2. AMD has to rely a little more on their L3, which does have better performance than Intel’s.

I also tracked traffic at the Ryzen 7 9800X3D’s memory controllers. I tried to gather data at the IO die side of the die-to-die interface (CCMs), but couldn’t get Data Fabric performance events figured out for write traffic. Unified Memory Controller (UMC) data should be adequate anyway because I have the iGPU disabled and should have very little IO traffic while running Geekbench 6 workloads. The Ryzen 7 9800X3D overall has lower DRAM traffic than the Core Ultra 9 285K, likely thanks to the former’s larger L3. Generally though, the pattern is similar. Photo Filter stands out as a bandwidth heavy but prefetch friendly workload. HTML5 Browser and Horizon Detection slot into the same category.

Both Geekbench 6 and SPEC CPU2017 scores are given in speedup relative to a reference system. Geekbench 6’s reference system is a Dell Precision 3460 with a Core i7-12700, which is set to a baseline score of 2500. SPEC CPU2017’s reference system is a Sun Fire V490 with 2.1 GHz UltraSPARC-IV+ processors, which is set to a score of 1.

I find SPEC CPU2017’s score easier to interpret because it’s simply a speedup ratio, while Geekbench 6’s score takes a bit more math to get there. On the flip side, Geekbench 6’s reference system is more modern and relevant. The Sun Fire V490 dates back to 2009, and already performed poorly compared to systems from just a few years later. A Sandy Bridge or Bulldozer system would provide a far better reference point.
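The extra math for Geekbench 6 is just a division by the baseline score. A minimal sketch, assuming scores scale linearly with performance relative to the 2500 point reference (the helper name is hypothetical):

```python
GB6_BASELINE_SCORE = 2500  # Core i7-12700 reference system, per the text


def gb6_speedup(score):
    """Convert a Geekbench 6 score to a speedup ratio over the reference."""
    return score / GB6_BASELINE_SCORE


# Example scores chosen for illustration, not results from this article.
for score in (1250, 2500, 3000):
    print(f"score {score}: {gb6_speedup(score):.2f}x the reference system")
```

A SPEC CPU2017 score needs no such conversion, since its reference system is already pinned to 1.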

Geekbench 6’s reference system also has strong vector execution capabilities, assuming the workloads mostly ran on the 12700’s P-Cores. Geekbench 6’s workloads tend to be vector heavy, so the baseline sets rather demanding expectations. Skymont has weak vector execution despite improving on prior E-Cores, and often falls below the 2500 score baseline. The same applies to Arm’s Neoverse N1 and N2, though those cores also fall behind because they’re optimized for lower performance targets in general.

Geekbench 6 is a vector-heavy suite that emphasizes core throughput. Many workloads have small instruction footprints, enjoy good branch prediction accuracy, and are prefetcher friendly. There are exceptions of course. Navigation stands out as one of the only low IPC workloads, bound by branch mispredicts. Clang does run into some op cache misses, though the larger op cache on Zen 5 still does very well, and other factors seem to hold back its IPC before Zen 5’s per-thread, 4-wide decoders present a limitation.

SPEC CPU2017 shows some of the same characteristics, with a large number of workloads that don’t pressure the memory subsystem as much as games do. While SPEC captures a broader range of IPC-related challenges, its emphasis on portability means it doesn’t stress vector execution as much as Geekbench 6 can. Both suites ultimately serve different purposes, and neither can be used in place of the other. Going forward, I expect both SPEC and Primate Labs to continue evaluating application behavior, and update their workloads as typical application behavior evolves.

2026-04-08 12:29:08

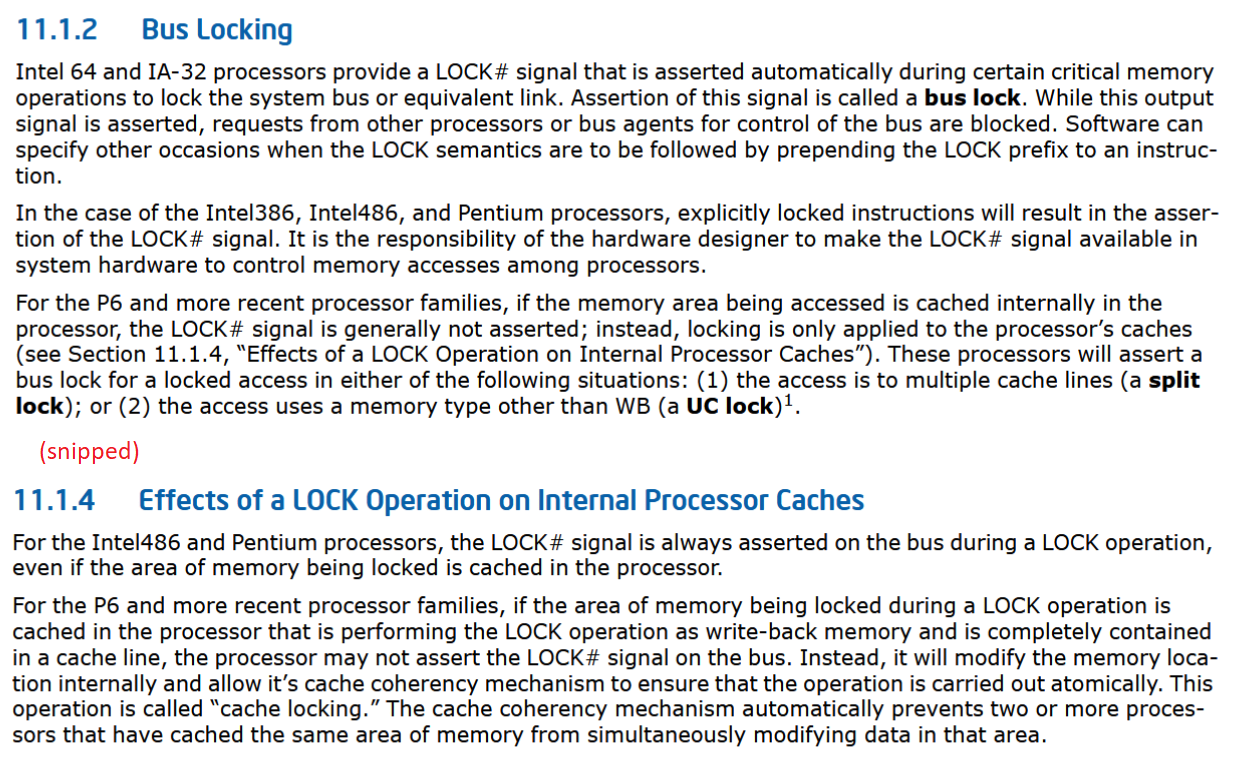

“Split locks” are atomic operations that access memory across cache line boundaries. Atomic operations let programmers perform several basic operations in sequence without interference from another thread. That makes atomic operations useful for multithreaded code. For instance, an atomic test and set can let a thread acquire a higher level lock. Or, an atomic add can let multiple threads increment a shared counter without using a software-orchestrated lock. Modern CPUs handle atomics with cache coherency protocols, letting cores lock individual cache lines while letting unrelated memory accesses proceed. Intel and AMD apparently don’t have a way to lock two cache lines at once, and fall back to a “bus lock” if an atomic operation works on a value that’s split across two cache lines.

Bus locks are problematic because they’re slow, and taking a bus lock “potentially disrupts performance on other cores and brings the whole system to its knees”. AMD and Intel’s newer cores can trap split locks, letting the kernel easily detect processes that use split locks and potentially mitigate that noisy neighbor effect. Linux defaults to using this feature and inserting an artificial delay to mitigate the performance impact.

I have a core to core latency test that bounces an incrementing counter between cores using _InterlockedCompareExchange64. That compiles to lock cmpxchg on x86-64, which is an atomic compare and exchange operation. I normally target a value at the start of a 64B aligned block of memory, but here I’m modifying it to push the targeted value’s start address to just before the end of the cache line. Doing so places some bytes of the targeted 8B (64-bit) value on the first cache line, and the rest on the next one. As expected, “core to core latency” with split locks ranges from bad to horrifying.
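Whether an access is split comes down to address arithmetic. The sketch below checks whether an access of a given size touches more than one 64B line. The function name and the example offsets are my own and don’t reflect the exact offset used in the test.

```python
CACHE_LINE_BYTES = 64


def is_split(address, size, line_bytes=CACHE_LINE_BYTES):
    """True if [address, address + size) touches more than one cache line."""
    first_line = address // line_bytes
    last_line = (address + size - 1) // line_bytes
    return first_line != last_line


# An 8B value at various offsets within a 64B aligned block.
for offset in (0, 56, 57, 60, 63):
    print(f"offset {offset:2d}: split = {is_split(offset, 8)}")
```

An 8B value starting at offset 56 still fits in the first line because it occupies bytes 56 through 63. Any start offset from 57 through 63 spills onto the next line.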

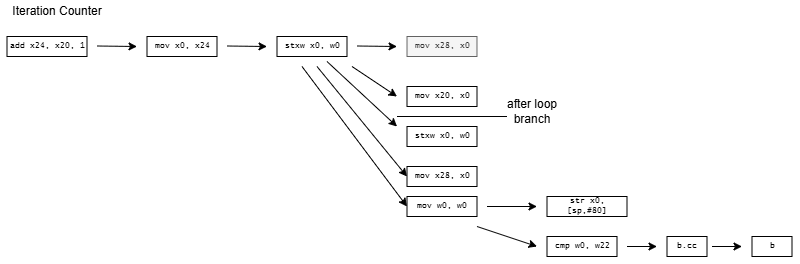

To assess the potential disruption from split locks, I ran memory latency and bandwidth microbenchmarks on cores excluded from the core to core latency test. Besides microbenchmarks, I ran Geekbench 6’s photo filter and asset compression workloads. The photo filter workload generates a lot of cache miss traffic, while asset compression tends to be the opposite. Many recent CPUs only achieve their highest clock speeds with two or fewer cores active. One core will be loaded by the workload being tested for contention effects, and another pair will be used for the core to core latency test. I therefore turned off boost or lowered clock speeds on some of the tested hardware to reduce noisy neighbor effects from clock speed variation, helping isolate the effects of split locks.

Intel’s Arrow Lake gets to be the first victim. Normal core to core latency results look like this:

Split locks send latency to 7 microseconds, which remains mostly constant across different core types.

On Arrow Lake, split locks only affect L2 misses. It’s close to a “bus lock” in the traditional sense because it affects the first level in the memory hierarchy shared by all CPU cores. In theory a program can be completely unaffected by split locks as long as it keeps hitting in L2 or faster caches.

Past L2, split lock contention roughly halves memory access performance. Curiously, the 4 MB L2 caches shared across quad-core E-Core clusters aren’t affected, even if split locks are being looped from a pair of cores within the same cluster.

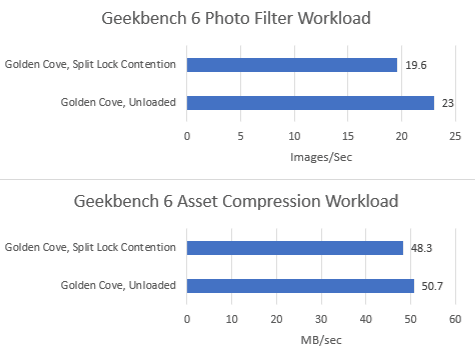

Split locks heavily impact GB6’s photo filter workload. Asset compression also takes a hit, but gets away relatively unscathed.

Zen 5 has better split lock latency than Arrow Lake, though 500 ns is still bad in an absolute sense. As on Arrow Lake, core cluster boundaries don’t affect split lock performance.

Split locks trash everything beyond L1D. L2 and L3 performance regresses by a factor of ten. Zen 5 suffers severe penalties from split locks compared to Arrow Lake, though this situation is certainly a very contrived and unrealistic one.

Both Geekbench 6 workloads take heavy performance regressions under split lock contention. Asset Compression doesn’t generate a lot of L3 miss traffic, but even an L1D miss is very costly with split locks being looped on Zen 5.

Alder Lake uses a hybrid core setup with Golden Cove P-Cores and Gracemont E-Cores. Split locks perform horribly, and also invert the latency picture compared to intra-cacheline locks. Bouncing a cache line between P-Cores normally happens with less latency than on the E-Cores. Prior to Arrow Lake, Intel had to take a trip through the ring bus even when bouncing a line between E-Cores in the same cluster.

With split locks, P-Cores suffer agonizingly poor latency. Split locks between P and E-Cores have just above 7 microseconds of latency, matching Arrow Lake. E-Cores have the best split lock latency.

Alder Lake’s memory subsystem tolerates split locks well. L3 performance only takes a modest hit. DRAM latency goes up when split locks come into play, but not by a large amount.

Geekbench 6’s photo filter and asset compression workloads barely show any performance loss, as foreshadowed by microbenchmark results. Alder Lake might have particularly poor split lock performance, but does an excellent job of insulating other applications from their effects.

Zen 2’s split lock latency lands above Zen 5’s, though it’s still better than what Intel’s newer architectures achieve. Latencies remain constant regardless of cluster boundaries, mirroring behavior seen with Zen 5 and Arrow Lake.

As on Zen 5, split locks have a devastating effect on any L1 miss. Bandwidth and latency regress by around 10x with the core to core latency test spamming split locks.

Curiously, L3 latency takes another hit after 2 MB if split locks are being looped on the same CCX. Zen 5 didn’t have an extra inflection point under split lock contention even within the same CCX.

As on Zen 5, both Geekbench 6 workloads suffer heavily under split lock contention.

Intel’s older Skylake architecture actually has better split lock latency than Arrow Lake and Alder Lake. It’s a bit worse than Zen 2, but doesn’t reach into the microsecond range.

Skylake also takes split lock contention penalties for L2 misses, but not for L2 or L1 hits.

Both tested Geekbench 6 workloads show measurable performance impact from split locks. Photo filter loses 34.24% of its performance, while asset compression takes a lower 16.2% loss.

AMD’s Piledriver has high core to core latency results, but remarkably has the best results for split locks. Split lock latency is double to triple the latency of intra-cacheline locks, but that’s worlds better than on newer platforms.

Split locks don’t affect cache hits. Remarkably, that extends to the shared L3 cache. DRAM performance does get hit, with latency doubled and bandwidth cut by more than half.

Microbenchmark results translate to higher level workloads. Geekbench 6’s photo filter and asset compression workloads both get away with only minor performance impact. The hit to DRAM performance certainly hurts, but a Piledriver core has 10 MB of cache completely unaffected by split locks. No other hardware tested here can field that much split-lock-unaffected cache capacity.

Goldmont Plus has high split lock latency, though it’s still curiously better than Intel’s more modern Arrow Lake and Alder Lake.

Just like Arrow Lake’s E-Cores, Goldmont Plus can hit L2 and be completely unaffected by split locks. DRAM bandwidth does take a substantial drop, but latency only regresses slightly. It’s worth noting that Goldmont Plus starts with very poor baseline DRAM latency though.

Slight differences in L1D and L2 performance can be attributed to lower clock speeds, since I didn’t underclock the chip.

Both Geekbench 6 workloads show performance regressions, but it’s not much considering there are also multi-core clock speed drops in the mix. Much of the score decrease for asset compression can probably be attributed to lower clocks. The photo filter workload definitely suffers, but not to the same extent as on Arrow Lake or AMD’s Zen 2 or Zen 5.

The term “bus lock” dates back to early multiprocessor systems, which placed multiple CPUs on a shared bus. Each CPU had its bus pins connected to the same motherboard traces, which connected them to the chipset. Locking the bus prevents other CPUs from making memory accesses, preventing them from interfering with atomic operations. Modern CPUs no longer use a shared bus, and instead use non-blocking, distributed interconnect setups. It’s not clear what a “bus lock” really means. And while Intel and AMD still use the terminology, “bus locks” caused by split locks clearly have a range of effects depending on the hardware in question.

I suspect Intel’s bus locks are implemented in their IDI (in-die interconnect) protocol. P-Cores and E-Cores use IDI to talk to the uncore, which starts with the ring bus on most Intel client designs. Goldmont Plus’s performance monitoring documentation curiously indicates that a “bus lock” is a special request to the L2 controller, though L2 hits aren’t impacted just like on other Intel designs.

AMD’s implementation goes beyond a traditional bus lock in Zen 2 and Zen 5, with core-private L2 caches impacted as well. One possibility is that AMD falls back to the Infinity Fabric layer when handling split locks, though I don’t have strong evidence to support that. Performance monitoring events increment at the Data Fabric’s Coherent Stations during core to core latency test runs. But if they’re responsible, they only handle control path traffic because the increments aren’t proportional to L2 hit traffic observed when also running a memory bandwidth microbenchmark.

Piledriver also throws a wrench into the works when trying to define bus locks. Maybe AMD’s old architecture doesn’t use bus locks at all, and is able to use its cache coherency protocol to let unrelated accesses proceed as long as they hit cache.

Overall, there’s no good analogy to the classical shared bus on a modern CPU. Perhaps CPU designers should drop the term “bus lock” from documentation, and precisely document how a split lock impacts their systems.

Linux’s split lock mitigation traps split locks and introduces millisecond level delays, aiming to both make split locks “annoying” and provide better quality of service to other applications.

Testing on Linux without touching sysctl settings shows latency comparable to mechanical hard drive seek time, which is an eternity for a CPU. This kind of delay should also eliminate the noisy neighbor effects above, since the split lock core to core latency test simply isn’t running most of the time.
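To check what a given system is doing, the mitigation setting can be read back from procfs. This sketch assumes the kernel exposes the `kernel.split_lock_mitigate` sysctl at `/proc/sys/kernel/split_lock_mitigate`, which newer x86 kernels do as far as I know. The helper name is hypothetical, and it returns `None` when the file isn’t present.

```python
from pathlib import Path

# Assumed procfs location of the kernel.split_lock_mitigate sysctl.
SYSCTL_PATH = Path("/proc/sys/kernel/split_lock_mitigate")


def read_split_lock_mitigate(path=SYSCTL_PATH):
    """Return the sysctl value as an int, or None if it can't be read."""
    try:
        return int(Path(path).read_text().strip())
    except (OSError, ValueError):
        return None


value = read_split_lock_mitigate()
if value is None:
    print("split_lock_mitigate is not exposed on this system")
else:
    print(f"kernel.split_lock_mitigate = {value}")
```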

I think Linux’s default makes sense for multi-user or server systems, which value consistent performance when running a large number of tasks. Limiting noisy neighbor effects from a rogue application, like my test code above that spams split locks, is a sensible option. I can see it being used alongside other QoS mechanisms like running at lower, locked clock speeds, partitioning caches, and throttling memory bandwidth usage to ensure consistency.

For consumer systems though, Linux’s default feels like an overreaction to a problem that didn’t exist. A core-to-core latency test uses locks at a rate no normal application would. A split lock variant of that test is even more ridiculous. Games have apparently been using split locks for quite a while, and have not created issues even on AMD’s Zen 2 and Zen 5. However, a game that runs at 10 FPS on Linux and 200 FPS on Windows is a problem for Linux.

Linux traditionally struggled on the desktop scene because it required a high level of technical skill and substantial troubleshooting time from users. Linux distros have made tremendous progress in chipping away at those requirements over the past two decades. But doing a scream test on users - that is, attempting to impose a vision of the “proper” way to do things by creating an artificial performance problem - is a step backward. Ease of use is everything in the consumer world. Every OS problem that a user has to troubleshoot is a failure on the OS’s part. Going forward, I hope Linux dodges avoidable problems like this.

Split locks don’t stop other cores from running code. They’re not the hardware equivalent of Python’s global interpreter lock, or another similar construct that blocks concurrency. On modern CPUs, split locks don’t even block all memory accesses. They only introduce a performance penalty when those memory accesses miss a certain cache level. That performance penalty varies wildly too.

Applications tend to suffer varying degrees depending on how often they miss cache. Hardware has a huge influence too. AMD’s Piledriver and Intel’s Alder Lake do the best job of minimizing noisy neighbor effects. Piledriver is especially impressive because it manages that while delivering the best split lock latency across all hardware tested here. On the other hand, AMD’s Zen 2 and Zen 5 suffer heavily in this corner case. Another application could slow down so much that you’d be forgiven for thinking you got a version of it compiled using Claude’s C Compiler.

From these results, programmers should obviously strive to avoid split locks. They perform poorly, and have a heavier effect on other applications than intra-cacheline locks. From the hardware side, there’s clearly room to optimize split locks for better performance and reduced noisy neighbor effects. I hope to see both hardware and software developers take a measured, data-driven approach to tackling the split lock problem, and one that doesn’t involve introducing new performance problems.

Zen 5 in the Ryzen AI MAX+ 395 (Strix Halo) has higher split lock latency than its desktop Zen 5 counterparts. I suspect split locks are handled at Infinity Fabric’s Coherent Station blocks, but curiously those counters also increment for intra-cacheline locks that are handled within a CCX. Perhaps the Coherent Station gets some sort of notification about a line changing state.

Zen 4 in a high performance mobile implementation shows similar split lock latency to Zen 2.

Arrow Lake E-Cores have a lot of monitoring sophistication around bus locks. Under the split lock core to core latency test, any E-Core involved gets blocked for nearly all cycles. However, other E-Cores are only blocked around half the time. That would explain the roughly 50% L3/DRAM performance degradation, though it does not explain how L2 performance remains unscathed.

I took rough notes on die to die traffic when running Geekbench 6 workloads on Arrow Lake by observing performance counters sampled at 1 second intervals and picking out spikes. My goal isn’t to give an accurate figure for average L3 miss traffic per workload, but rather to select two interesting workloads for measuring split lock impact.

2026-04-01 15:02:33

Update (4-2-2026): After careful consideration of changing conditions, especially the change in current date, the transition to Claude’s C Compiler has been rescinded. The original article remains below.

Our society has gone all-in on AI. Software and related technologies represent one of the biggest sectors of the US economy, and technology companies have all but bet the farm on LLMs being able to do everything under the sun. By the law of sunk costs*, AI investments will increase without bound until that objective is achieved. AGI is therefore inevitable, and everyone must fully commit to ensuring AI’s success because things will get more painful the longer it takes. When bank money runs out, the government will bail them out. When government funds are exhausted, aliens will get involved, followed by various deities. Please don’t ask what happens after that. Anyway, it’s in everyone’s best interest to embrace the AI revolution with absolute conviction. Therefore, I’m excited to announce that Chips and Cheese will stand at the vanguard of the AI revolution by adopting Claude’s AI-generated compiler, CCC.

CCC, or Claude’s C Compiler, is a “from-scratch optimizing compiler with no dependencies […] able to compile the Linux kernel.” Anthropic used their Claude large language model (LLM) to write the entire compiler. Human effort was relegated to prompting and maintaining AI coding agents, and standing up a very robust test suite to keep those coding agents on track. Past Chips and Cheese articles used microbenchmarks and benchmarks built using GCC. GCC was developed by humans, which is backwards and reactionary by the standards of many cadres today. Comparing GCC and CCC should be a powerful showcase of revolutionary software development practices.

Microbenchmarking is a useful tool for understanding low level hardware characteristics like cache and memory latency. My first shot at testing load latency used a simple loop of dependent array accesses in C:

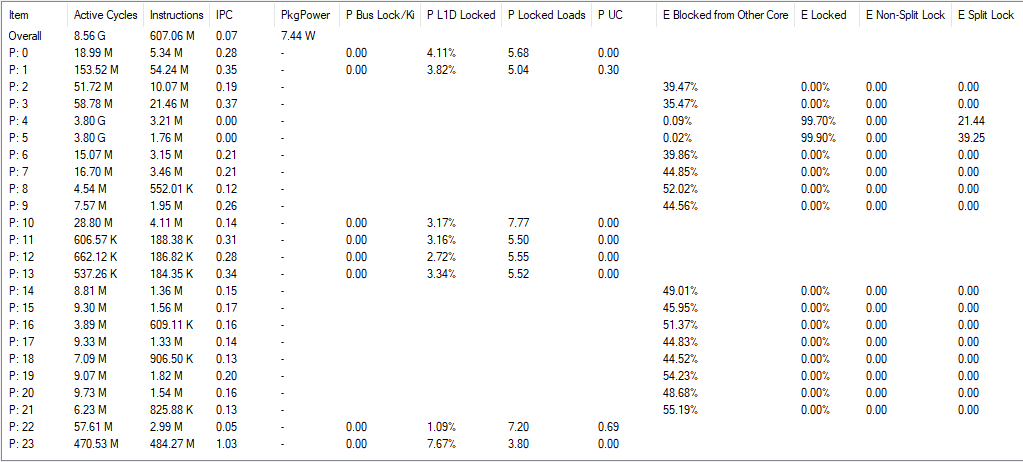
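The structure of such a test can also be sketched in Python. Interpreter overhead dominates here, so the timing says nothing about hardware latency and the sketch only illustrates the access pattern. How the array gets filled isn’t covered in the text. Using Sattolo’s algorithm to build one cycle that visits every element is my assumption, as are the function names.

```python
import random
import time


def build_chain(n, seed=0):
    """Fill an array so that following A[i] visits every index in one cycle."""
    rng = random.Random(seed)
    a = list(range(n))
    # Sattolo's algorithm: swapping only with a strictly lower index
    # yields a permutation made of a single n-element cycle.
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)
        a[i], a[j] = a[j], a[i]
    return a


def chase(a, iterations):
    """Each access depends on the previous result, so accesses can't overlap."""
    current = 0
    total = 0
    for _ in range(iterations):
        current = a[current]
        total += current  # sink so the result is used
    return current, total


chain = build_chain(1024)
iterations = 100_000
start = time.perf_counter()
chase(chain, iterations)
elapsed = time.perf_counter() - start
print(f"{elapsed / iterations * 1e9:.1f} ns per iteration (mostly interpreter overhead)")
```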

current = A[current] creates a dependency chain where one array access result feeds into the next, preventing accesses from overlapping even on an out-of-order core. Dividing access time by iteration count gives latency. CCC and GCC compile the loop into the following assembly code:

CCC generates more code from the same high level source than GCC does, and sometimes, more is better. CCC’s code is more ideologically correct from a RISC standpoint. GCC uses indexed addressing modes that express a dependent shift, add, and load sequence in a single instruction. RISC advocates for a small set of simple instructions and larger code sizes, and indexed addressing modes are quite complex. CCC follows the RISC philosophy, and splits the array access into separate shift, add, and load instructions.

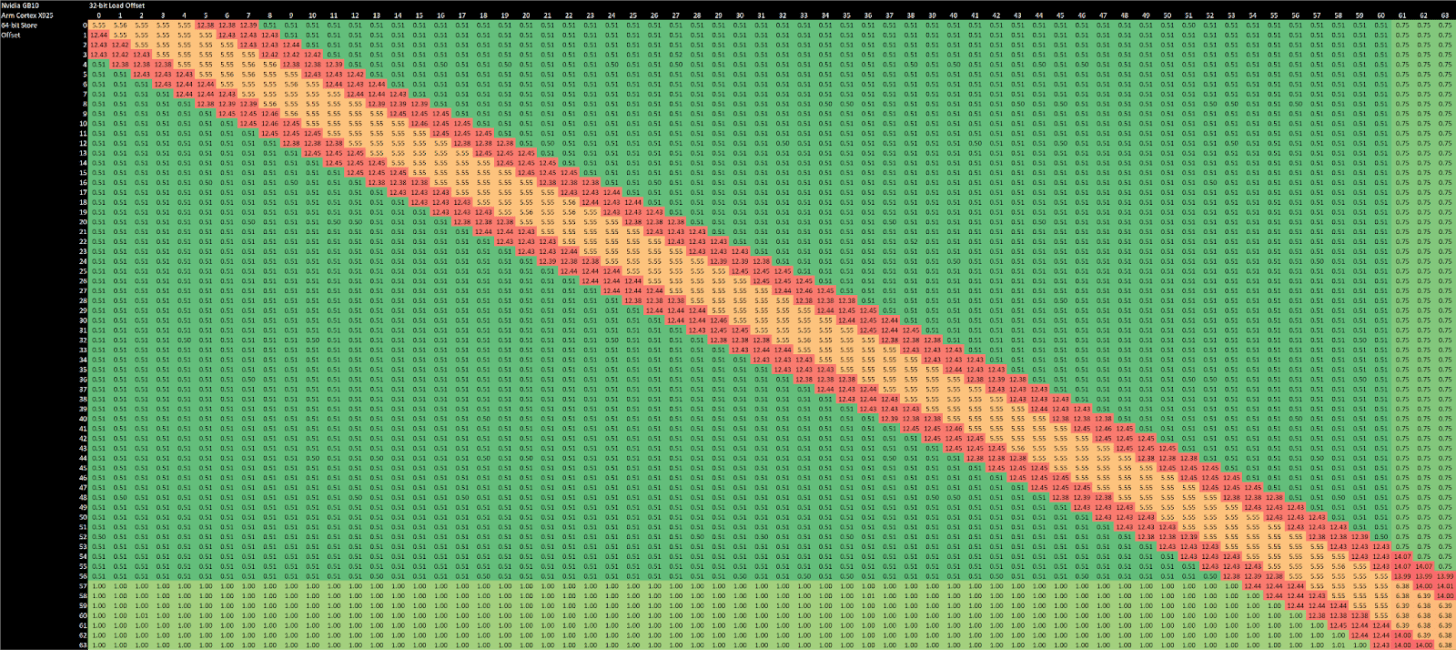

CCC doesn’t stop there and exceeds quotas by decomposing current = A[current] into a nine-instruction dependency chain. On x86-64, it shuffles the index through several registers and sends it on a trip through the stack. It does the same on aarch64, but at least keeps the index in registers. On both architectures, CCC brings the array base address in from the stack, and sends it on another round trip through the stack for good measure before using it.

Compiling the microbenchmark with CCC results in higher observed array access latency. Zen 5 and Lion Cove need two extra cycles, likely from the shift + add dependency chain. Exceptionally strong move elimination and zero-latency store forwarding seem to let Intel and AMD collapse the nine-instruction dependency chain. After that, their out-of-order core width absorbs the additional instructions. Arm’s recent cores have less robust move elimination and can’t do zero-latency store forwarding. They suffer a 6-7 cycle penalty.

Smaller cores with narrower execution engines struggle with CCC-compiled code, likely because they don’t have enough core throughput to absorb loop overhead and the sum += current sink statement. CCC also compiles those into very long assembly sequences, placing pressure on core throughput.

In-order cores suffer the largest penalties. They’re not embracing the AI revolution with adequate enthusiasm, and should seriously reconsider their stance or risk getting denounced.

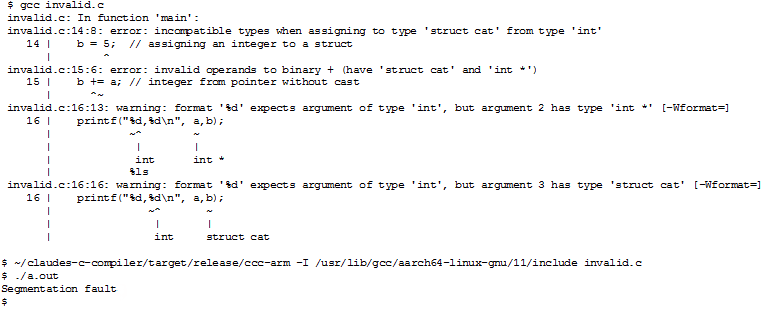

Old, traditional compilers force the masses to follow a tight set of rules dreamt up by the bourgeoisie. If a programmer doesn’t follow these rules, the compiler will complain and may even refuse to complete the compilation process. Warnings and errors are of no use to the proletariat, who just want results. CCC boldly crushes type systems with revolutionary fervor, as if to shout “out with the old, in with the new!” Take the following code:

GCC cowers behind error messages with weak excuses about incompatible types and fails to produce a binary. In doing so, GCC engages in counter-revolutionary wrecking by undermining the work of the masses, who have to waste time fixing errors.

Rather than make excuses, CCC raises the revolutionary banner high and produces an executable.

Claude’s C Compiler only compiles C code. All other languages are therefore invalid. Eight of SPEC CPU2017’s workloads are C-only. I tested them on the following cores:

Arm Cortex X925 in Nvidia’s GB10

AMD Zen 5 in the Ryzen 7 9800X3D, with boost disabled

Intel Lion Cove in the Core Ultra 9 285K

I ran with boost disabled on the 9800X3D because running CCC-built workloads takes a lot of time. Extra time spent in high performance states means more power consumption, and we should save every last bit of power possible to feed AI applications.

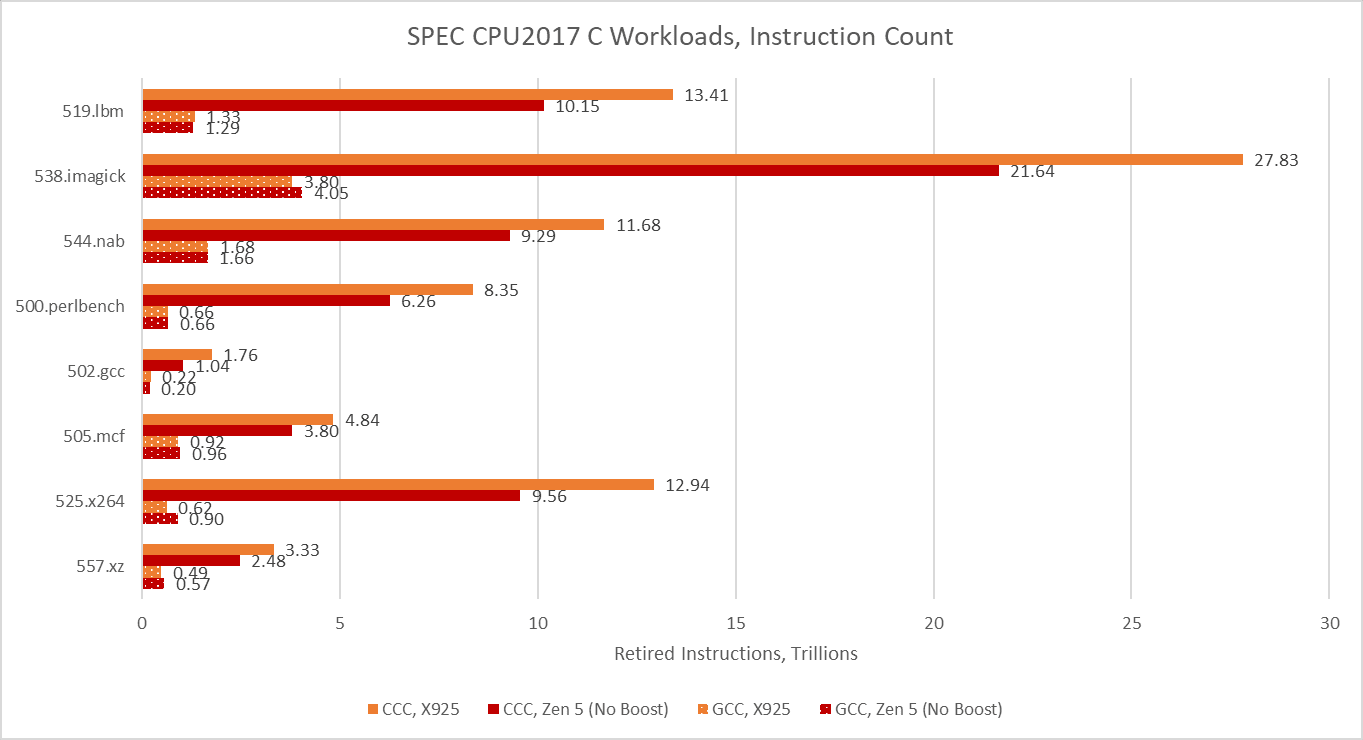

CCC’s version of 502.gcc consistently crashed with a segmentation fault on Cortex X925, even though it managed to complete some runs on x86-64. When it ran to completion on x86-64, CCC’s 502.gcc delivered 23.6% and 27.1% of the performance of its GCC-compiled counterpart on Lion Cove and Zen 5, respectively. CCC hit its relative performance peak on 505.mcf, where it managed less than a 35% regression versus GCC. Across the eight tested SPEC CPU2017 workloads, using CCC on average regressed performance by somewhere above 70%.
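
Relative performance figures like these convert into a regression percentage and a run time multiplier with simple arithmetic. A minimal sketch, assuming the score scales inversely with run time; the helper names are made up for illustration, and the inputs are the 502.gcc figures from the text.

```python
def regression_pct(relative_perf_pct):
    """Performance lost versus the baseline build, in percent."""
    return 100.0 - relative_perf_pct


def slowdown_factor(relative_perf_pct):
    """How many times longer the same work takes versus the baseline build."""
    return 100.0 / relative_perf_pct


# CCC-built 502.gcc as a percentage of the GCC-built binary's performance.
for core, rel in (("Lion Cove", 23.6), ("Zen 5", 27.1)):
    print(f"{core}: {regression_pct(rel):.1f}% regression, "
          f"{slowdown_factor(rel):.2f}x the run time")
```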

Arm’s Cortex X925 suffers hardest from the CCC switch. With GCC, Arm’s core was very much a match for Zen 5 and Lion Cove. Now, it loses in every workload except for 500.perlbench, where Zen 5 takes a dive to let X925 slip ahead. But X925 still can’t compare to Lion Cove in that workload.

In turn, Lion Cove can’t compare to Sun’s UltraSPARC IV+. SPEC CPU2017 scores are speedup ratios relative to a reference machine, which is “a historical Sun Microsystems server, the Sun Fire V490 with 2100 MHz UltraSPARC-IV+”. Lion Cove may have the best 500.perlbench score of the three cores tested here at 0.76, but that’s below 1. A vibe-coded compiler is the product of the latest software development techniques and can’t be at fault. It therefore follows that Sun’s UltraSPARC-IV+ is amazing. The UltraSPARC-IV+ is a dual core version of the UltraSPARC III, which uses a 4-wide in-order pipeline. Lion Cove is an 8-wide out-of-order design with massive reordering capacity and much higher clock speeds, but clearly none of that is necessary to win.

Performance is overrated. Analysts today agree that IPC is more important than performance, and CCC code achieves excellent IPC. On 525.x264, Arrow Lake averages 6.09 IPC when running CCC code. That’s the highest IPC average across all SPEC CPU2017 runs above. GCC’s best IPC average tops out at a wimpy 5.5. CCC’s IPC advantage isn’t universal though, and GCC does manage higher IPC on 538.imagick.

As foreshadowed with the cache and memory latency microbenchmark, CCC takes a great leap forward in instruction counts across all eight SPEC CPU2017 workloads. Some workloads execute more than 10x as many instructions.
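
How IPC and instruction count combine into run time can be sketched with run time = instructions / (IPC × clock). Only the 6.09 IPC figure and the 10x instruction count multiplier come from the text. The baseline instruction count, baseline IPC, and clock speed below are hypothetical inputs chosen for illustration.

```python
def run_time_s(instructions, ipc, clock_hz):
    """Seconds needed to retire `instructions` at a given average IPC and clock."""
    return instructions / (ipc * clock_hz)


CLOCK_HZ = 5.0e9             # hypothetical clock speed
BASELINE_INSTRUCTIONS = 1e12  # hypothetical instruction count for the GCC build
BASELINE_IPC = 3.0            # hypothetical IPC for the GCC build

gcc_time = run_time_s(BASELINE_INSTRUCTIONS, BASELINE_IPC, CLOCK_HZ)
ccc_time = run_time_s(10 * BASELINE_INSTRUCTIONS, 6.09, CLOCK_HZ)
ratio = ccc_time / gcc_time

print(f"GCC build: {gcc_time:.1f} s, CCC build: {ccc_time:.1f} s, {ratio:.2f}x longer")
```

Under these made-up inputs, doubling IPC still leaves the run nearly five times longer, because the instruction count grew by a larger factor than IPC did.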

Hardware performance monitoring reveals a different set of challenges for the CPU pipeline when comparing GCC- and CCC-compiled code. Branch prediction is less important because branch count didn’t grow proportionately to total instruction count. SPEC CPU2017’s three C-only floating point workloads become very core-bound when built with CCC. On Zen 5, that means retirement is blocked by an instruction that doesn’t read from memory. That indicates performance is limited by how fast the core can execute instructions, rather than how fast the memory subsystem can feed the core with data. The five integer workloads become less frontend bound, and face steeper backend challenges. CCC workloads across the board achieve higher core utilization than their GCC-compiled counterparts, as the IPC figures from before suggest.

500.perlbench is a catastrophically bad case for Zen 5 when compiled with CCC because of a much lower op cache hit rate. Zen 5 primarily feeds itself with a 6K entry, 16-way op cache, which can fully feed the 8-wide rename/allocate stage. 4-wide, per-thread decoders handle larger instruction footprints. Typically, applications with poor code locality suffer from other IPC limiters besides frontend throughput, especially when the core isn’t running a second thread that can hide latency. 500.perlbench has higher IPC potential if not for the per-thread decode throughput limitation, putting Zen 5 behind X925 and very far behind Lion Cove. It’s the first case I’ve seen outside a microbenchmark where Zen 5’s decoder arrangement comes out to bite. Zen 5’s op cache manages high coverage in other workloads, so 500.perlbench is an exceptional case.

CCC challenges Lion Cove’s pipeline in a similar fashion, though Lion Cove is generally more backend bound than Zen 5. This could be because Lion Cove sits atop a higher latency uncore. Or, the difference could be down to accounting methodology. For example, Intel might be counting cycles where any instruction is delayed by a load as “memory bound”, which could lead to higher counts than AMD’s approach. Lion Cove’s 8-wide decoder excels in 500.perlbench, where frontend limitations are minor compared to what they were in Zen 5.

Arm’s Cortex X925 is very core-bound when running CCC code, and not just in the three FP workloads. I’m distinguishing between core-bound and memory-bound backend slots by looking at the proportion of backend bound cycles covered by the STALL_BACKEND_MEMBOUND event, which counts when there’s a backend stall and the “backend interface to memory is busy or stalled”.
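
That split is a simple ratio. A minimal sketch, assuming total backend stall cycles come from a separate counter; only `STALL_BACKEND_MEMBOUND` is named in the text, and the counter values below are made up for illustration.

```python
def backend_split(backend_stall_cycles, membound_stall_cycles):
    """Divide backend-bound cycles into memory-bound and core-bound fractions."""
    if backend_stall_cycles <= 0:
        return {"memory_bound": 0.0, "core_bound": 0.0}
    memory = membound_stall_cycles / backend_stall_cycles
    return {"memory_bound": memory, "core_bound": 1.0 - memory}


# Hypothetical counter readings, not measured values.
split = backend_split(backend_stall_cycles=1_000_000,
                      membound_stall_cycles=250_000)
print(f"memory bound: {split['memory_bound']:.0%}, core bound: {split['core_bound']:.0%}")
```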

I partially attribute the core-bound slot increase to Cortex X925 not having the robust move elimination and zero-latency store forwarding that Lion Cove and Zen 5 do. Long chains of register-to-register MOVs or frequent trips through the stack are rare in typical code. As evidence, Intel's Ice Lake only took a 1-3% performance loss with move elimination disabled. Move elimination therefore acts as a cherry on top in most applications, with most performance coming from out-of-order execution basics and an efficient cache hierarchy to feed the core. Of course, that changes with CCC.

519.lbm’s large increase in backend memory bound slots looks related to store forwarding latency. A hot section in CCC-compiled 519.lbm repeatedly spills register x0 to the stack, only to reload it several instructions later, making sure the memory system contributes to all phases of code execution. Store forwarding latency forms part of a long dependency chain, and X925 can’t do zero-latency forwarding the way Zen 5 and Lion Cove can.

Compilers are crucial to modern software development, and performance of compiled code is key to making high level languages practical. Switching to CCC causes a 70%+ performance degradation across C-only SPEC CPU2017 workloads. Most consumers will readily accept this, because a fast core like Intel’s Lion Cove still does well when running CCC code. As evidence, AMD’s Bulldozer architecture fascinated enthusiasts about 15 years ago. Bulldozer is 36.8% faster than Lion Cove, the fastest CPU tested here for running CCC code. Simply overclocking Lion Cove to 8 GHz could close much of the performance gap.

Of course, some provocateurs may only reach 7.5 GHz, undoubtedly due to a combination of personal skill issues and insufficient enthusiasm for the AI revolution. Severe agitators may theorize that Bulldozer’s level of single threaded performance is not top-notch in some scenarios, and demand ever more performance. To prevent these people from discrediting the revolution, the stock performance problem must be addressed.

Performance problems can be addressed from either hardware or software, because hardware and software optimization go hand-in-hand. Well optimized software performs well across a wide variety of hardware. Likewise, well designed hardware delivers good performance across a wide range of software. CCC-generated code dramatically shifts what software demands from hardware, upsetting the existing social contract between software and hardware. Faulting the software side would undermine the crowning achievements of the AI revolution. Therefore, hardware must boldly tackle the performance question.

Completely addressing the performance gap would be easy by my estimate. With 20-30 GHz clock speeds and several generations worth of architectural improvements, CCC code could reach the performance achieved by GCC-compiled binaries on current generation hardware. These targets might have been dismissed in the past. But they’re achievable now because the AI revolution isn’t just any revolution. Rather, it is a cultural revolution that upends views regarding power consumption. Infinite power can make many previously unthinkable designs a reality. Some hardware designers may see red flags in this approach and persistently complain. But proper hardware engineers will rally around those flags and chant slogans. By correctly following the path of the revolution, they’ll be sure to succeed and may even exceed their quotas. May the odds be ever in their favor.

* The proof is trivial and similar to the proof for the gambler’s law (99% of gamblers quit just before they win big)

2026-03-22 13:46:34

Motherboard chipsets have taken on fewer and fewer performance critical functions over time. In the Athlon 64 era, integrated memory controllers took the chipset out of the DRAM access path. In the early 2010s, CPUs like Intel’s Sandy Bridge brought PCIe lanes onto the CPU, letting it interface with a GPU without chipset involvement. Chipsets today still host a lot of important IO capabilities, but they’ve become a footnote in the performance equation. Benchmarking a chipset won’t be useful, but a topic doesn’t have to be useful in order to be fun. And I do think it’ll be fun to test chipset latency.

I’m using Nemes’s Vulkan-based GPU benchmark suite here, but with some modifications that let me allocate memory with the VK_MEMORY_PROPERTY_HOST_COHERENT_BIT set, and the VK_MEMORY_PROPERTY_DEVICE_LOCAL_BIT unset. That gives me a buffer allocated out of host memory. Then, I can run the Vulkan latency test against host memory, giving me GPU memory access latency over PCIe. The GPU in play doesn’t matter here, because I’m concerned with latency differences between using CPU PCIe slots and the southbridge ones. I’m using Nvidia’s T1000 because it’s a single slot card and can fit into the bottom PCIe slot in my various systems. All of the systems below run Windows 10, except for the FX-8350 system, which I have running Windows Server 2016. I’m using the latest drivers available for those operating systems, which would be 553.50 on Windows 10 and 475.14 on Windows Server 2016.
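
Picking a memory type with those properties usually comes down to a bitmask filter over the memory types the device reports. The sketch below mirrors that logic in plain Python, without calling Vulkan. The flag values match the Vulkan headers, but the list of memory types and the helper name `pick_memory_type` are hypothetical, and this isn't necessarily how the modified benchmark does it.

```python
# VkMemoryPropertyFlagBits values from the Vulkan headers.
VK_MEMORY_PROPERTY_DEVICE_LOCAL_BIT = 0x1
VK_MEMORY_PROPERTY_HOST_VISIBLE_BIT = 0x2
VK_MEMORY_PROPERTY_HOST_COHERENT_BIT = 0x4
VK_MEMORY_PROPERTY_HOST_CACHED_BIT = 0x8


def pick_memory_type(property_flags, required, forbidden, type_bits=0xFFFFFFFF):
    """Return the first memory type index with all required and no forbidden flags."""
    for index, flags in enumerate(property_flags):
        allowed = type_bits & (1 << index)  # stand-in for memoryTypeBits of the buffer
        if allowed and (flags & required) == required and (flags & forbidden) == 0:
            return index
    return None


# Hypothetical memory types, loosely shaped like a discrete GPU's list.
EXAMPLE_TYPES = [
    VK_MEMORY_PROPERTY_DEVICE_LOCAL_BIT,
    VK_MEMORY_PROPERTY_DEVICE_LOCAL_BIT
    | VK_MEMORY_PROPERTY_HOST_VISIBLE_BIT
    | VK_MEMORY_PROPERTY_HOST_COHERENT_BIT,
    VK_MEMORY_PROPERTY_HOST_VISIBLE_BIT | VK_MEMORY_PROPERTY_HOST_COHERENT_BIT,
    VK_MEMORY_PROPERTY_HOST_VISIBLE_BIT
    | VK_MEMORY_PROPERTY_HOST_COHERENT_BIT
    | VK_MEMORY_PROPERTY_HOST_CACHED_BIT,
]

host_index = pick_memory_type(
    EXAMPLE_TYPES,
    required=VK_MEMORY_PROPERTY_HOST_COHERENT_BIT,
    forbidden=VK_MEMORY_PROPERTY_DEVICE_LOCAL_BIT,
)
print(f"host memory type index: {host_index}")
```

Treating `DEVICE_LOCAL` as forbidden matters because some devices expose a type that is both device-local and host-coherent, which would land the buffer in VRAM instead of host memory.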

I also tried testing in the other direction by having the CPU access GPU device memory. Nemes actually does that for her Vulkan uplink latency test. However, I hit unexplainable inflection points when testing across a range of test sizes. Windows performance metrics indicated a ton of page faults at larger test sizes, perhaps hinting at driver intervention. Investigating that is a rabbit hole I don’t want to go down, and the CPU to VRAM access behavior I observed with the T1000 didn’t apply in the same way across different GPUs. I’ll therefore be focusing on GPU to host memory access latency, though I’ll leave a footnote at the end for CPU-side results.

Setting the VK_MEMORY_PROPERTY_HOST_COHERENT_BIT means writes can be made visible between the CPU and GPU without explicit cache flush and invalidate commands. With the T1000, this has the strange side effect of causing a lot of probe traffic even when the GPU hits its own caches. GPU cache hit bandwidth can be constrained depending on platform configuration, possibly depending on probe throughput. AMD’s old Piledriver CPU has northbridge performance events that can track probe results. These events show a flood of probes when the test goes through small sizes that fit within the T1000’s caches. Probe activity dips when the test reaches larger sizes and bandwidth is constrained by the PCIe link.

There are a lot of mysteries here. Probes work at cacheline granularity, and AMD’s 15h BKDG makes that clear. But probe rate suggests the T1000 is initiating a probe for every 512 bytes of cache hits, not every 64. Another mystery is why cache hit latency appears unaffected.

Mysteries aside, I’ll be dropping a note when GPU cache hit bandwidth appears to be affected by the platform. But I won’t comment on GPU cache miss bandwidth, which will obviously be limited by PCIe bandwidth. The T1000 has a x16 PCIe Gen 3 interface to the host, and the newest platforms I test here can support PCIe Gen 4 or Gen 5. I don’t have a single slot card with PCIe Gen 4 or Gen 5 support.

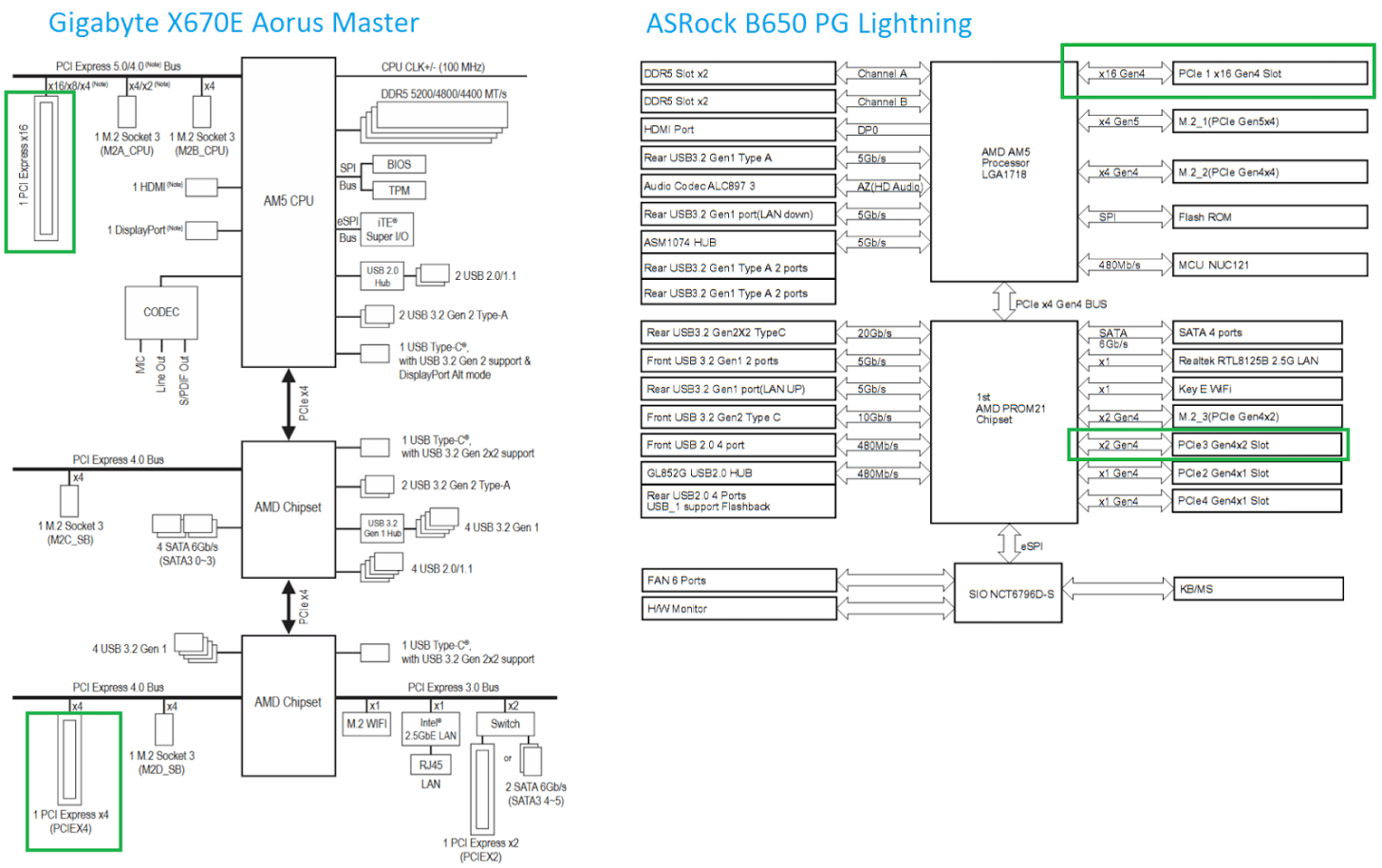

AMD’s latest desktop platform is built around the PROM21 chip. PROM21 has a PCIe 4.0 x4 uplink, and exposes a variety of downstream IO including more PCIe 4.0 links. AMD’s X670E chipset chains two PROM21 chips together, basically giving the platform two southbridge chips for better connectivity. Gigabyte’s X670E Aorus Master has three PCIe slots. One connects to the CPU, while the two others connect to the lower PROM21 chip. The PROM21 chip connected to the CPU isn’t hooked up to any PCIe slots. Instead, it’s only used to provide M.2 slots and some USB ports. The B650 chipset, tested here on the ASRock B650 PG Lightning, only uses one PROM21 chip and connects it to PCIe slots.

Using the CPU’s PCIe lanes gives about 650 ns of baseline latency. Going through one PROM21 chip on the B650 chipset brings latency to 1221 ns, or 569.7 ns over the baseline. On X670E, hopping over two PROM21 chips brings latency to 921.3 ns over the baseline. Going over the chipset therefore comes with a hefty latency penalty. Going through an extra PROM21 chip further increases latency.
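
The arithmetic behind those figures can be laid out explicitly. This is a small sketch using only the numbers quoted above. It assumes the B650 and X670E boards share the same CPU-lane baseline, which is a simplification, and the variable names are made up.

```python
# Figures quoted in the text, in nanoseconds.
b650_total_ns = 1221.0   # through one PROM21 chip
b650_added_ns = 569.7    # one PROM21 chip, over the baseline
x670e_added_ns = 921.3   # two PROM21 chips, over the baseline

# Baseline implied by the B650 numbers (the "about 650 ns" figure).
baseline_ns = b650_total_ns - b650_added_ns

# Extra cost of the second PROM21 hop, assuming both boards share that baseline.
second_hop_ns = x670e_added_ns - b650_added_ns

# Total latency through both PROM21 chips under the same assumption.
x670e_total_ns = baseline_ns + x670e_added_ns

print(f"baseline: {baseline_ns:.1f} ns")
print(f"second PROM21 hop adds: {second_hop_ns:.1f} ns")
print(f"two-chip total: {x670e_total_ns:.1f} ns")
```

By this estimate the second hop costs less than the first, though both add several hundred nanoseconds.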

I saw no difference in GPU cache hit bandwidth with the T1000 connected to Zen 5’s CPU lanes. Switching the GPU to the chipset lanes dropped cache hit bandwidth to just above 25 GB/s, and that figure remains similar regardless of whether one or two PROM21 chips sat between the CPU and GPU.

Arrow Lake chipsets follow the same topology as Intel chipsets going back to Sandy Bridge. A Platform Controller Hub (PCH) serves the southbridge role. The PCH connects to the CPU via a Direct Media Interface (DMI), which is a PCIe-like interface. Z890 uses DMI Gen 4, which can use transfer speeds of up to 16 GT/s with eight lanes. I’m testing on the MSI PRO Z890-A WIFI, which provides three PCIe slots. The first is a x16 slot connected to the CPU, while the two others are x4 slots connected to the PCH.
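
Link speeds like these translate into peak bandwidth through transfer rate, lane count, and line encoding. A rough sketch follows. It assumes DMI Gen 4 uses the same 128b/130b encoding as PCIe Gen 4, which is my assumption and not something stated here, and it ignores packet and protocol overhead, so real throughput is lower.

```python
# Per-lane raw transfer rates in GT/s and line-encoding efficiency by PCIe generation.
PCIE_GT_PER_S = {1: 2.5, 2: 5.0, 3: 8.0, 4: 16.0, 5: 32.0}
PCIE_ENCODING = {1: 8 / 10, 2: 8 / 10, 3: 128 / 130, 4: 128 / 130, 5: 128 / 130}


def link_gb_per_s(gen, lanes):
    """Peak one-direction bandwidth in GB/s, before packet and protocol overhead."""
    return PCIE_GT_PER_S[gen] * PCIE_ENCODING[gen] * lanes / 8


print(f"T1000, PCIe Gen 3 x16:      {link_gb_per_s(3, 16):.2f} GB/s")
print(f"PROM21 uplink, PCIe 4.0 x4: {link_gb_per_s(4, 4):.2f} GB/s")
print(f"DMI Gen 4 x8 (as PCIe 4.0): {link_gb_per_s(4, 8):.2f} GB/s")
```

Under these assumptions, an eight-lane DMI Gen 4 link has about the same peak bandwidth as the T1000's own x16 Gen 3 interface, while a PROM21 uplink has about half.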

Baseline latency on Arrow Lake is higher than on Zen 5, with the GPU seeing 785 ns of load-to-use latency from host memory. Going through the PCH adds approximately 550 ns of latency, which is in line with what one PROM21 chip adds on the AM5 platform.

I’m going to skip commenting about the T1000’s cache hit bandwidth on Arrow Lake. It does not behave as expected, even when attached to the CPU’s PCIe lanes. The T1000’s cache hit behavior also differs from that of other GPUs. For example, Intel’s Arc B580 maintains full cache hit bandwidth when attached to the Arrow Lake platform, though curiously it can’t cache host memory in its L2.

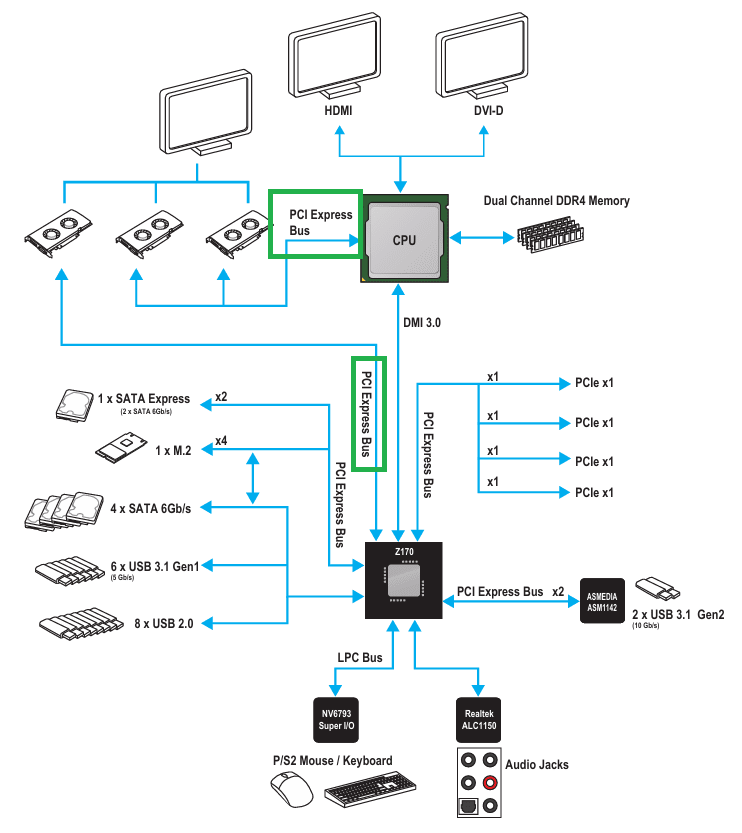

Skylake’s Z170 chipset uses a familiar southbridge-only topology like Z890, but with less connectivity and lower bandwidth. I’m testing on the MSI Z170 Gaming Pro Carbon, which connects the top PCIe x16 slot to the CPU. A middle slot also connects to the CPU to allow splitting the CPU’s 16 PCIe lanes into two sets of eight. The bottom slot connects to the PCH.

Skylake’s CPU PCIe lanes provide excellent baseline latency at 535.59 ns, while going through the Z170 PCH adds 338 ns of latency. That’s high compared to regular DRAM access latency, but compares well against current generation desktop platforms.

L1 bandwidth on the T1000 isn’t affected when the GPU is attached to CPU PCIe lanes, but L2 hit bandwidth is strangely lower (though still well above VRAM bandwidth). Switching the card to the Z170’s PCH lanes reduces cache hit bandwidth to just over 51 GB/s.

AMD’s Bulldozer and Piledriver chipsets use an older setup where the CPU has an integrated memory controller, but the chipset is still responsible for all PCIe connectivity. I’m testing on the ASUS M5A99X EVO R2.0, which implements the 990X chipset. The RD980 (990X) northbridge connects to the CPU via a 5.3 GT/s HyperTransport link, which I assume is running in 16-bit wide “ganged” mode. Two PCIe slots on the M5A99X connect to the RD980, and can run in either single x16 or 2x8 mode. A x4 A-Link Express III interface connects the northbridge to the SB950 southbridge, which provides more PCIe lanes and slower IO. A-Link Express is based on PCIe Gen 2, and runs at 5 GT/s.

Baseline latency is very acceptable at 769.67 ns when using the 990X northbridge’s PCIe lanes, which puts it between AMD’s Zen 5 and Intel’s Arrow Lake platforms. That’s a very good latency figure considering the AM3+ platform’s fastest PCIe lanes don’t come directly from the CPU like on modern platforms.

Going through the SB950 southbridge increases latency by 602 ns. It’s a higher latency increase compared to the PCH on Arrow Lake or one PROM21 chip on B650. However, southbridge latency is still better than going through two PROM21 chips on X670E.

On the 990X platform, the T1000’s cache hit bandwidth is capped at around 132 GB/s when connected using the northbridge PCIe lanes. With the southbridge PCIe lanes, cache hit bandwidth drops to just over 20 GB/s. Both figures are well in excess of the platform’s IO bandwidth. The 990X’s HyperTransport lanes can only deliver 10.5 GB/s in each direction, and cache hit bandwidth far exceeds that even when the T1000 is attached to the southbridge. If the T1000’s cache hit bandwidth is limited by probe throughput, then the 990X chipset has 10x more probe throughput than is necessary to saturate its IO bandwidth. Of course, that’s nowhere near enough to cover GPU cache hit bandwidth.

As mentioned before, having the CPU access GPU VRAM is another way to measure PCIe latency. Host-visible device memory allocated on the T1000 is uncacheable from the CPU side, likely to ensure coherency. If caches aren’t in play, then small test sizes provide the best estimate of baseline latency because they don’t run into address translation penalties or page faults. Here, I’m plotting CPU-to-VRAM results at the 4 KB test size next to the GPU-to-system-memory results above. There’s some difference between the two measurement methods, but not enough to paint a different picture.

Chipsets today don’t take on performance critical roles, and accordingly aren’t optimized with low latency in mind. Going over chipset PCIe lanes adds hundreds of nanoseconds of latency, and imposes bandwidth restrictions. Chipsets can affect cache hit bandwidth too, possibly due to probe throughput constraints.

Older chipsets like AMD’s 990X show it’s possible to deliver reasonable PCIe latency even with an external northbridge. But I don’t see latency optimizations coming into play on future chipsets. With the decline of multi-GPU setups, chipsets today largely serve high latency IO like SSDs and network adapters. Those devices have latency in the microsecond range, if not milliseconds. A few hundred nanoseconds won’t make a difference. The same applies for probe performance. Accessing host-coherent memory is a corner case for GPUs, which expect to mostly work out of fast on-device memory. Needless to say, cache hits for a VRAM-backed buffer won’t require sending probes to the CPU.

I expect future chipset designs to optimize for reduced cost and better connectivity options, while keeping pace with higher bandwidth demands from newer SSDs. I don’t expect latency or probe path optimizations, though it will be interesting to keep an eye out to see if behavior changes.

2026-03-15 04:45:58

GB10’s integrated GPU is its headline feature, to the extent that GB10 can be seen as a vehicle for delivering Nvidia’s Blackwell architecture in an integrated implementation. The iGPU features 48 Streaming Multiprocessors running at up to 2.55 GHz, meaning it’s basically an integrated RTX 5070. The RTX 5070 still has an advantage thanks to a higher power budget, more cache, and more memory bandwidth. Still, GB10 undeniably has a powerful iGPU, much like AMD’s Strix Halo.

Unlike AMD’s Strix Halo, Nvidia’s GB10 focuses on AI applications. Nvidia’s proprietary CUDA ecosystem is key, because CUDA is nearly the only name in the GPU compute game. GPU compute applications are optimized first and foremost for CUDA and Nvidia GPUs. Everything else is an afterthought, if a thought at all.

Combining significant GPU compute power with iGPU advantages and CUDA support gives GB10 a lot of potential. Of course, hardware has to deliver high performance for those ecosystem advantages to show. Achieving high performance involves building a balanced memory hierarchy, as well as tailoring hardware architecture to do well in targeted applications.

GB10’s GPU-side memory subsystem brings few surprises. It’s basically a Blackwell GPU integrated, with a familiar two-level caching setup. The L2 serves as both a high capacity last level cache and the first stop for L1 misses. AMD in contrast uses more cache levels and gradually increasing cache capacity at each level, which mirrors their discrete GPUs too. In a cache and memory latency test, GB10 and Strix Halo trade blows depending on test size. Larger caches are hard to optimize for high performance, so AMD wins when it’s able to hit in its smaller 256 KB L1 or 2 MB L2 compared to Nvidia’s higher latency 24 MB L2. Nvidia wins when GB10 can keep accesses within L2 while AMD has to service them out of Strix Halo’s 32 MB memory side cache. Both Strix Halo and GB10 use LPDDR5X memory. Strix Halo’s iGPU enjoys better latency to LPDDR5X than Nvidia’s GB10, reversing the situation seen on the CPU side.

Nvidia’s L1 cache offers an impressive combination of low latency and high capacity. With dependent array accesses, it can return data nearly as fast as AMD’s 16 KB scalar cache while offering more capacity than AMD’s first level scalar and vector caches combined. However, dependent array accesses don’t tell the whole story. Pointer dereferences indicate that AMD takes a lot of overhead from array addressing calculations. Cutting that out shows that RDNA3.5’s scalar cache offers very low latency. Nvidia already had efficient array address generation, so switching to pointer dereferences makes less of a difference on GB10. Regardless of access method, GB10’s L1 offers higher capacity and lower latency than RDNA3.5’s L0 vector cache.

GB10’s system level cache is invisible in the latency plot above because it’s smaller than the GPU’s 24 MB L2. It also doesn’t seem to maintain a mostly exclusive relationship with the GPU L2, so its capacity isn’t additive on top of the L2. Nvidia’s slides say the SLC aims to enable "power efficient data-sharing between engines", implying that feeding compute isn't its primary purpose.

Like many modern GPUs, GB10 supports OpenCL’s Shared Virtual Memory (SVM) feature. SVM allows seamless pointer sharing by mapping a buffer into the same virtual address space on both the CPU and GPU. GB10 supports “coarse grained” SVM, meaning the buffer cannot be simultaneously mapped on both the CPU and GPU. Some GPUs with only coarse grained SVM appear to copy the entire buffer under the hood, as can be seen with Qualcomm and Arm iGPUs. Thankfully, GB10 can handle this feature without requiring a full buffer copy under the hood.

Compared to Strix Halo, GB10’s memory subsystem strategy moves high capacity caching up a level. Strix Halo’s “Infinity Cache” setup was awkward because it placed a GPU-focused cache at the other end of a system level interconnect. That places high pressure on Infinity Fabric, which AMD built to handle nearly 1 TB/s of bandwidth. GB10 in contrast uses the 24 MB graphics L2 to filter out a large portion of GPU memory traffic, so the system level interconnect primarily deals with DRAM accesses. That said, using 16 MB of cache just to optimize data sharing feels awkward too. SRAM is one of the biggest area consumers in modern chips because of how far compute has outpaced DRAM performance. A hypothetical setup that concentrates last level caching capacity in the SLC could be more area efficient, and could boost CPU performance.

48 SMs mean 48 L1 cache instances, giving GB10’s iGPU impressive cache hit bandwidth. With Nemes’s Vulkan based benchmark, GB10 easily outpaces Strix Halo. Strix Halo’s iGPU can achieve around 10 TB/s using a narrowly targeted OpenCL kernel that can’t work with arbitrary test sizes, but GB10 is still ahead even with that methodology. Nvidia’s advantage continues at L2, which offers both higher bandwidth and higher capacity than AMD’s 2 MB L2. Needless to say, Nvidia’s L2 continues to compare well as test sizes increase and Strix Halo has to use its 32 MB Infinity Cache. Finally, both iGPUs achieve similar bandwidth from system memory, with GB10 taking a slight lead with faster LPDDR5X.

Both GPUs use write-through L1 caches, so writes from compute kernels go straight to L2. GB10’s L2 handles writes at half rate, and provides just under 1 TB/s of write bandwidth. Write bandwidth on Strix Halo is somewhat lower at approximately 700 GB/s. Write behavior on both iGPUs is similar to that of larger discrete GPUs.

Nvidia’s GPUs use a single block of SM-private memory for both L1 cache and Shared Memory. Shared Memory is a software managed scratchpad private to a group of threads. It fills an analogous role to AMD’s Local Data Share (LDS), letting code explicitly keep data close to compute when data movement is predictable. A larger L1/Shared Memory block can let each group of threads use more scratchpad space with lower impact to occupancy or L1 cache capacity. Nvidia’s datacenter GPUs get 256 KB of L1/Shared Memory per SM. GB10 does not.

Instead, GB10 behaves much like Nvidia’s consumer Blackwell architecture and has 128 KB of L1/Shared Memory. In exchange for lower L1/Shared Memory capacity, GB10 achieves 13 ns of latency in Shared Memory using dependent array accesses. That’s slightly better than 14.84 ns for B200.

Instruction fetch behaves as expected for any Blackwell GPU. Each SM sub-partition has a 32 KB L0 instruction cache, which can fully feed a partition’s maximum throughput of one instruction per cycle. Blackwell uses fixed length 16-byte instructions, so each L0 can hold 2048 instructions. A 128 KB L1 instruction cache feeds all four partitions, and can also deliver one instruction per cycle. Bandwidth from the L1I is shared by all four partitions, and per-partition throughput drops to 0.5 IPC when two partitions contend for L1I access. GPU shader programs are typically small and simple compared to CPU programs, so I suspect most code fetches will hit in the 32 KB L0 instruction caches.
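
With fixed length 16-byte instructions, cache capacities convert directly into instruction counts, and shared L1I bandwidth divides among contending partitions. A small sketch of that arithmetic follows. The even split for more than two partitions is an extrapolation I'm assuming, since the text only gives the two-partition case, and the function names are made up.

```python
INSTRUCTION_BYTES = 16  # fixed instruction length stated in the text


def instructions_held(cache_bytes, instruction_bytes=INSTRUCTION_BYTES):
    """Number of fixed-length instructions that fit in a cache of the given size."""
    return cache_bytes // instruction_bytes


def per_partition_ipc(l1i_instr_per_cycle, contending_partitions):
    """Per-partition fetch rate if L1I bandwidth is split evenly (an assumption)."""
    return l1i_instr_per_cycle / contending_partitions


print(f"32 KB L0:   {instructions_held(32 * 1024)} instructions")
print(f"128 KB L1I: {instructions_held(128 * 1024)} instructions")
print(f"two partitions from L1I: {per_partition_ipc(1.0, 2)} IPC each")
```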

RDNA3.5 Workgroup Processors use a simpler instruction caching strategy, with one 32 KB instruction cache servicing four SIMDs. Bringing a second wave into play does not cause bandwidth contention at the L1I, though two waves executing different code sections do contend for L1I capacity. Running code from L2 leads to poor instruction throughput on both architectures.

If Strix Halo had high compute throughput for an integrated GPU, GB10 takes things a step further. Strix Halo’s iGPU has 20 Workgroup Processors (WGPs) to GB10’s 48 SMs. These basic building blocks are the closest analogy to cores on a CPU. RDNA3.5 WGPs do have doubled execution units for basic operations and higher clocks. But even in the best case, Strix Halo’s iGPU lands a bit behind GB10’s.
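
A back-of-the-envelope version of that comparison multiplies unit count, FP32 lanes per unit, two operations per fused multiply-add, and clock speed. Only the unit counts and GB10's 2.55 GHz come from the text. The lane counts (128 per SM, and 256 per WGP when dual issue applies) and the 2.9 GHz Strix Halo clock are assumptions on my part, so treat the output as a rough sketch.

```python
def peak_fp32_tflops(units, lanes_per_unit, clock_ghz, ops_per_lane=2):
    """Theoretical FP32 TFLOPS, counting a fused multiply-add as two operations."""
    return units * lanes_per_unit * ops_per_lane * clock_ghz / 1000.0


# 48 SMs at 2.55 GHz from the text; 128 FP32 lanes per SM is an assumption.
gb10_tflops = peak_fp32_tflops(units=48, lanes_per_unit=128, clock_ghz=2.55)

# 20 WGPs from the text; 256 lanes per WGP (best case with doubled execution
# units) and a 2.9 GHz clock are assumptions.
strix_halo_tflops = peak_fp32_tflops(units=20, lanes_per_unit=256, clock_ghz=2.9)

print(f"GB10:       {gb10_tflops:.1f} TFLOPS")
print(f"Strix Halo: {strix_halo_tflops:.1f} TFLOPS (best case)")
```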

Both GPUs have low FP64 throughput, another characteristic that sets them apart from datacenter ones. GB10 has a 1:64 FP64 to FP32 ratio, while Strix Halo has a 1:32 ratio.

Nvidia’s GB10 presentation at Hot Chips 2025 started by talking about scaling their Blackwell architecture from server racks, to single GB300 GPUs, to the GB10 chip in DGX Spark. GB300, like B200, uses Nvidia’s B100 datacenter Blackwell architecture. The datacenter distinction is important. Ever since Pascal, Nvidia’s datacenter and consumer GPU architectures look quite different even when they use the same name. Cache setup and FP64 performance are often differentiating factors.

GB10’s GPU uses Blackwell’s consumer variant. It supports compute capability 12.1, putting it under the same column that describes Nvidia’s RTX 5xxx consumer GPUs in Nvidia's chart. B200 in contrast has compute capability 10.0. Compared to GB10’s SMs, datacenter variants can keep more work in flight, have more L1/Shared Memory, and more FP64 units. 5th generation Tensor Core features are also limited to the datacenter variant.

These differences mean that GB10 doesn’t act like a smaller version of Nvidia’s Blackwell datacenter GPUs. Presenting GB10 as having the same architecture creates confusion, as can be seen in forum posts and GitHub issues, where kernels written for Nvidia datacenter GPUs can't run on GB10. Even if code doesn’t use any features specific to datacenter Blackwell, GB10 presents a different optimization target. Saying GB10 uses the same architecture as GB300 would be like saying Strix Halo’s RDNA3.5 iGPU uses the same architecture as MI300X. It’s great for investors, but not useful for a more technical audience where accuracy is important. I feel it would have been better to present GB10’s GPU as a member of Nvidia’s consumer RTX lineup. Consumer GPU architectures are perfectly capable of handling a wide variety of compute applications. Nvidia could still position GB10 as a compute-focused GPU.

Nvidia’s consumer Blackwell variant has advantages too. It has hardware raytracing acceleration, which can be useful in workloads like Blender with OptiX. Consumer SMs clock higher than their datacenter counterparts, which can reduce per-wave latency on code that doesn’t benefit from any of datacenter Blackwell’s advantages. Finally, consumer Blackwell SMs take less area, which translates to more vector compute throughput with a given die area budget.

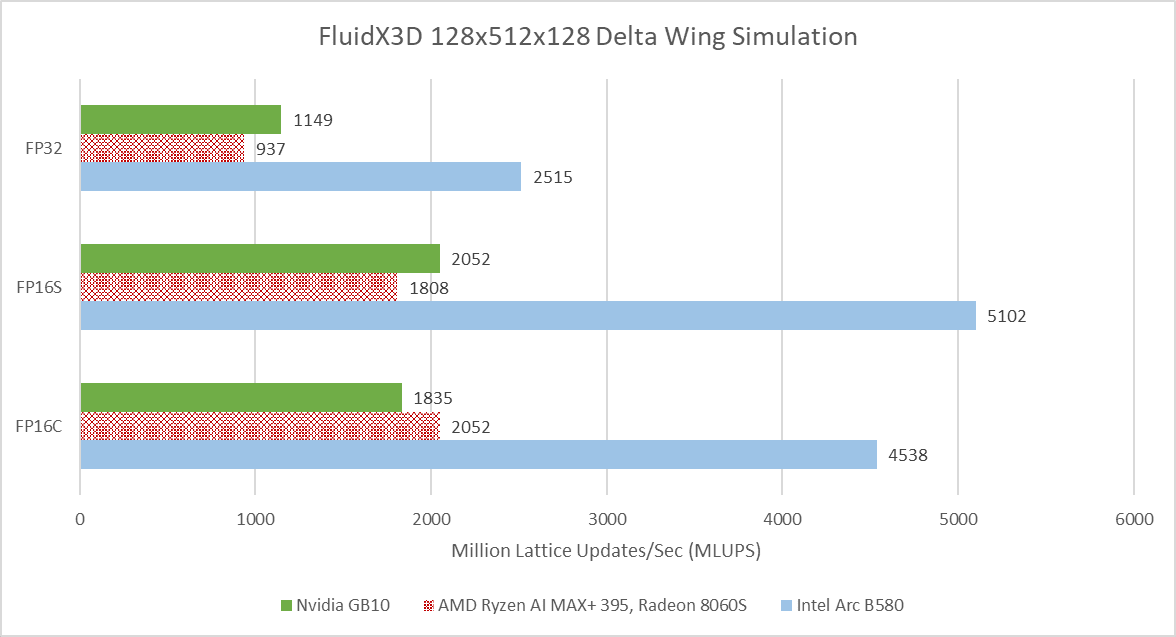

FluidX3D simulates fluid behavior using the lattice Boltzmann method. It carries out computations using FP32, but can store data in 16-bit floating point formats to reduce pressure on memory bandwidth and capacity. FP16S mode stores values in the IEEE-754 standard 16-bit floating point format, and benefits from hardware instructions when converting between FP32 and FP16. FP16C uses a custom 16-bit floating point format to minimize accuracy loss. Conversions between the custom FP16 format and FP32 have to be done in software, increasing the compute to bandwidth ratio.
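
To make the storage tradeoff concrete, here is a minimal Python sketch of what FP16S-style storage does to a value: it gets rounded to IEEE-754 half precision when stored and widened again when loaded. This is not FluidX3D's code. The helper name `to_fp16_and_back` is made up for illustration, and Python floats are FP64 rather than FP32, so the sketch only shows the storage rounding, not the FP32 arithmetic.

```python
import struct

def to_fp16_and_back(x: float) -> float:
    """Round a value to IEEE-754 half precision, then widen it again.

    Hypothetical helper: 'e' is the struct format code for a 16-bit float.
    """
    return struct.unpack('<e', struct.pack('<e', x))[0]

# Half precision halves the storage footprint compared to FP32.
bytes_fp16 = struct.calcsize('<e')  # 2 bytes per value
bytes_fp32 = struct.calcsize('<f')  # 4 bytes per value

# Arbitrary sample values, chosen only to show the rounding error.
for value in (1.0, 0.1, 1.0 / 3.0, 1234.5678):
    stored = to_fp16_and_back(value)
    rel_err = abs(stored - value) / abs(value)
    print(f"{value:>12.6f} -> {stored:>12.6f}  relative error {rel_err:.2e}")
```

Each stored value takes half the bytes, at the cost of a relative rounding error of up to 2^-11 for values in half precision's normal range.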

GB10 leads AMD’s Strix Halo when running FluidX3D’s delta wing simulation example with FP32, and continues to have an edge in FP16S. However, it falls behind in FP16C mode. Intel’s Arc B580 nominally sits in the same performance segment as Strix Halo, but manages a huge performance lead over both iGPUs. VRAM bandwidth is likely a factor. FluidX3D has poor memory access locality and therefore tends to be bound by VRAM bandwidth. Besides reporting simulation speed, FluidX3D outputs memory bandwidth figures that account for accesses made from its algorithm’s perspective.

These accesses can include cache hits, which are transparent to user code. However, profiling FluidX3D on Intel’s Arc B580 shows that FluidX3D’s reported bandwidth aligns well with VRAM bandwidth usage from performance monitoring metrics.

GB10 and Strix Halo use 8533 and 8000 MT/s LPDDR5X over a 256-bit bus, giving 273 and 256 GB/s of theoretical bandwidth, respectively. That’s very high bandwidth for a consumer platform, but can’t compare to the 456 GB/s that the Arc B580 gets from GDDR6 over a 192-bit bus. Lack of memory bandwidth means both iGPUs lean harder on caching than their discrete counterparts, and could suffer in workloads with poor access locality.
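
The theoretical figures follow from transfer rate times bus width. Here is a short Python sketch of that arithmetic. The function name is made up for illustration, and the 19000 MT/s rate for the B580 is an assumption worked backwards from 456 GB/s over a 192-bit bus, not a figure quoted above.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9

gb10 = peak_bandwidth_gbs(8533, 256)
strix_halo = peak_bandwidth_gbs(8000, 256)
# Assumption: 19000 MT/s GDDR6, back-derived from the 456 GB/s figure.
b580 = peak_bandwidth_gbs(19000, 192)

print(f"GB10:       {gb10:.1f} GB/s")
print(f"Strix Halo: {strix_halo:.1f} GB/s")
print(f"Arc B580:   {b580:.1f} GB/s")
```

Under these inputs, the B580's narrower bus still comes out well ahead because each pin runs more than twice as fast.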

VkFFT implements Fast Fourier Transforms in multiple APIs. Here, I’m running its Vulkan benchmarks. GB10 leads Strix Halo, and turns in the most consistent performance across different test configurations. Strix Halo’s performance is more varied, and never beats GB10’s. Intel’s B580 is all over the place. It ends up with a higher average score than GB10, but takes huge losses in certain test configurations.

VkFFT outputs bandwidth figures alongside test results, and again shows what a discrete GPU can do with a fast, dedicated memory pool.

VkFFT can also target double precision (FP64) and half precision (FP16). GB10 is able to take an overall lead with double precision, while Strix Halo still can’t pull ahead in any test. Intel’s B580 can use its bandwidth advantage to win in some configurations, but not enough to get an overall score lead. None of the three GPUs here have strong FP64 performance, but VkFFT is often memory bound. The primary challenge is feeding the execution units, not having more of them.

Half precision runs tell a similar story, but with narrower gaps between the three GPUs. GB10, Strix Halo, and B580 average 76793.25, 62498.79, and 70980.17 respectively across all of the half precision test configurations.

In this self-penned benchmark, I perform a brute-force gravitational potential calculation over column density values. It’s a GPU accelerated version of something I did during a high school internship. Datacenter GPUs tend to do very well because I use FP64 values. GB10 and Strix Halo fall very far behind.
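
For readers unfamiliar with this kind of workload, a brute-force potential calculation has the general shape sketched below in plain Python. This is not the benchmark's kernel, which runs on the GPU. The grid layout, the unit gravitational constant, treating each density value as a point mass, and skipping a cell's own contribution are all assumptions made for illustration.

```python
import math

def brute_force_potential(density, cell_size=1.0, g=1.0):
    """Potential at every cell from every other cell, O(N^2) in cell count.

    Hypothetical sketch: each density value is treated as a point mass
    at the cell center, and a cell's own contribution is skipped.
    """
    rows, cols = len(density), len(density[0])
    potential = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            total = 0.0
            for sy in range(rows):
                for sx in range(cols):
                    if sy == y and sx == x:
                        continue  # avoid dividing by zero distance
                    dist = cell_size * math.hypot(sx - x, sy - y)
                    total -= g * density[sy][sx] / dist
            potential[y][x] = total
    return potential

# Tiny example grid: two cells, one unit apart.
print(brute_force_potential([[1.0, 2.0]]))
```

Every output cell needs a pass over the whole input, so arithmetic grows with the square of the cell count. Python floats are FP64, which matches the precision the benchmark stresses.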

FAHBench is a benchmark for Folding at Home, a distributed computing project that runs protein folding simulations. The benchmark comes with three workloads, which can be run with either single or double precision. Two workloads use a DHFR (dihydrofolate reductase) system in explicit or implicit mode. Explicit simulates all 23558 atoms, while implicit only uses 2489 atoms to approximate a solution. NAV, or voltage gated sodium channel, is the third workload and uses 173112 atoms.

GB10 easily tops FAHBench’s workloads in single precision mode. FAHBench makes good use of local memory to keep data on-chip, reducing pressure on the global memory hierarchy. Global memory accesses that do happen have reasonable locality, even on the heavier NAV workload. Data Fabric performance monitoring on Strix Halo shows about 200 GB/s of GPU memory requests, and ~50 GB/s of traffic at the Unified Memory Controllers. That implies Strix Halo’s 32 MB system level cache caught about 150 GB/s of memory traffic. GB10’s 24 MB L2 is likely almost as effective, which would mean Nvidia also avoids DRAM bandwidth bottlenecks.
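
The cache estimate is simple subtraction, sketched here in Python with the approximate Strix Halo figures quoted above. The function name is made up for illustration. The sketch assumes all traffic that doesn't reach the memory controllers was served by the system level cache, and ignores writebacks and other effects.

```python
def cache_absorbed(requested_gbs: float, dram_gbs: float):
    """Return (bandwidth absorbed by cache, fraction of requests absorbed)."""
    absorbed = requested_gbs - dram_gbs
    return absorbed, absorbed / requested_gbs

# Approximate figures from Data Fabric performance monitoring.
absorbed, fraction = cache_absorbed(requested_gbs=200.0, dram_gbs=50.0)
print(f"Cache absorbed ~{absorbed:.0f} GB/s ({fraction:.0%} of GPU requests)")
```

Under that assumption, roughly three quarters of GPU memory requests never reach DRAM.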

As a result, FAHBench favors compute throughput and plays well into GB10’s advantages. In some cases though, Strix Halo can keep up surprisingly well. In the DHFR single precision test, Strix Halo starts with a score just above 200 ns/day. If it maintained that, it would have nearly tied GB10. However, performance quickly drops as the chip pushes past 90 C. By targeting thin and light devices, Strix Halo has to contend with tighter thermal and power constraints than GB10 does in mini-PCs. Dell’s cooling in their mini-PC is excellent, and manages to keep GB10 in the low 60 C range during FAHBench runs.

Double precision workloads on FAHBench are very compute bound on all three GPUs. Intel’s B580 has the least horrible FP64 to FP32 ratio at 1:16. Strix Halo comes next at 1:32, followed by GB10 at 1:64.

Large iGPUs are fascinating, and it’s great to see Nvidia entering the scene. GB10’s GPU has plenty of strengths compared to the one in AMD’s Strix Halo. It uses Nvidia’s latest Blackwell architecture, while Strix Halo makes do with RDNA3.5 instead of AMD’s latest RDNA4. Nvidia also uses TSMC’s 3nm process, while Strix Halo uses 4nm. GB10’s GPU is sized to be a bit larger than Strix Halo’s. Compute benchmarks show GB10’s strength. It beats Strix Halo more often than not. All else being equal, GB10’s iGPU could be a dangerous competitor to Strix Halo and a welcome arrival for PC enthusiasts.

Unfortunately, gaming on GB10 is off to a rough start. iGPU setups are locked into whichever CPU they come with. GB10 uses Arm CPU cores, and quite excellent ones from an ISA-agnostic point of view. However, ISA does matter when software can’t be easily ported. Nearly all PC games target x86-64, are closed source, and lack 64-bit Arm ports. Emulation and binary translation may let some games run, but at a performance cost. For example, Retrotom on Reddit ran Cyberpunk 2077 on GB10 and achieved about 50 FPS at 1080p with medium settings. Similar settings on Strix Halo in Cyberpunk 2077's built-in benchmark give just under 90 FPS. Strix Halo already suffered a CPU performance penalty because of contention at the memory bus and high memory latency compared to desktop discrete GPU setups. Taking another heavy hit to CPU performance is a tough pill to swallow.

Nvidia understandably positions GB10 as a compute solution, aimed at developers who want to test their code without going to a datacenter GPU. Developers can recompile their application to target 64-bit Arm, minimizing compatibility issues. GB10’s strong compute performance, high memory capacity, and iGPU advantages play well into this purpose. In the compute role however, GB10 has a VRAM bandwidth disadvantage compared to discrete peers.

The factors above mean GB10 is an exciting product, but possibly one with a limited audience. Like Strix Halo, GB10’s unified memory and more compact form factor have to face off against iGPU compromises and an extremely high price compared to discrete GPU setups with similar compute throughput. I hope to see both Nvidia and AMD iterate on their large iGPU designs to bring price down and minimize their compromises. There’s a lot of potential for large iGPU designs, and I’m excited to see that segment develop.

2026-03-03 14:51:25

Desktop and laptop use cases demand high single threaded performance across a large variety of workloads. Creating CPU cores to meet those demands is no easy task. AMD and Intel traditionally dominated this high performance segment using high clocked, high throughput cores with large out-of-order engines to absorb latency. Arm traditionally optimized for low power and low area, and not necessarily maximum performance. Over the years though, Arm steadily built more complex cores and looked for opportunities to expand into higher performance segments. Matching the best from Intel and AMD must have been a distant dream in 2012, when Arm launched their first 64-bit core, the Cortex A57. Today, that dream is a reality.

Cortex X925 in Nvidia’s GB10 achieves performance parity with AMD’s Zen 5 and Intel’s Lion Cove in their fastest desktop implementations. That gives Arm a core fast enough to not just play in laptop segments, but potentially in the most performance sensitive desktop applications too. Nvidia’s GB10 uses ten X925 cores, split across two clusters. One of those X925 cores reaches 4 GHz, while the others are not far behind at 3.9 GHz. Dell uses the GB10 chip in their Pro Max series, and we’re grateful to Dell for letting us test that product.

Arm’s Cortex X925 is a massive 10-wide core with a lot of everything. It has more reordering capacity than AMD’s Zen 5, and L2 capacity comparable to that of Intel’s recent P-Cores. Unlike Arm’s 7-series cores, X925 makes few concessions to reduce power and area. It’s a core designed through and through to maximize performance.

In Arm tradition, X925 has a number of configuration options. However, X925 omits the shoestring budget options present for A725. X925’s caches are all either parity or ECC protected, dropping A725’s option to do without error detection or correction. L1 caches on X925 are fixed at 64 KB, removing the 32 KB options on A725. X925’s most significant configuration options happen at L2, where implementers can pick between 2 MB or 3 MB of capacity. They can also choose either a 128-bit or 256-bit ECC granule to make area and reliability tradeoffs.

X925 interfaces with the rest of the system via Arm’s DSU-120, which acts as a cluster-level interconnect and hosts an L3 cache with up to 32 MB of capacity. X925 and its DSU support 40-bit physical addresses, which is adequate for consumer systems. However, it’s clearly not designed for server applications, where larger 48-bit or even 52-bit physical address spaces are common.
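
To put those address widths in perspective, this short Python sketch prints how much memory each one can address. The helper name is made up for illustration, and sizes are binary terabytes (TiB).

```python
TIB = 1 << 40  # bytes in one tebibyte

def addressable_bytes(address_bits: int) -> int:
    """Bytes reachable with a physical address of the given width."""
    return 1 << address_bits

for bits in (40, 48, 52):
    print(f"{bits}-bit physical addresses: {addressable_bytes(bits) // TIB} TiB")
```

A 40-bit physical address space tops out at 1 TiB, while 48-bit and 52-bit spaces reach 256 TiB and 4096 TiB.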

Performance and power efficiency start with good branch prediction. Arm knows this, and X925 doesn’t disappoint. Its branch predictor can recognize extremely long repeating patterns. In a test with branches that are taken or not-taken in random patterns of increasing lengths, X925 behaves a lot like AMD’s Zen 5. AMD’s cores have featured very strong branch predictors since Zen 2, so X925’s results are impressive.
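
The idea behind this kind of test can be illustrated with a toy model in Python. It is not the actual microbenchmark, and the predictor is a deliberately simple table indexed by recent branch outcomes, nothing like the predictors in X925 or Zen 5. The function name, history lengths, and warmup scheme are all assumptions.

```python
import random

def mispredict_rate(pattern_len, history_bits, repeats=50, seed=0):
    """Replay a random taken/not-taken pattern through a toy predictor.

    Hypothetical model: the predictor remembers the last outcome seen
    for each history value. Only the second half of the run is counted,
    so the table has time to warm up.
    """
    rng = random.Random(seed)
    pattern = [rng.random() < 0.5 for _ in range(pattern_len)]
    table = {}
    history = 0
    mask = (1 << history_bits) - 1
    mispredicts = 0
    counted = 0
    for rep in range(repeats):
        for taken in pattern:
            predicted = table.get(history, False)
            if rep >= repeats // 2:
                counted += 1
                mispredicts += predicted != taken
            table[history] = taken
            history = ((history << 1) | taken) & mask
    return mispredicts / counted

for length in (8, 16, 64):
    print(length, mispredict_rate(length, history_bits=16),
          mispredict_rate(length, history_bits=2))
```

In this model, a pattern becomes fully predictable once the tracked history is at least as long as the pattern. Longer patterns therefore probe how much history a predictor can effectively use.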

Cortex X925’s branch target caching compares well too. Arm has a large first level BTB capable of handling two taken branches per cycle. Capacity for this first level BTB varies with branch spacing, but it seems capable of tracking up to 2048 branches. This large capacity brings X925’s branch target caching strategy closer to Zen 5’s, rather than prior Arm cores that used small micro-BTBs with 32 to 64 entries. For larger branch footprints, X925 has slower BTB levels that can track up to 16384 branches and deliver targets with 2-3 cycle latency. There may be a mid-level BTB with 4096 to 8192 entries, though it’s hard to tell.

Compared to AMD’s Zen 5, X925 has roughly comparable capacity in its fastest BTB level depending on branch spacing. Zen 5 has more maximum branch target caching capacity, especially when it can use a single BTB entry to track two branches. Still, X925 has more branch target storage than Arm cores from a few years ago. Cortex X2 for example topped out at about 10K branch targets.

A 29 entry return stack helps predict returns from function calls, or branch-with-link in Arm instruction terms. Like Intel’s Sunny Cove and later cores, the return stack doesn’t work if return sites aren’t spaced far enough apart. I spaced the test “function” by 128 bytes to get clear results.

In SPEC CPU2017, Cortex X925 achieves branch prediction accuracy roughly on par with AMD’s Zen 5 across most tests, and may even be slightly ahead. 505.mcf and 541.leela consistently challenge branch predictors, and X925 pulls ahead in both. Intel’s Lion Cove is a bit behind both Zen 5 and X925.

SPEC’s floating point workloads are gentler on the branch predictor, but X925 still shows its strength. It’s again on par or slightly better than Zen 5.

Cortex X925 ditches the MOP cache from prior Arm generations, much like its mid-core companion, A725. While Cortex X925 doesn’t have the same tight power and area restrictions as A725, many of the same justifications apply. Arm already tackles decode costs through a variety of measures like predecode and running at lower clock speeds. A MOP cache would be excessive.

On the predecode side, X925’s TRM suggests the L1I stores data at 76-bit granularity. Arm instructions are 32 bits, so 76 bits would store two instructions and 12 bits of overhead. Unlike A725, Arm doesn’t indicate that any subset of bits corresponds to an aarch64 opcode. They may have neglected to document it, or X925’s L1I may store instructions in an intermediate format that doesn’t preserve the original opcodes.

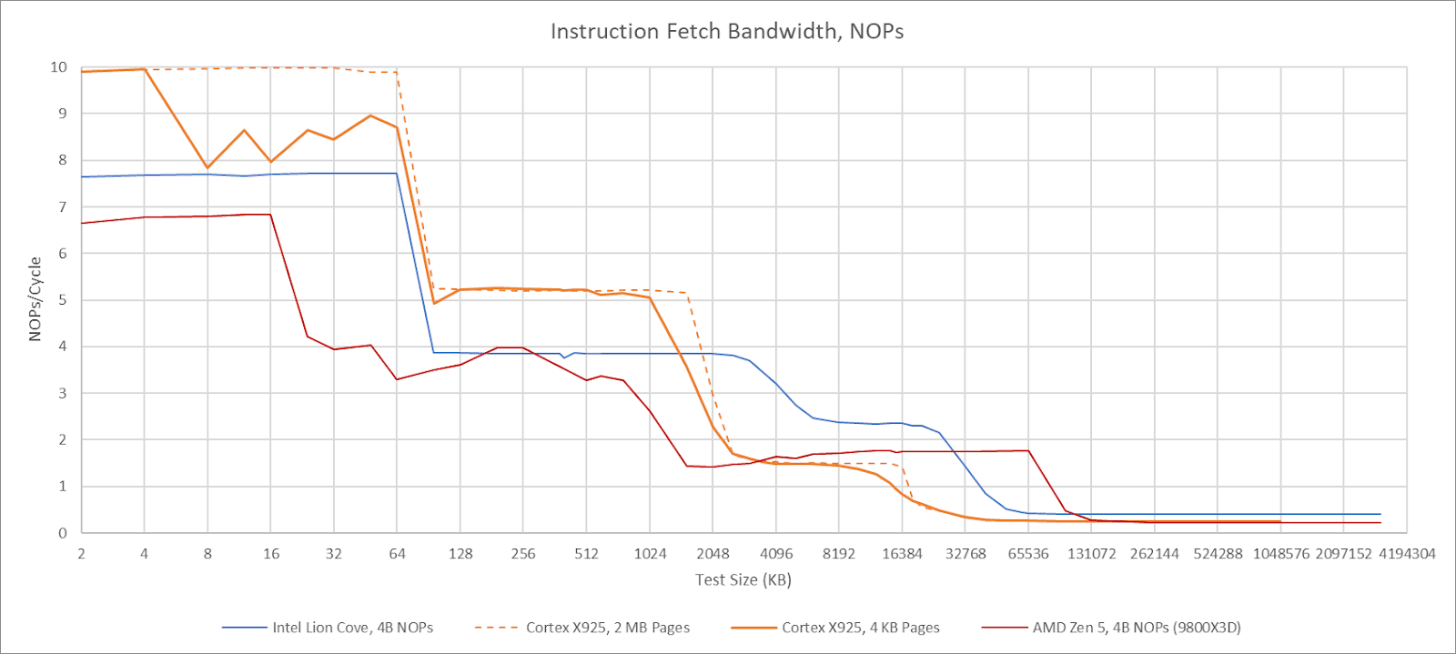

X925’s frontend can sustain 10 instructions per cycle, but strangely has lower throughput when using 4 KB pages. Using 2 MB pages lets it achieve 10 instructions per cycle as long as the test fits within the 64 KB instruction cache. Cortex X925 can fuse NOP pairs into a single MOP, but that fusion doesn’t bring throughput above 10 instructions per cycle. Details aside, X925 has high per-cycle frontend throughput compared to its x86-64 peers, but slightly lower actual throughput when considering Zen 5 and Lion Cove’s much higher clock speeds. With larger code footprints, Cortex X925 continues to perform well until test sizes exceed L2 capacity. Compared to X925, AMD’s Zen 5 relies on its op cache to deliver high throughput for a single thread.

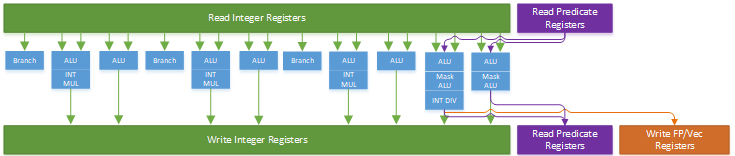

MOPs from the frontend go through register renaming and have various bookkeeping resources allocated for them, letting the backend carry out out-of-order execution while ensuring results are consistent with in-order execution. While allocating resources, the core can carry out various optimizations to expose additional parallelism. X925 can do move elimination like prior Arm cores, and has special handling for moving an immediate value of zero into a register. Like on A725, the move elimination mechanism tends to fail if there are enough register-to-register MOVs close by. Neither optimization can be carried out at full renamer width, though that’s typical as cores get very wide.