2026-05-16 00:44:27

Every day I read some sort of wrongheaded extrapolation about the future of AI — that today’s models are somehow a sign that AGI will create a “permanent underclass” of people who can no longer build software companies, or really do any kind of job on a computer:

Hyperbolic? Perhaps. But even those who view the idea of a permanent underclass as overblown tell me that the meme contains a kernel of truth. Yash Kadadi, a 23-year-old start-up founder and Stanford dropout, summarized the sentiment of his peers: “There’s only a matter of time before GPT-7 comes out and eats all software and you can no longer build a software company. Or the best version of Tesla Optimus comes out,” and can perform all physical labor as well. In that world, this year is a human’s “last chance to be a part of the innovation.”

Yash, your peers are fucking idiots. You may as well be talking about breeding Grinches or Ninja Turtles, or kvetching about the upcoming threat from Godzilla. “The best version of Tesla’s Optimus [robot]” suggests that Tesla has released an Optimus robot, or that any prototypes are capable of anything approaching useful work, something that Tesla itself has said isn’t the case.

Every discussion of AI has become a discussion of anywhere between one and a million different theoreticals.

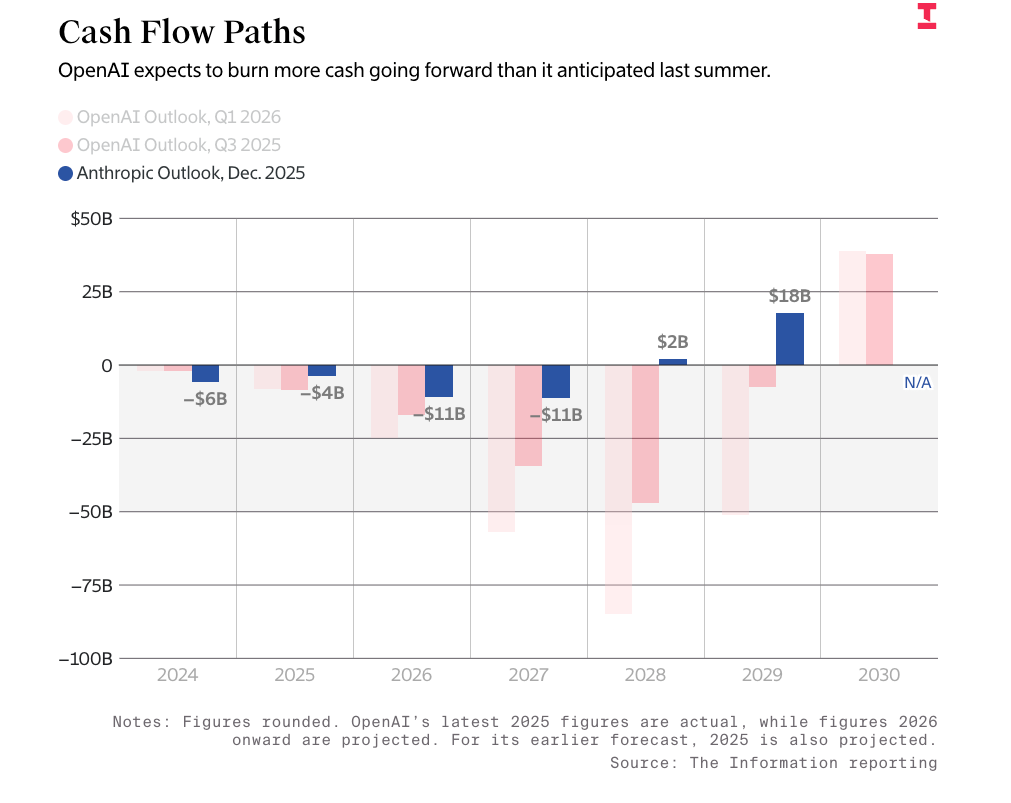

The Information’s headline that OpenAI will “save $97 billion through 2030 in latest Microsoft deal” — one that capped its revenue share (as in the actual money it sends to Microsoft) at $38 billion — hinges on the idea that OpenAI would somehow make $190 billion in revenue, because that’s what it would take to actually max out its revenue share.
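The arithmetic behind that $190 billion figure is a single division. Here is a minimal sketch. It assumes the cap applies to a flat percentage of OpenAI’s revenue. The share rate is not stated above; it is simply backed out of the two numbers that are ($38 billion and $190 billion):

```python
# Back out the revenue-share rate implied by the two figures in the text,
# then compute the revenue needed to hit the cap at that rate.
# Assumption (not stated in the source): the share is a flat percentage of revenue.

CAP_BILLIONS = 38.0               # capped revenue share paid to Microsoft
REVENUE_TO_MAX_BILLIONS = 190.0   # revenue cited as needed to max out the cap


def implied_share_rate(cap: float, revenue: float) -> float:
    """Return the flat share rate at which `revenue` produces exactly `cap`."""
    return cap / revenue


def revenue_to_hit_cap(cap: float, rate: float) -> float:
    """Return the revenue at which a flat `rate` share reaches `cap`."""
    return cap / rate


rate = implied_share_rate(CAP_BILLIONS, REVENUE_TO_MAX_BILLIONS)
print(f"implied share rate: {rate:.0%}")                                  # 20%
print(f"revenue needed: ${revenue_to_hit_cap(CAP_BILLIONS, rate):.0f}B")  # $190B
```

In other words, the “$97 billion saved” only materializes if OpenAI’s revenue climbs far enough for a 20% share to hit the cap.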

The majority of articles about METR’s “time horizon” study of how long models take to complete tasks gush with mindless praise, but regularly leave out two valuable details: that these comparisons rely on estimates of how long tasks take humans, and that the most commonly shared version of the metric measures the length of task a model is predicted to complete just 50% of the time:

The task-completion time horizon is the task duration (measured by human expert completion time) at which an AI agent is predicted to succeed with a given level of reliability. For example, the 50%-time horizon is the duration at which an agent is predicted to succeed half the time.
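To see why the reliability threshold matters, here is a minimal sketch of how a time horizon can be read off a fitted success curve. It assumes, as a simplification, that success probability falls off as a logistic function of the log of human task time. The parameters `ALPHA` and `BETA` are hypothetical, chosen only for illustration, and are not METR’s fitted values for any model:

```python
import math


def success_probability(minutes: float, alpha: float, beta: float) -> float:
    """Logistic model of success vs. log2(human task time); beta < 0 so longer tasks fail more."""
    return 1.0 / (1.0 + math.exp(-(alpha + beta * math.log2(minutes))))


def time_horizon(p: float, alpha: float, beta: float) -> float:
    """Invert the logistic curve: task length (minutes) at which success probability equals p."""
    logit = math.log(p / (1.0 - p))
    return 2.0 ** ((logit - alpha) / beta)


# Hypothetical parameters, for illustration only.
ALPHA, BETA = 3.0, -0.5

h50 = time_horizon(0.5, ALPHA, BETA)
h80 = time_horizon(0.8, ALPHA, BETA)
print(f"50% horizon: {h50:.0f} min")  # 64 min
print(f"80% horizon: {h80:.1f} min")  # about 9.4 min
```

Under these made-up parameters, asking for 80% reliability instead of 50% shrinks the headline horizon by a factor of almost seven.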

It’s the Sex Panther joke from Anchorman, except it’s a chart that gets written up in major newspapers and bandied about as proof of models becoming conscious.

Nevertheless, everybody appears to be having a lot of fun making stuff up or making ridiculous assertions based on OpenAI or Anthropic’s predictions. Likely gas leak victim Joseph Jacks posted last week that at its current rate of growth, Anthropic would pass Google’s revenue by 2028. Multiple different people I’d rather not link to are posting benchmarks of Anthropic’s still-to-be-released Mythos model as proof that we’re in the early-to-middle stages of the entirely-fictional AI 2027 “simulation,” despite the entirety of this ridiculous, oafish extrapolation relying on the idea that at some point LLMs become conscious and start doing their own research.

None of these people seem to want to engage with reality, even in their extrapolations.

Whether or not you believe the bubble will burst, it’s hard to argue (not that anybody bothers to try) with my recent reporting about the lack of data centers coming online or the fact that the majority of AI revenue comes from two companies that are, in the end, hyperscalers feeding themselves money. Nobody has presented any real argument as to how Oracle completes its data centers or avoids running out of money given the fact that it needs OpenAI to be able to pay it $70 billion or more a year in the next four years to survive. The lack of any real, thoughtful response to my assertions outside of ultra-centrists and people that can’t count is a sign that I’m onto something, and I take it as a badge of pride.

But what I haven’t done recently — not since AI Bubble 2027, at least — is try my own hand at extrapolating the future based on the things I have read, seen and reported on.

Today, I’m taking a different approach, inspired by one of my favourite comic series. In Marvel’s “What If…?”, writers asked questions that would entirely change the course of the Marvel Universe, such as What If The Fantastic Four Didn’t Get Their Powers, or What If Loki Was Worthy of Mjolnir.

I’ll be honest that there are a lot of unanswered questions I have about the AI bubble that make precise, time-based predictions almost impossible. We’re in the midst of one of the most insane market rallies in history, driven by the exploding valuation of NVIDIA and data center-related stocks despite there being a great deal of compelling evidence that millions of Blackwell GPUs are sitting in warehouses, meaning that the market is rallying around the idea of data centers getting built without ever confirming whether that’s actually true.

In the past, I’ve approached things from an investigative perspective, proving what I believe to be one of the greatest misallocations of capital in history. Today, I’m going to have a little more fun, exploring both the worrying signs I see and their potential consequences in the form of questions, mixing my own reporting with a little bit of fiction.

My reasoning is simple: I think people are very good at ingesting and remembering specific facts and events, but much worse at understanding their consequences. For example, Dave Lee of Bloomberg — who I adore and admire! — argued that “An OpenAI Bubble Is Not An AI Bubble,” and made numerous correct assertions about OpenAI, but failed to consider that OpenAI accounts for $718 billion of Oracle, Microsoft, and Amazon’s backlogs, meaning that OpenAI’s collapse would leave Oracle destitute, Microsoft and Amazon short-changed, Cerebras without 80%+ of its revenue, and CoreWeave without a major client and in breach of loan covenants guaranteed by OpenAI’s revenue.

Even if Anthropic were able to mop up some of that fallow capacity, it too relies on endless venture capital and hyperscaler welfare to pay, well, increasingly-large shares of hyperscaler revenue.

I feel as if many people are willing to ask if we’re in an AI bubble, but few seem to want to talk about what might happen. It’s really easy to say “stocks are overvalued” or “OpenAI is deeply unprofitable,” but thinking much harder than that starts to make you feel a little crazy. Data center construction now makes up a larger chunk of all construction spending than commercial real estate. OpenAI has made promises that total over a trillion dollars, and Anthropic $330 billion. NVIDIA represents 8% of the value of the S&P 500, and that valuation is based on the idea that it will never, ever stop growing, which is only possible if data center construction never stops. CoreWeave, IREN, Nebius, and Nscale all rely on hyperscaler contracts that are related to OpenAI, and if those contracts go away because OpenAI does, they’re screwed.

Most people can say that these things are true, but very few of them are willing to think about their consequences, because when you do so, things begin feeling completely and utterly fucking insane.

Put another way, for me to be wrong, all of these data centers will have to get built, OpenAI will have to make and raise $852 billion in the next four years, the underlying economics of generative AI will have to improve in a dramatic and unfathomable way, and do so in such a way that it creates hundreds of AI startups that can substantiate $400 billion of annual compute revenue. For NVIDIA to continue growing its revenues at an historic rate, it will also have to, by 2028, be selling over $1 trillion in GPUs, which will require there to be funding to buy these GPUs, at a time when hyperscaler cashflows are dwindling and banks are worried they’re “choking” on AI data center debt.

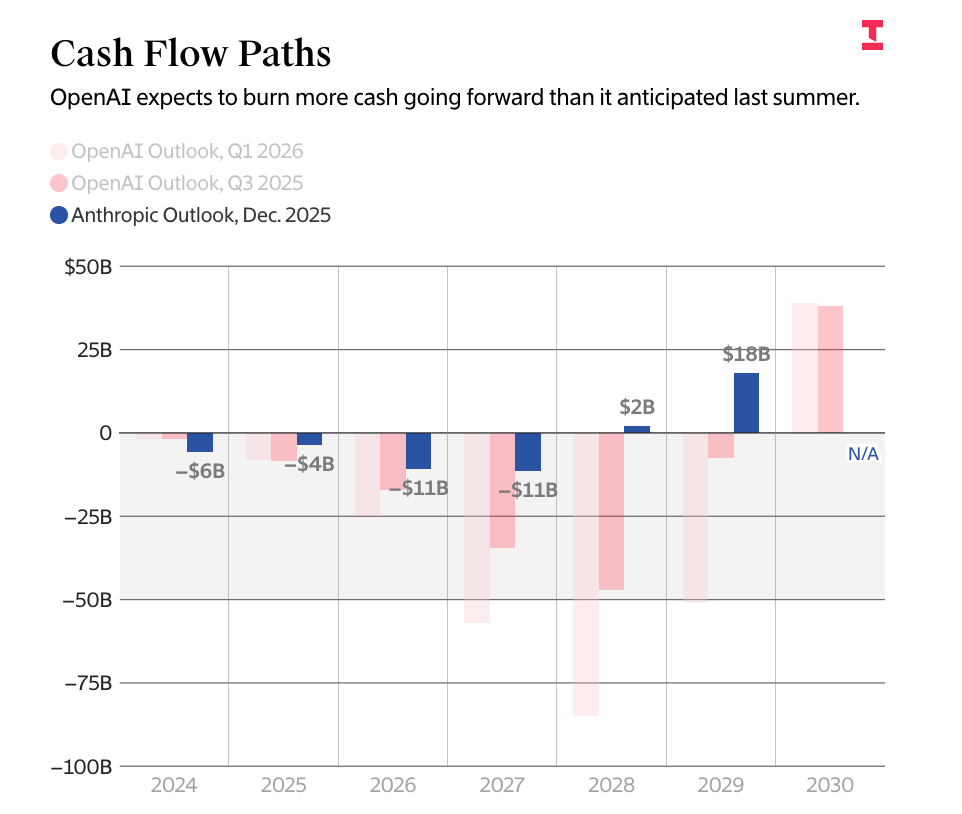

The AI bubble is supported almost entirely by magical thinking and people ignoring obvious warning signs again and again and again in the hopes that at some point something changes. You can quote whatever story you like about Anthropic’s skyrocketing revenues (which are absolutely inflated) — there’s no getting away from the fact that it loses billions of dollars a year, and if your answer is that it will turn profitable in 2028, please tell me how, because there is no proof that it’s possible.

I also kind of get why nobody wants to think about this stuff. Even though it’s become blatantly obvious that the economics don’t make sense, the stock market continues to rip on the back of equities connected to the AI bubble in a way that defies logic but rewards positive speculation. Major media outlets continue publishing positive stories about the power of AI that seem entirely disconnected from what AI can do, and millions of dollars are being spent by companies based on a theoretical return on investment.

No, really, per The Information’s Laura Bratton quoting PagerDuty CIO Eric Johnson:

“I am preparing myself to be surprised” by the bills, he said. “We believe that there’s a lot of value here. Unfortunately, it’s fairly new technology, so there’s some open questions that we’re gonna be working through” around its costs and getting a return on the investment.

We are fucking years into this, man. How is return on investment still an open question?

Okay, we know the answer: we’re in a bubble. Everybody is pressuring everyone else to “integrate AI,” to “get every engineer AI,” to “become more efficient using AI,” with token spend becoming some sort of vulgar status symbol despite the whole point of the AI push being that workers can be replaced, or enhanced, or, I dunno, something measurable. In the end, all that’s being measured is how many tokens employees are burning, leading to Amazon staff deliberately setting up “agents” to burn more tokens to seem more “engaged with AI” than they really are, all because dimwit managers and executives don’t understand what people do at their jobs and can only comprehend Number Go Up.

As a result, it’s far easier to fall in with the groupthink, even if it’s hysterical, nonsensical and based on flimsy ideas like “it’s just like Uber” (it isn’t) or “Amazon Web Services burned a lot of money” (adjusted for inflation, the entirety of Amazon spent less than half of OpenAI’s $122 billion funding round on capex over the space of 15 years), because thinking that everybody’s wrong requires you to disagree with the markets, most of social media, your boss, and your most annoying coworkers.

People also don’t really like thinking about bad things happening. They’re happy to make vague leaps in a direction that makes them feel prepared for the worst (such as the specious statements about all of these data centers being for the military or a theoretical bailout), especially if it makes them feel smart, but in doing so they get to avoid the actual bad stuff — the economic ramifications for ordinary people, the years of depression ahead for the tech industry, and the calamitous results for the market.

So, today, I’m going to have a little fun thinking about the actual consequences of everything I’ve been writing. I’m going to thread in both my own and others’ reporting, and take these ideas to their logical endpoints as far as I can.

This is going to be the first of a two-part exploration of what the actual consequences of the AI bubble bursting might be.

I’ll also caveat this by saying that these are, ultimately, explorations of potential future events rather than cast-iron guarantees. People seem to be resistant to being told the truth, so perhaps it’s time to explore these ideas as theoretical — fictional, even — so that people are more willing to take them in.

This series is all about simple scenarios, and one very simple question.

Time. Space. Reality.

It's more than a linear path — it’s a prism of endless possibility. I am the Watcher, and I am well aware of how AI generated that sentence sounds.

I am your guide through these vast new realities.

Follow me and dare to face the unknown.

And ponder the question…

What if…We’re In An AI Bubble?

2026-05-13 00:17:30

If you liked this piece, please subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 5,000 to 18,000 words, including vast, detailed analyses of NVIDIA, Anthropic and OpenAI’s finances, and the AI bubble writ large. My Hater's Guides To Private Credit and Private Equity are essential to understanding our current financial system, and my guide to how OpenAI Kills Oracle pairs nicely with my Hater's Guide To Oracle.

My last piece was a detailed commentary on the circular nature of the AI economy — and how the illusion of AI demand is just that, an illusion.

Subscribing to premium is both great value and makes it possible to write these large, deeply-researched free pieces every week.

During every bubble there’s one very obvious thing that keeps happening: things are said, these things are repeated, and are then considered fact. Sam Bankman-Fried was the smiling, friendly, “self-made billionaire” face of the crypto industry. NFTs were the future of art, and would change the way people think about the ownership of digital media.

The actual evidence, of course, never lined up. NFT trading was dominated by wash trading — market manipulation through two parties deliberately buying and selling an asset to raise the price. Cryptocurrency never took off as anything other than a speculative asset, and altcoins are effectively dead. Sam Bankman-Fried was only a billionaire if you counted his billions of illiquid FTX tokens, but that didn’t stop people from saying he wanted to save the world weeks after the collapse of Terra Luna, a stablecoin that he himself had bet against and may have helped collapse.

Three months before his arrest, a CNBC reporter would fly to the Bahamas to hear SBF tell the story of how he “survived the market wreckage and still expanded his empire,” with the answer being that he had “stashed away ample cash, kept overhead low, and avoided lending,” as opposed to the truth, which was “crime.”

The point is that before every scandal is somebody emphatically telling you that everything’s fine. Everything seems real because there’s enough proof, with “enough proof” being a convincing-enough person saying that “most of FTX’s volume comes from customers trading at least $100,000 per day,” when the actual volume was manipulated by FTX itself, and the “$100,000 a day in customer funds” were being used by FTX to prop up its flailing token.

In the end, the “proof” that SBF was rich and that FTX was solvent was that nobody had run out of money and that nothing bad had happened to anybody. SBF was a billionaire sixteen times over because enough people had said that it was true.

Anyway, one of the most commonly-held beliefs of the AI bubble is that massive amounts — gigawatts’ worth — of data centers have already been built and continue to be built…

…but then you look a little closer, and things start getting a little more vague. While Wood Mackenzie’s report said that there was “25GW of data center capacity added to the funnel” in Q4 2025, it does not say how much came online. CBRE said back in February that “net absorption of 2497MW” happened in primary markets in 2025, with other reports saying that somewhere between 700MW and 2GW of capacity was absorbed every quarter of 2025. At the time, I reached out for any clarity about the methodology in question and received no response.
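Part of the confusion is that these figures don’t line up with each other. Here is a rough sketch of the comparison. It assumes the quarterly range applies evenly to all four quarters of 2025, and note that CBRE’s number covers only primary markets, so the two may not be measuring exactly the same thing:

```python
# Annualize the quarterly absorption range cited in other reports and
# compare it with CBRE's full-year figure for primary markets.
CBRE_2025_PRIMARY_MW = 2497
QUARTERLY_LOW_MW, QUARTERLY_HIGH_MW = 700, 2000
QUARTERS = 4

annual_low = QUARTERLY_LOW_MW * QUARTERS    # 2,800 MW
annual_high = QUARTERLY_HIGH_MW * QUARTERS  # 8,000 MW

print(f"annualized range: {annual_low:,}-{annual_high:,} MW")
print(f"CBRE primary markets: {CBRE_2025_PRIMARY_MW:,} MW")
print(f"high end is {annual_high / CBRE_2025_PRIMARY_MW:.1f}x CBRE's figure")  # 3.2x
```

Even the low end of the annualized range exceeds CBRE’s full-year total, and the high end is more than three times it.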

Okay, so, I know data centers are getting built and that they exist. I believe some capacity is coming online.

But gigawatts? Or even hundreds of megawatts? How much data center capacity is actually coming online?

Why did Anthropic get so desperate that it took on a years-old data center, xAI’s Colossus-1, full of even older chips from a competitor — one whose CEO described the company as “evil,” and that’s currently facing a lawsuit from the NAACP over allegations the facility’s gas turbines are polluting Black neighborhoods?

Remember, Colossus-1 is an odd data center, with around 200,000 H100 and H200 GPUs and an indeterminate number of Blackwell GB200s, weighing in at around 300MW of total capacity…which isn’t really that much if we’re talking about gigawatts being built every quarter, is it?

So, I have two very simple questions to ask: how long does it take to build a data center, and how much data center capacity is actually coming online?

These simple questions are surprisingly difficult to answer. There exists very little reliable information about in-progress data centers, and what information exists is continually muddied by terrible reporting — claiming that incomplete projects are “operational” because some parts of them have turned on, for example — and a lack of any investor demand for the truth. Hyperscalers do not disclose how many data centers they’ve built, nor do they disclose how much capacity they have available.

I find this utterly inexcusable, given the fact that Amazon, Google, Meta and Microsoft have sunk over $800 billion in capex (and more if you count investments into Anthropic and OpenAI) in the last three years.

So I went and looked, and what I found was confusing.

So, you’re going to hear people say “well Ed, data centers are being built,” and what I’m talking about is data centers that have been fully constructed and then turned on. It’s really, really easy to find data centers that are under construction, but as I’ve discussed in the past, that can mean everything from a pile of scaffolding to a near-complete data center.

Yet finding the latter is very, very difficult. I’ve spent the last week searching for data centers that broke ground in 2023 or 2024 that have actually been finished, and come up surprisingly empty-handed. Some projects are stuck in construction hell, eternally dueling with planning departments over permitting, some are chugging along with no real substantive updates, some, as is the case with Nscale’s Loughton, England data center, have done effectively nothing for the best part of a year, some are perennially adding more capacity to the order as a means of continuing to rake in construction bills, and some are claiming their data centers are “operational” as only a single phase has turned on.

You should also know that even once construction has finished, the buildings themselves must be fully filled with the necessary cooling, power and compute hardware, after which the facility can be configured to meet a client’s specifications (which can take months), and only then can the unfortunate soul building the facility actually start making money.

I think it’s also worth revisiting how difficult data center construction is, and how large these new projects are.

This starts with a very simple statement: nobody has actually built a 1GW data center (to be clear, it’s usually a campus of multiple buildings networked together) yet. There are campuses — such as Stargate Abilene — which promise to reach 1.2GW, but nearly two years in sit at two buildings at around 103MW of critical IT load each with, based on discussions with sources with direct knowledge of Abilene’s infrastructure, a third building sitting fully-constructed but with barely any gear inside it.
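To put Abilene’s progress in proportion, here is a quick sketch using the figures above. The 1.2GW target and the roughly 103MW of critical IT load per building may not be measured on the same basis (total power versus IT load), so treat the result as a rough proportion rather than a precise one:

```python
# Rough share of Stargate Abilene's promised capacity that is running,
# using the per-building critical IT load cited in the text.
TARGET_MW = 1200          # promised campus capacity (1.2GW)
MW_PER_BUILDING = 103     # approximate critical IT load per finished building
OPERATING_BUILDINGS = 2   # buildings described as running

operating_mw = MW_PER_BUILDING * OPERATING_BUILDINGS
share = operating_mw / TARGET_MW
print(f"{operating_mw} MW of {TARGET_MW} MW ({share:.0%})")  # 206 MW of 1200 MW (17%)
```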

It’s fundamentally insane how many different companies are trying to build these things considering how difficult even the simplest data center is to build.

Take, for example, American Tower Corporation’s edge data center in Raleigh, North Carolina, which I’ll mention a little later. This is a 1MW facility — or one-thousandth the size of a gigawatt facility — occupying 4,000 sq ft of real estate at first and expanding to 16,000 sq ft if ATC actually gets it up to 4MW. That’s about two-and-a-bit times larger than the typical American home. And, from groundbreaking to ribbon-cutting, it took eleven months to complete. And that’s not including all the other necessary time-consuming bits, like finding land, securing permits, and so on.

That’s a simple one. People want to build data center campuses a thousand times larger than that. Look at how difficult it is.

In fact, it’s so difficult that the companies can’t build all of it at once. Larger data center campuses are almost always divided into “phases,” in part because that’s the smartest way to build them, and in part with the express intention of convincing you that they’re “fully operational.”

For example, CNBC’s MacKenzie Sigalos reported in October 2025 that Amazon’s Indiana-based (allegedly) 2.2GW Project Rainier data center was “operational,” but only seven out of a planned 30 buildings were actually operational, a detail that, along with her comment “with two more campuses [of indeterminate capacity] underway,” was buried two videos and 600 words into a piece that declared the data center was “now operational,” with the express intent of making you think the whole thing was operational.

To give her credit, at least she didn’t copy-paste the outright lie from Amazon, which claimed that Rainier was “fully operational” in a press release the same day. You’ll also note that Amazon never provides any clarity about the actual capacity of Rainier.

Sigalos did exactly the same thing when the first (of eight) buildings of Stargate Abilene opened, declaring that “OpenAI’s first data center in $500 billion Stargate project is open in Texas,” and burying the comment that only one was operational, with another nearly complete, several hundred words in.

These are intentional attempts to obfuscate the actual progress of the data center buildout, and if I’m honest, I’ve spent months trying to work out why big companies that were supposedly building large swaths of data centers would want to hide how that building was going.

Unless, of course, things weren’t going to plan.

In its last (Q3 FY26) quarterly earnings call, Microsoft CEO Satya Nadella claimed that “[Microsoft] added another gigawatt of capacity this quarter, and [remained] on track to double [its] overall footprint in two years.” A quarter earlier, he claimed to have added “nearly one gigawatt of total capacity,” with Karl Keirstead of UBS saying that he “...thought the one gigawatt added in the December quarter was extraordinary and hints that the capacity adds are accelerating.”

As I’ll discuss below, I can find no evidence of anything more than a few hundred megawatts of Microsoft’s data center capacity coming online. While I’ll humour the idea that it doesn’t announce every new data center, and that there may be colocation and neocloud counterparties (67% of CoreWeave’s revenue comes from Microsoft, for example) that make up the capacity, as I’ll also discuss, I don’t know where the hell that might be.

So, to be aggressively fair, I asked Microsoft to answer the following questions on May 4, 2026:

A Microsoft representative from WE Communications promised to "circle back" by 5PM ET on Monday May 4th, but did not return further requests for comment via text and email, which is incredibly strange considering the simple and straightforward nature of my questions.

That’s probably because the vast majority of its publicly-announced or documented data center capacity doesn’t appear to be getting finished.

In September 2025, CEO Satya Nadella claimed that Microsoft had added 2GW of capacity “in the last year,” and acted as if Fairwater, a project with two actively-constructed data centers (one in Wisconsin that broke ground in September 2023 and another in Atlanta that broke ground in July 2024), was something to be “announced” rather than “a very expensive project that has taken forever.” Nadella also claimed that there are “multiple identical Fairwater datacenters under construction,” though he neglected to name them.

To be clear, “Fairwater” refers to a project where multiple data centers are linked with high-speed networking to make one larger cluster, a project that sounds ambitious because it is, and also unlikely because it has yet to be built.

Fairwater Atlanta — the latter of the Fairwaters — was “launched” in November 2025 and it’s unclear how much capacity it has. Cleanview claims it’s at 350MW of capacity, and Microsoft’s own community outreach page claims construction would be completed by the beginning of October 2025, but, as I’ll get to, it’s unclear whether this is just one phase, given that reporting shows multiple other buildings still under construction.

I have serious doubts that Microsoft stood up a 350MW data center in less than a year, given everything else I’m about to explain.

Fairwater Wisconsin is also a data center of indeterminate size, but Cleanview claims Phase 1 is 400MW, quoting a story from FOX6 News Milwaukee from September 2025 that said that Microsoft was “investing an additional $4 billion to expand the campus,” and that featured a video of a data center still very much under construction alongside the following:

Microsoft is in the final phases of building Fairwater, the world’s most powerful AI data center, in Mount Pleasant. Microsoft is on track to complete construction and bring this AI data center online in early 2026, fulfilling their initial $3.3 billion investment pledge.

So, $3.3 billion — at a rate of around $14 million per megawatt per analyst Jerome Darling of TD Cowen — is about 235MW of capacity, which is a lot lower than 400MW.

Seven months later, Satya Nadella said that the Fairwater datacenter in Wisconsin was “going live, ahead of schedule,” a sentence written in the present tense, but also said that it “will bring together hundreds of thousands of GB200s in a single seamless cluster,” which is in the future tense.

It’s a great time to remind you that Microsoft claims that it brought online roughly eight times that capacity (around 2GW) in the past six months.
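The conversion from dollars to megawatts above is a single division, and the same result shows how far the claimed 2GW sits from it. Here is a minimal sketch, assuming TD Cowen’s roughly $14 million per megawatt applies evenly to the whole $3.3 billion pledge:

```python
# Convert a capex pledge into approximate capacity using a flat cost-per-MW estimate,
# then compare it with the ~2GW Microsoft says it added over two quarters.
PLEDGE_USD = 3.3e9
COST_PER_MW_USD = 14e6   # analyst estimate cited in the text
CLAIMED_MW = 2000        # ~1GW per quarter for two quarters

estimated_mw = PLEDGE_USD / COST_PER_MW_USD
print(f"estimated capacity: {estimated_mw:.1f} MW")                 # about 235.7 MW
print(f"claimed vs. estimated: {CLAIMED_MW / estimated_mw:.1f}x")   # about 8.5x
```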

To make matters worse, it doesn’t appear that Fairwater Wisconsin is actually operational. Ricardo Torres of the Milwaukee Journal-Sentinel reports that Microsoft has said it isn’t actually online, and that while there “...is equipment inside the data center conducting start-up opportunities…the company anticipates [they] will continue to happen for the next several weeks.”

Epoch AI’s satellite footage of Fairwater Wisconsin — which mentions a completely wrong capacity because it’s uniquely terrible at calculating it (it claimed Colossus-1 has 425MW capacity, for example) — notes that as of April 2026, one building appeared to be operational, with a second under construction.

So, that’s one building in Wisconsin that might be complete, and based on the permitting application from August 2023 dug up by Epoch, that building is designed to have 117MW of capacity, which is a lot lower than 235MW. While Epoch didn’t have permitting for building two, it did for three and four, which are designed to have around 719MW of capacity, and as of April 2026 still appear to be slabs of concrete.

In simpler terms, there’s at most around 117MW of capacity running at Fairwater Wisconsin.

Sidenote: To be clear, I think some revenue is being generated from a Fairwater data center, as my reporting from last year on OpenAI’s inference spend involved a few million dollars’ worth of billing for “Fairwater,” but it’s unclear whether that referred to Fairwater Atlanta or Wisconsin.

The Fairwater data centers are Microsoft’s most-publicized data centers, yet they’re shrouded in secrecy, with the Atlanta Journal-Constitution having to file an open records request to find the site being developed by QTS, a data center developer owned by Blackstone. Videos of Fairwater Atlanta from last November show a giant campus with two large buildings and a patch of yet-to-be-developed dirt. DataCenterMap refers to it as “under construction.”

Epoch AI’s satellite footage notes that as of February 2026, building four’s roof was complete and “all mechanical equipment appears to be installed,” but “there is still a lot of construction activity around the building.” Based on air permits filed as part of the project (that Epoch found), it appears that each building is powered by a number of Caterpillar 3516C Generator Sets at around 2.5MW each, with building one having 47 (117.5MW), building two having 13 (32.5MW), building three having 30 (75MW), and building four having 35 (87.5MW).

If we’re very generous and assume that three buildings are complete, that means that Fairwater Atlanta is at around 225MW of capacity (not IT load!).
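The tally above can be reproduced from the air-permit generator counts. Here is a minimal sketch, assuming (as the estimate does) that each Caterpillar 3516C set is about 2.5MW and that generator capacity is a fair proxy for a building’s capacity; it measures generator capacity, not IT load:

```python
# Estimate Fairwater Atlanta capacity from the generator counts in the air permits.
MW_PER_GENSET = 2.5  # approximate rating per Caterpillar 3516C set, as used in the text

gensets_by_building = {1: 47, 2: 13, 3: 30, 4: 35}
mw_by_building = {b: n * MW_PER_GENSET for b, n in gensets_by_building.items()}

# Generous case: buildings one through three complete, building four still under construction.
complete = (1, 2, 3)
complete_mw = sum(mw_by_building[b] for b in complete)

print(mw_by_building)                                   # {1: 117.5, 2: 32.5, 3: 75.0, 4: 87.5}
print(f"complete: {complete_mw} MW")                    # 225.0 MW
print(f"all four: {sum(mw_by_building.values())} MW")   # 312.5 MW
```

Even counting building four, the campus tops out at around 312.5MW on this basis.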

So, that’s about 342MW of data center capacity being built by one of the largest companies in the world, in its most-publicized and written-about data centers.

Put another way, for Microsoft to come remotely close to its so-called 2GW of capacity in the last six months, it will have had to bring online a little under six times that capacity.
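The comparison can be sketched as follows, assuming the most generous readings above (117MW running in Wisconsin, 225MW in Atlanta) and taking Microsoft’s roughly 2GW claim at face value:

```python
# Compare the generously-estimated Fairwater capacity with Microsoft's claimed additions.
WISCONSIN_MW = 117   # building one, per the August 2023 permit application
ATLANTA_MW = 225     # buildings one to three, from generator counts
CLAIMED_MW = 2000    # ~1GW per quarter for the last two quarters

fairwater_mw = WISCONSIN_MW + ATLANTA_MW
multiple = CLAIMED_MW / fairwater_mw
print(f"Fairwater total: {fairwater_mw} MW")   # 342 MW
print(f"claim is {multiple:.2f}x that")        # 5.85x
```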

I’m calling bullshit.

I really did want Microsoft to give me some answers, but I’m very confused as to how it can remotely claim it brought even a gigawatt of capacity online in the last year.

I also question whether Microsoft is actually building multiple other “identical” Fairwater data centers, as I can’t find any announcements or pronouncements or mentions or hints as to where they might be.

In fact, I’m having a little trouble finding where else Microsoft has been building data centers, and those I can find are extremely suspicious.

In Microsoft’s announcement of its Wisconsin data center, it mentioned two other projects — one in Narvik, Norway, that had already been announced months beforehand by OpenAI, and another with Nscale in Loughton, England, that was also announced by OpenAI that very same day as part of the entirely fictional Stargate project.

If you’re wondering how those are going, Microsoft had to take over the entire Narvik project (which does not appear to have started construction) from OpenAI, and the Loughton data center (which OpenAI also backed out of) is currently a pile of scaffolding.

For two straight quarters, Microsoft has said it’s brought on an entire gigawatt of capacity, and I have to ask: where?

Because when you actually look at the projects it’s announced, very little appears to have been built, and that which has is nowhere near its theoretical capacity.

To be specific about what Microsoft is claiming, it’s saying it’s brought around 4GW of capacity online in the space of two years, and at a 1.35 PUE, that’s about 2.96GW of critical IT load, which works out to the power equivalent of around 284,600 H100 GPUs, which may be possible — after all, Microsoft apparently bought 450,000 H100 GPUs in 2024 — but I can’t find much evidence of data centers that could house that many GPUs, nor that might be in construction.
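The step from total capacity to critical IT load divides by power usage effectiveness (PUE), the ratio of total facility power to the power that reaches IT equipment. Here is a minimal sketch, assuming the 1.35 PUE used above applies across the whole 4GW:

```python
# Convert total facility power into critical IT load using power usage effectiveness (PUE).
# PUE = total facility power / IT equipment power, so IT load = total / PUE.
TOTAL_GW = 4.0
PUE = 1.35


def critical_it_load(total_power: float, pue: float) -> float:
    """Return the portion of facility power available to IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return total_power / pue


it_gw = critical_it_load(TOTAL_GW, PUE)
print(f"{it_gw:.2f} GW of critical IT load")  # 2.96 GW
```

A lower PUE would leave more of the 4GW for IT equipment; a higher one would leave less.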

Let’s dig in.

Microsoft broke ground on three data centers in Catawba County, North Carolina, in 2024 — one in Hickory, another in Lyle Creek, and another in Boyd Farms:

Alright, maybe I’m being unfair! Maybe it’s just a North Carolina problem. There must be another that broke ground and got built…right?

Microsoft also broke ground on a data center in Quebec City, Canada in September 2024, and as of April 2026, “generator testing has been completed,” and “civil works will continue until Autumn 2026.”

Okay, well, maybe it’s a Canada problem. What about Microsoft’s New Albany, Ohio data center that broke ground in October 2024? Well, as of March 2026, “spring activity would resume,” and “beginning soon, soil will be delivered to the site via a designated truck route.” I’ll note that Microsoft specifically says that Ames Construction is currently leading it, and that it will “resume the lead role in project communications” once the final phase of construction is done at some unknown time.

Alright, well, how about the August 2025 groundbreaking in Cheyenne, Wyoming that was allegedly “due to launch in 2026”?

Well, Microsoft hasn’t updated its community page since it said there’d be a community meeting planned for November 2025 and that “neighbors within the vicinity will be notified ahead of construction,” which sounds like construction is yet to commence. Not to worry, though: it announced on April 14, 2026 that it planned to expand it to “accelerate innovation and economic growth.”

How about that 2023-announced Southwest Hortolândia, Brazil data center? That’s right: the last update was in September 2025, and that update was “construction activities continue to progress in alignment with local regulations.” A piece from Folha de S.Paulo from March 2026 mentioned that Microsoft “had begun operating its first artificial intelligence data centers in Brazil,” but satellite footage shows that it’s barely finished.

What about the Newport, Wales data center it announced in 2022? Well, as of November 2025, a politician was standing on a concrete slab saying how many jobs it’ll theoretically bring in, which it won’t.

What about Microsoft’s four data centers in Irving, Texas, announced December 2024? The best I’ve got for you is a news report about a data center in Irving, Texas breaking ground in January 2025. Its San Antonio data center, announced in July 2024? Well, construction was underway as of December 2025, and it appears that construction will begin in the summer of 2026 on another one in the area.

How about the two data centers outside of Cologne, Germany, announced in November 2024? Well, as of September 2025, Microsoft has…plans to build one of them?

…what about the 900 acres of land it bought in June 2024 in Granger, Indiana? Great news! According to 16NewsNow, Microsoft officials “could break ground on a proposed data center…in late April or early May [2026].”

How about Project Ginger West, a data center planned in Des Moines, Iowa since March 2021? Hope you like waiting, because Microsoft itself says that it’s estimated to finish construction in Summer 2028. Ginger East, announced a few months later? Mid-2028. Project Ruthenium (announced 2023)? I don’t have shit for you I’m afraid.

This company claims it’s built four fucking gigawatts of capacity, but when I go and look at what it’s actually built, I’ve failed to find a single announced data center from the last three years that got turned on outside of its Fairwater Atlanta and Wisconsin sites.

To be clear, all of these sites are somewhere in the 200MW to 300MW range. For Microsoft to have brought online 4000MW of data center capacity in the last two years would require it to have completed thirteen or more of these projects, all while choosing not to promote them, with every project operating in such a veil of secrecy that no local or national news outlet reported a single one of them.
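The “thirteen or more” figure is simple division. Here it is as a minimal Python sketch, under the text’s assumption that each site is 200MW to 300MW. `sites_needed` is a hypothetical helper name. The sketch rounds up, because a partial site doesn’t deliver capacity.

```python
import math

def sites_needed(total_mw: float, site_mw: float) -> int:
    """Smallest whole number of equally sized sites that reaches total_mw."""
    return math.ceil(total_mw / site_mw)

# Assumption from the text: each site lands somewhere between 200MW and 300MW.
for size_mw in (300, 200):
    print(f"{size_mw}MW sites: {sites_needed(4000, size_mw)} needed")
```

At 300MW per site that’s fourteen projects, and at 200MW it’s twenty.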

Based on my research, I truly cannot work out how Microsoft has brought on any more than 500MW of capacity in the last year. I think Microsoft is deliberately obfuscating whether said capacity was contracted rather than actively in use, much like CoreWeave, which says it has 3.1GW of “total contracted power” but only added 260MW of active power capacity in a single quarter at the end of 2025.

Sidenote: If you’re wondering why CoreWeave didn’t include how much active power it added in its Q1 2026 earnings press release, it’s because (per its own earnings presentation) it only added 150MW, in a quarter it contracted 400MW. It also said it added six new data centers, which I doubt.

However, the exact verbiage used in Microsoft’s earnings transcripts is that it “added another gigawatt of capacity,” which sounds far more like it’s saying it brought them online…

…but it didn’t, right? It obviously hasn’t.

Where are all the data centers, Satya? Where are they? Why are your PR people too scared to tell me?

No, really, where are they?

So, to be fair, analyst Ben Bajarin, one of the friendlier pro-AI posters, argues that all of that capacity actually exists, just behind the scenes. I’d humour that if there were any kind of paper trail to a bunch of secretly built Microsoft data centers.

I’d also be more willing to humour it if any of the data centers that have been publicized as “breaking ground” had actually been finished, or if both Fairwater Atlanta and Wisconsin weren’t so deceptively marketed.

The only devil’s advocate case I can make is that Microsoft could, in theory, be working with colocation partners to stand up several gigawatts of capacity through shell corporations and SPVs. But even then, not a single one has any sort of trail to Microsoft? All of that capacity?

It’s really, really weird, and the only answers I get are smug statements about how “Fairwater is ahead of schedule.”

But if I’m honest, I’m having trouble even making these numbers add up.

Considering how loud, offensive and conspicuous the AI bubble has become, it feels like we should have a far, far better understanding of how much actual capacity has been built.

I also think it’s time to start being realistic about how long these things are taking to build.

For example, I was only able to find a few data centers that for sure, categorically, definitively opened, and for the most part, it appears that a data center takes around 18 months to go from groundbreaking to opening.

And these, I add, are all facilities that are relatively modest — at least, when compared to the kinds of gigawatt-scale campuses that are reportedly in active development.

Digging deeper, I found a lot of projects stuck in development Hell:

While there are absolutely data centers under construction, and some, somewhere, are actually being completed, the vast majority of projects I’ve found are either in a mysterious limbo state or, in most cases, under construction years after breaking ground.

Across the board, the message seems to be fairly simple: it takes about 18 to 24 months to build any kind of data center, and the bigger they are, the less likely they are to get completed on schedule.

Those that do “come online” aren’t fully constructed; they’ve brought on a single phase, something I wouldn’t begrudge them if they were anything close to honest about it. In reality, data center companies actively deceive the media and customers about the actual status of projects, most likely because it’s really, really difficult to build a data center.

In any case, what I’ve found amounts to a total mismatch between the so-called “rapid buildout” of AI data centers and reality.

It also doesn’t make much sense when you factor in how many GPUs NVIDIA sold.

In October last year, NVIDIA CEO Jensen Huang told reporters that it had shipped six million Blackwell GPUs in the last four quarters, though it eventually came out that he was counting two cores for every GPU, making the real number three million. I disagree with the framing, I think it’s incoherent and dishonest, but I’ve confirmed this is what NVIDIA meant.

In any case, if we count two cores per GPU (so three million GPUs), and a B200 GPU draws around 1200W, that’s around 3.6GW of IT load. I realize that NVIDIA also sells B100 and B300 GPUs (similar power draw) and NVL72 racks of 72 GB200 GPUs and 36 CPUs, but bear with me.
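Here is the 3.6GW figure as a minimal Python sketch. It assumes every unit is a B200-class GPU drawing about 1200W. It counts only the GPUs’ own draw (no CPUs, networking, or cooling), so it isn’t a full facility number. `it_load_gw` is a hypothetical name.

```python
def it_load_gw(gpu_count: int, watts_per_gpu: float) -> float:
    """Total GPU power draw in gigawatts (GPUs only; no CPUs, networking or cooling)."""
    return gpu_count * watts_per_gpu / 1e9

# Assumptions from the text: 3 million Blackwell GPUs at roughly 1200W each.
print(f"{it_load_gw(3_000_000, 1200):.1f} GW")  # 3.6 GW
```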

Blackwell GPUs only started shipping with any real seriousness in the first quarter of 2025, which means that a good chunk of these data centers were built with H100 and H200 GPUs in mind. Nevertheless, I can find no compelling evidence that significant amounts — anything over 500,000 GPUs — of Blackwell-based data centers have been successfully brought online.

When I say I struggled to find data centers that had been both announced and brought online, I mean that I spent hours looking, hours and hours and hours, and came up empty-handed.

I want to be clear that I know there is Blackwell capacity actually being built. I believe the majority of that capacity comes from retrofits of previous data centers, such as Microsoft’s extension to its Goodyear, Arizona campus (which it began building in 2018), an extension that likely houses Blackwell GPUs.

But I no longer believe that the majority of Blackwell GPUs are doing anything other than collecting dust in a warehouse. Blackwell GPUs require distinct cooling, a great deal more power than an H100, and cost an absolute shit-ton of money, making it unlikely that a 2023 or early-2024 era data center could handle them without significant modifications.

I fundamentally do not believe more than a million — if that! — Blackwell GPUs are actually in service.

If that’s the case, NVIDIA is likely pre-selling GPUs years in advance — experimenting with the dark arts of “bill-and-hold” — and helping certain partners like Microsoft install the latest generation to create the illusion of utility, availability and viability that does not actually exist.

If I’m honest, I also have serious questions about the current status of many H100 and H200 GPUs. Based on what I’ve found, I’d be surprised if more than 3GW of actual capacity was turned on in the last two years, which means that NVIDIA has sold anywhere from double to triple the amount of GPUs that the world can hold.

While the Anthropic-Musk compute deal is an obvious sign of xAI’s lack of demand for compute, it’s also, as I mentioned earlier, a clear sign that AI data centers are mostly not getting finished, and those that do get finished are taking two or three years even for smaller builds.

While it sounds a little wild, I think in reality only a few hundred megawatts (if that) of actual, usable AI compute capacity is being spun up every quarter. If I were wrong, there’d be significantly more progress on, well, anything I could find.

Why can’t Microsoft offer up a data center that isn’t called Fairwater, and why are its Fairwater data centers taking so long? How much actual capacity has Microsoft brought online? Because it certainly isn’t fucking 2GW in six months.

I’m willing to believe that Microsoft has a number of colocation agreements with parties that don’t disclose their involvement. I’m also willing to believe that Microsoft doesn’t publicize every single data center it’s building or has built.

2GW of capacity is a lot. It’s nearly ten times the (likely) existing capacity of Fairwater Atlanta. If Microsoft is bringing so much capacity online, why can’t we find it, and why won’t they tell us? And no, this isn’t some super secret squirrel “they’re building secret data centers for the government” thing; it’s very clearly a case where “capacity” refers to “something other than data centers that actually got brought online.”

Despite their ubiquity in the media, AI data centers are relatively new concepts that are barely five years old. They are significantly more power-intensive than a regular data center, requiring massive amounts of cooling and access to water to the point that the surrounding infrastructure of said data center is often a massive construction project unto itself.

For example, OpenAI and Oracle’s Stargate Abilene data center is (in theory) made up of two massive electrical substations, a giant gas power plant and eight distinct data center buildings, each with around 50,000 GB200 GPUs, at least in theory. Every data center requires that power exists — as in it’s being generated in both the manner and capacity necessary to turn it on, either through external or grid-based power — and is accessible at the data center site.

This means that every single data center, no matter how big, is its own construction nightmare. You’ve got the power, the labor, the permits, the planning, the construction firm, the power company, the specialist gear, the temporary power (because on-site power is slow), the backup power (because you can’t just rely on the grid for something you’re charging millions for!), the cooling, the uninterruptible power supplies — endless lists of shit that needs to go very well or else the bloody thing won’t work.

These are very difficult and large projects to complete. Edged Computing’s (theoretically) 96MW data center in Illinois is 200,000 square feet in effectively two large squares. For comparison, every single inch of gambling space in Caesars Palace in Las Vegas is around 130,000 square feet. These things are fucking huge, fucking difficult, and fucking expensive, and all signs point to capacity not coming online.

Let’s go back to Anthropic mopping up Musk’s fallow data center capacity, which stinks of desperation for both companies. If there were modern data centers full of GB200s being turned on and available anywhere in the next month or two, wouldn’t it be more financially prudent to wait for it, even if it’s just on an efficiency level? A franken-center made up of H100s and H200s with some GB200s stapled onto the side feels like a stopgap solution.

I have similar questions about the results of adding this capacity — that “...Anthropic plans to use [it] to directly improve capacity for Claude Pro and Claude Max subscribers,” “doubling” (whatever that means) the 5-hour rate limit and removing the recently-added peak rate limits.

What’s the plan here, exactly? Less than a month ago, Anthropic’s Head of Growth, Amol Avasare, said that Anthropic was “looking at different options to keep delivering a great experience for users” because Max accounts were created before the era of Claude Code and Cowork. How does adding 300MW of capacity magically resolve that problem? Was that always the plan?

Or was this a knee-jerk reaction to the surging popularity of OpenAI’s Codex? Because the original justification for peak hours was that Anthropic needed to manage “growing demand for Claude,” demand that I bet Anthropic claims hasn’t gone anywhere.

It’s also important to remember that last year, per The Information, OpenAI’s margins (which are already non-GAAP) were worse than expected because (and I quote) it had “to buy more expensive compute at the last minute in response to higher than expected demand for its chatbots and models.”

In other words, Anthropic is repeating that mistake on purpose. It is deliberately tanking its already-negative 2026 gross margins by desperately buying the fallow compute of a company whose CEO threw up the Nazi salute and called Anthropic “misanthropic and evil,” and which has the “right to reclaim the compute” if Anthropic “engages in actions that harm humanity.”

Surely you’d wait a few months for some new, less tainted source of compute, right? And surely it wouldn’t be such a big deal, because new data centers get switched on every day, right?

Right?

So, let’s get to brass tacks.

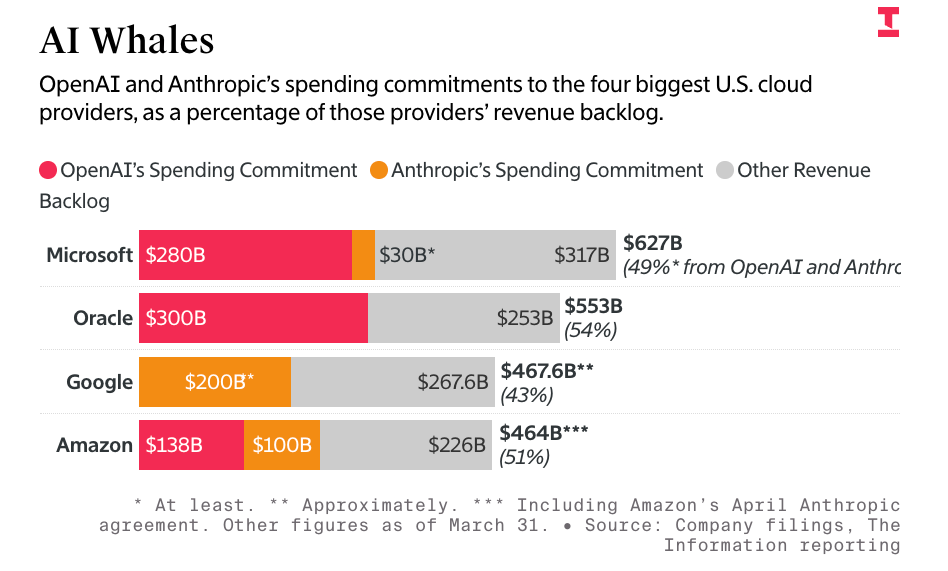

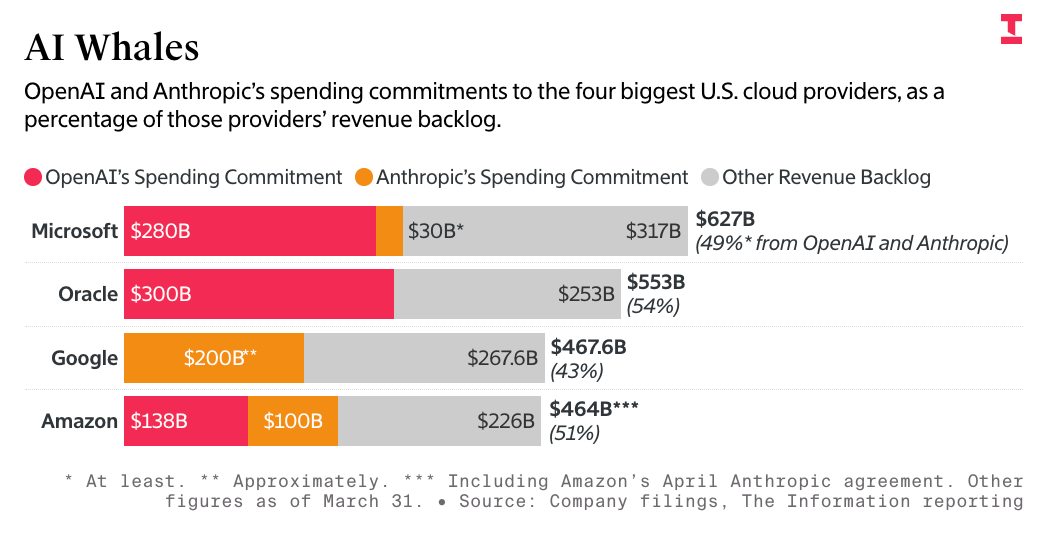

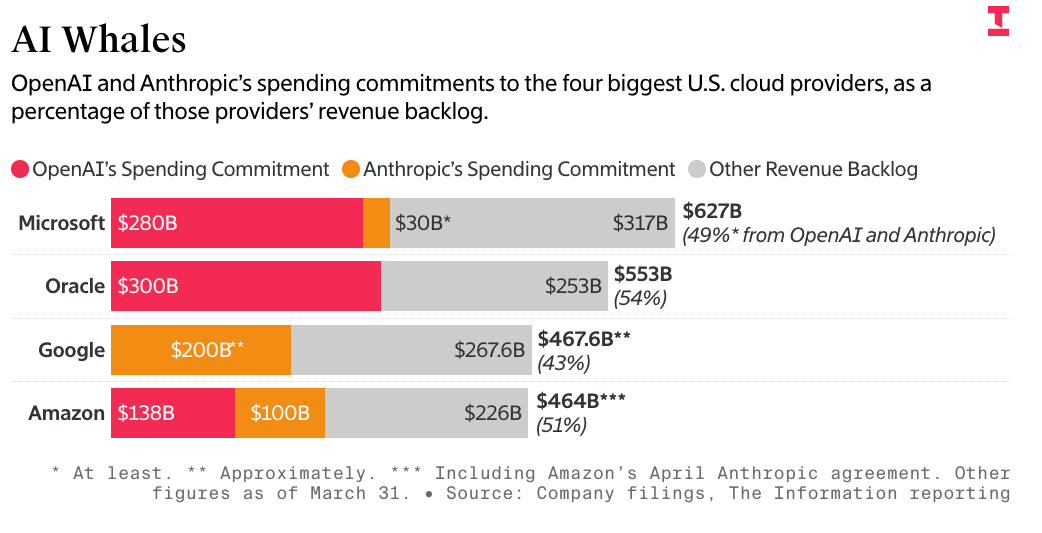

Anthropic and OpenAI have now committed to spending $748 billion across Amazon Web Services, Google Cloud, and Microsoft Azure, accounting for more than 50% of their remaining performance obligations. The very future of hyperscaler revenue depends both on Anthropic and OpenAI’s continued ability to pay and both of them having something to actually pay for.

I also think it’s fair to ask why Microsoft’s theoretical gigawatts of new compute aren’t producing tens of billions of dollars of new revenue.

Microsoft’s $37 billion in annualized AI run rate (sigh) is mostly taken up by OpenAI’s voracious demands for its compute. It only ever seems to expand based on OpenAI’s compute demands and the now 20 million lost souls paying for Microsoft 365 Copilot. There’s supposedly incredible, unstoppable demand for AI compute, and Microsoft is apparently sitting on gigawatts’ worth, but somehow those gigawatts don’t seem to be translating into gigabillions, likely because they don’t fucking exist.

All of this makes me wonder what Google infrastructure head Amin Vahdat meant last November when he said that Google needed to double its capacity every six months to meet demand. Many took this to mean “Google is doubling its capacity every six months,” but I think it’s far more likely that Google is taking on capacity requests from Anthropic that are making said capacity demands necessary. Similarly, I think CEO Sundar Pichai’s comment that it would have made more money had it had more capacity to sell was a manifestation of a distinct lack of new capacity rather than a result of bringing on swaths of new data centers that immediately got filled.

I also need to be blunt on two things:

Look, I know it sounds crazy, but I’m telling you: I don’t think very many data centers are coming online! While I keep wanting to hedge my bets and say “I bet a few gigawatts came online,” I cannot actually find any compelling literature that backs up that statement. I’ve spent hours and hours looking, and I’ve come up with a few hundred megawatts delivered in the past two years. Every major project is stuck in the mud, a phase or two in, or facing mounting opposition from locals that don’t want a Godzilla-sized cube making a constant screaming sound 24/7 so that somebody can generate increasingly-bustier Garfields.

I’m not even being a hater! It’s just genuinely difficult to find actual data centers that have been announced that have also been fully turned on.

So, humour me for a second: if hyperscalers are only bringing on hundreds of megawatts of capacity a year, then the ever-growing quarterly chunks of depreciation ripped out of their net income are just a taste of what’s to come.

Last quarter, Google’s depreciation jumped $400 million to $6.482 billion, with Microsoft’s jumping nearly a billion dollars from $9.198 billion to $10.167 billion, and Meta’s from $5.41 billion to $5.99 billion. While Amazon’s technically dropped quarter-over-quarter, it still sat at an astonishing $18.94 billion.

Remember: depreciation only increases when an item is actually put into service. If Microsoft, Google, Amazon and Meta are sitting on tens of billions of dollars’ worth of yet-to-be-installed GPUs, and said GPUs are only being installed at a snail’s pace every quarter, then these depreciation figures are set to grow dramatically. In fact, year-over-year, Google’s depreciation has jumped 30.7%, Amazon’s 24.7%, Microsoft’s 23.9%, and Meta’s an astonishing 34.9%.

And that’s with an extremely slow pace of deployment.
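The quarterly jumps are easier to compare as percentages. Here’s a minimal Python sketch using only the figures quoted above. Google’s prior quarter is back-calculated from the $400 million jump, so treat it as approximate. Amazon is left out because no prior-quarter figure is given here. The sketch doesn’t reproduce the year-over-year percentages, which need prior-year figures this section doesn’t list.

```python
def qoq_growth_pct(prior: float, latest: float) -> float:
    """Quarter-over-quarter growth as a percentage."""
    return (latest - prior) / prior * 100

# Depreciation in billions of dollars: (prior quarter, latest quarter), as quoted.
# Google's prior quarter is an approximation: $6.482bn minus the $400 million jump.
depreciation = {
    "Google": (6.482 - 0.4, 6.482),
    "Microsoft": (9.198, 10.167),
    "Meta": (5.41, 5.99),
}

for company, (prior, latest) in depreciation.items():
    print(f"{company}: +${latest - prior:.3f}bn ({qoq_growth_pct(prior, latest):.1f}% QoQ)")
```

That works out to roughly 6.6% for Google and about 10.5% and 10.7% for Microsoft and Meta, in a single quarter.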

Sidenote: This also really makes me doubt that Microsoft has been bringing online a gigawatt of GPU capacity for two quarters straight. A gigawatt of GPU capacity would be about $2 billion a quarter or more in depreciation. A $400 million bump in quarterly depreciation works out to about $9.6bn of gear ($400 million times 24 quarters, or six years). At about $50,000 per B200 GPU, that’s around 192,000 GPUs, which at 1200W each comes to around 230.4MW.

Hell, someone could probably sit down and work out their potential capacity based on depreciation alone.
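Taking up that invitation, here’s a minimal Python sketch of the sidenote’s arithmetic, run in both directions. Every input is an assumption carried over from the sidenote rather than a reported figure: straight-line depreciation over six years (24 quarters), about $50,000 per B200, and about 1200W per GPU. It ignores buildings, networking, and every other non-GPU asset. The function names are hypothetical.

```python
# Assumptions carried over from the sidenote (not reported figures).
QUARTERS = 24            # six-year straight-line useful life
PRICE_PER_GPU = 50_000   # dollars per B200, assumed
WATTS_PER_GPU = 1200     # assumed draw per GPU

def capacity_from_depreciation(quarterly_bump: float) -> tuple[float, float, float]:
    """Return (implied capex in $, implied GPU count, implied GPU power in MW)."""
    capex = quarterly_bump * QUARTERS
    gpus = capex / PRICE_PER_GPU
    megawatts = gpus * WATTS_PER_GPU / 1e6
    return capex, gpus, megawatts

def quarterly_depreciation_for(megawatts: float) -> float:
    """Inverse: quarterly depreciation ($) implied by a given amount of GPU power."""
    gpus = megawatts * 1e6 / WATTS_PER_GPU
    return gpus * PRICE_PER_GPU / QUARTERS

capex, gpus, mw = capacity_from_depreciation(400e6)
print(f"${capex / 1e9:.1f}bn -> {gpus:,.0f} GPUs -> {mw:.1f}MW")
print(f"1GW of GPUs -> ${quarterly_depreciation_for(1000) / 1e9:.2f}bn per quarter")
```

Run forward, a $400 million quarterly bump comes out to $9.6bn, 192,000 GPUs, and 230.4MW. Run backward, a gigawatt of GPUs alone implies roughly $1.74bn a quarter. That sits just under the sidenote’s “about $2 billion a quarter or more” before any non-GPU equipment is counted.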

I do kind of see why the hyperscalers are sinking capex into these big AI infrastructure gigaprojects now, though. Shareholders are currently tolerating the capex because they think stuff is coming online, and that’s where the “incredible value” is. When a $20 billion or $30 billion a quarter depreciation bill first rears its head — as I said, Amazon is close, reporting $18.945bn in depreciation and amortization expenses in the most recent quarter — it’ll become obvious that the only people seeing value from AI are Jensen Huang and one of the massive construction firms slowly building these projects.

Actually, it’s probably important to state that I don’t think the majority of these projects are doing anything untoward. I just don’t think any of them realized how difficult it is to build a data center. Unlike basically any other problem the tech industry has ever faced, simply throwing as much money as possible at it doesn’t really change the limits of physical construction.

I think every one of these data center projects is its own individual construction nightmare, and thanks to the general market psychosis around the AI bubble, nobody has thought to question the core assumption that these things are actually getting built.

With all that being said, I’m not sure that anyone building these things is moving with much urgency either. Perhaps they don’t need to. Perhaps hyperscalers are happy, because they can both string out the AI narrative and put off those massive blobs of depreciation.

But we really do need to reckon with the fact that nearly two years in, Stargate Abilene has only two buildings’ worth of actual, operational, revenue-generating capacity. Nobody has given me an answer as to why it doesn’t have even a quarter of the 1.7GW of power it’ll need to turn everything on, if it ever gets fully built.

Maybe they can really pick up the pace, but as of early April, barely any actual gear was in the third building.

And then we get to the other problem: Oracle.

As I’ve discussed before, Oracle is building 7.1GW of total capacity for OpenAI, and keeps — laughably! — saying 2027 or 2028, when at this rate, Stargate Abilene won’t be done until mid-2027, and the rest either never get finished or are done in 2030 or later.

This is setting up a horrifying situation where Oracle desperately needs OpenAI to pay it for capacity that doesn’t exist, and if that capacity ever gets built, it’s likely to be years after OpenAI has run out of money. That’s the same problem Microsoft, Google, and Amazon have with their $748 billion of deals with Anthropic and OpenAI. But thanks to the $340 billion or more necessary to build the Stargate data centers, Oracle’s problems are far more existential.

I’ve repeatedly — and correctly! — said that the problem is that these companies didn’t have the money to pay for their capacity, but Oracle lacks Microsoft or Google’s existing profitable businesses to fall back on if these data centers are delayed, with its existing business lines plateauing and its only real growth coming from theoretical deals with OpenAI and GPU compute with negative 100% margins.

Anthropic’s desperation for new sources of compute also suggests that it’s bonking its head against the limits of its capacity, and will continue to do so as long as it continues to subsidize its users. I also think that the slow pace of construction will eventually lead to OpenAI facing similar problems.

These companies need to continue growing to continue to raise the hundreds of billions of dollars in funding necessary to pay Oracle, Google, Microsoft, and Amazon their respective pounds of flesh.

It’s now very clear that the whole “inference is profitable” and “most compute is being used for training” myths are dead, because if they weren’t, Anthropic would either need way more compute or way higher-quality compute. Colossus-1 was specifically built as a training cluster, yet its current use is “reduce rate limits for our subsidized AI subscriptions,” which is most decidedly inference provided by three-year-old hardware.

Despite writing over 9000 words and driving myself slightly insane trying to find out, I still haven’t got an answer as to how much actual data center capacity has come online. Hyperscalers have clearly been retrofitting old data centers to fit their new chips, and based on my research, I can find no compelling evidence that they’ve added more than a few hundred megawatts apiece since 2023.

What I do know is that, across the board, a data center of anything above 50MW (or lower, in some cases) takes anywhere from 18 to 36 months to complete, and nobody has actually built a gigawatt data center despite how many people discuss them.
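The 18-to-36-month rule of thumb is easy to apply to any groundbreaking date. Here’s a minimal Python sketch; the helper names are hypothetical. The text gives only a month for most groundbreakings, so the day of the month is a placeholder. The helper clamps the day to 28 so every shifted date is valid.

```python
from datetime import date

def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to 28 so the result is valid."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, min(d.day, 28))

def completion_window(groundbreaking: date, low_months: int = 18,
                      high_months: int = 36) -> tuple[date, date]:
    """Earliest and latest plausible completion under an 18-36 month rule of thumb."""
    return add_months(groundbreaking, low_months), add_months(groundbreaking, high_months)

# Example: a September 2024 groundbreaking (day of month is a placeholder).
early, late = completion_window(date(2024, 9, 1))
print(early, late)  # 2026-03-01 2027-09-01
```

For the Quebec City site (September 2024 groundbreaking), that puts completion somewhere between March 2026 and September 2027.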

For example, Kevin O’Leary — known as “Mr. Dogshit” to his friends — is allegedly building a 9GW data center in Utah, but he may as well say that he’s building a unicorn that shits Toyota Tacomas, as doing so is far more realistic than a project that will likely cost $396 billion, assuming that locals and bankers don’t drag him to The Other Side like Dr. Facilier.

Nobody has built a 1GW data center, so I severely doubt Mr. Dogshit will be able to do anything other than create another scandal and lose a bunch of people’s money.

In other words, any time you hear about a “new data center project,” add a year or two to whatever projection they give. If it’s 2027, assume 2029, or that it never gets built. Anything being discussed as “finished in 2030” may as well not exist.

Sidenote: In general, the only projects that take anything less than a year are tiny — a megawatt max — other than Elon Musk’s Colossus-1, a Frankenstein’s monster of GPUs that vary between 1 and 3 years old.

While Musk claims it took 122 days to build, that was A) only the first 100,000 H100 GPUs and B) only possible because they used an old powered shell from an Electrolux factory and thirty horrible, inefficient methane gas turbines. It cost way, way too much, and was obviously such a liability that Musk flogged it the second he could.

In any case, what I’m suggesting is that very, very few data centers are actually getting finished, and if that’s true, NVIDIA has sold years’ worth of chips that are yet to be digested.

And if that’s true, somebody is sitting on piles of them.

I’m trying to be fair, so I’ll assume that an unknown number of data centers got retrofitted to fit Blackwell GPUs. But I also refuse to believe that even half of the three million Blackwell GPUs that got shipped have actually been installed. Where would they go? You can’t use the same racks for them that you would with an H100 or H200, because Blackwell requires so much god damn cooling.

Another sign that these things aren’t actually getting installed is Supermicro’s $1.4 billion or so of B200 GPUs left in inventory from a canceled order from Oracle.

Why not? Isn’t this meant to be a chip that’s extremely valuable? Isn’t there infinite demand? Is there not a place to put them? Apparently Oracle wanted to use faster GB200 GPUs from Dell, but why aren’t there other customers lining up to buy these things?

Also…how was Oracle able to cancel an order of over a billion dollars’ worth of GPUs?

Can anybody do that? Because if they can, one has to wonder if this doesn’t start happening as people realize these data centers aren’t getting built.

Pick a data center. It’s probably barely under construction, or if it’s “finished” it’s actually “partly done” with no real guide as to when the rest will finish.

Remember that $17 billion deal Microsoft and Nebius signed? The one that’s a key reason why Nebius’ stock is on a tear? Well, its existence depends on the continued construction of a data center out in Vineland, New Jersey that faces massive local opposition, and multiple sources now confirm that construction has been halted due to local planning issues. The data center is horribly behind schedule already, and Microsoft has the option to cancel its entire contract if Nebius fails to meet milestones.

That data center is a major reason that people value Nebius’ stock! It cannot make a dollar of revenue without its existence! It has the funds and blessing of Redmond’s finest — the Mandate of Heaven! — and it can’t get things done! This is bad, and indicative of a larger problem in the industry — that it’s really difficult to build data centers, and for the most part, they’re not being fully built!

You’ve heard plenty about data centers getting opposed and canceled — how about ones that fully opened? No, really, if you’ve heard about them please get in touch, because it’s really difficult to find them.

Why don’t we know? This is apparently the single most important technology movement since whatever the last justification somebody made up was, so shouldn’t we have a tangible grasp of it? The way I see it, if these things aren’t coming online at the rate that people think, we have to start asking NVIDIA for fundamental clarity about where the GPUs are, and when they’re coming online.

NVIDIA’s continually growing valuation is based on the conceit that there is always more demand for GPUs, and perhaps that’s true. But if this demand is based on functionally selling chips two years in advance, that makes NVIDIA’s yearly upgrade cadence utterly deranged. Buy today’s GPUs! They’re the best, for now, at least. By the time you plug them in they’re gonna be old and nasty. But don’t worry, it’ll take two years for you to install the next one too!

To be clear, Blackwell GPUs are absolutely being installed! But three million of them?

People love to use “enough to power two cities” to illustrate these points, but I actually think it’s better to illustrate in real data center terms.

Stargate Abilene has taken two years to build two buildings of around 103MW of critical IT load. 3 million B200 GPUs works out to about 3.6GW of IT load. Do you really think that nearly thirty-five Stargate Abilene-scale buildings were built in 2025? If so, where are they, exactly?
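The comparison is a single division. Here it is as a minimal Python sketch using the text’s own numbers: about 3.6GW of Blackwell IT load against the roughly 103MW of critical IT load cited for Stargate Abilene.

```python
# Figures from the text: ~3.6GW of Blackwell IT load, ~103MW Abilene benchmark.
BLACKWELL_IT_LOAD_MW = 3_600
ABILENE_UNIT_MW = 103

units = BLACKWELL_IT_LOAD_MW / ABILENE_UNIT_MW
print(f"{units:.2f} Abilene-sized units")  # 34.95 Abilene-sized units
```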

You may argue that other data centers are smaller, and thus easier to build. So why can’t I find any examples of where they’ve done so?

By all means prove me wrong! It’s so easy! Just show me a data center announced or that broke ground in 2023 and find obvious proof it turned on. I’ll even give you credit if it’s partially open!

The problem is that I keep finding examples of “partially complete” and those are the only examples of “finished” data centers.

Isn’t this a little insane? This is all we’ve heard about for years, everybody is ACTING like these things exist at a scale that I’m not sure is actually true!

I expect a fair amount of huffing and “well of course they’re coming online” from the peanut gallery, but come on guys, isn’t this all kind of weird? Even if you want to marry Sandisk and name your children “Western” and “Digital,” why can’t you say with your whole chest several data centers that got finished? We have macro level “proof” but when you try and look at even a shred of the micro you find a bunch of guys with their hands on their hips saying “sorry mate that’ll be another $4 million.”

Something doesn’t line up, and it’s exactly the kind of misalignment that happens in a bubble — when infrastructural reality disconnects from the financials. NVIDIA is making hundreds of billions of dollars and it’s unclear how much of it is from GPUs installed in operational data centers. It feels like Jensen Huang might have run the largest preorder campaign of all time.

This has massive downstream consequences. Sandisk, Samsung, SK Hynix, Broadcom, AMD, Microsoft, Google, Oracle, and Amazon’s remaining performance obligations total [find] and are dependent on being *able* to sell gigawatts worth of computing gear or compute access. If data centers are not getting built in anything approaching a reasonable timeline, that makes the future of these companies only as viable as the construction projects themselves. Even if you truly believe Anthropic will be a $2 trillion company and a $200 billion customer of Google, the compute capacity has to exist to be bought, and it does not appear to be built or, in many cases, anywhere further than the earliest stages of construction.

If they don’t get built in the next few years, there’s no space for that solid state storage or those Instinct GPUs. There’s no reason for NVIDIA to have reserved most of TSMC’s capacity, either.

There’s also no reason to get excited about Bloom Energy, as it won’t make real revenue from its Oracle business until Oracle finishes its data centers, sometime between the next two years and never.

And if they don’t get built, hundreds of billions of dollars have been wasted, with large swaths of those billions funded by private credit, which in turn is funded by pensions, retirements and insurance funds.

I’ve got a bad feeling about this.

2026-05-08 22:40:45

In this week’s free newsletter, I explained how bad the circular AI economy is in the simplest-possible terms:

Anthropic not have money to pay big cloud bills, because Anthropic company cost lots of money, more money than Anthropic make! So Anthropic only PAY cloud bills if OTHERS give it money! Amazon GIVE MONEY to Anthropic to GIVE BACK TO AMAZON, which mean no profit! And Amazon not give Anthropic enough money to pay it, so Anthropic have to ask OTHERS for money! That BAD! It mean BUSINESS not STABLE, and CLIENT not STABLE.

This bad when client MOST OF AI MONEY!

This ALSO mean that Anthropic RELIANT on OTHERS to pay AMAZON, which make AMAZON dependent on VENTURE CAPITAL for FUTURE REVENUE! Amazon SAY it have BIG BUSINESS, but BIG BUSINESS dependent on ANTHROPIC, which mean BIG BUSINESS dependent on VENTURE CAPITAL!

This SAME for GOOGLE! Both say they have BIG CLIENT, but BIG CLIENT MONEY not supported by REVENUE, so BIG CLIENT actually mean “HOW MUCH VENTURE CAPITAL MONEY ANTHROPIC HAVE.”

This bad business!

Sidenote: Me know you say “ANTHROPIC STOCK WORTH BIG MONEY,” but me need you remember how much capex Amazon and Google spend! Even if Anthropic stake worth $200 Billion, Amazon and Google still spend MANY more dollar than that on capex! And stake so BIG that neither able to SELL ALL. Only make gain on PAPER, which not REAL MONEY!

In other words, the entire AI economy effectively comes down to Anthropic and OpenAI, who take up at least 70% of Amazon’s, Google’s, and Microsoft’s compute capacity, 70% to 80% of their AI revenues and 50% of their entire revenue backlog, per The Information:

That’s $748 billion of the entire revenue backlog — not just AI compute — that’s dependent on Anthropic and OpenAI, two companies that cannot afford to pay these bills without constant venture capital infusions from either investors or the hyperscalers themselves.
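To make the scale concrete, here’s the back-of-envelope math implied by that “50%” figure. It’s a rough sketch that treats the 50% share as exact, which it almost certainly isn’t; the point is only the order of magnitude.

```python
# Back-of-envelope: if $748B is roughly half of the combined hyperscaler
# backlog, the implied total is about twice that.
# Assumption: the 50% share is treated as exact for illustration only.
openai_anthropic_backlog_bn = 748
share_of_total = 0.50

implied_total_backlog_bn = openai_anthropic_backlog_bn / share_of_total
everyone_else_bn = implied_total_backlog_bn - openai_anthropic_backlog_bn

print(f"Implied total backlog: ${implied_total_backlog_bn:,.0f}B")
print(f"Backlog from every other customer combined: ${everyone_else_bn:,.0f}B")
```

In other words, two cash-burning companies account for as much contracted future revenue as every other customer of Amazon, Google, and Microsoft put together.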

This is a big problem, because Anthropic seems to be losing so much money that it had to raise $10 billion from Google, $5 billion from Amazon, and is reportedly trying to raise another $50 billion from investors, less than three months after it raised $30 billion on February 12, 2026, which was five months after it raised $13 billion in September 2025. That’s $58 billion in eight months, with the potential to reach $108 billion.
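For anyone who wants to check the tally, here it is using only the funding events listed above. The $50 billion is a reported target, not a closed round, so it’s kept separate.

```python
# Anthropic funding events cited above, in billions of US dollars.
raised_bn = {
    "September 2025 round": 13,
    "February 12, 2026 round": 30,
    "Google": 10,
    "Amazon": 5,
}
reported_target_bn = 50  # reportedly being raised, not yet closed

closed_total_bn = sum(raised_bn.values())
potential_total_bn = closed_total_bn + reported_target_bn

print(f"Raised in ~8 months: ${closed_total_bn}B")
print(f"If the reported round closes: ${potential_total_bn}B")
```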

Now Anthropic is taking over all 300MW of SpaceX/xAI/Elon Musk’s Colossus-1 data center, which will likely cost somewhere in the region of $2.5 billion to $3.5 billion a year, given that most of the data center is made up of H100 and H200 GPUs (with around 30,000 GB200 GPUs).
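Breaking that estimate down per megawatt gives a rough sense of what Anthropic is paying for. This is a simple division of the estimated annual cost by the 300MW headline figure; it assumes the full 300MW is usable IT capacity, which is a simplification.

```python
# Estimated annual cost of Colossus-1 to Anthropic, spread over its capacity.
# Assumption: the full 300MW headline figure is usable capacity.
capacity_mw = 300
annual_cost_low_bn, annual_cost_high_bn = 2.5, 3.5

low_per_mw_m = annual_cost_low_bn * 1000 / capacity_mw
high_per_mw_m = annual_cost_high_bn * 1000 / capacity_mw

print(f"~${low_per_mw_m:.1f}M to ~${high_per_mw_m:.1f}M per MW per year")
```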

I also don’t think people realize how bad a sign this is for the larger AI economy.

Musk built the 300MW Colossus-1 to be “the most powerful AI training system in the world,” specifically saying that it was built “for training Grok,” with inference handled through Oracle (which originally earmarked Abilene for Musk but didn’t move fast enough for him) and other cloud providers. xAI, one of the largest AI labs outside of the big two, had so little need for AI capacity that it was able to hand off the entirety of its self-built data center capacity to Anthropic.

If xAI doesn’t need 300MW of compute capacity that it spent at least $4 billion to build, who, exactly, are the other large customers for AI compute? I’m not even being facetious. I truly don’t know, I can’t find them, I spent most of last week looking for them, and the only answer I had a week ago was “Elon Musk buying a lot of compute for xAI to make the freaks on the Grok Subreddit able to generate pornography.”

xAI is also the only non-OpenAI/Anthropic AI lab that’s built its own capacity, capacity it clearly didn’t need, which raises the question of why Musk needs however much capacity he’ll build at Colossus-2. Musk claims that xAI has moved all training to Colossus-2, but also that xAI would “provide compute to AI companies that are taking the right steps to ensure it is good for humanity.” This apparently includes Anthropic, which Musk called “misanthropic and evil” a little over two months ago. Researchers believe that the actual capacity of Colossus-2 is 350MW.

At $2.5bn a year or so, Anthropic will effectively be the entirety of xAI’s revenue, which was around $107 million in the third quarter of 2025.
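Here’s how lopsided that is, assuming (crudely) that xAI’s Q3 2025 revenue held flat for a full year. The annualization is my own simplifying assumption, not a reported figure.

```python
# Compare the low end of the Colossus-1 estimate with xAI's reported revenue.
# Assumption: annualize by multiplying Q3 2025 revenue by four (flat
# quarters). This is a crude estimate, not a reported annual number.
xai_q3_2025_revenue_m = 107
naive_annual_revenue_m = xai_q3_2025_revenue_m * 4

anthropic_low_estimate_m = 2_500  # low end of the $2.5B-$3.5B range above
multiple = anthropic_low_estimate_m / naive_annual_revenue_m

print(f"Naive annualized xAI revenue: ${naive_annual_revenue_m}M")
print(f"Anthropic deal at the low end: ~{multiple:.1f}x that")
```

Even at the bottom of the range, one customer would be paying several times what xAI’s entire business was generating.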

To put this very, very simply: xAI should, in theory, have massive demand for AI compute, but its demand is apparently so small that it can flog a multi-billion-dollar data center to a competitor.

Sightline Climate found that 15.2GW of capacity is under construction and due to be completed by the end of 2027, and at this point I’m not sure anybody can make a compelling argument as to why it’s being built or who it’s for.

Who needs it? Who are the customers? Who is buying AI compute at such a scale that it would warrant so much construction? Where is the demand coming from if it’s not OpenAI and Anthropic?

These questions shouldn’t be that hard to answer, but trust me, I’ve tried and cannot find a GPU compute customer larger than $100 million a year, and honestly, that customer was xAI.

Through many hours of research, I’ve found that the vast majority — as much as 95% — of all compute demand comes from a few places:

Otherwise, every data center deal you’ve ever read about is for a theoretical future customer or an unnamed “anchor tenant” that gives them “guaranteed, pre-committed occupancy” without being identified in any way.

Yet even that “pre-committed” language seems to be something of a myth. I’ve traced it back to a report from real estate firm JLL, which says that 92% of capacity currently under construction is precommitted through binding lease agreements or owner-occupied development. CBRE said it was 74.3% for the first half of 2025, and Cushman & Wakefield said it was 89%, though it also said that there was 25.3GW of capacity under construction, while Sightline sees 19.8GW under construction through the end of 2030.

And man, I cannot express how fucking difficult it is to find actual data center customers outside of the ones I’ve named above. In fact, it’s pretty difficult to find any customers for GPU compute not named Anthropic, OpenAI, Microsoft, Google, Meta or Amazon.

Outside of OpenAI and Anthropic, effectively no AI software company makes more than a few hundred million dollars a year, and to make that money, they have to spend it on tokens generated by models run by one of those two companies.

To generate those tokens, OpenAI and Anthropic then send that money on to one of a few infrastructure providers (I’ll get to the breakdown shortly) to rent GPUs.

As I’ve discussed this week, at least 75% of Microsoft, Google and Amazon’s AI revenues come from OpenAI or Anthropic, and that’s before you count the money that Microsoft, Google and Amazon make reselling models from both companies.

To get specific, The Information reports that Anthropic will pay around $1.6 billion to Amazon for reselling its models. OpenAI, per my own reporting, sent Microsoft $659 million as part of its revenue share.

AI startups, all of whom are terribly unprofitable, predominantly spend their funding on models sold by OpenAI and Anthropic. Per Newcomer, as of August last year, Cursor was spending 100% of its revenue on Anthropic. Harvey, an AI tool for lawyers, raised $960 million between February 2025 and March 2026, with most of its costs flowing to Anthropic and OpenAI.

Effectively every AI startup is a feeder for API revenue for Anthropic or OpenAI, and as a result, almost every dollar of AI revenue flows to either Google, Microsoft or Amazon.

As Anthropic and OpenAI are extremely unprofitable, Google, Microsoft and Amazon then take that money and re-invest it in OpenAI and Anthropic, as all three have done in the past few years.

At the beginning of the bubble, all three companies believed that OpenAI and Anthropic were golden geese that were, through the startups they inspired and powered, laying golden eggs that necessitated expanding their operations, leading them to spunk hundreds of billions of dollars in capex, with Amazon building the massive Project Rainier in Indiana for Anthropic, and Microsoft building its Atlanta- and Wisconsin-based Fairwater data centers for OpenAI.

They likely also thought their own services would grow fast enough to warrant the expansion, or that other large GPU consumers would rear their heads.

That never happened. Instead, OpenAI grew bigger and more demanding of Microsoft’s compute capacity, leading Microsoft to allow it to seek other partners, in part (per The Information) because some executives believed OpenAI would die:

After striking the blockbuster deal in 2023, several top Microsoft executives told colleagues around that time that they thought OpenAI’s business would eventually fail, even if its technology was good, according to a former manager who discussed it with them.

By November 2025, OpenAI had signed a $300 billion deal with Oracle, a $22 billion deal with CoreWeave, a $38 billion deal with Amazon, and theoretical deals with both AMD and NVIDIA.

Yet by this point, Microsoft realized it was in a bind: the majority of its AI revenues (at least 70%, if not more) were dependent on OpenAI, but it had already walked away from 2GW of data center capacity to reduce its capex costs. As part of OpenAI’s conversion to a for-profit company, it had also convinced OpenAI to purchase an incremental $250 billion of Azure services.

So Microsoft chose to start spreading out that capacity to neoclouds like Nebius and Nscale, effectively bankrolling their entire futures based on theoretical revenue from OpenAI, a company that plans to burn $852 billion in the next four years and cannot afford to pay any of its bills without continual subsidies. These companies were now part of a multi-threaded dependency that ultimately ended up at one place: OpenAI, which also makes up the vast majority of inference chip maker Cerebras’ revenue with its 3-year, $20 billion deal.

Meanwhile, Amazon and Google thought they had it made. Anthropic was growing, and its compute demands were reasonable enough that neither had to stretch themselves too thin…until the second quarter of 2025, when Anthropic’s accelerated growth led to it starting to push against the limits of Google and Amazon’s capacity.

So Google agreed to backstop several billion dollars behind two deals with Fluidstack, a brand new AI compute company, and Amazon continued expanding its Project Rainier data center.

Yet Anthropic’s hunger wasn’t sated. After mocking OpenAI in February 2026 for “YOLOing” into compute deals (and having signed a cloud deal with Microsoft), it massively expanded its AWS and Google Cloud deals, signed a deal with CoreWeave, and as I discussed above, took over the entirety of Musk’s Colossus-1 data center.

And all of this is only happening because, based on my analysis, very little actual demand for AI compute exists outside of OpenAI and Anthropic, and OpenAI and Anthropic only exist because of Microsoft, Google, and Amazon both building and expanding their infrastructure to cater to them.

In reality, OpenAI and Anthropic are the only meaningful companies in the AI industry. They are the majority of revenue, the majority of capacity and the majority of demand. Microsoft, Google and Amazon have exploited the desperation in a tech industry that’s run out of hypergrowth ideas, and created a near-imaginary industry by propping up both companies.

The mistake that most make in measuring the circularity of OpenAI and Anthropic is to focus entirely on the money raised — $13 billion from Microsoft and up to $50 billion from Amazon for OpenAI, and as much as $80 billion from Amazon and Google for Anthropic.

The correct analysis starts with measuring infrastructure. Based on discussions with sources and analysis of multiple years of reporting, I estimate that of the roughly $700 billion in capex spent by Google, Meta and Microsoft since 2023, at least 5.5GW of capacity costing at least $300 billion has been built entirely for two companies. This has in turn inflated sales through multiple counterparties involving NVIDIA, ODMs like Quanta, Foxconn, Supermicro and Dell, and created a form of market-driven AI psychosis that inspired Meta to burn over $158 billion in three years and the entire world to convince itself that AI was the biggest thing ever.
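Putting those estimates side by side shows what they imply. This takes both “at least” figures at face value, so the share of capex is a floor, while the per-gigawatt number is only a rough average and not a bound in either direction.

```python
# Share of capex and cost per GW implied by the estimates above.
# Assumption: both "at least" figures are used as point values.
total_capex_bn = 700        # roughly, since 2023
dedicated_capex_bn = 300    # at least, built for OpenAI and Anthropic
dedicated_capacity_gw = 5.5 # at least

share_of_capex = dedicated_capex_bn / total_capex_bn
cost_per_gw_bn = dedicated_capex_bn / dedicated_capacity_gw

print(f"At least {share_of_capex:.0%} of capex went to two customers")
print(f"~${cost_per_gw_bn:.1f}B of capex per GW of capacity")
```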

The reason that there isn’t another OpenAI or Anthropic is that Google, Microsoft, and Amazon bankrolled their entire infrastructure, fed them billions of dollars, and then charged them discount rates for their early compute, with sources telling me that Anthropic pays vastly below-market rates for Trainium compute from Amazon, and The Information reporting that OpenAI was paying $1.30 per A100 per hour in 2024, at or around the cost of running them.

By sacrificing their entire infrastructure to OpenAI and Anthropic, the hyperscalers created the illusion of demand by feeding themselves money, all while buying endless GPUs and TPUs to fill further data centers for two customers, both of whom paid discount rates that lost the hyperscalers money.

This capex bacchanalia gave all three companies a massive boost to their stock prices, so they kept going, even though there wasn’t really demand other than for Anthropic or OpenAI, two companies that they had to constantly cater to with investment capital and server maintenance.

The belief became that all you had to do was plan to build a data center and you’d print money, boosting NVIDIA’s sales and associated counterparties in memory stocks like Sandisk. Except that never happened.

Every data center provider that doesn’t have an Anthropic-, OpenAI-, or Meta-related contract makes pathetic amounts of revenue that can barely keep up with its debt. AI startups make meager revenues, and lose many times more than they can ever hope to make.

The entire AI industry relies upon two companies that expect to burn at least $1 trillion in the next four years, with Anthropic, the supposed “compute-conscious” AI company, committing to at least $330 billion in spend in the next few years.

Where does that money come from, exactly? Because neither of these companies has anything approaching a path to profitability.

Based on a deep analysis of every publicly available source on AI compute, I can find only two significant purchasers of AI compute (over $100 million a year) outside of Anthropic, OpenAI, Meta, or associated parties like NVIDIA, Microsoft, Google and Amazon. Those two are Poolside, which reportedly spends $400 million a year, an untenable position as it had raised only $500 million in total before a planned $2 billion funding round collapsed earlier this year, and Perplexity, which appears to spend some amount of money with CoreWeave and Microsoft Azure. Both run at a massive loss.
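To see why Poolside’s position is untenable, here’s the naive runway math. It rests on three generous, hypothetical assumptions: all $500 million is still unspent, compute is its only cost, and it makes no revenue. Real runway depends on all three, and the first two make this an upper bound on how long compute spend alone could be covered.

```python
# Naive runway for Poolside using the figures above (US$ millions).
# Hypothetical assumptions: the full $500M is unspent cash, compute is the
# only cost, and revenue is zero.
total_raised_m = 500
annual_compute_spend_m = 400

runway_years = total_raised_m / annual_compute_spend_m
runway_months = runway_years * 12

print(f"~{runway_years:.2f} years (~{runway_months:.0f} months) of compute spend")
```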