2026-05-12 20:20:20

If you look very closely at my previous post, you’ll notice that I initialize a 128-bit integer with a 64-bit value. The 128-bit unsigned integer represents the internal state of a random number generator. Why not initialize it to a 128-bit value? I was trying to keep the code simple.

A surprising feature of C compilers, at least of GCC and Clang, is that you cannot initialize a 128-bit integer to a 128-bit integer literal. You can’t directly print a 128-bit integer either, which is why the previous post introduces a function print_u128.

The code

__uint128_t x = 0x00112233445566778899aabbccddeeff;

Produces the following error message.

error: integer literal is too large to be represented in any integer type

The problem isn’t initializing a 128-bit number to a 128-bit value; the problem is that the compiler cannot parse the literal expression

0x00112233445566778899aabbccddeeff

One solution to the problem is to introduce the macro

#define U128(hi, lo) (((__uint128_t)(hi) << 64) | (lo))

and use it to initialize the variable.

__uint128_t x = U128(0x0011223344556677, 0x8899aabbccddeeff);

You can verify that x has the intended state by calling print_u128 from the previous post.

void print_u128(__uint128_t n)

{

printf("0x%016lx%016lx\n",

(uint64_t)(n >> 64), // upper 64 bits

(uint64_t)n); // lower 64 bits

}

Then

print_u128(x);

prints

0x00112233445566778899aabbccddeeff

Update. The code for print_u128 above compiles cleanly with gcc but clang gives the following warning.

warning: format specifies type 'unsigned long' but the argument has type 'uint64_t' (aka 'unsigned long long') [-Wformat]

You can suppress the warning by including the inttypes header and modifying the print_u128 function.

Here’s the final code. It compiles cleanly under gcc and clang.

#include <stdio.h>

#include <stdint.h>

#include <inttypes.h>

#define U128(hi, lo) (((__uint128_t)(hi) << 64) | (lo))

void print_u128(__uint128_t n)

{

printf("0x%016" PRIx64 "%016" PRIx64 "\n",

(uint64_t)(n >> 64),

(uint64_t)n);

}

int main(void)

{

__uint128_t x = U128(0x0011223344556677, 0x8899aabbccddeeff);

print_u128(x);

return 0;

}

The post Initialize and print 128-bit integers in C first appeared on John D. Cook.

2026-05-12 19:07:31

A couple days ago I wrote about hacking the Mersenne Twister. I explained how to recover the random number generator’s internal state from a stream of 640 outputs.

This post will do something similar with the lehmer64 random number generator. This generator is very simple to implement. Daniel Lemire found it to be “the fastest conventional random number generator that can pass Big Crush,” a well-respected test for pseudorandom number generators.

The lehmer64 generator can be implemented in C by

__uint128_t g_lehmer64_state;

uint64_t lehmer64() {

g_lehmer64_state *= 0xda942042e4dd58b5ULL;

return g_lehmer64_state >> 64;

}

The analogous code in Python would have to simulate the overflow behavior of a 128-bit integer by reducing the state mod 2128 after the multiplication.

Reverse engineering lehmer64 is easier than reverse engineering the Mersenne Twister because only three outputs are needed. However, the theory behind the exploit is more sophisticated. See [1].

The following code sets the state to an arbitrary initial seed value and generates three values.

#include <stdio.h>

#include <stdint.h>

int main(void)

{

g_lehmer64_state = 0x4789499d78770934; // seed

for (int i = 0; i < 3; i++) {

printf("0x%016lx\n", lehmer64());

}

return 0;

}

The code prints the following.

0x3d144d12822bcc2e 0x85a67226191a568d 0x53e803dffc88e8f8

Here is Python code for recovering the state of the lehmer64 generator given in [1].

def reconstruct(X):

a = 0xda942042e4dd58b5

r = round(2.64929081169728e-7 * X[0] + 3.51729342107376e-7 * X[1] + 3.89110109147656e-8 * X[2])

s = round(3.12752538137199e-7 * X[0] - 1.00664345453760e-7 * X[1] - 2.16685184476959e-7 * X[2])

t = round(3.54263598631140e-8 * X[0] - 2.05535734808162e-7 * X[1] + 2.73269247090513e-7 * X[2])

u = r * 1556524 + s * 2249380 + t * 1561981

v = r * 8429177212358078682 + s * 4111469003616164778 + t * 3562247178301810180

state = (a*u + v) % (2**128)

return state

Let’s call reconstruct with the output of our C code.

X = [0x3d144d12822bcc2e, 0x85a67226191a568d, 0x53e803dffc88e8f8] print( hex( reconstruct(X) ) )

This prints

0x3d144d12822bcc2e1b81101c593761c4

Now for the confusing part: at what point is the number above the state of the generator? Is it the state before or after generating the three values? Neither! It is the state after generating the first value.

We can verify this by modifying the C code as follows and rerunning it.

void print_u128(__uint128_t n)

{

printf("0x%016lx%016lx\n",

(uint64_t)(n >> 64), // upper 64 bits

(uint64_t)n); // lower 64 bits

}

int main(void)

{

g_lehmer64_state = 0x4789499d78770934; // seed

for (int i = 0; i

The main goal of [1] is to recover the state of the PCG generator, not the lehmer64 generator. The latter was a side quest. Recovering the state of PCG64 is much harder; the authors estimate it takes about 20,000 CPU-hours. The paper shows that a technique used as part of pursuing their main goal can quickly recover the lehmer64 state.

Related posts

[1] Charles Bouillaguet, Florette Martinez, and Julia Sauvage. Practical seed-recovery for the PCG Pseudo-Random Number Generator. IACR Transactions on Symmetric Cryptology. ISSN 2519-173X, Vol. 2020, No. 3, pp. 175–196.

The post Hacking the lehmer64 RNG first appeared on John D. Cook.2026-05-12 08:49:41

This morning I wrote a post about the probability that a random matrix over a finite field is invertible. If the field has q elements and the matrix has dimensions n × n then the probability is

In that post I made observation that p(q, n) converges very quickly as a function of n [1]. One way to see that the convergence is quick is to note that

and

John Baez pointed out in the comments that p(q, ∞) = φ(1/q) where φ is the Euler function.

Euler was extremely prolific, and many things are named after him. Several functions are known as Euler’s function, the most common being his totient function in number theory. The Euler function we’re interested in here is

for −1 < x < 1. Usually the argument of φ is denoted “q” but that would be confusing in our context because our q, the number of elements in a field, is the reciprocal of Euler’s q, i.e. x = 1/q.

Euler’s identity [2] (in this context, not to be confused with other Euler identities!) says

This function is easy to calculate because the series converges very quickly. From the alternating series theorem we have

When q = 2 and so x = 1/2, N = 6 is enough to compute φ(x) with an error less than 2−70, beyond the precision of a floating point number. When q is larger, even fewer terms are needed.

To illustrate this, we have the following Python script.

def phi(x, N):

s = 0

for n in range(-N, N+1):

s += (-1)**n * x**((3*n**2 - n)/2)

return s

print(phi(0.5, 6))

Every digit in the output is correct.

[1] I didn’t say that explicitly, but I pointed out that p(2, 8) was close to p(2, ∞).

[2] This identity is also known as the pentagonal number theorem because of its connection to pentagonal numbers.

The post Euler function first appeared on John D. Cook.2026-05-11 23:30:30

What is the inverse of shifting a sequence to the right? Shifting it to the left, obviously.

But wait a minute. Suppose you have a sequence of eight bits

abcdefgh

and you shift it to the right. You get

0abcdefg.

If you shift this sequence to the left you get

abcdefg0

You can’t recover the last element h because the right-shift destroyed information about h.

A left-shift doesn’t fully recover a right-shift, and yet surely left shift and right shift are in some sense inverses.

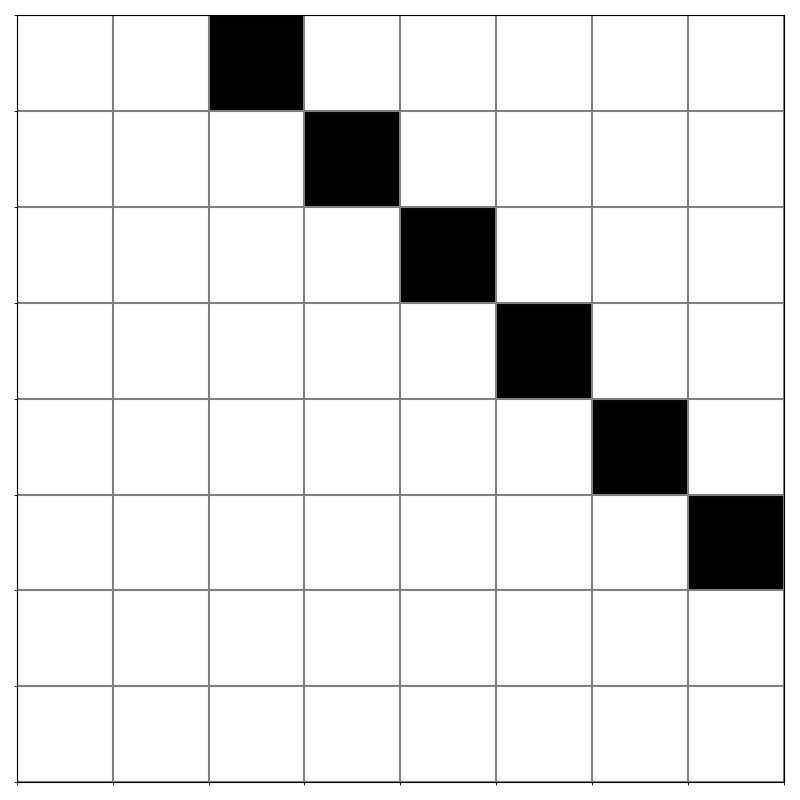

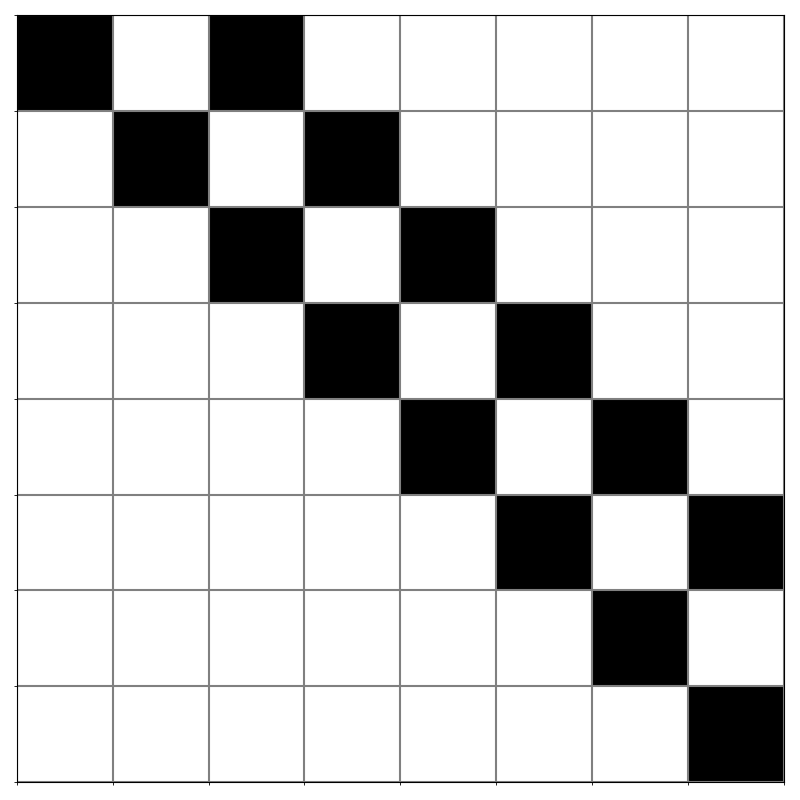

Yesterday I wrote a post about representing bit manipulations, including shifts, as matrix operators. The matrix corresponding to shifting right by k bits has 1s on the kth diagonal above the main diagonal and 0s everywhere else. For example, here is the matrix for shifting an 8-bit number right two bits. A black square represents a 1 and a white square represents a 0.

This matrix isn’t invertible. When you’d like to take the inverse of a non-invertible matrix, your kneejerk response should be to compute the pseudoinverse. (Technically the Moore-Penrose pseudoinverse. There are other pseudoinverses, but Moore-Penrose is the most common.)

As you might hope/expect, the pseudoinverse of a right-shift matrix is a left-shift matrix. In this case the pseudoinverse is simply the transpose, though of course that isn’t always the case.

If you’d like to prove that the pseudoinverse of a matrix that shifts right by k places is a matrix that shifts left by k places, you don’t have to compute the pseudo inverse per se: you can verify your guess. This post gives four requirements for a pseudoinverse. You can prove that left shift is the inverse of right shift by showing that it satisfies the four equations.

The post Inverse shift first appeared on John D. Cook.2026-05-11 21:25:13

The two latest posts have involved invertible matrices with 0 and 1 entries. If you fill an n × n matrix with 0s and 1s randomly, how likely is it to be invertible?

There are a couple ways to find the probability that a binary matrix is invertible, depending on what you mean by the inverse.

Suppose you have a matrix M filled with 0s and 1s and you’re looking for a matrix N such that MN is the identity matrix. Do you want the entries of N to also be 0s and 1s? And when you multiply the matrices, are you doing ordinary integer arithmetic or are you working mod 2?

In the previous posts we were working over GF(2), the field with two elements, 0 and 1. All the elements of a matrix are either 0 or 1, and arithmetic is carried out mod 2. In that context there’s a nice expression for the probability a square matrix is invertible.

If you’re working over the real numbers, the probability of binary matrix being invertible is higher. One way to see this is that the inverse of a binary matrix is allowed to be binary but it isn’t required to be.

Another way to see this is to look at determinants. If you think of a matrix M as a real matrix whose entries happen to only be 0 or 1, M is invertible if and only its determinant is non-zero. But if you think of M as a matrix over GF(2), the entries are either 0 or 1 out of necessity, and M is invertible if and only if its determinant, computed in GF(2), is non-zero. If the determinant of M as a real matrix is a non-zero even number, then M is invertible as a real matrix but not as a matrix over GF(2).

Working over GF(2), what is the probability that a random matrix is invertible? Turns out it’s just as easy to answer a more general question: what is the probability that a random n × n matrix over GF(q), a finite field with q elements, is invertible? This is

When q = 2 and n = 8 this probability is 0.289919. The probability is roughly the same for all larger values of n, converging to approximately 0.288788 as n → ∞.

What is the probability that an 8 × 8 matrix with random 0 and 1 entries is invertible as a real matrix? We can estimate this by simulation.

import numpy as np

def simulate_prob_invertible_real(n, numreps=1000):

s = 0

for _ in range(numreps):

M = np.random.randint(0, 2, size=(n, n))

det = np.linalg.det(M)

if abs(det) > 1e-9:

s += 1

return s/numreps

When n = 8, I got 0.5477 when running the code with 10,000 reps.

When n = 32, I got a probability of 1. Obviously it is possible for a 32 × 32 binary matrix to be singular, but it’s very unlikely: it didn’t happen in 10,000 random draws.

The post Probability that a random binary matrix is invertible first appeared on John D. Cook.2026-05-11 02:51:40

The previous post looked at the tempering step of the Mersenne Twister, formulating a sequence of bit operations as multiplication by a matrix mod 2. This post will look at the components more closely.

The theorems of linear algebra generally hold independent of the field of scalars. Typically the field is ℝ or ℂ, but most of basic linear algebra works the same over every field [1]. In particular, we can do linear algebra over a finite field, and we’re interested in the most finite of finite fields GF(2), the field with just two elements, 0 and 1.

In GF(2), addition corresponds to XOR. We will denote this by ⊕ to remind us that although it’s addition, it’s not the usual addition, i.e. 1 ⊕ 1 = 0. Similarly, multiplication corresponds to AND. We’ll work with 8-bit numbers to make the visuals easier to see.

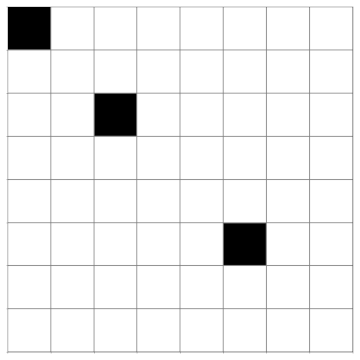

Shifting a number left one bit corresponds to multiplication by a matrix with 1’s below the diagonal main. Shifting left by k bits is the same as shifting left by 1 bit k times, so the the matrix representation for x << k is the kth power of the matrix representation of shifting left once. This matrix has 1s on the kth diagonal below the main diagonal. Below is the matrix for shifting left two bits, x << k.

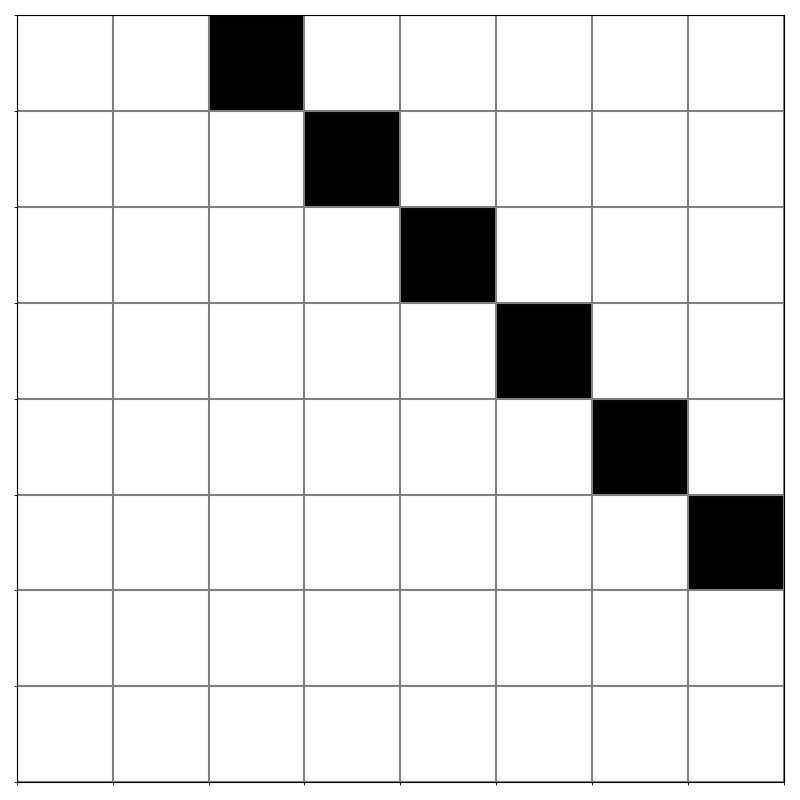

Right shifts are the mirror image of left shifts. Here’s the matrix for shifting right two bits, x >> k.

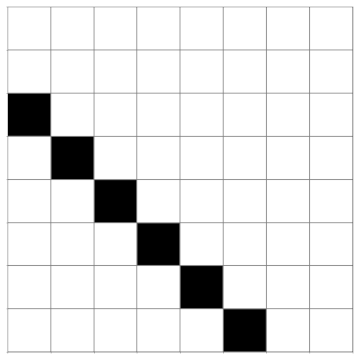

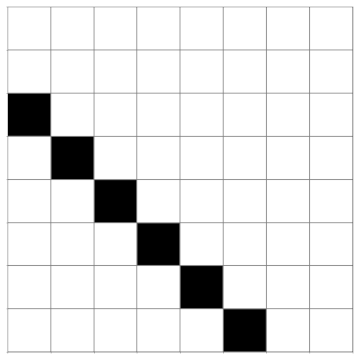

Shifts are not fully invertible because bits either fall off the left or the right end. The steps in the Mersenne Twister are invertible because shifts are always XOR’d with the original argument. For example, although the function that takes x to x >> 2 is not invertible, the function that takes x to x ⊕ (x >> 2) is invertible. This operation corresponds to the matrix below.

This is an upper triangular matrix, so its determinant is the product of the diagonal elements. These are all 1s, so the determinant is 1, and the matrix is invertible.

Bitwise AND multiplies each bit of the input by the corresponding bit in another number known as the mask. The bits aligned with a 1 are kept and the bits aligned with a 0 are cleared. This corresponds to multiplying by a diagonal matrix whose diagonal elements correspond to the bits in the mask. For example, here is the matrix that corresponds to taking the bitwise AND with 10100100.

Each of the steps in the Mersenne Twister tempering process are invertible because they all correspond to triangular matrices with all 1’s on the diagonal. For example, the line

y ^= (ysays to shift the bits of

yleft 7 places, then zero out the elements corresponding to 0s in the mask, then XOR the result with y. In matrix terms, we multiply by a lower triangular matrix with zeros on the main diagonal, then multiply by a diagonal matrix that zeros out some of the terms, then add the identity matrix. So the matrix corresponding to the line of code above is lower triangular, with all 1s on the diagonal, so it is invertible.[1] Until you get to eigenvalues. Then it matters whether the field is algebraically complete, which no finite field is.

The post The linear algebra of bit twiddling first appeared on John D. Cook.