2026-05-04 08:00:00

A while back I decided to stop using Tailwind for new projects and to just write vanilla CSS instead.

But one thing I missed about Tailwind was the colour palette (here as CSS).

If I wanted a light blue I could just use blue-100 and if I didn’t like it maybe try blue-200 or blue-50. I’m not very good with colours so it makes a big difference to me to have a reasonable colour palette that somebody who is better at colour than me has thought about.

But I’m also a little tired of those Tailwind colours, so I asked on Mastodon today what other colour palettes were out there. And then a friend said they wanted links to those colour palettes, so here’s a blog post so my friend can see them, and all the rest of you too :)

The ones I liked the most were:

Folks also linked to a bunch of colour palette generators

I’ve always found these types of generators too hard to use, but maybe one day I’ll get good enough at colour to use a colour palette generator successfully, so I’ll leave those links there anyway.

and more colour tools:

oklch

Generative colors with CSS gives an example of how to use the oklch CSS function to dynamically generate colors.

2026-05-02 08:00:00

Hello! One of my long term projects on here is figuring out how to write frontend JavaScript without using Node or any other server JS runtime.

One issue I run into a lot in my frontend JS projects is that I don’t know how to write tests for them. I’ve tried to use Playwright in the past, but it felt slow and unwieldy to be starting these new browser processes all the time, and it involved some Node code to orchestrate the tests.

The result is that I just don’t test my frontend code which doesn’t feel great. Usually I don’t update my projects much either so it doesn’t come up that much, but it would be nice to be able to make changes with more confidence! So a way to do frontend testing that I like has been on my wishlist for a long time.

Alex Chan wrote a great post a while back called Testing JavaScript without a (third-party) framework, in response to one of my previous posts in this series. It explains how to write a tiny unit-testing framework that runs in a page in the browser.

I loved this post at the time, but it only talked about unit testing and I wanted to write end-to-end integration tests for my Vue components, and I didn’t know how to do that.

So when I was talking to Marco the other day and he said something like “you know, you can just run tests for your Vue components in the browser”, I thought “hey, I should try that again!!!”

I just did all of this yesterday so certainly there’s a lot to improve but I wanted to write down a few things I noticed about the process before I forget.

This was a bit tricky for me because the Vue site usually assumes that you’re using Node as part of your build process in some way (there’s a lot of “step 1: npm install THING”), and I didn’t want to use Node/Deno/etc. But it turned out to not be too complicated.

The project I’m going to talk about testing is this zine feedback site I wrote in 2023.

I used QUnit. It worked great but I don’t have anything interesting to say about how it works so I’ll leave it at that. I think that Alex’s “write your own test framework” approach would have worked too. I followed these directions.

I did appreciate that QUnit has a “rerun test” button that will only rerun 1 test. Because there are so many network requests in my tests, having a way to run just 1 test makes it a lot less confusing to debug the test.

The first thing I needed to do was get my Vue components set up in the test environment.

I changed my main app to put all my components in window._components, kind of like this:

    const components = {
        'Feedback': FeedbackComponent,
        ...
    }
    window._components = components;

Then I was able to write a mountComponent function which does basically exactly the same thing my normal main app does (render a tiny template with the component I want to use).

The only differences are:

position: absolute; top: -10000, ...) so you can’t see it.

Here’s what using the mountComponent function looks like:

    const {div} = mountComponent(
        '<Page :feedbacks="feedbacks" id=2 />',
        {feedbacks: [testFeedback]},
    );

and here’s the code for it:

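    function mountComponent(template, data) {
        // create a tiny app that renders just the template under test
        const app = Vue.createApp({
            template: template,
            data: () => data,
        })
        // register every component the main app exposes
        for (const [c, v] of Object.entries(window._components)) {
            app.component(c, v);
        }
        const div = document.getElementById('qunit-fixture')
            .appendChild(document.createElement('div'));
        // mount the app so the template actually gets rendered into the div
        app.mount(div);
        // return an object, since callers destructure {div}
        return {div};
    }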

The result is a div where I can programmatically click, fill in form data, check that the right content appears, etc.

Because I was writing end-to-end integration tests to make sure my client JS worked properly with my server, I needed to have some test data in my database. So I wrote ~25 lines of SQL to set up some test data in my database, and added an endpoint to my dev server to run the SQL to reset the test data to a known state.

    async function reset() {
        return fetch('/api/reset_test_data', {method: "POST"})
    }

Then I just run await reset() at the beginning of any test that needs the test data.

My reset() function actually doesn’t always totally reset everything which is kind of bad, but it was workable to start with and can always be improved.

Here’s what a basic test looks like! Basically we’re rendering the div and making sure it contains some approximately correct data.

    QUnit.test('renders feedback content', async function (assert) {
        const {div} = mountComponent(
            '<Page :feedbacks="feedbacks" id=2 image=2 page_hash=2 />',
            {feedbacks: [testFeedback]},
        );
        assert.ok(div.textContent.includes('loved this section'));
    })

Those are all the basic pieces! Now here are a few issues I ran into along the way

I have a lot of network requests in my tests, and it takes time for them to finish and for the Vue code to do what it has to do with the results and update the DOM.

I think we all learned a long time ago that putting random sleep() calls in your tests and hoping that the timings are right is slow and flaky and extremely frustrating, so I needed a different way.

As far as I can tell the normal way to deal with this is to figure out a way to tell from the DOM whether it’s okay to proceed or not. Like “if this button is visible, we can proceed”.

So I wrote a little waitFor() function that polls every 20ms until a condition is met. It times out after 2 seconds.

Here’s what using it looks like:

    QUnit.test("click item", async function (assert) {
        const {div} = mountComponent(
            '<Feedback zine_id="test123" image_width="800px" />',
            {});
        const item = await waitFor(() => div.querySelector('.feedback-item'));
        item.click();
        // rest of test goes here...
    })
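A minimal sketch of what a polling helper like this could look like. This is not necessarily the exact implementation used here: the `interval` and `timeout` option names are made up for illustration, with defaults matching the 20ms poll and 2 second timeout described above.

```javascript
// Poll `condition` every `interval` ms until it returns a truthy value,
// then resolve with that value. Reject once `timeout` ms have passed.
// (`interval` and `timeout` are hypothetical option names.)
function waitFor(condition, {interval = 20, timeout = 2000} = {}) {
  return new Promise((resolve, reject) => {
    const start = Date.now();
    function check() {
      let result;
      try {
        result = condition();
      } catch (err) {
        // a condition that throws fails the wait immediately
        reject(err);
        return;
      }
      if (result) {
        resolve(result);
      } else if (Date.now() - start >= timeout) {
        reject(new Error(`waitFor timed out after ${timeout}ms`));
      } else {
        setTimeout(check, interval);
      }
    }
    check();
  });
}
```

Resolving with the truthy value (instead of just `true`) is what lets a test write `const item = await waitFor(() => div.querySelector(...))` and use the element right away.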

It looks like there are a lot of implementations of this concept out there and they’re all better thought-through than mine. (from a quick Google: qunit-wait-for, playwright expect.poll)

In some cases I thought I’d identified the right thing to wait for in the DOM (“just wait for this textarea to appear!”) but it turned out that because of some internal details of how my program works, actually I needed to wait for something else later on which was hard to pin down.

I ended up changing one of my components to add some random value to the DOM when it had finished an important action (like data-this-thing-is-ready=true) which didn’t feel great.

My best guess is that the right way to fix this kind of test issue is a refactor that also makes the app more reliable for the users: if there’s an element in the DOM that isn’t actually ready for the user to interact with, maybe I shouldn’t be displaying it yet!

I ended up adding a few classes to HTML elements that I needed to find in the tests, either because I needed to click on them or wait for them to appear in the DOM.

I might want to change this approach later - frontend testing frameworks seem to suggest avoiding using CSS classes and instead using something like getByRole or as a last resort something like a data-testid. Feels like there’s a way to make the app more accessible and easier to test at the same time.

To fill out a form, I can’t just set the value, I also need to dispatch an event to tell Vue that the element has changed. For example, checkbox and textarea need different kinds of events.

    textarea.value = 'banana banana banana';
    textarea.dispatchEvent(new Event('input'));

    checkbox.checked = true;
    checkbox.dispatchEvent(new Event('change'));
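One way to make this less repetitive is to wrap the two steps in small helpers. This is just a sketch: `fillIn` and `setChecked` are hypothetical names, not part of Vue or QUnit, and it assumes the default `v-model` behaviour (listening for `input` on text fields and `change` on checkboxes).

```javascript
// Set a text input or textarea's value, then fire the `input` event
// so that listeners (like Vue's v-model) notice the change.
function fillIn(el, value) {
  el.value = value;
  el.dispatchEvent(new Event('input', {bubbles: true}));
}

// Set a checkbox's state, then fire the `change` event.
function setChecked(el, checked) {
  el.checked = checked;
  el.dispatchEvent(new Event('change', {bubbles: true}));
}
```

In a test this would look like `fillIn(textarea, 'banana banana banana')` followed by `setChecked(checkbox, true)`.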

This is kind of annoying and it made me realize why I might want to use some kind of UI testing library, for example:

I wanted to have an idea of what my test coverage was, and it turns out that Chrome actually has a built-in code coverage feature for JS and CSS!

My JS is bundled into a file called bundle.js with esbuild, so I could just look at bundle.js and see which lines weren’t covered.

The process was a little finicky: I had to turn off sourcemaps in the Chrome devtools to get this to work, and there’s a specific not super obvious series of actions I have to do in order to see the coverage data.

As usual with these posts I’ve never really worked as a frontend or backend developer (other than for myself!) and I feel like I’m constantly learning how to do super basic tasks.

I really had a blast doing this. My frontend projects always feel so fragile because they’re untested, and maybe one day I’ll have a test suite I’m confident in!

Some things I’m still thinking about:

.umd.js file that works without Node.

2026-03-10 08:00:00

Hello! My big takeaway from last month’s musings about man pages was that examples in man pages are really great, so I worked on adding (or improving) examples to two of my favourite tools’ man pages.

Here they are:

The goal here was really just to give the absolute most basic examples of how to use the tool, for people who use tcpdump or dig infrequently (or have never used it before!) and don’t remember how it works.

So far saying “hey, I want to write an examples section for beginners and infrequent users of these tools” has been working really well. It’s easy to explain, I think it makes sense from everything I’ve heard from users about what they want from a man page, and maintainers seem to find it compelling.

Thanks to Denis Ovsienko, Guy Harris, Ondřej Surý, and everyone else who reviewed the docs changes. It was a good experience and left me motivated to do a little more work on man pages.

I’m interested in working on tools’ official documentation right now because:

tcpdump -w out.pcap, it’s useful to pass -v to print a live summary of how many packets have been captured so far. That’s really useful, I didn’t know it, and I don’t think I ever would have noticed it on my own.

It’s kind of a weird place for me to be because honestly I always kind of assume documentation is going to be hard to read, and I usually just skip it and read a blog post or Stack Overflow comment or ask a friend instead. But right now I’m feeling optimistic, like maybe the documentation doesn’t have to be bad? Maybe it could be just as good as reading a really great blog post, but with the benefit of also being actually correct? I’ve been using the Django documentation recently, and it’s really good! We’ll see.

The tcpdump project’s man page is written in the roff language, which is kind of hard to use and which I really did not feel like learning.

I handled this by writing a very basic markdown-to-roff script, using similar conventions to what the man page was already using. I could maybe have just used pandoc, but the output pandoc produced seemed pretty different, so I thought it might be better to write my own script instead. Who knows.

I did think it was cool to be able to just use an existing Markdown library’s ability to parse the Markdown AST and then implement my own code-emitting methods to format things in a way that seemed to make sense in this context.

I went on a whole rabbit hole learning about the history of roff, how it’s evolved since the 70s, and who’s working on it today, inspired by learning about the mandoc project that BSD systems (and some Linux systems, and I think Mac OS) use for formatting man pages. I won’t say more about that today though, maybe another time.

In general it seems like there’s a technical and cultural divide in how documentation works on BSD and on Linux that I still haven’t really understood, but I have been feeling curious about what’s going on in the BSD world.

2026-02-18 08:00:00

Hello! After spending some time working on the Git man pages last year, I’ve been thinking a little more about what makes a good man page.

I’ve spent a lot of time writing cheat sheets for tools (tcpdump, git, dig, etc) which have a man page as their primary documentation. This is because I often find the man pages hard to navigate to get the information I want.

Lately I’ve been wondering – could the man page itself have an amazing cheat sheet in it? What might make a man page easier to use? I’m still very early in thinking about this but I wanted to write down some quick notes.

I asked some people on Mastodon for their favourite man pages, and here are some examples of interesting things I saw on those man pages.

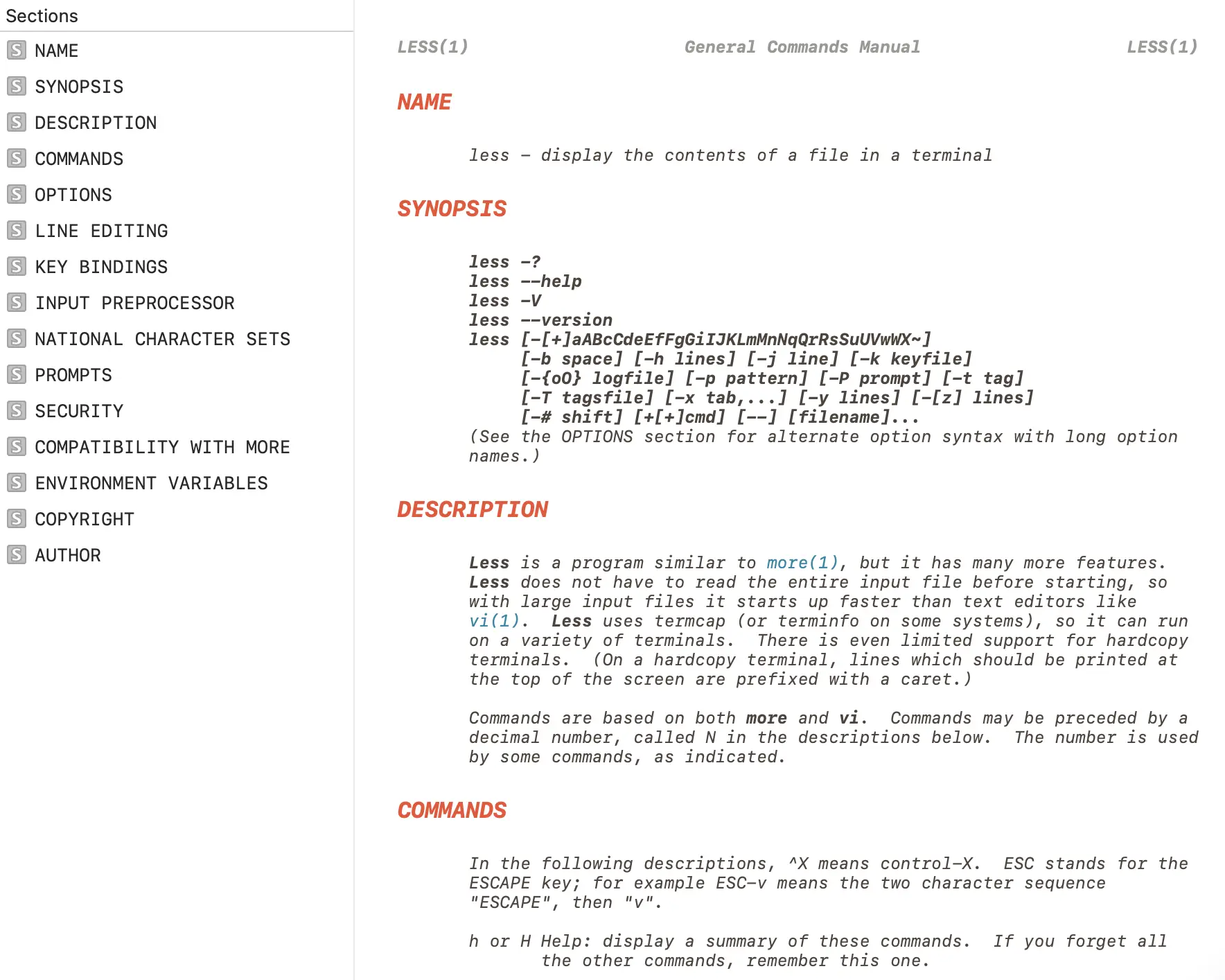

If you’ve read a lot of man pages you’ve probably seen something like this in the SYNOPSIS: once you’re listing almost the entire alphabet, it’s hard

    ls [-@ABCFGHILOPRSTUWabcdefghiklmnopqrstuvwxy1%,]
    grep [-abcdDEFGHhIiJLlMmnOopqRSsUVvwXxZz]

The rsync man page has a solution I’ve never seen before: it keeps its SYNOPSIS very terse, like this:

    Local:
        rsync [OPTION...] SRC... [DEST]

and then has an “OPTIONS SUMMARY” section with a 1-line summary of each option, like this:

    --verbose, -v            increase verbosity
    --info=FLAGS             fine-grained informational verbosity
    --debug=FLAGS            fine-grained debug verbosity
    --stderr=e|a|c           change stderr output mode (default: errors)
    --quiet, -q              suppress non-error messages
    --no-motd                suppress daemon-mode MOTD

Then later there’s the usual OPTIONS section with a full description of each option.

The strace man page organizes its options by category (like “General”, “Startup”, “Tracing”, “Filtering”, and “Output Format”) instead of alphabetically.

As an experiment I tried to take the grep man page and make an “OPTIONS SUMMARY” section grouped by category, you can see the results here. I’m not sure what I think of the results but it was a fun exercise. When I was writing that I was thinking about how I can never remember the name of the -l grep option. It always takes me what feels like forever to find it in the man page and I was trying to think of what structure would make it easier for me to find. Maybe categories?

A couple of people pointed me to the suite of Perl man pages (perlfunc, perlre, etc), and one thing I noticed was man perlcheat, which has cheat sheet sections like this:

    SYNTAX
    foreach (LIST) { }     for (a;b;c) { }
    while   (e) { }        until (e)   { }
    if      (e) { } elsif (e) { } else { }
    unless  (e) { } elsif (e) { } else { }
    given   (e) { when (e) {} default {} }

I think this is so cool and it makes me wonder if there are other ways to write condensed ASCII 80-character-wide cheat sheets for use in man pages.

A common comment was something to the effect of “I like any man page that has examples”. Someone mentioned the OpenBSD man pages, and the OpenBSD tail man page has examples of the exact 2 ways I use tail at the end.

I think I’ve most often seen the EXAMPLES section at the end of the man page, but some man pages (like the rsync man page from earlier) start with the examples. When I was working on the git-add and git rebase man pages I put a short example at the beginning.

This isn’t a property of the man page itself, but one issue with man pages in the terminal is it’s hard to know what sections the man page has.

When working on the Git man pages, one thing Marie and I did was to add a table of contents to the sidebar of the HTML versions of the man pages hosted on the Git site.

I’d also like to add more hyperlinks to the HTML versions of the Git man pages at some point, so that you can click on “INCOMPATIBLE OPTIONS” to get to that section. It’s very easy to add links like this in the Git project since Git’s man pages are generated with AsciiDoc.

I think adding a table of contents and adding internal hyperlinks is kind of a nice middle ground where we can make some improvements to the man page format (in the HTML version of the man page at least) without maintaining a totally different form of documentation. Though for this to work you do need to set up a toolchain like Git’s AsciiDoc system.

It would be amazing if there were some kind of universal system to make it easy to look up a specific option in a man page (“what does -a do?”). The best trick I know is to use the man pager to search for something like ^ *-a but I never remember to do it and instead just end up going through every instance of -a in the man page until I find what I’m looking for.
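To show why the anchored search works better than a plain search for -a, here is a small sketch of the same idea as a function. `findOptionLines` is a made-up name for illustration, and it assumes you already have the man page’s rendered text as a string. It only matches lines where the option is the first thing after the indentation, which is usually where the option is defined rather than mentioned in passing.

```javascript
// Return the lines of `text` where `flag` (like "-a") appears at the
// start of the line after optional spaces, similar to searching the
// pager for `^ *-a`. (`findOptionLines` is a hypothetical helper.)
function findOptionLines(text, flag) {
  // escape regex metacharacters in the flag
  const escaped = flag.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');
  // the lookahead stops "-a" from also matching "-ab" or "-a-foo"
  const re = new RegExp('^ *' + escaped + '(?![\\w-])');
  return text
    .split('\n')
    .map((line, i) => ({n: i + 1, line}))
    .filter(({line}) => re.test(line));
}
```

A line like `use -a to treat binary files as text` is skipped because -a isn’t at the start of the line, so only the definition is left.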

The curl man page has examples for every option, and there’s also a table of contents on the HTML version so you can more easily jump to the option you’re interested in.

For instance the example for --cert makes it easy to see that you likely also want to pass the --key option, like this:

curl --cert certfile --key keyfile https://example.com

The way they implement this is that there’s [one file for each option](https://github.com/curl/curl/blob/dc08922a61efe546b318daf964514ffbf4158325/docs/cmdline-opts/append.md) and there’s an “Example” field in that file.

Quite a few people said that man ascii was their favourite man page, which looks like this:

    Oct   Dec   Hex   Char
    ───────────────────────────────────────────
    000   0     00    NUL '\0' (null character)
    001   1     01    SOH (start of heading)
    002   2     02    STX (start of text)
    003   3     03    ETX (end of text)
    004   4     04    EOT (end of transmission)
    005   5     05    ENQ (enquiry)
    006   6     06    ACK (acknowledge)
    007   7     07    BEL '\a' (bell)
    010   8     08    BS  '\b' (backspace)
    011   9     09    HT  '\t' (horizontal tab)
    012   10    0A    LF  '\n' (new line)

Obviously man ascii is an unusual man page but I think what’s cool about this man page (other than the fact that it’s always useful to have an ASCII reference) is it’s very easy to scan to find the information you need because of the table format. It makes me wonder if there are more opportunities to display information in a “table” in a man page to make it easier to scan.

When I talk about man pages it often comes up that the GNU coreutils man pages (for example man tail) don’t have examples, unlike the OpenBSD man pages, which do have examples.

I’m not going to get into this too much because it seems like a fairly political topic and I definitely can’t do it justice here, but here are some things I believe to be true:

info tool. I’ve heard from some Emacs users that they like the Emacs info browser. I don’t think I’ve ever talked to anyone who uses the standalone info tool.

After a certain level of complexity a man page gets really hard to navigate: while I’ve never used the coreutils info manual and probably won’t, I would almost certainly prefer to use the GNU Bash reference manual or the GNU C Library Reference Manual via their HTML documentation rather than through a man page.

Here are some tools I think are interesting:

tldr grep. Lots of people have told me they find it useful.

Man pages are such a constrained format and it’s fun to think about what you can do with such limited formatting options.

Even though I’m very into writing I’ve always had a bad habit of never reading documentation and so it’s a little bit hard for me to think about what I actually find useful in man pages. I’m not sure whether I think most of the things in this post would improve my experience or not. (Except for examples, I LOVE examples)

So I’d be interested to hear about other man pages that you think are well designed and what you like about them. The comments section is here.

2026-01-27 08:00:00

Hello! One of my favourite things is starting to learn an Old Boring Technology that I’ve never tried before but that has been around for 20+ years. It feels really good when every problem I’m ever going to have has been solved already 1000 times and I can just get stuff done easily.

I’ve thought it would be cool to learn a popular web framework like Rails or Django or Laravel for a long time, but I’d never really managed to make it happen. But I started learning Django to make a website a few months back, I’ve been liking it so far, and here are a few quick notes!

I spent some time trying to learn Rails in 2020, and while it was cool and I really wanted to like Rails (the Ruby community is great!), I found that if I left my Rails project alone for months, when I came back to it it was hard for me to remember how to get anything done because (for example) if it says resources :topics in your routes.rb, on its own that doesn’t tell you where the topics routes are configured, you need to remember or look up the convention.

Being able to abandon a project for months or years and then come back to it is really important to me (that’s how all my projects work!), and Django feels easier to me because things are more explicit.

In my small Django project it feels like I just have 5 main files (other than the settings files): urls.py, models.py, views.py, admin.py, and tests.py, and if I want to know where something else is (like an HTML template) then it’s usually explicitly referenced from one of those files.

For this project I wanted to have an admin interface to manually edit or view some of the data in the database. Django has a really nice built-in admin interface, and I can customize it with just a little bit of code.

For example, here’s part of one of my admin classes, which sets up which fields to display in the “list” view, which field to search on, and how to order them by default.

    @admin.register(Zine)
    class ZineAdmin(admin.ModelAdmin):
        list_display = ["name", "publication_date", "free", "slug", "image_preview"]
        search_fields = ["name", "slug"]
        readonly_fields = ["image_preview"]
        ordering = ["-publication_date"]

In the past my attitude has been “ORMs? Who needs them? I can just write my own SQL queries!”.

I’ve been enjoying Django’s ORM so far though, and I think it’s cool how Django uses __ to represent a JOIN, like this:

    Zine.objects.exclude(product__order__email_hash=email_hash)

This query involves 5 tables: zines, zine_products, products, order_products, and orders.

To make this work I just had to tell Django that there’s a ManyToManyField relating “orders” and “products”, and another ManyToManyField relating “zines” and “products”, so that it knows how to connect zines, orders, and products.

I definitely could write that query, but writing product__order__email_hash is a lot less typing, it feels a lot easier to read, and honestly I think it would take me a little while to figure out how to construct the query (which needs to do a few other things than just those joins).

I have zero concern about the performance of my ORM-generated queries so I’m pretty excited about ORMs for now, though I’m sure I’ll find things to be frustrated with eventually.

The other great thing about the ORM is migrations!

If I add, delete, or change a field in models.py, Django will automatically generate a migration script like migrations/0006_delete_imageblob.py.

I assume that I could edit those scripts if I wanted, but so far I’ve just been running the generated scripts with no change and it’s been going great. It really feels like magic.

I’m realizing that being able to do migrations easily is important for me right now because I’m changing my data model fairly often as I figure out how I want it to work.

I had a bad habit of never reading the documentation but I’ve been really enjoying the parts of Django’s docs that I’ve read so far. This isn’t by accident: Jacob Kaplan-Moss has a talk from PyCon 2011 on Django’s documentation culture.

For example the intro to models lists the most important common fields you might want to set when using the ORM.

After having a bad experience trying to operate Postgres and not being able to understand what was going on, I decided to run all of my small websites with SQLite instead. It’s been going way better, and I love being able to back up by just doing a VACUUM INTO and then copying the resulting single file.

I’ve been following these instructions for using SQLite with Django in production.

I think it should be fine because I’m expecting the site to have a few hundred writes per day at most, much less than Mess with DNS which has a lot more writes and has been working well (though the writes are split across 3 different SQLite databases).

Django seems to be very “batteries-included”, which I love – if I want CSRF protection, or a Content-Security-Policy, or I want to send email, it’s all in there!

For example, I wanted to save the emails Django sends to a file in dev mode (so that it didn’t send real email to real people), which was just a little bit of configuration.

I just put this in settings/dev.py:

    EMAIL_BACKEND = "django.core.mail.backends.filebased.EmailBackend"
    EMAIL_FILE_PATH = BASE_DIR / "emails"

and then set up the production email like this in settings/production.py:

    import os

    EMAIL_BACKEND = "django.core.mail.backends.smtp.EmailBackend"
    EMAIL_HOST = "smtp.whatever.com"
    EMAIL_PORT = 587
    EMAIL_USE_TLS = True
    EMAIL_HOST_USER = "xxxx"
    # the password comes from the environment, not from the settings file
    EMAIL_HOST_PASSWORD = os.getenv('EMAIL_API_KEY')

That made me feel like if I want some other basic website feature, there’s likely to be an easy way to do it built into Django already.

I’m still a bit intimidated by the settings.py file: Django’s settings system works by setting a bunch of global variables in a file, and I feel a bit stressed about… what if I make a typo in the name of one of those variables? How will I know? What if I type WSGI_APPLICATOIN = "config.wsgi.application" instead of WSGI_APPLICATION?

I guess I’ve gotten used to having a Python language server tell me when I’ve made a typo and so now it feels a bit disorienting when I can’t rely on the language server support.

I haven’t really successfully used an actual web framework for a project before (right now almost all of my websites are either a single Go binary or static sites), so I’m interested in seeing how it goes!

There’s still lots for me to learn about: I still haven’t really gotten into Django’s form validation tooling or authentication systems.

Thanks to Marco Rogers for convincing me to give ORMs a chance.

(we’re still experimenting with the comments-on-Mastodon system! Here are the comments on Mastodon! tell me your favourite Django feature!)

2026-01-08 08:00:00

Hello! This past fall, I decided to take some time to work on Git’s documentation. I’ve been thinking about working on open source docs for a long time – usually if I think the documentation for something could be improved, I’ll write a blog post or a zine or something. But this time I wondered: could I instead make a few improvements to the official documentation?

So Marie and I made a few changes to the Git documentation!

After a while working on the documentation, we noticed that Git uses the terms “object”, “reference”, or “index” in its documentation a lot, but that it didn’t have a great explanation of what those terms mean or how they relate to other core concepts like “commit” and “branch”. So we wrote a new “data model” document!

You can read the data model here for now. I assume at some point (after the next release?) it’ll also be on the Git website.

I’m excited about this because understanding how Git organizes its commit and branch data has really helped me reason about how Git works over the years, and I think it’s important to have a short (1600 words!) version of the data model that’s accurate.

The “accurate” part turned out to not be that easy: I knew the basics of how Git’s data model worked, but during the review process I learned some new details and had to make quite a few changes (for example how merge conflicts are stored in the staging area).

git push, git pull, and more

I also worked on updating the introduction to some of Git’s core man pages. I quickly realized that “just try to improve it according to my best judgement” was not going to work: why should the maintainers believe me that my version is better?

I’ve seen a problem a lot when discussing open source documentation changes where 2 expert users of the software argue about whether an explanation is clear or not (“I think X would be a good way to explain it! Well, I think Y would be better!”)

I don’t think this is very productive (expert users of a piece of software are notoriously bad at being able to tell if an explanation will be clear to non-experts), so I needed to find a way to identify problems with the man pages that was a little more evidence-based.

I asked for test readers on Mastodon to read the current version of the documentation and tell me what they found confusing or what questions they had. About 80 test readers left comments, and I learned so much!

People left a huge amount of great feedback, for example:

Most of the test readers had been using Git for at least 5-10 years, which I think worked well – if a group of test readers who have been using Git regularly for 5+ years find a sentence or term impossible to understand, it makes it easy to argue that the documentation should be updated to make it clearer.

I thought this “get users of the software to comment on the existing documentation and then fix the problems they find” pattern worked really well and I’m excited about potentially trying it again in the future.

We ended up updating these 4 man pages:

git add (before, after)

git checkout (before, after)

git push (before, after)

git pull (before, after)

The git push and git pull changes were the most interesting to me: in addition to updating the intro to those pages, we also ended up writing:

Making those changes really gave me an appreciation for how much work it is to maintain open source documentation: it’s not easy to write things that are both clear and true, and sometimes we had to make compromises. For example, the sentence “git push may fail if you haven’t set an upstream for the current branch, depending on what push.default is set to.” is a little vague, but the exact details of what “depending” means are really complicated and untangling that is a big project.

It took me a while to understand Git’s development process. I’m not going to try to describe it here (that could be a whole other post!), but a few quick notes:

I also found the mailing list archives on lore.kernel.org hard to navigate, so I hacked together my own git list viewer to make it easier to read the long mailing list threads.

Many people helped me navigate the contribution process and review the changes: thanks to Emily Shaffer, Johannes Schindelin (the author of GitGitGadget), Patrick Steinhardt, Ben Knoble, Junio Hamano, and more.

(I’m experimenting with comments on Mastodon, you can see the comments here)