2026-05-08 08:00:00

tl;dr: Try out telecast.company; share your complaints/gripes on GitHub.

2005 birthed the iPod Video and YouTube.

20 years later… it's 2025. Compare YouTube against the podcast (iPod broadcast) ecosystem. YouTube pisses off its creators, fuels a clickbait thumbnail arms-race, and fosters addiction (protip: disable recommendations). Podcasters thrive in an ecosystem of interviews, educational content, and long-form journalism.

Okay, this is a bit unfair. Trash podcasts exist. Quality YouTube channels exist (and are strafing over to Nebula). Different incentives produce different median media on each platform; YouTube's centralized advertising model seems to encourage unsustainable behavior from its advertisers, its creators, and its consumers.

Yes, video podcasts have been a thing since 2005, but YouTube dominated online video by simplifying hosting/delivery/discovery. Twenty years later, creating/consuming video podcasts remains an awful experience.

"vidcast" and "vodcast" are clunky portmanteaus, so I'm co-opting the term "telecast" (television broadcast) for video podcasts.

And so I built a very crappy video podcast player.

Some initial features/findings:

That prototype was too crappy. I tossed it in the proverbial garbage.

"Throw One Away" is my favorite way of acclimating to a new problem domain.

Here's what it looks like now:

It's designed to be local-first software; nearly everything lives in the frontend. The only server endpoints are (1) a public search API and (2) a CORS/cache proxy for RSS feeds and video thumbnails.
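The proxy only has to shuttle raw feeds; the parsing can happen in the frontend. In an RSS 2.0 feed, each episode's media file is an `<enclosure>` element with `url`, `length`, and `type` attributes, so a video podcast is simply a feed whose enclosures carry a `video/*` MIME type. Here's a minimal Python sketch of picking out the video episodes. The function name and sample feed are hypothetical, and Telecast's real frontend code surely looks different.

```python
import xml.etree.ElementTree as ET


def video_episodes(rss_xml: str) -> list[tuple[str, str]]:
    """Return (title, url) for every <item> whose enclosure is a video file."""
    root = ET.fromstring(rss_xml)
    episodes = []
    # RSS 2.0 nests episodes as <rss><channel><item>...</item></channel></rss>.
    for item in root.iter("item"):
        enclosure = item.find("enclosure")
        if enclosure is None:
            continue  # no media attached to this item
        if enclosure.get("type", "").startswith("video/"):
            title = item.findtext("title", default="(untitled)")
            episodes.append((title, enclosure.get("url")))
    return episodes


# Hypothetical feed: one video episode, one audio-only episode.
SAMPLE_FEED = """<rss version="2.0"><channel><title>Example Show</title>
  <item><title>Ep 1</title>
    <enclosure url="https://example.com/ep1.mp4" length="1000" type="video/mp4"/></item>
  <item><title>Ep 2 (audio only)</title>
    <enclosure url="https://example.com/ep2.mp3" length="1000" type="audio/mpeg"/></item>
</channel></rss>"""

print(video_episodes(SAMPLE_FEED))  # [('Ep 1', 'https://example.com/ep1.mp4')]
```

Filtering on the MIME type prefix rather than the file extension is the safer choice, since enclosure URLs often carry tracking redirects or query strings.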

I used duckdb and some nasty sql to load the search database with ~100K feeds from PodcastIndex.org.

```sh
curl -O https://public.podcastindex.org/podcastindex_feeds.db.tgz
tar -xzf podcastindex_feeds.db.tgz -C /tmp/
sed "s|\$DATABASE_URL|$DATABASE_URL|" import_feeds.sql | duckdb
```

Thanks to PodcastIndex.org for helping make the internet open, free, and accessible!

Beyond video podcasts, I also added a bunch of my favorite RSS feeds from YouTube, plus channels from Kagi's smallyt.txt list.

The code is on GitHub. It's crappy, but it's mine -- I'm tired of smelling other people's crap.

Try it out at telecast.company

2026-04-03 08:00:00

Behold, Slap! It's a language chimera:

Slap is a stack language. Postfix syntax is ugly, but powerful:

```
-- the 20th fibonacci number (no recursion)
0 1 20 (swap over plus) repeat drop
6765 eq assert
```

I'll eventually add Uiua-esque glyphs so you can feel like a wizard:

```
0 1 20 (: ↷ +) ⍥ ↘ 6765 = !
```
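To see why that program leaves 6765 on the stack, here's a minimal Python sketch of a stack evaluator for just the five words it uses. It's a toy for illustration, not how slap.c works, and the word semantics (`repeat` popping a quotation and then a count) are my reading of the example above.

```python
def parse(src: str) -> list:
    """Turn 'a (b c) d' into ['a', ['b', 'c'], 'd']; integer tokens become ints."""
    nesting = [[]]
    for tok in src.replace("(", " ( ").replace(")", " ) ").split():
        if tok == "(":
            nesting.append([])  # start a new quotation
        elif tok == ")":
            quote = nesting.pop()
            nesting[-1].append(quote)
        elif tok.lstrip("-").isdigit():
            nesting[-1].append(int(tok))
        else:
            nesting[-1].append(tok)
    return nesting[0]


def run(program: list, stack: list) -> list:
    for item in program:
        if isinstance(item, (int, list)):
            stack.append(item)  # literals and quotations are pushed as values
        elif item == "swap":
            stack[-1], stack[-2] = stack[-2], stack[-1]
        elif item == "over":
            stack.append(stack[-2])  # copy the second item onto the top
        elif item == "plus":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif item == "drop":
            stack.pop()
        elif item == "repeat":
            quote, times = stack.pop(), stack.pop()
            for _ in range(times):
                run(quote, stack)
        else:
            raise ValueError(f"unknown word: {item}")
    return stack


print(run(parse("0 1 20 (swap over plus) repeat drop"), []))  # [6765]
```

Each pass of `(swap over plus)` turns `a b` into `b a+b`, so after twenty passes the stack holds F(20) and F(21), and `drop` discards the latter.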

Those who abhor tacit stack manipulation can use let instead:

```
-- sum of squares (tacit)
[1 2 3 4 5]
(sqr) map sum
55 eq assert

-- sum of squares (explicit)
[1 2 3 4 5]
0 ('x let 'y let x x mul y plus) fold
55 eq assert
```

Slap's true power is what it cannot do.

Parametric types (à la Hindley–Milner) prevent mismatched data:

```
[1] [2.0] cat
-- TYPE ERROR: type variable conflict
-- expected: int list
-- got: float list
```

Linear types (think Rust's borrow checker) protect allocated memory from leakage, corruption, meddling, and abandonment. You can neither duplicate nor discard a pointer (box):

```
42 box dup
-- TYPE ERROR: dup requires copyable type, got box

42 box drop
-- TYPE ERROR: drop requires copyable type, got box
```

For boxes, you must lend, mutate, clone, or free:

```
-- borrow a read-only snapshot with lend
[1 2 3] box
(len) lend
3 eq assert free

-- mutate in place
[1 2 3] box
(4 give) mutate
(len) lend
4 eq assert free

-- clone into two independent boxes
[1 2 3] box
clone
(4 give) mutate
(len) lend
4 eq assert free
(len) lend
3 eq assert free
```

This API prevents classic problems like double-free, use-after-free, and forgot-to-free.
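Here's a toy Python sketch of how a checker can reject the programs above without running them. It pushes *types* instead of values, unifies type variables for `cat`, refuses `dup`/`drop` on boxes, and complains if a box is still on the stack at the end. The encoding (types as strings and tuples, `'tN` type variables, `check`, `SlapTypeError`) is my own invention for illustration. slap.c's typechecker surely differs.

```python
from itertools import count


class SlapTypeError(Exception):
    """Raised when the toy checker rejects a program."""


def check(program: list) -> list:
    """Run `program` on types instead of values and return the final stack of types.

    Hypothetical encoding: Python ints/floats/lists are Slap literals, strings are words.
    Types are "int", "float", ("list", T) or ("box", T); "'tN" strings are type variables.
    """
    subst: dict = {}  # type-variable bindings found by unification
    fresh = count()
    stack: list = []

    def new_var() -> str:
        return f"'t{next(fresh)}"

    def resolve(t):
        # Follow bindings until reaching a concrete type or an unbound variable.
        while isinstance(t, str) and t in subst:
            t = subst[t]
        return t

    def zonk(t):
        # Fully substitute bound variables, including inside list/box types.
        t = resolve(t)
        return (t[0], zonk(t[1])) if isinstance(t, tuple) else t

    def unify(a, b):
        a, b = resolve(a), resolve(b)
        if a == b:
            return
        if isinstance(a, str) and a.startswith("'"):
            subst[a] = b
        elif isinstance(b, str) and b.startswith("'"):
            subst[b] = a
        elif isinstance(a, tuple) and isinstance(b, tuple) and a[0] == b[0]:
            unify(a[1], b[1])
        else:
            raise SlapTypeError(f"type variable conflict: expected {zonk(a)}, got {zonk(b)}")

    def literal_type(v):
        if isinstance(v, int):
            return "int"
        if isinstance(v, float):
            return "float"
        elem = new_var()  # all elements of a list literal share one type
        for x in v:
            unify(elem, literal_type(x))
        return ("list", elem)

    def pop():
        if not stack:
            raise SlapTypeError("stack underflow")
        return resolve(stack.pop())

    def is_box(t) -> bool:
        return isinstance(t, tuple) and t[0] == "box"

    for item in program:
        if not isinstance(item, str):
            stack.append(literal_type(item))
        elif item == "cat":  # cat : 'a list 'a list -> 'a list
            b, a = pop(), pop()
            elem = new_var()
            unify(("list", elem), a)
            unify(("list", elem), b)
            stack.append(("list", elem))
        elif item in ("dup", "drop"):  # only copyable values may be copied or discarded
            t = pop()
            if is_box(t):
                raise SlapTypeError(f"{item} requires copyable type, got box")
            if item == "dup":
                stack += [t, t]
        elif item == "box":  # move the top value into a heap box
            stack.append(("box", pop()))
        elif item == "free":  # free is the only word that consumes a box
            if not is_box(pop()):
                raise SlapTypeError("free requires box")
        else:
            raise SlapTypeError(f"unknown word: {item}")

    if any(is_box(resolve(t)) for t in stack):
        raise SlapTypeError("box never freed")
    return [zonk(t) for t in stack]


print(check([[1], [2], "cat"]))  # [('list', 'int')]
for bad in ([[1], [2.0], "cat"], [42, "box", "dup"], [42, "box"]):
    try:
        check(bad)
    except SlapTypeError as err:
        print("TYPE ERROR:", err)
```

Because `free` is the only word that consumes a box, a second `free` hits an empty stack (double-free), nothing can touch the box afterwards (use-after-free), and a leftover box fails the final check (forgot-to-free).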

Linear types are also great for file handles and thread coordination. Stay tuned!

Slap's stacks are flexible. You can safely use them as tuples or closures without confusing the type system:

```
-- it's a tuple
(1 2) apply plus
3 eq assert

-- it's a closure
'make-adder ('n let (n plus)) def
5 make-adder 3 swap apply
8 eq assert

-- it's a function
(1 plus) (2 mul) compose (3 sub) compose (sqr) compose
5 swap apply
81 eq assert
```

In some languages you can declare function types. Typed stack languages have a similar concept called "stack effects". The Slap type-checker automatically infers these for you, but you may add them for extra clarity/enforcement:

```
-- double = n -> n * 2
'double (2 mul) def

-- square : int -> int
-- square = n -> n * n
'square (dup mul)
  [int lent in int move out]
  effect check def
```

Slap's annotations are expressive enough to describe exotic stack effects:

```
-- no effect
'noop () [] effect check def

-- return multiple values
'hello-world ("hello" "world")
  [str move out str move out]
  effect check def

-- linear parametric effect
'pal
  ((dup reverse cat) mutate)
  [ 'a list 't own in
    'a list 't own out ]
  effect check def
```

No garbage collection! No secret allocations! Everything sits on the stack (unless you send it to the heap in a box).

The stack is often slower than the heap. Slap's transparent semantics forces you to reason about such tradeoffs.

Slap has fast solutions to most of the first fifty Project Euler problems. Here are the first ten:

| # | Runtime | Problem | Solution |
|---|---|---|---|
| 1 | 3 ms | problem | solution |
| 2 | 3 ms | problem | solution |
| 3 | 3 ms | problem | solution |
| 4 | 102 ms | problem | solution |
| 5 | 3 ms | problem | solution |
| 6 | 3 ms | problem | solution |
| 7 | 542 ms | problem | solution |
| 8 | 10 ms | problem | solution |
| 9 | 40 ms | problem | solution |
| 10 | 7298 ms | problem | solution |
| … | … | … | … |

slap.c is ~2,000 miserable lines of C99.

Could be smaller too -- I'm convinced I can shave another ~500 lines without sacrificing readability or performance.

It's a lexer, a typechecker, and a stack evaluator.

If Slap's architecture can fit in my pea-sized brain, it will surely fit in yours too.
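As a taste of the first of those three stages, here's a minimal Python sketch of a lexer for the syntax shown in this post: `--` line comments, integers, floats, double-quoted strings, `'quoted` symbols, brackets, and bare words. The token categories are my guesses from the examples above, not slap.c's actual lexer.

```python
import re

# One named alternative per token kind. Order matters: comments before words,
# floats before ints, symbols before bare words.
TOKEN_RE = re.compile(r"""
    (?P<comment>--[^\n]*)
  | (?P<string>"[^"]*")
  | (?P<float>-?\d+\.\d+)
  | (?P<int>-?\d+)
  | (?P<symbol>'[^\s()\[\]]+)
  | (?P<open>[(\[])
  | (?P<close>[)\]])
  | (?P<word>[^\s()\[\]]+)
  | (?P<space>\s+)
""", re.VERBOSE)


def lex(src: str) -> list[tuple[str, str]]:
    """Split Slap-like source into (kind, text) tokens, skipping spaces and comments."""
    tokens = []
    pos = 0
    while pos < len(src):
        m = TOKEN_RE.match(src, pos)
        if m is None:
            raise SyntaxError(f"unexpected character at {pos}: {src[pos]!r}")
        if m.lastgroup not in ("space", "comment"):
            tokens.append((m.lastgroup, m.group()))
        pos = m.end()
    return tokens


print(lex("[1] [2.0] cat -- type error"))
```

A real lexer would also track line/column positions so the typechecker can point at the offending token.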

Slap has pixels! Build with make slap-sdl (native) or make slap-wasm (browser) to get a 640x480 canvas with 2-bit grayscale.

The runtime and lo-fi aesthetics were inspired by Uxn. Go check it out!
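For a sense of scale: 2 bits per pixel means four shades of gray and four pixels per byte, so a 640x480 frame fits in 640 × 480 / 4 = 76,800 bytes. Below is a minimal Python sketch of such a packed framebuffer. The packing order (leftmost pixel in the high bits) and the class and method names are assumptions for illustration, not necessarily what slap.c does.

```python
WIDTH, HEIGHT = 640, 480
BITS_PER_PIXEL = 2                     # 4 gray levels: 0 (black) .. 3 (white)
PIXELS_PER_BYTE = 8 // BITS_PER_PIXEL  # 4


class Framebuffer:
    def __init__(self) -> None:
        self.data = bytearray(WIDTH * HEIGHT // PIXELS_PER_BYTE)

    def _locate(self, x: int, y: int) -> tuple[int, int]:
        i = y * WIDTH + x  # row-major pixel index
        byte = i // PIXELS_PER_BYTE
        # Assumption: the leftmost pixel of each byte lives in the two high bits.
        shift = (PIXELS_PER_BYTE - 1 - i % PIXELS_PER_BYTE) * BITS_PER_PIXEL
        return byte, shift

    def set_pixel(self, x: int, y: int, shade: int) -> None:
        if not 0 <= shade <= 3:
            raise ValueError("shade must be 0..3")
        byte, shift = self._locate(x, y)
        self.data[byte] &= ~(0b11 << shift) & 0xFF  # clear the old 2 bits
        self.data[byte] |= shade << shift           # write the new shade

    def get_pixel(self, x: int, y: int) -> int:
        byte, shift = self._locate(x, y)
        return (self.data[byte] >> shift) & 0b11


fb = Framebuffer()
fb.set_pixel(1, 0, 3)
print(len(fb.data), fb.get_pixel(1, 0), fb.data[0])  # 76800 3 48
```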

You can interact with your host system via efficient (and type-safe) (and memory-safe) managed effects:

```
'tick (handler1) on
'keydown (handler2) on
'mousedown (handler3) on
(render0) show
```

```
'cell ( H plus H mod W mul swap W plus W mod plus nth ) def

'neighbors (
  'cy let 'cx let 'gs let
  gs cx 1 sub cy 1 sub cell
  gs cx cy 1 sub cell plus
  gs cx 1 plus cy 1 sub cell plus
  gs cx 1 sub cy cell plus
  gs cx 1 plus cy cell plus
  gs cx 1 sub cy 1 plus cell plus
  gs cx cy 1 plus cell plus
  gs cx 1 plus cy 1 plus cell plus
) def

'step (
  'g let list
  0 'i let
  (i N lt) (
    i W divmod 'y let 'x let
    'g x y neighbors 'n let
    'g i nth 1 eq (n 2 eq n 3 eq or) (n 3 eq) if
    (1) (0) if give
    i 1 plus 'i let
  ) while
) def

'tick ( drop drawing 0 eq (step) () if ) on
(... render grid ...) show
```

```
'tick (
  ...
  -- Flee from mouse
  dist2 10000 lt mx -1 neq and (
    vx dx sign 6 mul plus 'vx let
    vy dy sign 6 mul plus 'vy let
  ) () if
  -- When stopped, sneak back home
  vx abs vy abs plus 2 lt (
    hxs i get x sub 'hdx let
    hys i get y sub 'hdy let
    x hdx sign plus 'x let
    y hdy sign plus 'y let
  ) () if
) on
```

```
'tick (
  drop 1 plus
  state 1 eq (
    dup 6 mod 0 eq (
      -- move head in current direction
      dir 0 eq (hx hy 1 sub) (
        dir 1 eq (hx 1 plus hy) (
          dir 2 eq (hx hy 1 plus) (
            hx 1 sub hy
          ) if) if) if
      -- wall/self collision → death
      -- eat food → grow, else drop tail
    ) () if
  ) () if
) on
```

See all the examples for yourself!
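For anyone still squinting at postfix, here's roughly what the Game of Life `cell`, `neighbors`, and `step` words compute, sketched in Python. The grid is a flat row-major list of 0/1 cells on a W×H torus, wrapping at the edges the way `cell`'s `mod`s do. The 5×5 board size and the blinker test pattern are my own choices, not part of the Slap example.

```python
W, H = 5, 5  # hypothetical board size
N = W * H


def cell(grid: list[int], x: int, y: int) -> int:
    # Wrap both coordinates so the board behaves like a torus.
    return grid[((y + H) % H) * W + (x + W) % W]


def neighbors(grid: list[int], cx: int, cy: int) -> int:
    # Sum the eight surrounding cells, skipping the center.
    return sum(cell(grid, cx + dx, cy + dy)
               for dy in (-1, 0, 1) for dx in (-1, 0, 1)
               if (dx, dy) != (0, 0))


def step(grid: list[int]) -> list[int]:
    new = []
    for i in range(N):
        y, x = divmod(i, W)  # row-major: i = y * W + x
        n = neighbors(grid, x, y)
        # Live cells survive with 2 or 3 neighbors; dead cells are born with exactly 3.
        alive = n in (2, 3) if grid[i] == 1 else n == 3
        new.append(1 if alive else 0)
    return new


# A "blinker": three cells in a row flip between horizontal and vertical.
blinker = [0] * N
for x in (1, 2, 3):
    blinker[2 * W + x] = 1
vertical = [1 if (x == 2 and y in (1, 2, 3)) else 0 for y in range(H) for x in range(W)]
print(step(blinker) == vertical)  # True
```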

2026-03-20 08:00:00

Hello. I'm hosting a writing contest with my friends at Quarter Mile. Please send us something! Don't overthink it -- they're just aliens, and you're only human.

2026-03-19 08:00:00


tl;dr:

- Defer US taxes by reinvesting your taxable income into the economy as business expenses, depreciating assets, etc.

- For your leveraged investments, pay yourself in refinanced cash when your investments appreciate and/or credit rates drop.

You can ~~dodge~~ defer US taxes if you reinvest your dollars into the economy.

This is no loophole; the system is working as intended. Your government wants you to create taxable wealth.

Equity is taxable wealth that already exists. You cannot create wealth by purchasing $10k of AAPL equity. You can create wealth by investing $10k in an apple orchard.

But you must reinvest your dollars in a particular way that Uncle Sam understands. When you report business expenses on your tax return, you inform the IRS what you spent on enterprise. The US tax code rewards entrepreneurial pursuits which grow the economy. Uncle Sam happily forgoes $1 now for $11 next decade -- it's the same slice from a larger pie.

To perpetually defer taxes on your taxable wealth, keep reinvesting your surplus. The IRS forgoes $10 now for $110 next decade, $100 for $1,100, and so on.

If you aren't actually reinvesting capital, pay your damn taxes. Don't be an asshole.

Depreciation spreads business expenses over time. If you invest $100 in a lawnmower that earns $11 per year, this straight-line depreciation schedule keeps your taxable income at $1 every year:

| Year | Revenue | Depreciation | Taxable Income |
|---|---|---|---|
| 1 | $11 | $10 | $1 |
| 2 | $11 | $10 | $1 |
| … | … | … | … |
| 10 | $11 | $10 | $1 |
| Total | $110 | $100 | $10 |

But you can also ask the IRS to skip year one and deduct roughly $11/year for the remaining 9 years, rather than $10/year for 10 years. You might consider this schedule if your other investments lost $11 this year:

| Year | Revenue | Depreciation | Taxable Income |
|---|---|---|---|
| 1 | $11 | $0 | $11 |
| 2 | $11 | $11 | $0 |
| … | … | … | … |
| 10 | $11 | $11 | $0 |
| Total | $110 | $100 | $10 |

Let's say your other investments gain $89 this year, so you front-load the lawnmower depreciation schedule. You pay zero taxes this year, but you've increased your tax obligations in future years:

| Year | Revenue | Depreciation | Taxable Income |
|---|---|---|---|
| 1 | $11 | $100 | -$89 |
| 2 | $11 | $0 | $11 |
| … | … | … | … |
| 10 | $11 | $0 | $11 |
| Total | $110 | $100 | $10 |

To defer taxes, deduct yesterday's expenses from today's revenue. Good accountants will massage depreciation schedules to match unexpected profits/losses.
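The three lawnmower tables differ only in *when* the $100 gets deducted. A small Python check makes the point: whatever the schedule, ten years of $11 revenue minus $100 of depreciation leaves $10 of total taxable income. For the delayed schedule, I assume one of the elided years (year 9 here) absorbs the extra $1 so the full $100 is deducted. The sketch ignores tax rates entirely.

```python
REVENUE = [11] * 10  # $11 per year for 10 years

schedules = {
    "straight-line": [10] * 10,
    # Assumption: year 9 absorbs the extra $1 so the full $100 is deducted.
    "delayed": [0] + [11] * 7 + [12] + [11],
    "front-loaded": [100] + [0] * 9,
}


def taxable_income(revenue: list[int], depreciation: list[int]) -> list[int]:
    """Yearly taxable income = that year's revenue minus that year's depreciation."""
    return [r - d for r, d in zip(revenue, depreciation)]


for name, dep in schedules.items():
    assert sum(dep) == 100, name  # every schedule deducts the full $100 cost
    yearly = taxable_income(REVENUE, dep)
    print(f"{name}: year 1 = {yearly[0]}, total = {sum(yearly)}")
```

Every schedule totals $10; only year 1 moves ($1, $11, or -$89), which is exactly the lever an accountant pulls to match unexpected profits or losses.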

Example: Instead of depreciating a building over 27.5 (residential rental) or 39 (commercial) years, a cost segregation study could reclassify components (carpeting, fixtures, landscaping, certain electrical) into 5, 7, or 15-year assets. In this way, a $2M property could accrue $200K–$300K in depreciation deductions in its first year.

Again, this is intentional. If you contribute more to the US economy than you siphon out, your government will happily pretend you're penniless.

A politician attracts investments into their constituency via tax incentives. Unfortunately, some tax incentives are loopholes which invite crooks to claim exemptions without truly contributing. It is difficult to distinguish whether a loophole is corrupt or negligent, and impossible to prosecute politicians either way.

Most investment money is borrowed (e.g. SBA loans, commercial real estate loans). Your government wants you to create wealth, so it loans money to banks at a magic interest rate. Banks may lend that money to you at a higher rate.

If you contribute loaned wealth to the US economy, you must siphon your dollars out in a way that Uncle Sam understands. One popular method is refinancing, i.e. paying off your old loan with a new loan and pocketing the cash difference. Loaned money isn't taxable income, so you can save/spend it without affecting your tax rate.
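Here's the cash-out refinancing arithmetic as a Python sketch. Every number is hypothetical: a lender that caps new loans at 75% of the appraised value (loan-to-value, or LTV), a property bought for $1M with a $750K loan that later appraises at $1.2M, and no closing costs, fees, or payment changes.

```python
def cash_out_refi(appraised_value: float, old_balance: float, max_ltv: float) -> float:
    """Cash pocketed by replacing the old loan with the largest new loan the lender allows.

    Hypothetical model: ignores closing costs, points, and changes in monthly payments.
    """
    new_loan = appraised_value * max_ltv
    # The new loan first pays off the old balance; only the remainder is cash out.
    return max(0.0, new_loan - old_balance)


cash = cash_out_refi(appraised_value=1_200_000, old_balance=750_000, max_ltv=0.75)
print(f"${cash:,.0f}")  # $150,000
```

The $150K isn't income; it's new debt, so the balance grows from $750K to $900K and the payments grow with it.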

Disclaimer: Loans ain't free. Refinancing ain't easy.

Death is a popular escape from deferred taxes. When you die, your obligations to the government vanish. Your heirs inherit assets/property at market value. Their assets depreciate from new cost bases.

According to Modern Monetary Theory, taxes are a method of pulling dollars out of circulation. The government never actually needed your money anyway.

Your life on Earth continues long after you die. Every dollar you've spent, saved, borrowed, lent, donated, willed -- it all mattered. People will commute on the roads you paid for, or taste apples from your trees, or pollute the Pacific Ocean, or survive tuberculosis, or eat pasta, or overdose on fentanyl, or play chess, or gossip, or whatever people do.

2026-03-03 08:00:00

I've been a reluctant Bank of America customer for over a decade. My parents chose BofA, so I chose BofA. Migrating to Chase or Wells Fargo is more of the same -- not worth the switching cost.

Am I really a "customer" when they charge -0.01% interest to hold my money?

BofA is clunky. Their physical branches seem simultaneously overstaffed and understaffed. Everybody there is cordial yet confused. I would never visit their physical locations if their app worked, but alas, their app is crap. I cannot open/close accounts, I cannot reliably cash checks, I cannot easily transfer money -- the software might just be ornamental.

But it ain't 2010 anymore. We now have branchless banks like Ally, SoFi, and maybe even Robinhood. Online-only banking alternatives offer 3%+ APY in lieu of physical locations. According to science, paying region-locked human staffers to occupy an expensive retail space full of money costs a fortune.

Sometimes these banks are technically not banks -- they're "financial services companies with trusted banking partners".

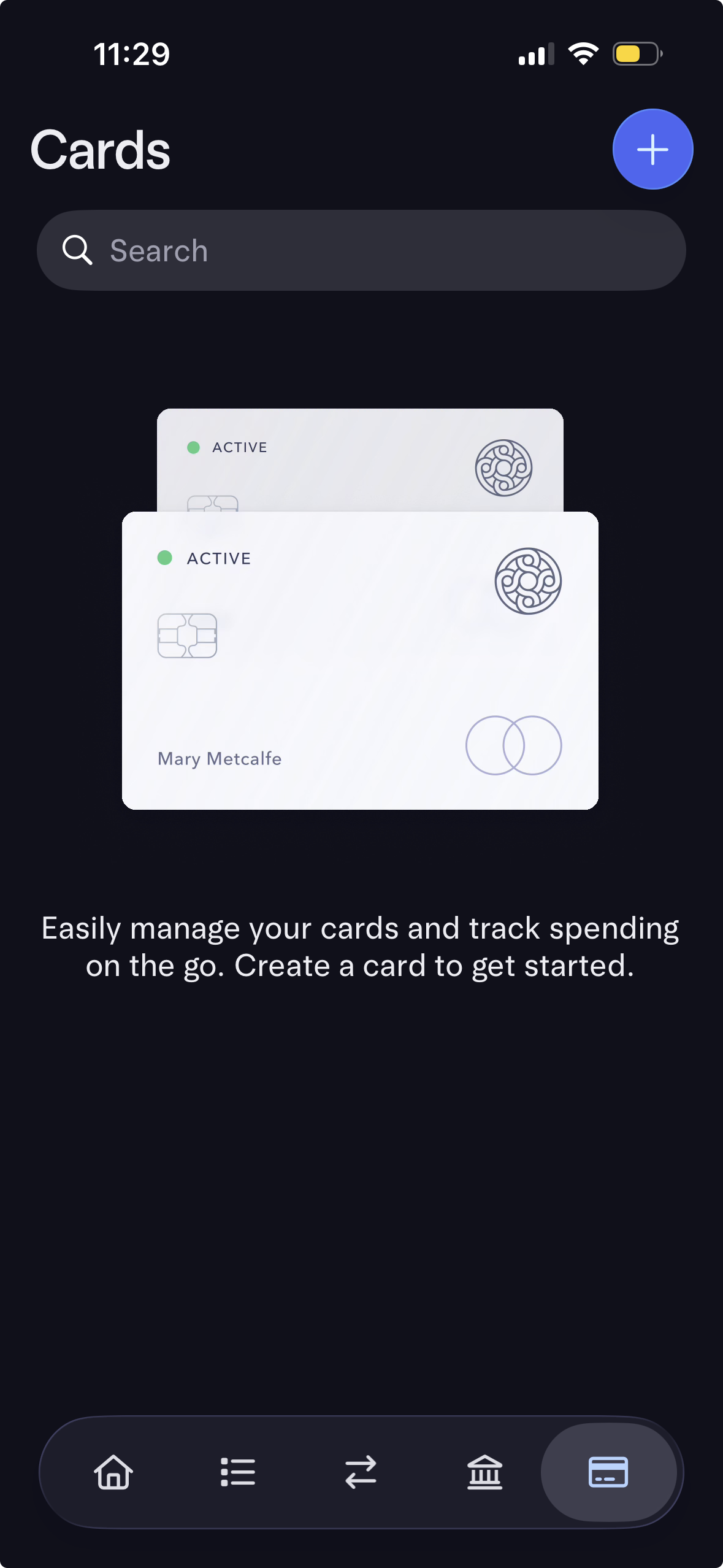

I use Mercury for business banking. It's great. When I discovered that Mercury offers personal banking, I was cautiously optimistic. They built a successful B2B product, but companies usually botch expansions from B2B into B2C.

Oracle's graveyard of B2C products remains a trove of cautionary tales.

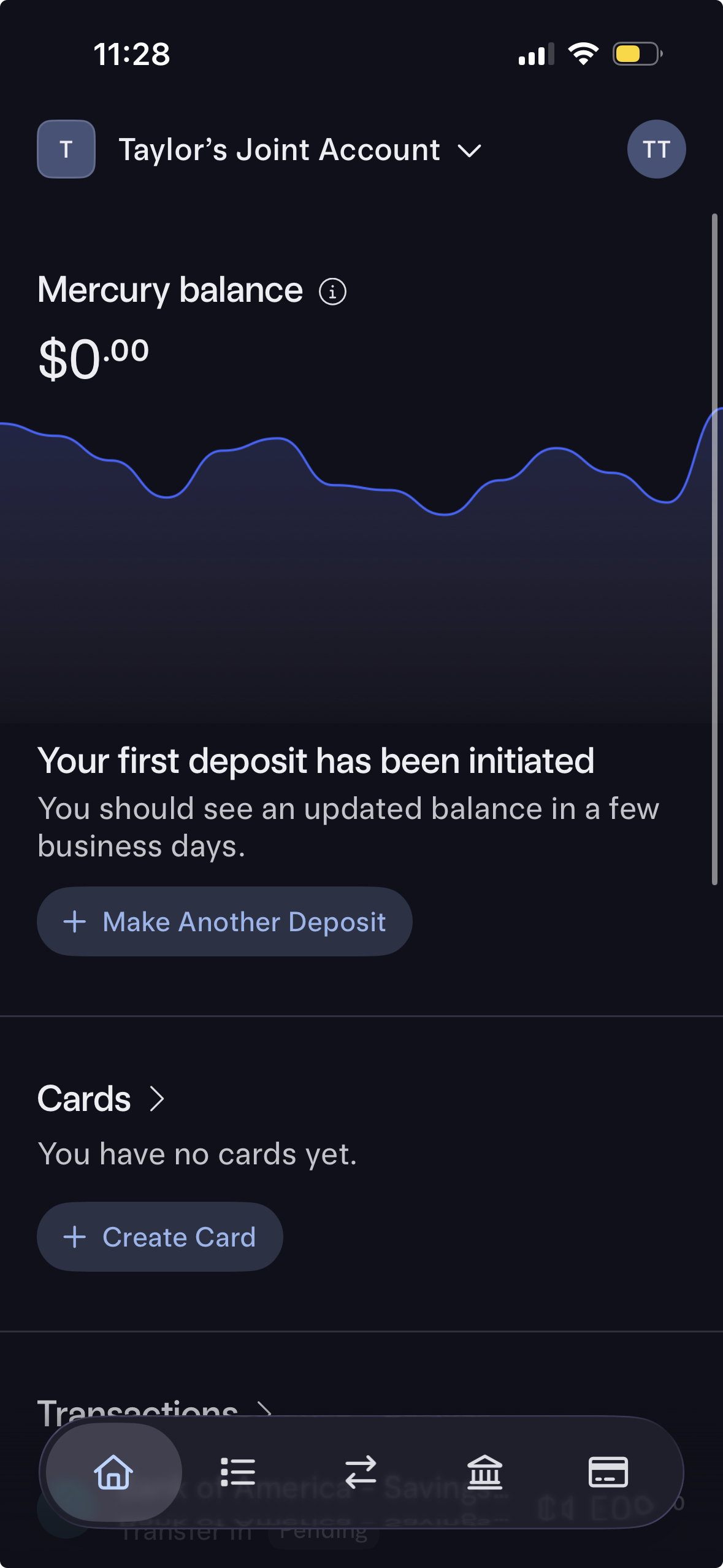

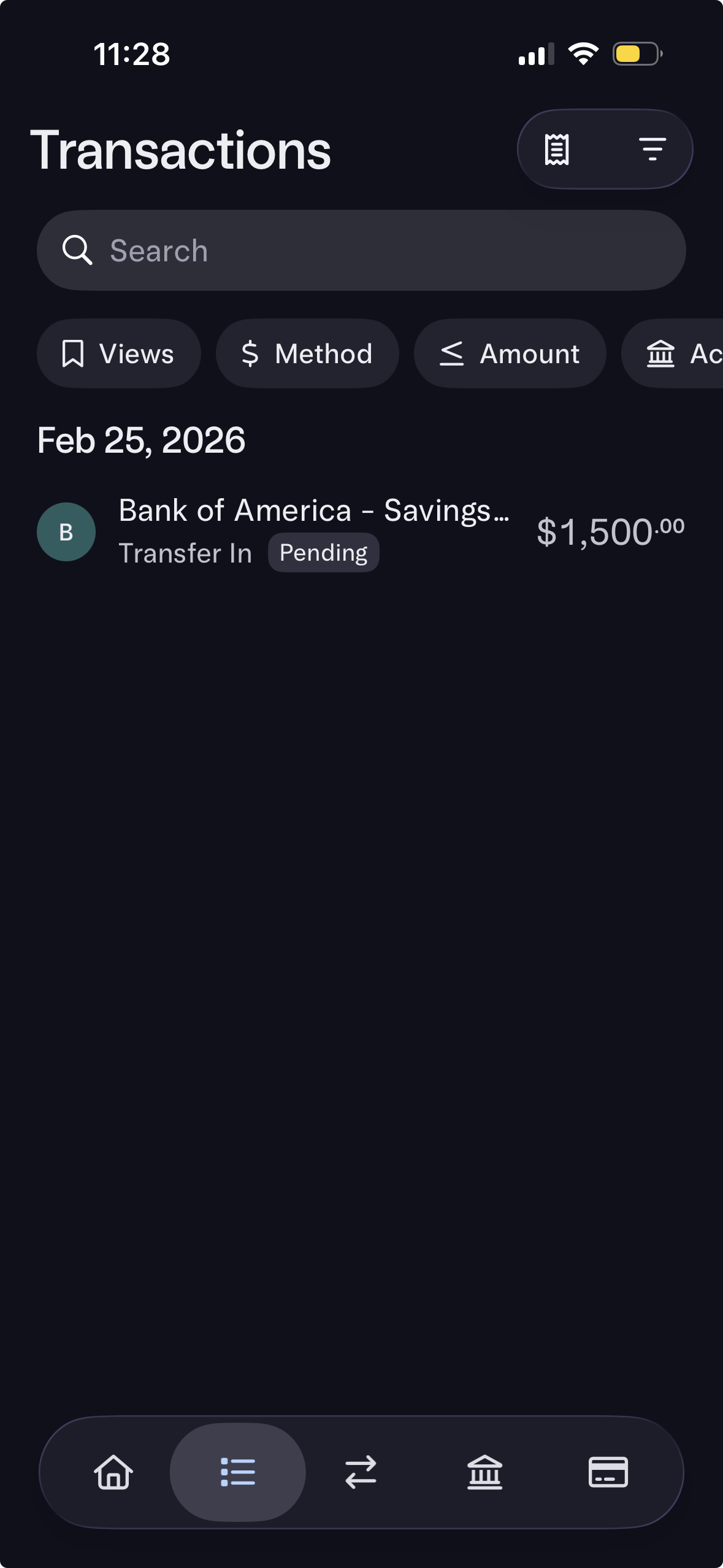

My wife and I opened a joint account in minutes. Mercury onboarded us individually and then instantly approved us. I transferred the money via their BofA Plaid integration -- no routing numbers needed, thank you sir. Smooth.

Bonus points: Mercury did not send me a trillion "PLEASE TAKE OUR SURVEY" emails.

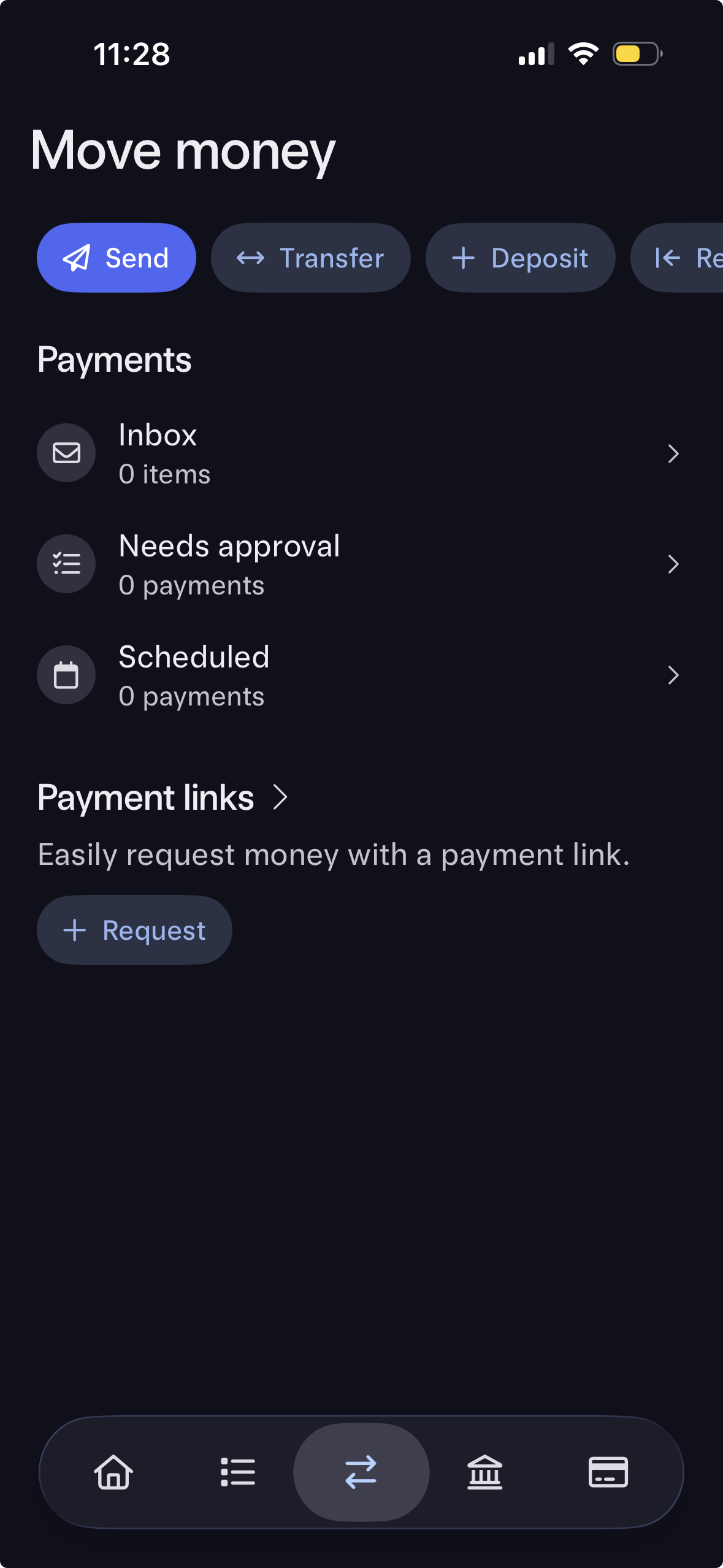

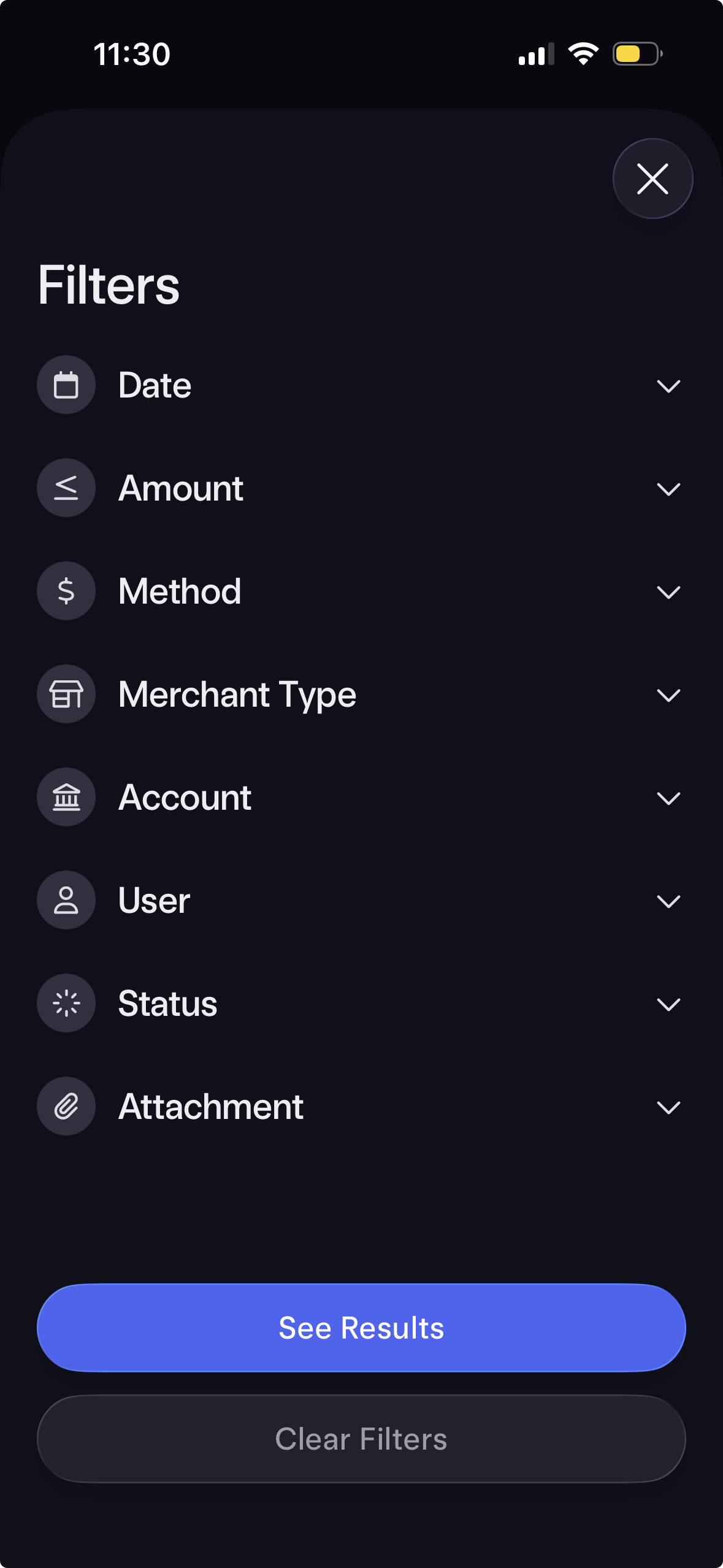

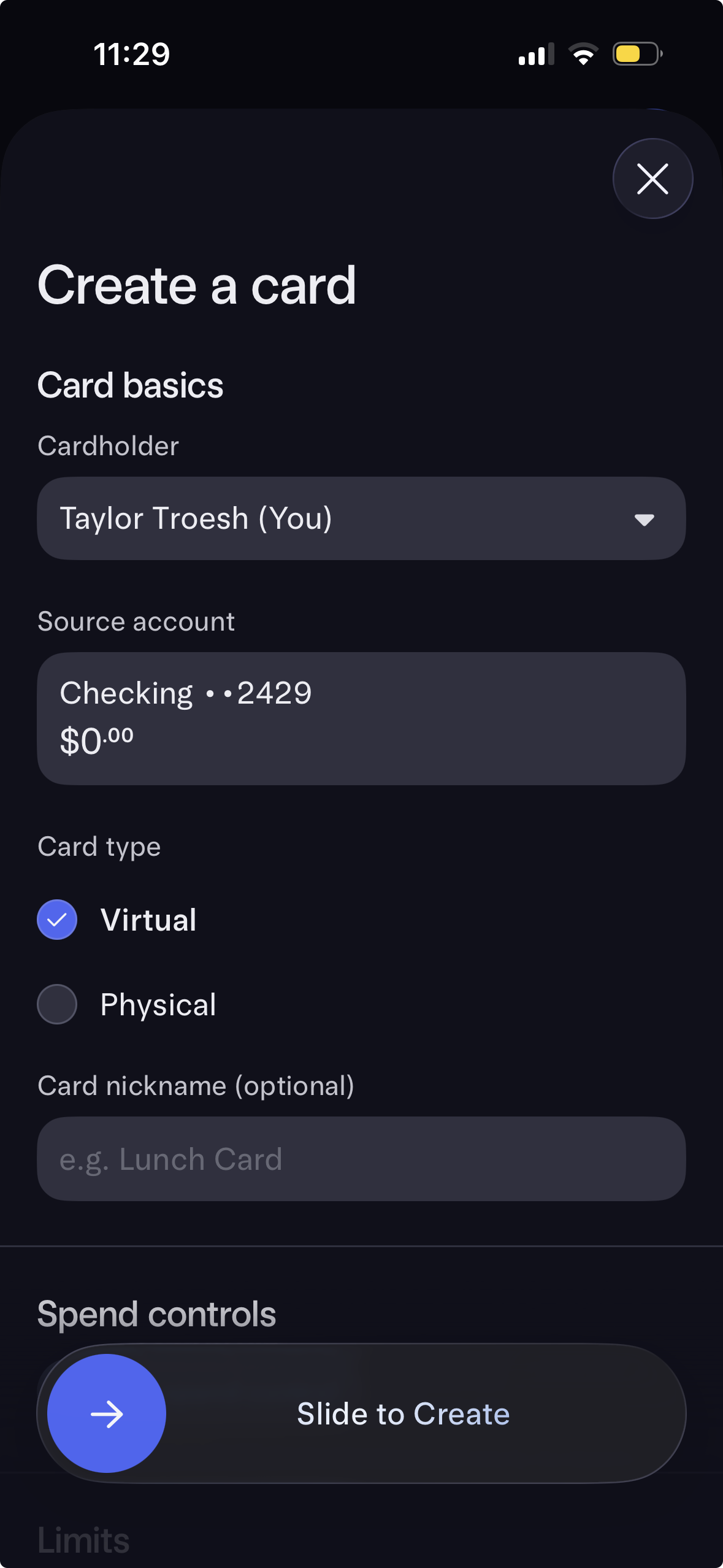

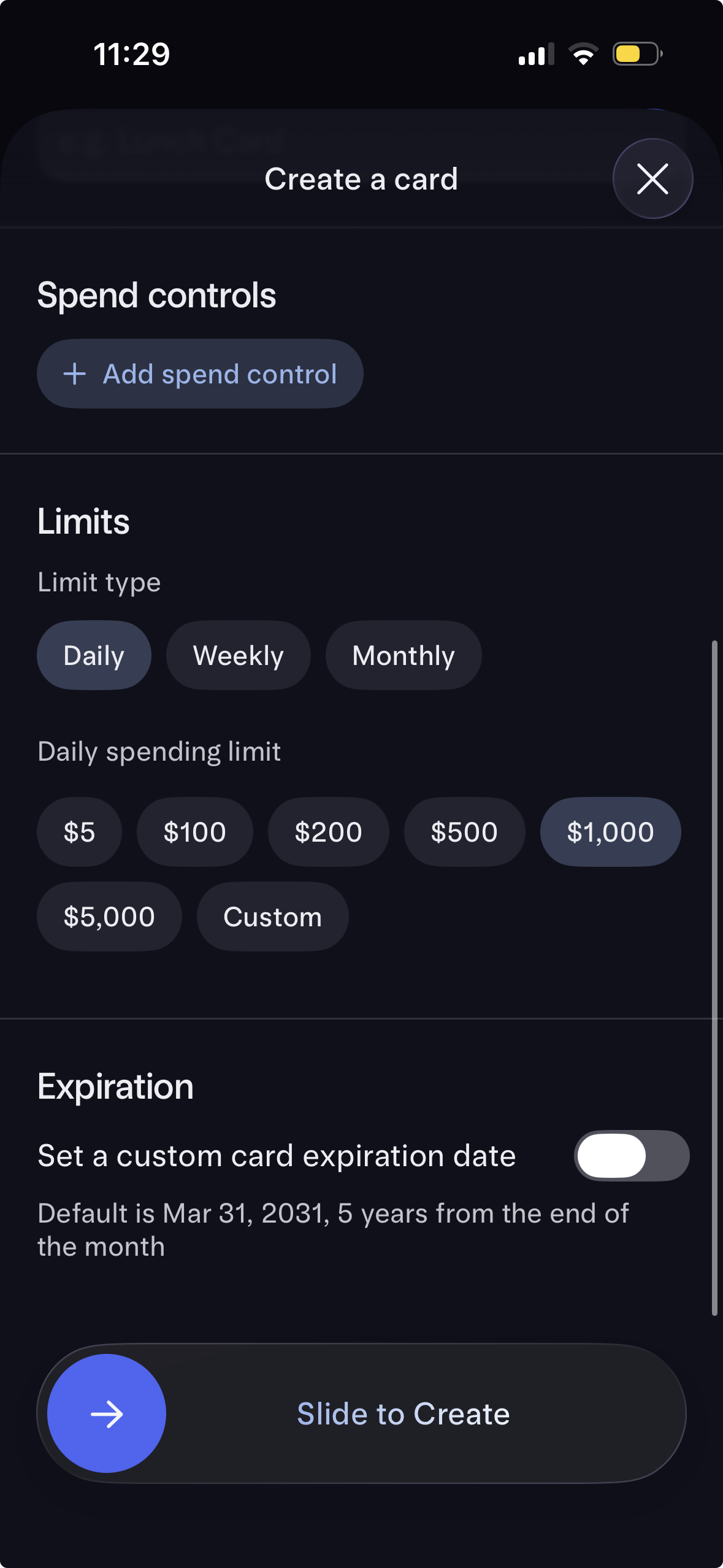

I'm eager to test the following features after our money lands:

If you can survive without physical branches, consider parking your money in Mercury too.