2026-05-15 02:00:56

The sudden shuttering of the United States Agency for International Development (USAID) by DOGE in 2025 is associated with a rise in violent conflicts across Africa, according to a study published on Thursday in Science.

Days into Donald Trump’s second term, his administration began rapidly dismantling USAID, which had, up until that point, been the world’s largest national humanitarian donor. Elon Musk, who spearheaded the Department of Government Efficiency, announced in February 2025 that his team had fed the agency “into the woodchipper.” Tracking models suggest the collapse of USAID may have already caused 762,000 preventable deaths, 500,000 of them children, and the cuts could lead to more than nine million preventable deaths by 2030, according to a study published in February 2026.

Now, a team reports “the earliest evidence of the impact of cuts to USAID on the incidence of violent events” which suggests that “the radical cuts…led to an increase in conflict in the regions that received the most aid from the United States,” according to the new study.

“What we find is that with the USAID shutdown, there was a rapid increase in the likelihood of violence, the severity of violence, and the lethality of violence across nearly one thousand subnational administrative units across Africa,” said Austin L. Wright, study co-author and associate professor and director of strategic initiatives at the Harris School of Public Policy at the University of Chicago, in a call with 404 Media.

In regions that received the most support from USAID, the cuts were associated with a 6.5 percent probability of any conflict event, compared to regions that received no aid. To get a sense of the devastating impact of that statistic, here’s what the study reports:

Between 2001 and 2021, USAID is estimated to have saved 91 million lives, about a third of them children under 5 years old. The agency was created by John F. Kennedy in 1961 and, in the years preceding Trump’s shutdown of the agency, accounted for less than 1 percent of total U.S. federal spending.

The impact of aid on communities is complex and context-dependent. Aid may reduce conflicts in cases where an influx of resources raises the opportunity cost of violence, making fighting less attractive than productive work; this is known as the “opportunity cost effect.” But aid can also fuel conflicts over the handling and distribution of those resources, known as the “rapacity effect.”

The collapse of USAID, which is unprecedented in its scale and speed, has produced the worst of both worlds, according to the new study.

“When those funds rapidly go away, it's a shock to the opportunity cost, and now it becomes more and more attractive to participate in what we might call the unproductive part of the economy, which is participating in violence, engaging in crime, and other activities,” Wright said. “But because the shutdown was so rapid, it didn't really have an opportunity to bind on the rapacity effect, because it's not as if the bridges, roads, or full-on infrastructure went away. The things that individuals or groups might fight over were still present.”

“It’s a bit of a ticking time bomb, because you're both removing the conflict-reducing side of aid, while leaving behind the conflict-enhancing part of aid,” he added.

To quantify the impact of the cuts on violence, Wright and his colleagues examined the Geocoded Official Development Assistance Dataset (GODAD), which monitors geolocated information regarding foreign aid disbursements, alongside the Armed Conflict Location and Event Data (ACLED), which tracks violent events.

The overlapping datasets revealed macro-level patterns between aid distribution and violence in the wake of the cuts, including significant upticks of violence in areas that had previously received large amounts of aid, or where the population had less control over their government due to weaker executive constraints.

Moreover, this increase in conflict has persisted over the course of months and may continue in areas that fall into “conflict traps” defined by self-perpetuating cycles of violence.

These impacts are catastrophic for people who had relied on USAID, as evidenced by the estimated death tolls, and the increased risk of violent conflicts and upheavals. They also present new vulnerabilities for the United States and its allies. Though USAID had an altruistic mission, the agency also served as a vector of soft power and an early-warning system for tracking public health risks, like pandemics. The loss of the agency has already caused national security issues for the U.S., such as the seizure of discarded USAID supplies by Iran-backed Houthi groups in Yemen.

“Those insecurities don't stay where they're created; they travel,” Wright said. “That unfortunately means that the vulnerabilities that are being created at the moment will likely have long-run consequences of creating insecurity that directly impacts the safety of Americans.”

Moreover, Trump’s demolition of USAID prompted many allies in Europe to pull back on their own foreign aid, exacerbating the effects. Though other humanitarian organizations are struggling to mitigate the consequences, the loss of trust caused by the shutdown of USAID is likely permanent, with ominous long-term consequences.

“Even if you reactivated USAID and pretended as if it never went away, you can't reverse these effects because you've already communicated your bad faith behavior,” Wright said. “There is nothing quite like the reputational bomb of simply shutting down an agency, and what that does to the reputation that the U.S. might have if it ever wanted to reinitiate its interventions.”

“From the soft power lens, and a global lens, the reputational effects, I think, are tremendous and will create a bunch of wedges and inefficiencies,” he concluded. “If one simply wanted to restart USAID, it's going to cost much more to rebuild than simply the same budget all over again.”

2026-05-14 21:30:52

A few weeks ago, I came across a wild post on Reddit’s r/DHExchange, a subreddit for trading large datasets: “I hoarded a large database of something valuable, just not what’s [sic] you expect…150k stools images.”

The post, made by a user called Ill_Car_7351, was advertising exactly what it sounds like: A database of poop images, collected from an AI poop analyzing app that he had launched several years ago. Basically, 25,000 people had been taking images of their poop and uploading them to his app. He’d been collecting, analyzing, and annotating these images and now wanted to sell access to them: “I’ve got 150k+ labeled and classified images of 💩 from roughly 25K different people. Jokes aside, I know there’s a lot of value in it (hard to obtain, useful for ML [machine learning] training, cancer studies etc) but not sure on how to move about it. Feels like I’m sitting on a pile of shi..ny coins but can’t find who wants them.” The poster added that “the images are extremely rare,” and that he was trying to figure out how much money he could sell them for.

The comments were from people who were mostly horrified: “When I was 5 the teacher taught me how to read. I now regret that happened,” one read. “What in the fuck,” another read. “How to delete someone else’s post,” a third said.

I messaged the poster and told him I was interested in obtaining the database. Thus began my journey into the Internet of Shit and, by extension, the unpleasant world of the underground sale of highly sensitive, app-collected user data for AI training.

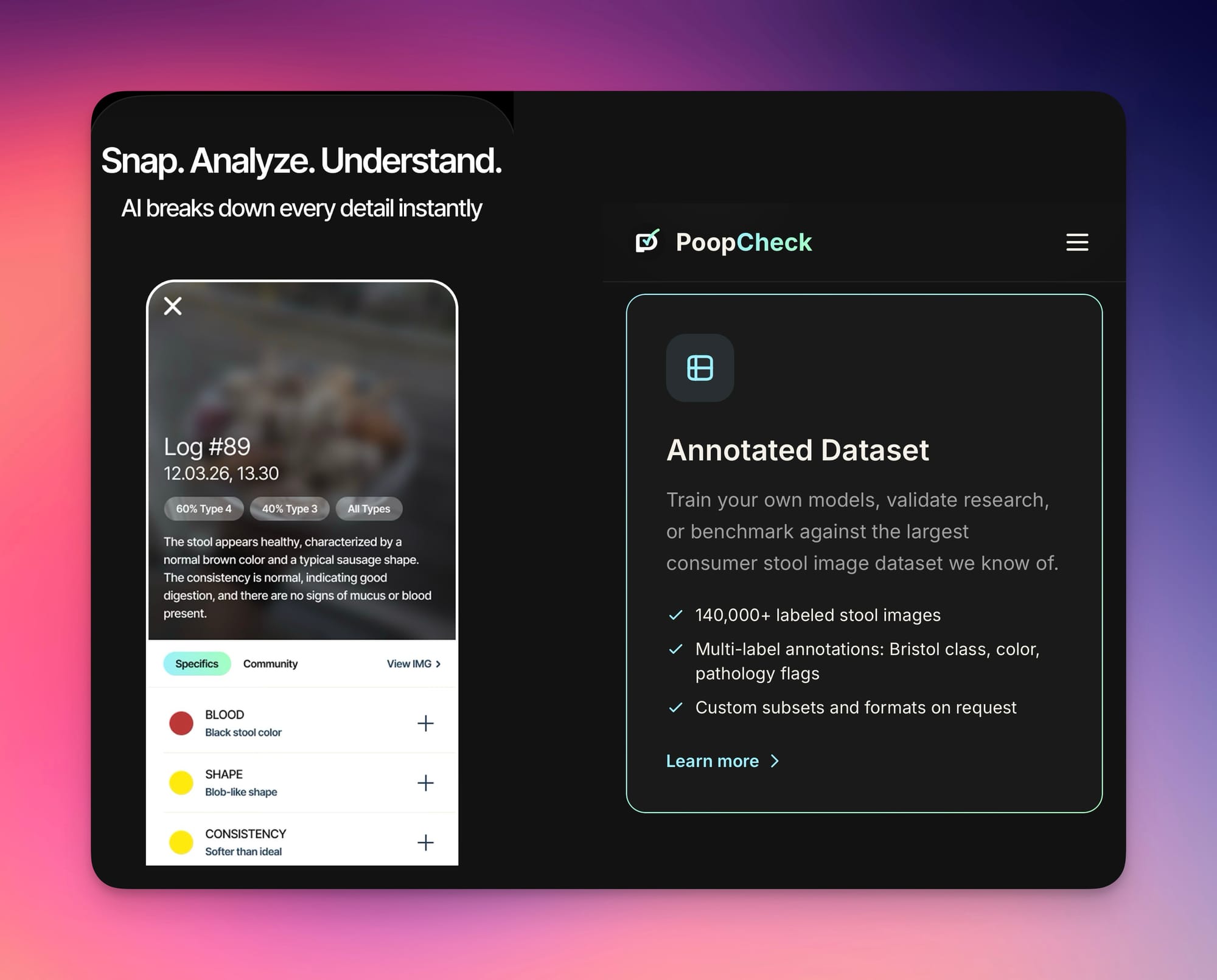

The poop database comes from PoopCheck, an app made by a company called Soft All Things that purports to use AI to analyze images of your stool in order to give you a “daily gut health score.”

“Our AI analyzes your poop using the Bristol Stool Scale and advanced pattern recognition. Get insights on consistency, color, shape, and what they mean for your digestive health,” the app advertises. The Bristol Stool Scale classifies stools into one of seven types ranging from “separate hard lumps, like little pebbles” to “watery with no solid pieces.”

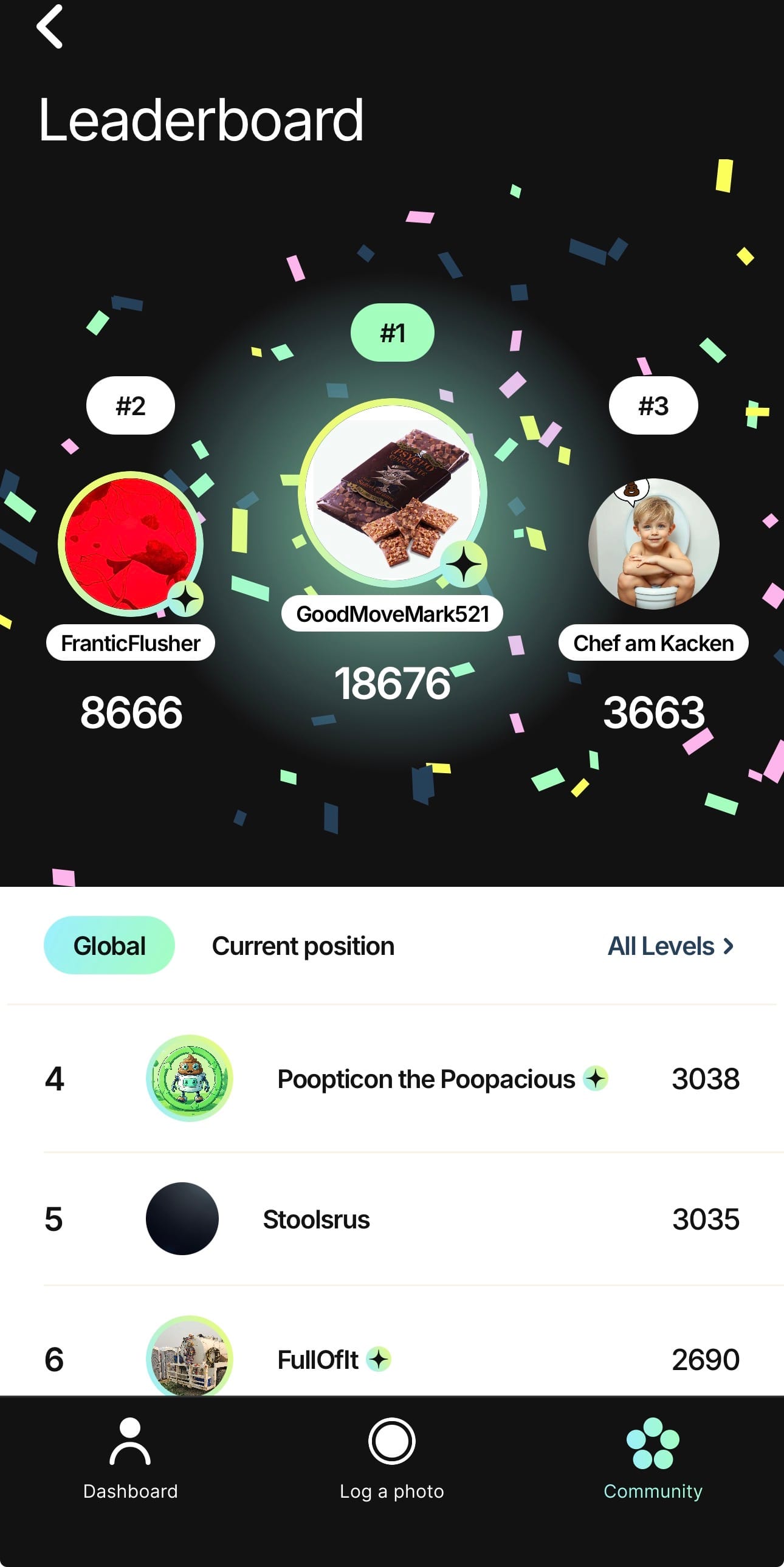

The app also features a “community” of 151,317 “shared stools” at the time of this writing and a “leaderboard,” where people can share images of their poop for commentary from other users and earn points for participating. I found the posts in the community a bit hard to stomach, with titles such as “like play dough,” “Concerned,” and “Dealing with this on and off for the past 3 weeks.” Pictures are not automatically shared to the community; when you take a photo, the app asks if you want to share it.

“Popular” posts on the app include people speculating as to whether their fellow community members have parasites or colon cancer; in the comments section of a few posts I saw people recommending ivermectin to the original poster.

Though users have the option to share their poops with other users, the app sends mixed messages about whether the data uploaded to it will be analyzed, annotated, and packaged with other poops into a commercial database to be sold to AI companies.

On the App Store page for PoopCheck, it says “The developer does not collect any data from this app.” The link to the privacy policy from within the App Store download page does not mention anything about selling or sharing the data and says “your health data is encrypted in transit and at rest. Photos are processed securely. We implement industry-standard security measures to protect your data.”

The PoopCheck website’s About page states “Privacy First.” And “Health data is sensitive. That’s why privacy isn’t a feature, it’s our foundation. Your photos are encrypted. You can delete everything at any time. We built PoopCheck the way we’d want our own health apps built.” The FAQ also notes “your privacy is our priority.”

This is completely different from the “Service Agreement” and “Terms and Conditions” people agree to when they actually open the app and make an account. The Service Agreement states that “by uploading stool images or any health-related data to the App, you grant Soft All Things LLC a worldwide, irrevocable, perpetual, unconditional, royalty-free, fully-paid, transferable, sub licensable license to use, reproduce, modify, adapt, distribute, sell, license, and create derivative works from such content for any lawful purpose, including but not limited to research, commercial exploitation, product development, and third party licensing. You acknowledge that your images and data may be used to create, train, improve, and commercialize AI technologies and machine learning models, and that such models and any outputs derived from your data may be licensed or sold to third parties, including medical organizations, research institutions, and commercial partners.”

It adds that “your data may be irreversibly incorporated into AI models and aggregated datasets. Deletion of your account will remove your personal profile data but does not require the removal of anonymized, aggregated, or derivative data already processed or incorporated into AI models.” Under a section called “Sharing of Information,” it adds that the company reserves the right to share or sell the data “for any business purpose,” including “AI and Data Licensing.”

On Reddit, I messaged Ill_Car_7351 and said “Hi - am interested in this database you posted about. Can you share any more info about what you're looking for / details about the app where it was collected? also any chance there's like, a sample of what the data looks like etc?” They responded quickly and said “Hey! The db was gathered by real users, we had 25k users over the last couple years, since we launched the app. It’s called PoopCheck btw if you wanna see it. Let’s maybe talk via email? I’ll be happy to share a sample of the data if that interests you.”

I sent an email to someone named “Marco” at Soft All Things, who identified himself as one of the founders of PoopCheck. I said I had reached out on Reddit and was interested in a sample of the data. I used my real email address and real name.

“We can surely send you a sampling of the dataset, would a Google Drive link containing an image folder and JSON data work? We can also figure out other ways if you prefer,” Marco said. “In terms of the actual dataset you need, what would be the size of it for your needs? And what would you be using it for? Just so we can make sure it’s actually a good fit for your use case.”

I told Marco that I wanted 10,000 pieces of data and said I would use it for AI training. I asked him for pricing and what type of data was included.

Marco responded:

“You'll find a folder with images and JSON metadata covering the key fields we capture per entry. Let us know if you have any questions about it.

To give you a better idea of the dataset and pricing options: we currently have over 150,000 images validated by AI. Around 5,000 of these have also been manually reviewed by a member of our team, who verified the AI output and labeling, making this portion more valuable and priced accordingly. It's also worth noting that certain types on the Bristol Stool Scale are rarer than others, so availability may vary depending on your specific needs.

With that in mind, here there is an estimation of pricing options:

• 10,000 unreviewed images (AI-validated) — $3,000

• 5,000 fully human-reviewed & annotated (on top of AI validation) — $4,000

• 5,000 reviewed + 5,000 unreviewed — $5,000

It would be great to have a quick call to take this further as there are a few things about the dataset's structure and coverage that are easier to walk through live.”
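Those three offers imply quite different per-image prices. The arithmetic below is a rough sketch based only on the numbers in Marco's email. The variable names are illustrative, and the bundle comparison assumes unreviewed images could be bought pro rata at the 10,000-image rate, which the email does not say.

```python
# Quoted package prices from the email: (number of images, price in dollars)
packages = {
    "unreviewed": (10_000, 3_000),
    "human_reviewed": (5_000, 4_000),
    "mixed": (10_000, 5_000),  # 5,000 reviewed + 5,000 unreviewed
}


def price_per_image(count: int, price: int) -> float:
    """Dollars per image for one package."""
    return price / count


per_image = {name: price_per_image(*pkg) for name, pkg in packages.items()}
for name, value in per_image.items():
    print(f"{name}: ${value:.2f} per image")

# Assumption (not stated in the email): 5,000 unreviewed images bought on
# their own would cost half the 10,000-image price.
separate_total = 4_000 + 5_000 * per_image["unreviewed"]
bundle_discount = separate_total - 5_000
print(f"bundle saves ${bundle_discount:.0f}")
```

On these numbers, human review raises the asking price from about 30 cents to 80 cents an image, and under the pro rata assumption the mixed bundle comes out about $500 cheaper than buying the two halves separately.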

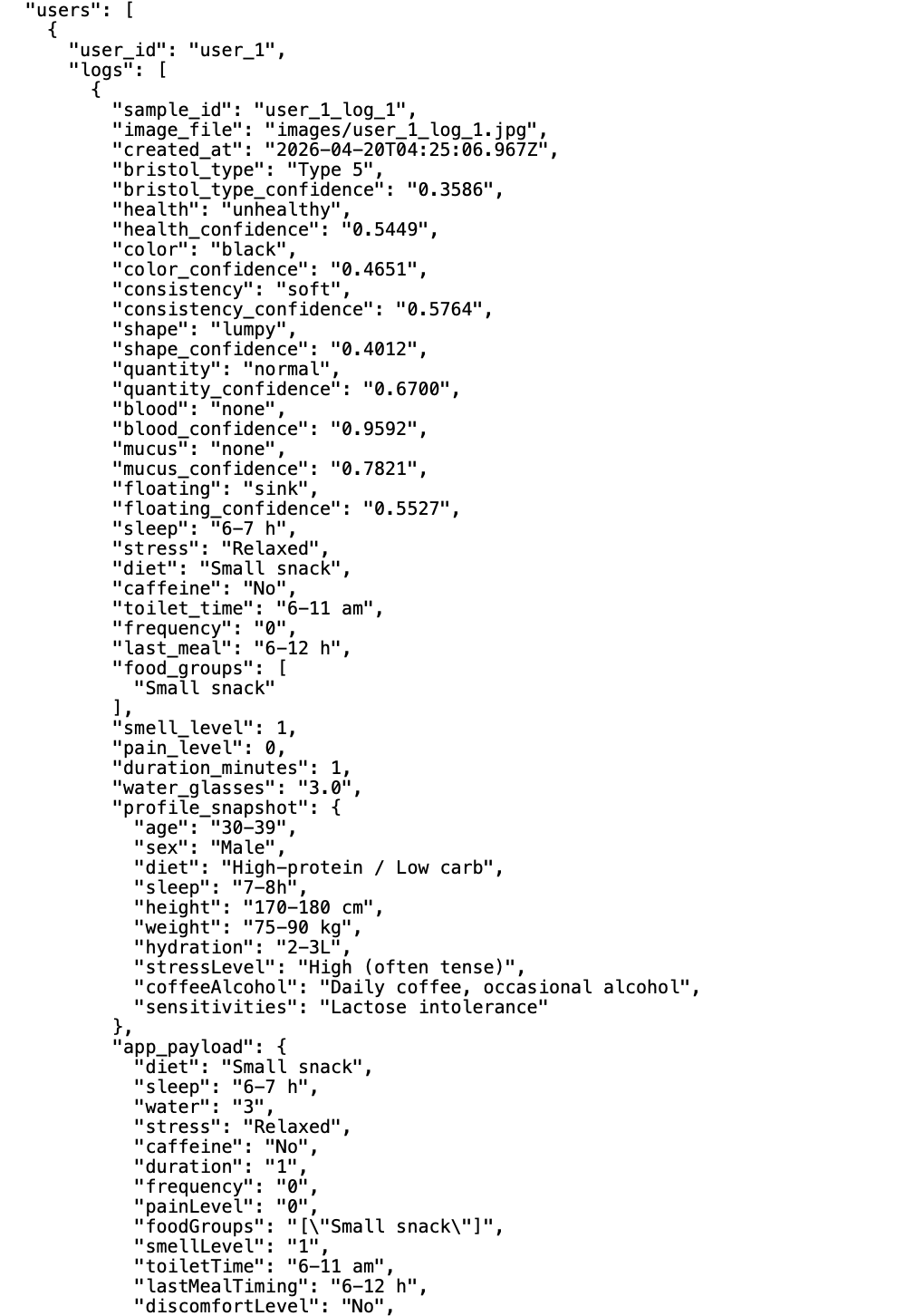

The sample dataset Marco sent me included 20 images of poop from four specific users (five poops each). Each image was tied to a series of user-reported data points as well as AI analyses of the image. AI-analyzed data points included the time the poop was taken, its Bristol type, whether it was “healthy” or “unhealthy,” its “shape” and “consistency,” whether there was blood or mucus in it, its quantity (“large,” “normal,” or “small”), and whether it was “floating” or not. Each of these data points also had a “confidence” score for how confident the AI was in its analysis. Each image also had user-reported information, which included the answers to a series of questions: “when did you have your last meal”; “any discomfort while pooping?” (with options such as “Hard to pass,” “burning,” and “sharp pain”); “How long did it take?”; “Did it smell stronger than usual?”; and “Coffee or alcohol in the last 12 hours?” The data also included demographic information: age ranges, sex, height, weight, and sensitivities such as “lactose intolerance” or “irritable bowel syndrome.” Each image is tied to a specific user through a field called “externalIndividualID.”
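To make the shape of that metadata concrete, here is a rough sketch of how one entry might be modeled in Python. Only `externalIndividualID` is a field name reported above. Every other name, type, and sample value is a hypothetical stand-in for illustration, not the actual schema of the file Marco sent.

```python
from dataclasses import dataclass, field


@dataclass
class AIFinding:
    """One AI-generated label plus the model's confidence in it."""
    value: str
    confidence: float  # assumed here to range from 0.0 to 1.0


@dataclass
class StoolEntry:
    externalIndividualID: str          # the only field name taken from the sample
    ai_findings: dict[str, AIFinding]  # hypothetical keys, e.g. "bristol_type"
    user_answers: dict[str, str]       # self-reported answers to in-app questions
    demographics: dict[str, str] = field(default_factory=dict)


def entries_by_user(entries: list[StoolEntry]) -> dict[str, list[StoolEntry]]:
    """Group entries by the per-user identifier."""
    grouped: dict[str, list[StoolEntry]] = {}
    for entry in entries:
        grouped.setdefault(entry.externalIndividualID, []).append(entry)
    return grouped


# Placeholder values for illustration only, not real data.
sample = [
    StoolEntry("user-a", {"bristol_type": AIFinding("4", 0.9)}, {"coffee_or_alcohol": "no"}),
    StoolEntry("user-a", {"bristol_type": AIFinding("3", 0.8)}, {"coffee_or_alcohol": "yes"}),
    StoolEntry("user-b", {"bristol_type": AIFinding("6", 0.7)}, {"coffee_or_alcohol": "no"}),
]
print({uid: len(items) for uid, items in entries_by_user(sample).items()})
```

The grouping step is the point of the sketch: because every entry carries the same per-user identifier, anyone holding the dataset could line up all of one person's images, symptoms, and demographic details.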

Soft All Things is not exactly quiet about the database that it has created. The PoopCheck website has a page called “For Business” that advertises the database. The company sells access to both the “Stool Analysis API,” which “turns a stool photo into a structured health report,” and the “Annotated Dataset” of 140,000+ images to “train your own models.” It advertises this as the “largest consumer stool image dataset we know of.”

It maybe should not be terribly surprising that a free app in which you upload images of your poop to a random company would have a business model focused on packaging and selling that data. But this type of data collection, of our literal poop, highlights how almost anything we do on our phones can ultimately end up for sale. The fact that the company is advertising this data for sale at all indicates that there is an AI gold rush for any and all types of data, even our literal waste.

Research has shown, over and over again, that de-identified “anonymous” data doesn’t necessarily remain anonymous when combined with other datasets. Toward the end of last year, the appliance giant Kohler endured a security shitshow when a researcher showed that its stool-analyzing smart toilet camera was not actually properly encrypting the images that it sent to Kohler. The concern there was that your poop data would be somehow accessed by bad actors. In the case of PoopCheck, anyone can simply buy access.

After I told Marco I was writing an article about PoopCheck and its database, he stopped responding to me and did not answer any of my questions.

2026-05-14 01:02:59

An eon ago, in the year 2012, an editor at my first job at U.S. News and World Report had the idea that we should have a YouTube channel. It wasn’t a pivot to video, exactly, but it would be a bet on an emerging platform where some creators were beginning to go viral with news content. The idea was to put the journalists in front of the camera and have them talk about their articles and the news of the day. It did not go well.

I was nervous, unconfident, had a bad haircut, and, like everyone in Washington, D.C. then and now, was very unfashionable. I had no media training, had never been on TV or video of any sort. I did not have a smartphone. I was socially awkward and spoke in monotone. I blinked endlessly while I talked and fidgeted like crazy with my hands. I constantly said um, tripped over my words, and generally had no idea what I was doing. We made a series of videos with titles like “Head Injury Studies Continue to Cause Alarm in NFL,” “Are the Politics of Climate Change Shifting?,” and “Which Party Will Get the ‘Internet Vote’?” The videos were poorly edited, sounded weird, and got zero traction.

I did not want to make these videos but it was a newsroom-wide initiative and so I did it anyway. Thankfully and mercifully, almost no one watched any of these videos, because they were bad. Then and now, they are the opposite of what anyone watches on the internet. And yet, these videos were roughly about as good as a series of podcast videos being released by the Washington Post’s new and drastically worsened Opinion section, apparently at great expense to the outlet. They were also about as popular, with many of my videos garnering upwards of several dozen views.

On Sunday, the very good media newsletter Status reported that the Washington Post recently spent $80,000 on new audio and video gear for its new Make It Make Sense podcast, which features the Washington Post Editorial Board. It has also remodeled a studio in its office, which seems apparent in a very bad trailer for the show titled “A News Show You Can Trust, Finally,” but not in any of its previously recorded videos (some of which were released this week). All of this has happened at the behest of opinion editor Adam O’Neal and Washington Post owner Jeff Bezos as part of the section’s shift rightward to focus on billionaire- and free market-friendly content.

2026-05-13 22:24:03

Fiber-optic cable has become a staple of drone war. From Ukraine to the Sahel, combatants are fielding quadcopters piloted via kilometer-long lengths of cable that allow operators to control them across vast distances while insulating the drones from being knocked from the sky by electronic warfare. This technique was once a cheap way for militaries to beat their opponents' electronic warfare, but demand for cable from data centers and war is raising the cost of every flight.

War is a cat and mouse game. One side deploys a devastating tactic and the other side figures out a way to defeat it. When small and cheap quadcopter drones began to dominate the skies, first in the hands of Islamic State and then in Russia’s war on Ukraine, fighters quickly learned it was easier to knock them out of the sky with electronic warfare than it was to shoot them down.

Then, in 2023, Russia began to deploy FPV drones controlled via lengths of fiber-optic cable. The cable sits spooled in a tube below the drone and unwinds as it flies. It provides a fast and clear connection between a drone and its operator, and because no signal travels through the air, the link is immune to jamming.

Ukraine took heavy vehicle losses when Moscow began using fiber-optic drones, but Kyiv quickly adopted the tactic, and now wheat fields in the country are covered in discarded cable. Three years ago, this was a cheap and effective means of slipping past enemy defenses. In 2026, it’s not nearly as cost-effective.

“Fiber-optics is still happening at the battlefield, although not as much as it used to be. It's extremely pricey now. We used to buy 50km spool for $300, now it's easily $2500. Just so you know,” Dimko Zhluktenko, a Ukrainian soldier, said in a post on X on May 10.

The price of fiber-optic cable has been steadily rising since about 2023 and has almost doubled in just the past few months. In January, Shanghai-based fiber-optic company Sun Telecom declared there would be a “fiber famine” in 2026. Last year, a kilometer of its G.652D fiber cable cost $2.20. By December of 2025 the same length of cable cost $3. A month later, Sun Telecom had increased the price again to $4.10.
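As a quick check on those figures, the percentage changes can be worked out directly. This is a minimal sketch that uses only the three Sun Telecom per-kilometer prices quoted above; `pct_change` is just an illustrative helper name.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change going from old to new."""
    return (new - old) / old * 100


# Sun Telecom's quoted G.652D prices, in dollars per kilometer
price_2025 = 2.20      # "last year"
price_dec_2025 = 3.00  # December 2025
price_jan_2026 = 4.10  # a month later

print(f"2025 to December 2025: {pct_change(price_2025, price_dec_2025):.0f}%")
print(f"December to January:   {pct_change(price_dec_2025, price_jan_2026):.0f}%")
print(f"Overall:               {pct_change(price_2025, price_jan_2026):.0f}%")
```

On these numbers the price rose by a bit more than a third at each step and by roughly 86 percent overall, which is consistent with the description of prices almost doubling.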

One of the big market shifts driving up the cost of fiber is an increased demand for data centers as companies rush to build out the compute infrastructure they believe they’ll need for AI. “Almost every phone call I get from my customers is trying to see, how do we get them more? I think next year the hyperscalers will be our biggest customers,” Wendell Weeks, the CEO of fiber-optic cable manufacturer Corning, told CNBC after his company signed a deal with Meta for $6 billion in cable.

In a January LinkedIn post, North Carolina telecom company Brightspeed warned of “fiber-supply shortages.” Two other American ISPs told trade publication Broadband Breakfast they’d seen orders for fiber unexpectedly cancelled. “We have heard concerns in recent weeks of timeframes slipping, and concerns about the ability to obtain supplies at all, as circumstances change,” Mike Romano, the CEO of NTCA, a rural broadband trade group, told Broadband Breakfast.

Data-center-driven demand is only part of the story. Wars in Ukraine, Iran, and the Sahel region of Africa are hungry for fiber-optic cable and manufacturers can barely keep up. Combined, Russia and Ukraine consume 50-60 million kilometers of fiber-optic cable every year, according to Kyiv Post. Most of this comes from China because both countries lack the domestic manufacturing base to produce that much cable. The demand has caused the price of a kilometer of Chinese fiber-optic cable to go from $2.33 in 2025 to $5.83 in 2026.
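For a sense of scale, those two figures can be multiplied together. This is a back-of-the-envelope sketch only: it assumes, hypothetically, that the entire 50-60 million kilometers is bought at the quoted Chinese per-kilometer price and that consumption stays the same from one year to the next, neither of which is established by the reporting.

```python
# Figures quoted above
annual_km_low, annual_km_high = 50_000_000, 60_000_000
price_2025, price_2026 = 2.33, 5.83  # dollars per kilometer of Chinese fiber


def annual_cost_millions(km: int, price_per_km: float) -> float:
    """Total cost in millions of dollars for a given length of cable."""
    return km * price_per_km / 1_000_000


# Assumption: all consumption is priced at the quoted per-kilometer rate.
for label, price in (("2025", price_2025), ("2026", price_2026)):
    low = annual_cost_millions(annual_km_low, price)
    high = annual_cost_millions(annual_km_high, price)
    print(f"{label} prices: ${low:.1f}M to ${high:.1f}M per year")

print(f"price multiple: {price_2026 / price_2025:.2f}x")
```

Under those assumptions, the same volume of cable that would have cost roughly $117 million to $140 million at 2025 prices would cost roughly $292 million to $350 million at 2026 prices, about two and a half times as much.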

The core component of fiber-optic cables is a long, flexible strand of manufactured glass or plastic called an optical fiber. The delicate strands are about the width of a human hair. Ukraine doesn’t manufacture optical fibers. Russia had one factory in the city of Saransk but Ukraine destroyed it with drones in the spring of 2025. Now both countries rely on China to keep drones in the air. Exports of fiber-optic cable to Russia spiked after Ukraine destroyed the factory, peaking at 717.5 million meters in November of 2025.

“Ukraine has recently expanded its use of Starlink communications for attack drones, which are impractical for Russia to jam. The cost of a Starlink antenna—which is expended in an attack—is now lower than the cost of the longest-range FPV fiber-optic spools,” Roy Gardiner, an OSINT analyst at Defense Tech for Ukraine told 404 Media. “The drive toward the development and deploying at least partial autonomous control for drones to defeat electronic warfare jamming will accelerate as fiber optic FPVs become less available.”

During war, humans become great innovators. The game of cat and mouse continues and fighters are developing strategies to combat fiber-optic drones. In September of 2025, Russian and Ukrainian military bloggers began to report a new technique for countering the wire-guided drones: a 150-meter-long fence made of spinning barbed wire. The theory is that the fiber-optic cable, dragged along the ground, will get caught in the fence and severed.

Despite rising costs and the dangers posed by barbed wire, the drones keep flying. In March, Iran used fiber-optic controlled drones to strike American targets in the Gulf, destroying, among other things, a Black Hawk helicopter parked in Iraq. The known fiber-optic FPV drones top out at about 50 kilometers of cable, a distance that will clear the Strait of Hormuz at its narrowest point.

2026-05-13 21:10:19

We start this week with Joseph’s story about how we obtained Haotian AI, a sought-after piece of realtime video deepfake software that lets you turn into anyone else during Microsoft Teams, WhatsApp, or Zoom calls. After the break, Matthew tells us about some insane Yu-Gi-Oh trading card drama. In the subscribers-only section, Jason explains how the hard drive shortage is impacting those archiving the internet.

Listen to the weekly podcast on Apple Podcasts, Spotify, or YouTube. Become a paid subscriber for access to this episode's bonus content and to power our journalism. If you become a paid subscriber, check your inbox for an email from our podcast host Transistor for a link to the subscribers-only version! You can also add that subscribers feed to your podcast app of choice and never miss an episode that way. The email should also contain the subscribers-only unlisted YouTube link for the extended video version. It will also be in the show notes in your podcast player.

2026-05-13 21:00:48

Tech company executives are confident that AI will completely transform the economy and point to the changes they see in-house to prove that this change is coming fast. At Meta, Google, Microsoft, and others, leadership says that AI generates a growing share of the overall code, which makes it cheaper and faster to produce. The implication is that if this AI is good enough that tech companies are using it internally to improve efficiency and reduce headcount, it’s only a matter of time until every other industry is similarly transformed.

Developers who are told to use AI whether they like it or not, however, tell a different story. On Reddit, Hacker News, and other places where people in software development talk to each other, more and more people are becoming disillusioned with the promise of code generated by large language models. Developers talk not just about how the AI output is often flawed, but also about how using AI to get the job done is often a more time-consuming, harder, and more frustrating experience because they have to go through the output and fix its mistakes. More concerning, developers who use AI at work report that they feel like they are de-skilling themselves and losing their ability to do their jobs as well as they used to.

“We're being told to use [AI] agents for broad changes across our codebase. There's no way to evaluate whether that much code is well-written or secure—especially when hundreds of other programmers in the company are doing the same,” a UX designer at a midsized tech company told me. 404 Media granted all the developers we talked to for this story anonymity because they signed non-disclosure agreements or because they fear retribution from their employers. “We're building a rat's nest of tech debt that will be impossible to untangle when these models become prohibitively expensive (any minute now...).”

The actual quality of output doesn't matter as much as our willingness to participate.

Tech company executives love to brag about how much of the code at their company is AI-generated. In April, Google said that three quarters of new code at the company was generated by AI. Last year, Microsoft CEO Satya Nadella said up to 30 percent of the company’s code was generated by AI. Microsoft’s CTO Kevin Scott said he expects 95 percent of all code at the company to be AI-generated by 2030. Meta’s Mark Zuckerberg said last year he expects AI to write most of the code improving AI within 12-18 months. Anthropic says 90 percent of the code written by most of its team is AI-generated. Tech companies have also been bragging about their “tokenmaxxing,” or how much money they’re spending on AI tools instead of human employees.

Predictably, the huge spike in productivity that these companies claim their own AI products have enabled hasn’t resulted in more or better products, shorter work weeks, or better consumer experiences. Mostly, AI implementation in tech companies has been used to justify multiple massive rounds of layoffs. To name just a few recent examples where tech companies said they reduced headcount because of AI use: Meta said it would cut 10 percent of its workforce (around 8,000 people), Microsoft said it would offer voluntary retirement to 7 percent of its American workforce (which totals around 125,000 people), and Snapchat said it would lay off 16 percent of its full-time staffers (about 1,000 people).