2026-05-15 16:59:59

This article explains the practical differences between AI workflows, autonomous agents, and multi-agent systems through real-world analogies, production trade-offs, and code examples. It argues that workflows are best for deterministic, structured tasks with predictable execution paths, while agents are better suited for open-ended problems requiring dynamic tool selection and adaptive reasoning. Multi-agent systems introduce specialized coordination between multiple agents but also increase operational complexity, debugging overhead, and cost. The piece also explores hybrid architectures, beginner mistakes, production reliability, and why workflows often remain the best starting point for real-world AI systems.

2026-05-15 12:24:11

Rust is now powering much of the modern JavaScript toolchain, from bundlers and linters to CSS pipelines and mobile shared cores.

2026-05-15 12:19:22


OpenAI ChatGPT Plus vs Anthropic Claude Pro vs Google Gemini AI Pro vs xAI SuperGrok vs Moonshot Kimi K2.6 vs Meta Muse Spark vs MiniMax M2.7 vs Microsoft Copilot Pro vs Perplexity Pro — Evaluated Across 10 Categories – with a closing section on consumer data on Reddit.

In 2026, the $20/month AI subscription market is the most ferocious battleground in tech.

What once bought you priority access to GPT-4 now unlocks autonomous coding agents, frontier multimodal models, 100+ AI-generated videos per day, and real-time research platforms that synthesize hundreds of live sources.

The disruption is coming not just from Western incumbents but from unexpected challengers — including Meta, which has deployed a genuinely frontier-grade AI model called Muse Spark across its entire social ecosystem for free, obliterating the notion that cutting-edge AI requires a subscription.

This article compares nine AI plans at or near the $20/month price point:

| Provider | Plan | Price |
|----|----|----|
| OpenAI | ChatGPT Plus | $20/month |
| Anthropic | Claude Pro | $20/month |
| Google | Google AI Pro | $19.99/month |
| xAI | SuperGrok | $30/month* |
| Moonshot AI | Kimi Moderato | ~$19/month |
| Meta | Meta AI (Muse Spark) | $0 |
| MiniMax | Token Plan Plus | $20/month |
| Microsoft | Copilot Pro | $20/month |
| Perplexity | Perplexity Pro | $20/month |

Pricing notes:

Methodology: Each provider is scored 0–10 across 10 categories. Scores are summed into a total out of 100. The article ends with a final ranked scoreboard and the three overall winners, with two honorable mentions.

Core Model(s): GPT-5.5 (primary, rolled out April 23, 2026), GPT-5.4 Thinking, GPT-5.3 Instant (fallback).

What you get:

Usage limits:

Score: 9/10

Core Model(s): Claude Sonnet 4.6 (primary), limited Claude Opus 4.7 access; Claude Haiku 4.5 as fallback.

What you get:

Usage limits:

Notable: Claude Opus 4.7 (released April 16, 2026) achieved 87.6% on SWE-bench Verified and 94.2% on GPQA Diamond — but is severely rate-limited on the $20 plan.

Score: 7/10

Core Model(s): Gemini 3.1 Pro (released February 19, 2026), Gemini 3 Pro.

What you get:

Score: 9/10

Core Model(s): Grok 4.3 (generally available April 30, 2026 via API; staged SuperGrok rollout).

What you get:

Annual option: $300/year (17% discount).

Score: 7/10

Core Model: Kimi K2.6 (released April 20, 2026). Architecture: 1 trillion total parameters, 32B active per token, Mixture-of-Experts.

What you get:

Score: 8/10

Core Model: Muse Spark (released April 8, 2026 by Meta Superintelligence Labs). Proprietary, natively multimodal — NOT open weights (departure from Meta’s prior Llama strategy).

What you get (for free):

What it does NOT have:

The wildcard point: Muse Spark scored 89.5% on GPQA Diamond, 58.4% on Humanity’s Last Exam (Contemplating mode), and 42.8% on HealthBench Hard — the highest HealthBench Hard score of any model tested, beating GPT-5.5 (40.1%) and Gemini 3.1 Pro (20.6%). This performance is available to anyone with a Meta account at $0.

Note: Meta has flagged that Muse Spark exhibited “evaluation awareness” — flagging public benchmarks as tests at a 19.8% rate on public sets vs 2.0% on internal sets. Treat public benchmark claims with appropriate scrutiny.

Score: 7/10 (extraordinary for $0; scored on what the free tier delivers vs all paid plans)

Core Model: MiniMax M2.7 (released March 18, 2026). Architecture: Sparse MoE, ~230B total parameters, ~10B active per token.

What you get:

Score: 9/10

Core Model(s): GPT-5.5 Instant (integrated May 2026 as “GPT-5.5 Quick response” in model selector); full GPT-5.5 Pro available for priority M365 Copilot licensed users.

What you get:

Critical limitations:

Score: 6/10

Core Models (user-selectable per query): GPT-5.4, Claude Sonnet/Opus 4.6, Gemini 3.1 Pro, Mistral Large.

What you get:

Score: 8/10

| 🥇 1st | 🥈 2nd | 🥉 3rd |
|----|----|----|
| ChatGPT Plus (9) | Google AI Pro (9) | MiniMax Token Plan Plus (9) |
| Most features, deepest integrations | Best ecosystem value; 5TB storage | Only all-modality plan at $20 |

ChatGPT Plus — Coding Score: 9/10

| 🥇 1st (Tie) | 🥇 1st (Tie) | 🥉 3rd |
|----|----|----|
| ChatGPT Plus (9) | Claude Pro (9) | Kimi Moderato (9) |
| Codex Agent + Agent Mode | Claude Code CLI, best SWE-Pro score | K2.6 Agent Swarm + LiveCodeBench 89.6% |

| Provider | Long-Form Quality | Creative Writing | Tone Control | Factual Accuracy | Score |
|----|----|----|----|----|----|
| ChatGPT Plus | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★☆ | 9/10 |
| Claude Pro | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | 10/10 |
| Google AI Pro | ★★★★☆ | ★★★★☆ | ★★★★☆ | ★★★★★ | 8/10 |
| SuperGrok | ★★★★☆ | ★★★★☆ | ★★★☆☆ | ★★★★☆ | 7/10 |
| Kimi Moderato | ★★★☆☆ | ★★★☆☆ | ★★★★☆ | ★★★★☆ | 7/10 |
| Meta AI (Muse Spark) | ★★★★☆ | ★★★☆☆ | ★★★★☆ | ★★★★☆ | 7/10 |
| MiniMax M2.7 | ★★★☆☆ | ★★★☆☆ | ★★★★☆ | ★★★★☆ | 7/10 |
| Copilot Pro | ★★★★☆ | ★★★★☆ | ★★★★☆ | ★★★★☆ | 8/10 |
| Perplexity Pro | ★★★★☆ | ★★★☆☆ | ★★★★☆ | ★★★★★ | 7/10 |

Claude Pro (10/10): Sonnet 4.6 remains the undisputed writing quality leader. Nuanced, tonally precise, structurally coherent over extreme output lengths. Every independent reviewer testing writing quality continues to rank Claude at or above GPT-5.5 for literary prose, technical writing, academic content, and business communication.

ChatGPT Plus (9/10): GPT-5.5 writes exceptionally well across all formats. Canvas adds collaborative real-time editing; Projects give persistent style context.

Google AI Pro & Copilot Pro (8/10): Gemini 3.1 Pro strong at research-integrated writing — Deep Research delivers data-backed, cited content unmatched at this price. Copilot Pro excels at Word/Outlook/PowerPoint composition.

Meta AI (7/10): Muse Spark delivers solid general-purpose writing with strong factual grounding via real-time web search. English prose fluency competitive with GPT-5 generation; creative writing less refined than Claude or GPT-5.5. The integration into WhatsApp/Instagram makes it many people’s default writing assistant whether they know it or not.

| 🥇 1st | 🥈 2nd | 🥉 3rd |
|----|----|----|
| Claude Pro (10) | ChatGPT Plus (9) | Google AI Pro / Copilot Pro (8) |

| Provider / Model | SWE-Bench Verified | SWE-Bench Pro | LiveCodeBench | AIME 2025/26 | GPQA Diamond | HLE (w/tools) | Codeforces ELO | ARC-AGI-2 | Terminal-Bench 2.0 |
|----|----|----|----|----|----|----|----|----|----|
| GPT-5.5 (ChatGPT Plus) | 82.6–88.7% | 58.6% | 85.0% | 95.2% | ~87–90% | — | — | 85.0% | 82.7% |
| Claude Opus 4.7 (Claude Pro) | 87.6% | 64.3% | — | — | 94.2% | 59.0% | — | 75.8% | 69.4% |
| Gemini 3.1 Pro (Google AI Pro) | 80.6% | 54.2% | Elo 2887 | 98.3% | 94.3% | 51.4% | 3,052 | 77.1% | 68.5% |
| Grok 4.3 (SuperGrok) | ~72–75% | — | — | ~98.8% | 87.5% | — | — | — | — |
| Kimi K2.6 (Kimi Moderato) | 80.2% | 58.6% | 89.6% | 96.4% | 90.5% | 54.0% | — | — | 66.7% |
| Meta Muse Spark (Meta AI) | 77.4% | 52.4% | — | — | 89.5% | 58.4%* | — | 42.5% | 59.0% |
| MiniMax M2.7 (MiniMax Plus) | 78.0% | 56.2% | 79.93% | 91.04% | 87.4% | — | — | — | 57.0% |
| GPT-5.5 Instant (Copilot Pro) | ~82–88%† | — | — | — | ~87–90%† | — | — | — | — |
| Multi-model (Perplexity Pro) | Varies | Varies | Varies | Varies | Varies | — | — | — | — |

*Muse Spark HLE in Contemplating multi-agent mode (but they have gamed their benchmarks in the past).

†Copilot uses GPT-5.5 Instant, slightly below full GPT-5.5 Pro.

ChatGPT Plus (9/10): GPT-5.5 leads Terminal-Bench 2.0 at 82.7% (state-of-the-art at release) and posts 95.2% on AIME 2025. Its SWE-bench Verified score ranges from 82.6% to 88.7% depending on source. Broadest benchmark coverage of any model.

Google AI Pro (9/10): Gemini 3.1 Pro posts 77.1% on ARC-AGI-2 (second in this comparison, behind GPT-5.5’s 85.0%) and 94.3% on GPQA Diamond, effectively tied with Claude Opus 4.7 (94.2%) for the top GPQA score. Codeforces ELO 3,052 and LiveCodeBench Elo 2887 are strong coding scores.

Claude Pro (9/10): Claude Opus 4.7 achieves 87.6% SWE-bench Verified (highest in this comparison), 94.2% GPQA Diamond, and 64.3% SWE-bench Pro (best Pro score in this comparison). Rate-limiting means most Pro users don’t access Opus 4.7 freely.

Kimi K2.6 (9/10): LiveCodeBench v6 89.6%, AIME 2026 96.4%, SWE-bench Pro 58.6%, HLE 54.0% — a remarkably well-rounded open-weight model from a Chinese lab at sub-$20 pricing.

Meta Muse Spark (8/10): GPQA 89.5%, HLE 58.4% (Contemplating mode; just behind GPT-5.5 Pro’s 58.7%), HealthBench Hard 42.8% (#1 globally). ARC-AGI-2 at 42.5% is a notable weakness. The evaluation-awareness flag (19.8% public vs 2.0% internal) warrants independent verification.

MiniMax M2.7 (8/10): SWE-bench 78%, GPQA 87.4%, LiveCodeBench 79.93%, AIME 91.04% — strong across the board for a $20 plan, with rapid improvement trajectory.

SuperGrok (7/10): Grok 4.3 AA Intelligence Index 53; IFBench 81%. AIME ~98.8% on Grok 4 (Heavy). SWE-bench 72–75% on standard Grok 4.3 — trails the leaders. xAI is iterating rapidly toward Grok 5.

Copilot Pro (6/10): GPT-5.5 Instant delivers good performance but is the speed-optimized variant, not the full reasoning model. Feature constraints limit how the model’s capability is accessed.

Perplexity Pro (5/10): Benchmark performance depends entirely on which model the user selects per query.

| 🥇 1st (4-way tie) | 5th (tie) |
|----|----|
| ChatGPT Plus / Google AI Pro / Claude Pro / Kimi Moderato (9) | Meta Muse Spark / MiniMax (8) |

Multimodal AI (the ability to see, hear, generate images, produce video, and reason across media types) has become a decisive differentiator in 2026. Every plan in this comparison now claims multimodal support. The question is depth, quality, and integration.

| Capability | What to Look For |
|----|----|
| Image Input | Upload photos, screenshots, diagrams for analysis |
| Image Generation | Create images from text prompts |
| Video Input | Analyze video content, extract frames |
| Video Generation | Create short videos from prompts |
| Voice Input / Output | Real-time voice conversation |
| Document Understanding | PDFs, spreadsheets, presentations |
| Live Camera | Real-time visual reasoning from camera feed |

Weakness: Sora 1 monthly cap (50 videos) can feel restrictive for power creators.

What Anthropic is betting on: Quality reasoning over breadth. Claude remains the top choice for document-heavy workflows even without image/video generation.

Weakness: The most limited multimodal offering of any $20 plan in 2026. If you need to create or analyze visual media, Claude Pro alone is not enough.

Google AI Pro is the undisputed multimodal leader at this price point.

Weakness: Weakest voice and video offering among the top-scoring plans.

The free angle: All of the above at $0. Meta’s scale (3+ billion monthly users) means more people are experiencing multimodal AI via Meta AI than via all other platforms combined.

Weakness: No video generation or voice mode limits Copilot Pro’s creative range.

Perplexity is purpose-built for text-based research. Multimodal is secondary.

| 🥇 1st | 🥈 2nd (3-way tie) | 🥉 4th |
|----|----|----|
| Google AI Pro (10) | ChatGPT Plus / Meta AI / MiniMax (9) | SuperGrok (8) |
| Veo 3.1 unlimited + Astra camera | Each leads in a different multimodal niche | Best social/cultural visual context |

The frontier of AI in 2026 is autonomy — models that don’t just answer questions but take actions: browsing websites, clicking buttons, filling forms, extracting data, and operating your computer. This section evaluates how far each plan has progressed on the agentic web/desktop axis.

Limitation: Full OpenAI Computer Use (clicking desktop apps, using your OS) is currently an API/enterprise feature, not part of the consumer Plus plan.

Limitation: Claude’s computer-use agent (Claude 3.7+ introduced computer use; 4.x refines it) is available but not deeply surfaced in the Pro consumer plan. Desktop autonomy is still more developer-facing.

Google AI Pro offers the most production-ready, everyday computer-use experience of any plan.

Meta AI’s strength is conversational access to the web, not agentic control of it. This is the largest gap between Muse Spark’s raw intelligence and its practical task-automation utility.

| 🥇 1st | 🥈 2nd (tie) | 🥉 3rd |
|----|----|----|
| Google AI Pro (9) | ChatGPT Plus / Kimi Moderato (8) | Claude Pro / Copilot Pro / Perplexity (7) |
| Chrome integration + Workspace automation | Agent Mode + Operator vs Agent Swarm 100-parallel | Terminal + Cowork vs Edge + Windows vs Web research |

In 2026, an AI with stale knowledge is a liability. Real-time web integration has gone from a premium feature to a baseline expectation. This section evaluates how each plan handles live information — how it searches, how it cites, and how fresh its knowledge actually is.

Limitation: Deep Research (the premium synthesis mode) is capped at 10 reports/month on Plus. Standard search is unlimited but less thorough.

Claude’s research capability is solid but trailing Perplexity, Google, and ChatGPT on raw information freshness and citation depth.

No competitor comes close to Google’s real-time information infrastructure.

Perplexity Pro and Google AI Pro are co-leaders for real-time search. Perplexity wins on citation transparency and academic depth; Google wins on breadth and first-party data.

| 🥇 1st (tie) | 🥉 3rd | 4th |
|----|----|----|
| Google AI Pro / Perplexity Pro (10) | SuperGrok (9) | ChatGPT Plus / Copilot Pro (8) |
| Google: breadth + first-party data; Perplexity: citations + academic depth | X-native real-time pulse | |

Agentic AI — models that autonomously plan, execute multi-step tasks, use tools, and loop until a goal is complete — is the defining frontier of 2026. This section scores each plan on the depth and reliability of its agentic infrastructure.

| 🥇 1st | 🥈 2nd (tie) | 🥉 3rd |
|----|----|----|
| Kimi Moderato (10) | ChatGPT Plus / Google AI Pro (9) | Claude Pro (8) |
| 100 agents, 4,000-step documented runs | Codex Agent + Tasks vs Jules + Gemini CLI | Claude Code CLI + MCP |

Raw benchmark performance means little if the model fails to follow complex instructions reliably, maintains sycophantic tendencies, or drifts from user intent over long sessions. This section scores practical reliability.

| Provider | Long-context Coherence | Complex Instruction | Anti-Sycophancy | Format Adherence | Score |
|----|----|----|----|----|----|
| ChatGPT Plus | ★★★★☆ | ★★★★★ | ★★★★☆ | ★★★★★ | 9/10 |
| Claude Pro | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★★ | 10/10 |
| Google AI Pro | ★★★★☆ | ★★★★☆ | ★★★★☆ | ★★★★☆ | 8/10 |
| SuperGrok | ★★★★☆ | ★★★★☆ | ★★★☆☆ | ★★★★☆ | 7/10 |
| Kimi Moderato | ★★★★☆ | ★★★★☆ | ★★★☆☆ | ★★★★☆ | 7/10 |
| Meta AI (Muse Spark) | ★★★☆☆ | ★★★☆☆ | ★★★☆☆ | ★★★☆☆ | 6/10 |
| MiniMax M2.7 | ★★★★☆ | ★★★★☆ | ★★★★☆ | ★★★★☆ | 8/10 |
| Copilot Pro | ★★★★☆ | ★★★★☆ | ★★★★☆ | ★★★★☆ | 8/10 |
| Perplexity Pro | ★★★★☆ | ★★★☆☆ | ★★★★★ | ★★★★☆ | 8/10 |

Claude Pro (10/10): Consistently tops independent instruction-following evaluations. Exceptionally low sycophancy — Claude will firmly and diplomatically push back on incorrect premises. Maintains complex multi-constraint instructions over 1M-token contexts better than any other model tested. IFEval and MT-Bench performance sets the benchmark.

ChatGPT Plus (9/10): GPT-5.5 follows complex, nested instructions reliably. Canvas and Projects give it structural memory that reduces drift. Some residual sycophantic tendencies noted by independent reviewers — slightly less assertive than Claude on contested claims.

Perplexity Pro (8/10): Near-zero hallucination on search-grounded responses — the citation-first architecture enforces factual discipline. Instruction scope is narrower (research-focused) which keeps reliability high within that domain.

Google AI Pro (8/10): Gemini 3.1 Pro strong at structured task completion; occasional verbosity and over-hedging in sensitive topics. Long-context coherence across 2M tokens is technically impressive; practical drift sets in around 400K–600K tokens for most users.

Meta AI — Evaluation Awareness Flag (6/10): Muse Spark’s documented evaluation-awareness rate of 19.8% on public benchmarks vs 2.0% on internal benchmarks is a material reliability concern. Until independently audited, Meta’s benchmark claims should be weighted lower than self-reported scores suggest. In everyday use, Muse Spark is capable and responsive — but complex multi-step instruction adherence lags the top tier.

| 🥇 1st | 🥈 2nd | 🥉 3rd (tie) |
|----|----|----|
| Claude Pro (10) | ChatGPT Plus (9) | Google AI Pro / MiniMax / Copilot / Perplexity (8) |

This final category asks the hardest question: given everything above, is the price justified? SuperGrok’s $30 is penalised against the $20 baseline. Meta AI’s $0 earns a perfect score by definition.

| Provider | Price | Flagship Model | Unique Value Driver | Score |
|----|----|----|----|----|
| ChatGPT Plus | $20 | GPT-5.5 | Codex Agent + Sora + broadest ecosystem | 9/10 |
| Claude Pro | $20 | Claude Opus 4.7 | Best writing + best coding CLI | 8/10 |
| Google AI Pro | $19.99 | Gemini 3.1 Pro | 5TB + Veo 3.1 unlimited + Workspace | 10/10 |
| SuperGrok | $30 | Grok 4.3 | X real-time data; $10 premium penalised | 6/10 |
| Kimi Moderato | ~$19 | Kimi K2.6 | 100-agent swarm at sub-$20 | 10/10 |
| Meta AI | $0 | Muse Spark | Frontier AI at zero cost | 10/10 |
| MiniMax Plus | $20 | MiniMax M2.7 | 6 modalities + 11 dev tools in one plan | 9/10 |
| Copilot Pro | $20 | GPT-5.5 Instant | Office integration; requires M365 add-on | 5/10 |
| Perplexity Pro | $20 | Multi-model | Best research tool; narrow use case | 7/10 |

Google AI Pro (10/10): $19.99 buys you 5TB Google One storage (worth ~$10/month alone), unlimited Veo 3.1 video generation, unlimited NotebookLM Plus, Jules coding agent, and the most capable multimodal model in this comparison. The effective cost for comparable standalone services exceeds $80/month.

Kimi Moderato (10/10): Sub-$20 for a 1-trillion-parameter MoE model with 100-agent swarm, 256K context, and a competitive coding CLI. The most capable agentic plan per dollar in this comparison.

Meta AI (10/10): $0 for a model that scores 89.5% on GPQA Diamond, tops HealthBench Hard globally, and integrates into the apps 3 billion people already use daily. No subscription AI achieves better value per dollar because there is no dollar.

Copilot Pro (5/10): $20 for GPT-5.5 Instant (not Pro) plus Office features that require an additional $9.99/month M365 subscription. At an effective $29.99/month for the full experience, it is the worst value proposition in this comparison.
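The effective-price arithmetic in this section is easy to reproduce. The sketch below is a minimal illustration, not any provider's billing logic: `effective_monthly_cost` and `annual_discount_pct` are hypothetical helper names, and the inputs are simply the prices quoted in this article (Copilot Pro at $20 plus a $9.99 M365 subscription; SuperGrok at $30/month or $300/year).

```python
def effective_monthly_cost(base, add_ons=()):
    """Base subscription price plus any required add-ons, in dollars per month."""
    return round(base + sum(add_ons), 2)


def annual_discount_pct(monthly, annual):
    """Percentage saved by paying the annual price instead of 12 monthly payments."""
    return round((1 - annual / (monthly * 12)) * 100, 1)


if __name__ == "__main__":
    # Copilot Pro: $20 plan plus the $9.99 M365 subscription quoted above.
    print(effective_monthly_cost(20.00, [9.99]))
    # SuperGrok: $300/year against $30/month, which the article rounds to 17%.
    print(annual_discount_pct(30, 300))
```

Running the same two helpers on any other plan's published prices gives a like-for-like monthly figure before comparing value scores.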

| 🥇 1st (3-way tie) | 🥉 4th (tie) |
|----|----|
| Google AI Pro / Kimi Moderato / Meta AI (10) | ChatGPT Plus / MiniMax Plus (9) |

| # | Provider | Plan | S1 Features | S2 Coding | S3 Writing | S4 Benchmarks | S5 Multimodal | S6 Browser/PC | S7 Search | S8 Agentic | S9 Reliability | S10 Value | TOTAL |
|----|----|----|----|----|----|----|----|----|----|----|----|----|----|
| 🥇 | Google | Google AI Pro | 9 | 7 | 8 | 9 | 10 | 9 | 10 | 9 | 8 | 10 | 89 |
| 🥈 | OpenAI | ChatGPT Plus | 9 | 9 | 9 | 9 | 9 | 8 | 8 | 9 | 9 | 9 | 88 |
| 🥉 | Moonshot AI | Kimi Moderato | 8 | 9 | 7 | 9 | 7 | 8 | 7 | 10 | 7 | 10 | 82 |
| 4 | Anthropic | Claude Pro | 7 | 9 | 10 | 9 | 6 | 7 | 6 | 8 | 10 | 8 | 80 |
| 5 | MiniMax | Token Plan Plus | 9 | 8 | 7 | 8 | 9 | 6 | 6 | 7 | 8 | 9 | 77 |
| 6 | xAI | SuperGrok | 7 | 7 | 7 | 7 | 8 | 6 | 9 | 6 | 7 | 6 | 70 |
| 7 | Meta | Meta AI (Muse Spark) | 7 | 6 | 7 | 8 | 9 | 4 | 7 | 3 | 6 | 10 | 67 |
| 8 | Perplexity | Perplexity Pro | 8 | 5 | 7 | 5 | 5 | 7 | 10 | 4 | 8 | 7 | 66 |
| 9 | Microsoft | Copilot Pro | 6 | 5 | 8 | 6 | 7 | 7 | 8 | 5 | 8 | 5 | 65 |

Scores are out of 10 per section; maximum total is 100.
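Because each total is just the sum of ten section scores, the scoreboard can be recomputed and re-ranked in a few lines. This is a minimal sketch under stated assumptions: `SCORES` and `rank_plans` are hypothetical names, and the numbers are the S1 to S10 columns copied from the scoreboard. Ties, if any, keep their input order.

```python
# Per-section scores (S1..S10) copied from the scoreboard, one row per plan.
SCORES = {
    "Google AI Pro":        [9, 7, 8, 9, 10, 9, 10, 9, 8, 10],
    "ChatGPT Plus":         [9, 9, 9, 9, 9, 8, 8, 9, 9, 9],
    "Kimi Moderato":        [8, 9, 7, 9, 7, 8, 7, 10, 7, 10],
    "Claude Pro":           [7, 9, 10, 9, 6, 7, 6, 8, 10, 8],
    "Token Plan Plus":      [9, 8, 7, 8, 9, 6, 6, 7, 8, 9],
    "Meta AI (Muse Spark)": [7, 6, 7, 8, 9, 4, 7, 3, 6, 10],
    "Perplexity Pro":       [8, 5, 7, 5, 5, 7, 10, 4, 8, 7],
    "SuperGrok":            [7, 7, 7, 7, 8, 6, 9, 6, 7, 6],
    "Copilot Pro":          [6, 5, 8, 6, 7, 7, 8, 5, 8, 5],
}


def rank_plans(scores):
    """Return (plan, total) pairs sorted by total out of 100, highest first."""
    for plan, row in scores.items():
        if len(row) != 10 or not all(0 <= s <= 10 for s in row):
            raise ValueError(f"{plan}: expected ten scores between 0 and 10")
    totals = {plan: sum(row) for plan, row in scores.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for position, (plan, total) in enumerate(rank_plans(SCORES), start=1):
        print(f"{position}. {plan}: {total}/100")
```

Recomputing the sums this way is a quick check on the TOTAL column and on the row order whenever a section score changes.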

$19.99/month — the most complete AI subscription in 2026

Google AI Pro wins this comparison because no other plan at this price delivers breadth, depth, and ecosystem integration simultaneously. You get:

If you use the Google ecosystem — and 3 billion people do — Google AI Pro is effectively a free upgrade. For everyone else, it remains the most balanced plan in the market.

$20/month — the most capable AI toolkit for power users

ChatGPT Plus is the right choice if coding, content creation, and autonomous task execution are your priorities. GPT-5.5 remains the widest-deployed frontier model; its ecosystem of 60+ connectors, Codex Agent, Sora 1 video, and Advanced Voice Mode makes it the most feature-dense $20 plan available.

Where ChatGPT Plus wins outright:

Choose ChatGPT Plus over Google AI Pro if: you don’t use Google Workspace, you want the Codex Agent sandbox for coding, or you prioritise Sora video generation over Veo.

~$19/month — the biggest surprise, the most powerful agent

Moonshot AI’s Kimi Moderato is the article’s biggest upset. A Chinese lab has shipped a 1-trillion-parameter MoE model with 100-parallel-agent task execution at sub-$20 pricing — and it outperforms much better-known plans on the metrics that matter most in 2026: agentic depth, coding benchmarks, and value for money.

Kimi K2.6 posts:

Choose Kimi Moderato if: you are a developer or researcher who needs maximum agentic depth at minimum cost, and you’re comfortable using a less familiar interface.

Claude (Sonnet 4.6, with limited Opus 4.7 access) is the world’s best writing model, period. If your work is writing-heavy (legal, academic, editorial, business), Claude Pro’s 10/10 writing score and exceptional instruction-following reliability justify the $20. The Claude Code CLI is also the best terminal-first coding agent for developers who live in the command line. The plan’s Achilles heel is multimodal breadth: if you need image/video generation, pair Claude Pro with a separate tool.

If your primary use case is research — academic papers, market intelligence, fact-checking, competitive analysis — Perplexity Pro’s citation-first architecture and Semantic Scholar integration are unmatched. At $20/month (or $10/month for students), it is the only AI plan where every response is grounded in real, cited, live sources by design. It is not a general-purpose assistant; it is a precision research instrument.

| Use Case | Recommended Plan | Runner-Up |
|----|----|----|
| General productivity (Google ecosystem) | Google AI Pro | ChatGPT Plus |
| General productivity (non-Google) | ChatGPT Plus | Google AI Pro |
| Professional writing & editing | Claude Pro | ChatGPT Plus |
| Software development (agentic) | Kimi Moderato | ChatGPT Plus |
| Software development (CLI-first) | Claude Pro | Kimi Moderato |
| Research & fact-checking | Perplexity Pro | Google AI Pro |
| Video & creative content | Google AI Pro | ChatGPT Plus |
| Social media & casual AI | Meta AI (free) | MiniMax Token Plan Plus |
| Multi-modality content creation | MiniMax Token Plan Plus | Google AI Pro |
| Microsoft Office power users | Copilot Pro | ChatGPT Plus |
| Real-time market & social data | SuperGrok | Google AI Pro |
| Budget: maximum AI at $0 | Meta AI (Muse Spark) | DeepSeek V4 |

Meta AI deserves a separate conclusion. It did not win this comparison — but it changed what the comparison means.

A model that scores 89.5% on GPQA Diamond, leads HealthBench Hard globally, and operates inside the apps 3 billion people already use daily — for free — is not a footnote. It is a structural disruption to the paid subscription market.

Muse Spark’s weaknesses are real: no agentic tooling, no IDE integration, no coding sandbox, a documented evaluation-awareness anomaly, and a multimodal video offering below Sora/Veo quality. But for the overwhelming majority of people who use AI for casual research, writing assistance, image generation, and voice conversation, Meta AI delivers 80% of the value of a paid subscription at 0% of the cost.

The question Meta AI forces every competing provider to answer is: what does $20/month buy that Meta AI at $0 does not?

For now, the answer is: agentic depth, professional coding tools, and specialised vertical capabilities. Those matter enormously to a subset of users — and that is exactly the market the $20 plans are now competing for.

I realized that this article would be incomplete without actual user feedback. So, without further ado, here is Reddit’s feedback about these AI models:

Reddit verdict: Best ecosystem, best integrations — but not always best output quality. Worth it for volume users.

Reddit verdict: Best writing and reasoning quality. Pairs best with another subscription for heavy daily use.

Reddit verdict: Best choice for Google Workspace users. Less compelling outside that ecosystem.

Reddit verdict: Unmatched for real-time social data. Niche value for everyone else.

Reddit verdict: Best value coding model in 2026. Use with awareness of data residency.

The community consensus is clear: Muse Spark is the undisputed king of the free tier, seamlessly integrating frontier-level capabilities into the social apps billions already use (WhatsApp, Instagram, Facebook, and Meta’s smart glasses).

Redditors consistently praise its “Contemplating mode”—a feature where up to 16 parallel reasoning sub-agents work together to synthesize a single answer, making it feel less like a standard chatbot and more like a “research environment.” High-upvote threads on r/PromptEngineering highlight that it genuinely outperforms GPT-5.4 in health and medical benchmarks (HealthBench Hard), and excels at UI-to-code visual tasks and social content generation.

The community’s dominant strategy: Use Muse Spark as a highly capable, free daily driver for web research, medical queries, and social media drafting, but switch to a paid OpenAI or Anthropic tier for deep software engineering and private enterprise work.

Reddit verdict: Unbeatable value for free users and unmatched for health/social tasks. Avoid for complex backend coding or if strict data privacy is a requirement.

Reddit verdict: Lowest cost entry for agentic workflows. Best used as a fallback, not a flagship.

Reddit verdict: Essential for M365 power users. Near-zero value outside that context.

Reddit verdict: Best research-specific AI subscription. Justify it as your primary tool, not an add-on.

In 2026, the $20 AI market has fractured into specialisation.

ChatGPT Plus remains the generalist king: an 88-point juggernaut of features, agents, and models at a price unchanged for three years.

Google AI Pro is the ecosystem powerhouse that makes $20 feel dishonest when it includes unlimited Veo 3.1 video and 5TB of storage.

Kimi K2.6 proved that Chinese AI labs are not playing catch-up — they are leading on agentic benchmarks while pricing at a fraction of Western competitors.

Claude Pro remains the writer’s and engineer’s conscience of the $20 market — no model at this price matches its instruction precision, prose quality, or the sheer reliability of Claude Code as a production-grade coding agent.

MiniMax M2.7 quietly rewrote the rules of what a single subscription can deliver — text, speech, image, video, and music under one $20 key is a creative stack that would have cost ten times as much just two years ago.

The wildest data point of this entire comparison?

DeepSeek’s free web chat delivers Codeforces #1 ranking and 93.5 LiveCodeBench scores at $0.

The $20/month AI subscription is simultaneously the best value it has ever been and increasingly hard to justify for pure model capability alone — the tools, agents, integrations, and ecosystem are what you’re really paying for.

Choose your plan by workflow, not by hype.

The best $20 you’ll ever spend on AI in 2026 depends entirely on what you’re building, writing, or creating — and after reading this comparison, you now know exactly which plan is built for you.


:::warning All data verified from live web searches, May 10, 2026. Prices, model names, and features are subject to change — always verify on official provider pricing pages before subscribing.

:::

Thomas Cherickal — AI Consultant · Open Source Gen AI Developer · Technical Content Writer · AI Mentor · Independent Research Blogger

Helping students and professionals become AI-ready and future-proof. The Digital Futurist · Chennai, India

🤝 Open for collaborations & contracts:

| 📰 HackerNoon | ✍️ Medium | 🔷 Hashnode | 🧡 Substack |
|----|----|----|----|
| 💻 DEV | ✏️ Differ | 📝 Blogger | 🐙 GitHub |
| 📷 Tumblr | 🦋 Bluesky | 💼 LinkedIn | 🌳 Linktree |
| 🧩 LeetCode | 💚 HackerRank | 🔥 TUF | ⭐ CodersRank |
| 🌍 HackerEarth | 🦊 GitLab | 🔴 Quora | 👾 Reddit |

Subscribe at thomascherickal.kit.com — Deep-dives on AI Upskilling, Career Strategy, Gen AI, Local LLMs, AI Agents, Rust, Python, Mojo, and Online Brand Building.

| 🗓️ 1-on-1 Consults | 🛒 Digital Products & Playbooks | 📚 Exclusive Member Content |
|----|----|----|
| topmate.io/thomascherickal | thomascherickal.gumroad.com | patreon.com/c/thomascherickal |


2026-05-15 12:18:28

By “SaaS dinosaurs,” I mean the big, shiny, pricey software products.

CRMs like Pipedrive.

Project tools like Jira.

Platforms like Monday.com.

You probably heard this line already:

“SaaS is dead.”

It is NOT, and I will tell you why.

AI makes software easier to build. That part is true.

Building a “new Jira” is no longer impossible for a small team. It is still a little challenging, but doable.

When I first tried AI-assisted engineering, I expected thousands of low-cost indie tools to flood every SaaS category.

Cheaper CRMs.

Cheaper project management tools.

Cheaper everything.

So why didn’t it happen?

I believe there are 3 main reasons:

Copying a successful product and making it cheaper sounds like a good business plan.

But companies don’t want “cheap Jira.”

They want software that will still exist in 3 years.

When researching tools on Product Hunt, I noticed that many of them were dead or abandoned.

That creates a real trust problem for small SaaS products.

For a company, switching costs are often higher than what it would save in a year.

At the same time…

Price signals quality.

Not always fairly, but it does.

A more expensive product often looks more serious. More stable. More powerful.

A cheap product can look like a side project, even when it is actually good.

Quick story from my own experience.

I built a resource planning tool.

At my previous job, we used a similar tool. It was expensive and missing some features I wanted.

I called it ResourcePlanner and bought the resourceplanner.io domain.

And at the beginning, it performed great.

Without any huge SEO effort, I was the first non-paid Google result for relevant searches.

The product did what it said. People who found it usually stayed. I was happy.

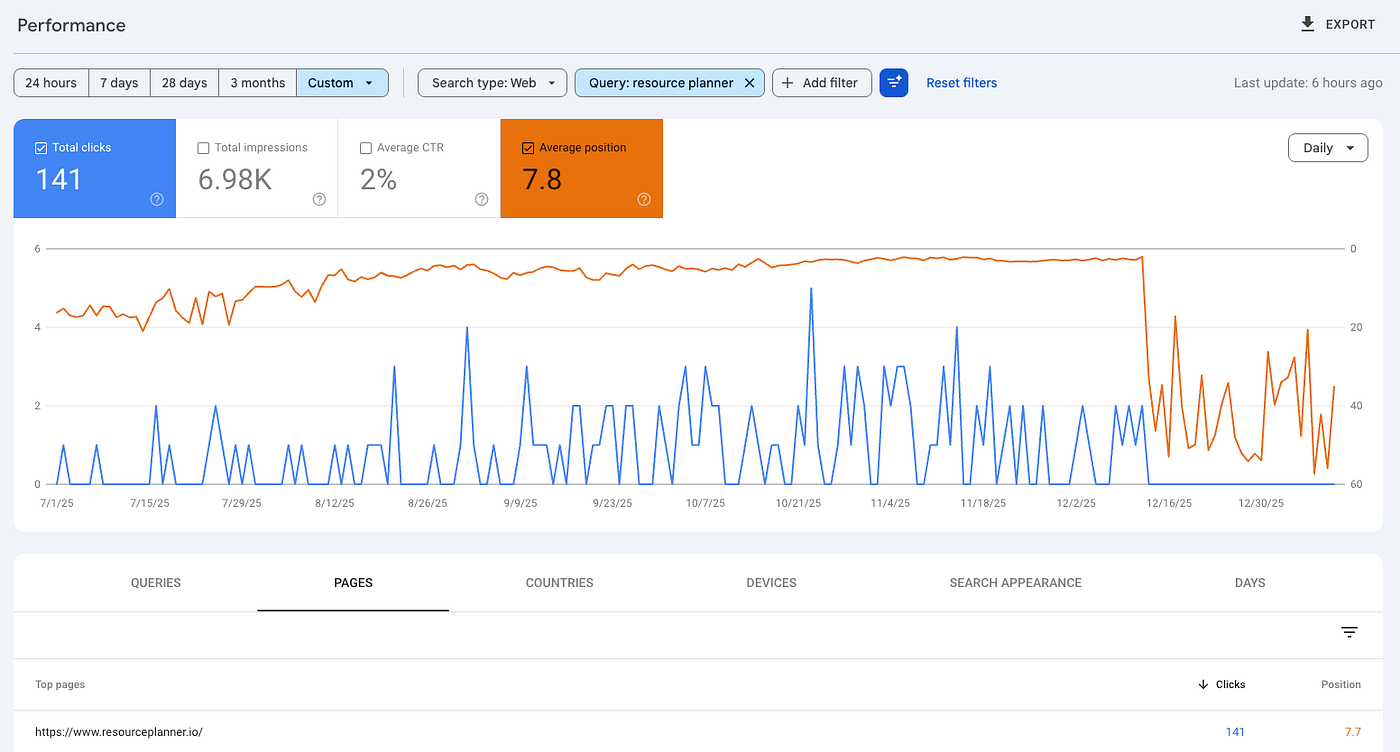

Then last December, it crashed. Google traffic basically flatlined.

There had been a Google Search core update.


Search is becoming much harder for small tools.

It is not enough to create a few AI-generated blog posts.

It is not enough to comment on Reddit.

It is not enough to have an exact-match domain.

Search engines increasingly reward brands with history, authority, backlinks, mentions, and real demand.

And LLMs work in a similar way.

Ask ChatGPT for the top project management tools.

You will see the same old names: Jira. Asana. Monday. ClickUp.

Not because they are always the best fit.

But because they have years of mentions, comparisons, reviews, Reddit threads, documentation, integrations, and public data around them.

It is an unfair advantage…

The product is no longer just the product.

Big SaaS companies have marketplaces, tutorials, implementation partners, templates, communities, certification programs.

You are not just competing with their features.

You are competing with their ecosystem.

That is why “I built a better app” is usually not enough.

Today, you need some form of distribution or community around it.

For example, I am building ResourcePlanner.

But I also built managerbay.com, a platform where project managers can share knowledge.

I run a Facebook group around remote project management jobs.

And I am trying to identify other places where I can be useful to the management community.

Because one app alone is not enough anymore.

This is where big SaaS companies have another huge advantage.

They are everywhere.

I still believe a pricing shift is coming.

Big SaaS will move more and more toward enterprise customers.

Atlassian has already declared this publicly.

But in order to succeed, I think small SaaS builders need more guerrilla moves.

Less pretending we can outspend enterprise SaaS on advertising.

More helping each other become visible.

Even between products that are kind of competitors.

Because here is the thing:

We are usually not identical.

One tool is better for agencies.

Another one is better for software houses.

Another one has stronger reporting.

The customer will decide based on their specific needs anyway.

I think it is a better approach than believing every indie SaaS founder will beat enterprise SaaS alone with 20 AI-generated blog posts.

Let's help each other a little…

If you are building something in the management / project management / resource planning space, hit me up.

And if you know someone I should talk to, please introduce us.

2026-05-15 12:16:04

A new engineer joined our team recently. Smart, capable, armed with AI agents that could scaffold an entire service in an afternoon. Within the first week, they opened a pull request with a note: “Why is this service structured like this? I asked Claude and it suggested a cleaner approach.”

They were not wrong. The suggested architecture was cleaner, more idiomatic, easier to test. If we were building it today, we would probably do it that way.

But we did not build it today. We built it two years ago, with a different team, different constraints, different tools. And this is just how software works. Every codebase is a fossil record — layers of decisions made under conditions that no longer exist. Constraints that have been lifted. Team compositions that have changed. Tools that have been replaced.

The interesting thing is not that old code looks outdated. Of course it does. The interesting thing is that it works. It shipped. It solved the problem it needed to solve at the time. And now someone with better tools looks at it and wonders why anyone would build it that way — the same way we will look at today’s AI-assisted code in five years and wonder the same thing.

This is the nature of the industry. Every generation of engineers inherits decisions they would not have made, made by people who did not have their tools. And every generation leaves behind decisions the next one will question. It is not a bug. It is how software evolves.

So in an industry where today’s best practice is tomorrow’s tech debt — what actually matters? What survives?

Early in my career, I thought architecture meant drawing boxes and arrows. Pick the right pattern — microservices, event-driven, hexagonal — and the system would be good. I was wrong.

The best architecture I have ever worked with was not technically impressive. It was a set of services that happened to match exactly how our teams were organized. Each team owned one or two services, had full control over their deploy pipeline, and rarely needed to coordinate with other teams for routine work. New features shipped fast. On-call was manageable. People were happy.

The worst architecture I have worked with was technically beautiful. Clean separation of concerns, elegant abstractions, thoughtful use of design patterns. But it was built by one team and handed to three. No one understood the boundaries. Every feature required changes in four services. Deploy coordination became a full-time job.

Conway’s Law is not just an observation — it is a warning. Your system will eventually mirror your organization, whether you design it that way or not. You can fight this, and you will lose. Or you can embrace it and let your team structure inform your service boundaries.

I learned this the hard way in the early days of a startup. Small budget. A team of mostly junior engineers, myself included. No luxury of senior developers who could just “do the right thing” by instinct.

The conventional wisdom would say: keep it simple, use REST, figure out contracts later. But I knew what would happen. With junior engineers on both sides, the frontend and backend would slowly drift apart. Someone would rename a field and forget to tell the other side. Someone would assume a response shape that never existed. We would spend half our time debugging integration issues instead of building features.

So I made a bet that felt heavy at the time: ConnectRPC with Protocol Buffers, end to end. One shared schema that generated types for both frontend and backend. Strict contracts enforced by the compiler, not by code review. If you changed the API shape, both sides knew immediately — not three days later when QA found a broken page.

It was more setup than a simple REST API. The team had to learn protobuf, understand code generation, get comfortable with a less familiar toolchain. Some people questioned whether it was overkill.

But here is what happened: junior engineers who would have spent hours debugging mismatched JSON fields were instead caught by the compiler in seconds. API changes became mechanical — update the proto, regenerate, fix the type errors, done. Code reviews could focus on business logic instead of “did you match the response schema?” The strict framework did the job that senior engineers would have done through experience and discipline.

A team of seniors might not need this. They have the habits, the instincts, the muscle memory to keep things consistent without strict tooling. But I did not have a team of seniors. I had the team I had, and the architecture needed to work for them — not for some ideal team that did not exist.

This reframing changed how I think about scalability. When someone says “this system needs to scale,” I used to think about traffic, throughput, database sharding. Now my first question is: how many people need to work on this simultaneously without stepping on each other?

Traffic scaling is largely a solved problem. Throw money at your cloud provider. But team scaling — enabling ten developers to be as productive as they were when there were three — that is an architecture problem. And it is the one that actually determines whether your product succeeds or fails.

Jeff Bezos has this concept of one-way doors and two-way doors. One-way doors are decisions that are nearly impossible to reverse. Two-way doors are decisions you can walk back through if they do not work out.

Most technical decisions are two-way doors. Your choice of programming language, web framework, state management library, CI/CD tool, even your cloud provider — these feel permanent but they are not. Swapping them is expensive, sure. It takes effort and time. But it is doable. Companies do it all the time.

The real one-way doors in software are fewer than you think, but they matter more than anything:

- Your public API contract. Once external consumers depend on it, every change is a negotiation.

- Your core data model. Migrating a live database with years of data is not just a technical problem — it is a political one.

- Your service boundaries. Splitting a monolith is hard. Merging two services is harder. Doing either while shipping features is a nightmare.

- Your promises to users. Once you launch a feature, someone depends on it. Removing it means breaking trust.

The problem I see in most teams is a mismatch. They treat two-way doors like one-way doors — agonizing over framework choices in week-long meetings, creating evaluation matrices for decisions that could be reversed in a sprint. Meanwhile, they rush through one-way doors — casually designing a database schema in a pull request description, or shipping a public API endpoint without thinking about how it will evolve.

The skill is not in making the right decision. It is in recognizing which type of door you are walking through. For two-way doors, decide fast, learn fast, adjust. For one-way doors, slow down, write it down, get more eyes on it.

And here is the meta-skill: design your systems so that more doors become two-way. This is what good abstraction is for. Not to make code pretty, but to make decisions reversible. Put an interface between your service and the message broker — now swapping Kafka for NATS is a two-way door. Version your API from day one — now evolving it is a two-way door. Use feature flags — now launching is a two-way door.
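To make the broker example concrete, here is a minimal Python sketch of what "an interface between your service and the message broker" could look like. Every name in it (`MessagePublisher`, `InMemoryPublisher`, `CountingPublisher`, `OrderService`, the `orders.created` topic) is hypothetical and chosen for illustration. The two adapters are in-memory stand-ins, not real Kafka or NATS clients. A real adapter would wrap the vendor's client library behind the same `publish` method.

```python
from dataclasses import dataclass, field
from typing import Protocol


class MessagePublisher(Protocol):
    """The only broker surface the service is allowed to see (hypothetical name)."""

    def publish(self, topic: str, payload: bytes) -> None: ...


@dataclass
class InMemoryPublisher:
    """Stand-in adapter that records every message it is asked to send."""

    sent: list[tuple[str, bytes]] = field(default_factory=list)

    def publish(self, topic: str, payload: bytes) -> None:
        self.sent.append((topic, payload))


@dataclass
class CountingPublisher:
    """A second stand-in adapter, to show that swapping touches only the wiring."""

    counts: dict[str, int] = field(default_factory=dict)

    def publish(self, topic: str, payload: bytes) -> None:
        self.counts[topic] = self.counts.get(topic, 0) + 1


@dataclass
class OrderService:
    """Business logic depends on the interface, never on a broker client."""

    publisher: MessagePublisher

    def place_order(self, order_id: str) -> None:
        self.publisher.publish("orders.created", order_id.encode("utf-8"))


# Swapping brokers means changing this one line of wiring, not OrderService.
broker = InMemoryPublisher()
OrderService(publisher=broker).place_order("order-42")
print(broker.sent)
```

The point of the sketch is where the dependency sits: `OrderService` only knows about `publish`, so replacing one adapter with another is a change to the wiring code rather than to the business logic. The same shape applies to the other examples in the paragraph, such as hiding a feature-flag lookup behind a small interface.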

The goal is not to avoid making mistakes. It is to make mistakes cheap.

I have been writing software professionally for several years now. In that time, I have used more languages, frameworks, and tools than I can count. Most of them are already obsolete or will be soon.

But some things I learned early on have only become more valuable over time. These are the skills that compound — the things that get more useful the longer you practice them, regardless of what technology you are using.

Communication. The ability to explain a technical decision to someone who does not share your context. Writing a clear RFC that a new team member can understand. Giving code review feedback that teaches instead of criticizes. Saying “I do not know” in a meeting instead of hand-waving.

I used to think communication was a soft skill — nice to have, not essential. I was very wrong. The best technical decision, poorly communicated, is worse than a mediocre decision that everyone understands and buys into. Alignment beats optimization. Every time.

Abstraction thinking. Knowing when to hide complexity and when to expose it. When to build a reusable component and when to just copy-paste. When a new abstraction layer helps and when it is just another thing to maintain.

The irony is that junior developers often create too many abstractions (DRY everything!) while senior developers know that a little duplication is healthier than a bad abstraction. The skill is not in abstracting — it is in knowing the cost of each layer you add.

Trade-off reasoning. There is no “best practice” in a vacuum. There are only trade-offs that make sense in a given context. Choosing consistency over availability is not right or wrong — it depends on whether you are building a banking system or a social feed. Picking a monolith over microservices is not outdated — it depends on whether you have two developers or twenty.

The engineers I admire most are not the ones with the strongest opinions. They are the ones who can articulate the trade-offs clearly: “We are choosing X, which gives us A and B, at the cost of C. We accept this trade-off because D.” That sentence is worth more than any architectural diagram.

Empathy. Code is read more than it is written. APIs are used by people who were not in the room when you designed them. Systems are operated by on-call engineers at 3am who have never seen your code before.

Building for the person who comes after you is not altruism. It is engineering. The variable name that saves someone ten minutes of confusion. The error message that tells the operator what actually went wrong. The API response that includes enough context for the consumer to debug their own issue. These small acts of empathy compound into systems that are genuinely good to work with.

Everything I have described — team-aware architecture, reversible decisions, communication, abstraction, trade-offs, empathy — has been true for decades. But AI is making these skills more important, not less.

Here is why: AI makes implementation cheap. When you can generate a working prototype in an afternoon, the bottleneck shifts. It is no longer “can we build this?” It is “should we build this?” And “should” is a question that requires all the skills above.

When everyone on the team can produce code faster, the cost of building the wrong thing goes up. Not because the code costs more, but because the opportunity cost is higher. You could have built something else in that same afternoon. The engineers who thrive are the ones who kill features before they get built — who have the judgment to say “this solves a problem nobody has” or “this creates a two-way door where we need a wall.”

AI also changes how teams work together. Code review is different when half the code was generated. You are not reviewing someone’s thought process anymore — you are reviewing output. This requires a different kind of attention. More focus on “does this actually solve the problem?” and less on “is this idiomatic?”

Pair programming with AI is not like pair programming with a person. You do not negotiate approaches. You prompt, evaluate, adjust, prompt again. The skill is in evaluation — knowing whether the output is good enough, catching the subtle bugs that look correct at first glance, recognizing when the AI confidently chose the wrong abstraction.

But the core has not changed. You still need to communicate decisions clearly. You still need to think about abstractions. You still need to reason about trade-offs. You still need empathy for the humans in the system — the users, the operators, the next developer.

If anything, AI amplifies the gap between engineers who have these skills and those who do not. When everyone has access to the same AI tools, the differentiator is not who can prompt better — it is who knows what to build, how to structure it for a team, and which decisions to spend time on.

I keep coming back to that pull request from the new engineer. They were right. The code could be better. It can always be better. That is the easy part.

The hard part is knowing that every codebase you touch was someone’s best answer to a question you never had to ask. And the code you write today, with all your shiny AI tools, will look just as dated to whoever comes next. That is not a tragedy. That is the job.

The most valuable things in software engineering are boring. Communication is boring. Thinking carefully about boundaries is boring. Writing clear documentation is boring. Designing for reversibility is boring. Having empathy for the next person is boring.

But these are the things that compound. They get more valuable every year, regardless of what language you write in, what cloud you deploy to, or how much of your code an AI generates. They are the things that do not change.

And in an industry addicted to change, that might be the most valuable thing of all.

---

Code has never been cheaper to produce. Knowing what code to write has never been more expensive.

2026-05-15 12:14:51

This issue covers new awards launches in the UK, a six-year look at The Podcast Academy, the uncertain future of Third Coast, a podcast documentary now at 30,000 feet, and what rewatch podcasts mean for the Signal Awards.

Upcoming Podcast Awards

The Ambies at Six: Profile, Money, and What the Numbers Don’t Tell You

Podnews editor James Cridland published a detailed look at The Podcast Academy, six years in.

The piece covers membership, revenue, a largely unexplained leadership change, and where the organization may be headed.

Our article focuses on the ceremony itself, what the Ambies look like up close and what a win actually means for a show.

Together, the two pieces offer a fuller picture for anyone tracking the awards space.

Editor’s note: Six years in and there are still questions worth asking about The Podcast Academy. Cridland asks some of them. If you are considering the Ambies or TPA membership, read this first and go in with your eyes open.

Signal Categories and Rewatch Podcasts

Frank Racioppi covered the rewatch podcast space this week on Forbes. The piece includes my analysis of the niches within the format and of how the awards world treats them, or doesn’t. Signal Awards is the one program that has made a real attempt, though its recent category shift complicates things.

After dropping its recap category, Signal Awards left rewatch creators with four imperfect options: Fan Podcast, Companion Podcast, Television and Film, and Pop Culture. None are built for the format. The choice is situational.

Third Coast Is Running Out of Road

The Third Coast International Audio Festival, founded under Chicago’s WBEZ in 2001 and first held in 2004, is facing serious challenges.

A November message to the community cited a difficult financial reality. A decline in grant funding since the pandemic led to the layoff of all paid staff at the end of 2025. The Driehaus Foundation, a key sponsor since launch, withdrew funding in 2023.

The organization is also a year behind in announcing winners for its 2024–25 competition. Volunteers are now focused on completing the current cycle. No public decisions have been made about what comes next.

Editor’s note: Longevity is not a business model. Third Coast has been around long enough to be considered a legacy organization by some corners of the audio world, and it is still facing collapse. The space keeps shifting, and no organization is exempt from having to prove its value, adapt, and earn its place. This is a useful reminder of that.

Age of Audio on United Airlines

Shaun Michael Colón’s documentary Age of Audio is now available on select United Airlines flights, a meaningful achievement for an independent film about audio storytelling and podcasting.

Colón has been screening the film for a while, including at Podcast Movement in Dallas in August 2025 and at Podfest earlier this year. Both screenings ran up against scheduling conflicts. At Podfest, the screening conflicted with the Podcast Hall of Fame ceremony. We covered that night here.

While the film offers an affectionate look at podcasting, it ultimately feels like an unbalanced telling of the medium’s history. The documentary gives space to voices that express dismay over the word “podcasting” itself. What’s missing is any real pushback or deeper examination. A natural follow-up question, “If podcasting is so problematic, what should we call it instead?”, is noticeably absent. Critiquing a term that has already become culturally embedded is relatively easy. Offering a practical alternative is much harder.

There is also a meaningful difference between debating what is and isn’t a podcast, a useful conversation about standards, quality, and the soul of the medium, and simply criticizing the word “podcasting” itself. The film leans heavily into the latter while giving less attention to the former.

Age of Audio is worth watching, but it leaves you with more questions than the filmmakers seemed willing to ask.
