2026-05-14 14:34:19

Recently, I’ve been following the progress of domestic GPU scheduling and Kubernetes AI resource models. With the release of HAMi v2.9, I want to share several observations on how the AI Infra control plane is evolving.

When DeepSeek R1 was released in early 2025, most people focused on the fact that it trained a model competitive with OpenAI o1 for just $5.6 million. What struck me, however, was that as inference costs plummeted, GPU utilization issues would quickly come to the forefront.

As models became more useful and inference demand exploded, “one GPU per model” rapidly became a luxury. Meanwhile, the NVIDIA H200 export saga accelerated the adoption of domestic compute. First, a sales ban; then, at the end of 2025, a 25% tariff under Trump; and by January 2026, Chinese customs had cleared zero units. Policy now mandates that over 40% of data center chips must be domestically produced by 2026.

The reality is harsh: not only are GPUs scarce, but you must also learn to use NVIDIA, Ascend, Cambricon, Hygon, and other very different platforms simultaneously.

That’s why I believe HAMi v2.9 is more significant than it appears on the surface.

Kubernetes has always managed GPUs in a rather crude way:

    resources:
      limits:
        nvidia.com/gpu: 1

This was sufficient in 2019, when the main question was simply whether a Pod needed a GPU or not.

But that’s no longer enough. An inference service might only need 4GB of VRAM, multiple small models can share a single card, training jobs care about GPU topology and interconnect bandwidth, and multi-tenancy requires fault domain isolation. Treating GPUs as integer resources is like using an abacus for statistical analysis—not impossible, but the mental model is out of sync with reality.
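To make the mismatch concrete, here is a minimal sketch that compares whole-card allocation with VRAM-based packing. The 80 GB card size, the workload list, and the first-fit-decreasing heuristic are all illustrative assumptions of mine, not something Kubernetes or HAMi prescribes.

```python
from typing import List


def gpus_needed_whole_card(requests_gb: List[int]) -> int:
    """Integer model: every workload gets one full card regardless of size."""
    return len(requests_gb)


def gpus_needed_shared(requests_gb: List[int], card_gb: int) -> int:
    """First-fit-decreasing packing of VRAM requests onto cards."""
    free: List[int] = []  # remaining VRAM on each card already in use
    for need in sorted(requests_gb, reverse=True):
        if need > card_gb:
            raise ValueError(f"request of {need} GB exceeds card size {card_gb} GB")
        for i, remaining in enumerate(free):
            if remaining >= need:
                free[i] -= need
                break
        else:
            # No existing card has room, so open a new one.
            free.append(card_gb - need)
    return len(free)


# Hypothetical fleet: six 4 GB inference services, two 24 GB models, one 60 GB job.
requests = [4, 4, 4, 4, 4, 4, 24, 24, 60]
whole = gpus_needed_whole_card(requests)
shared = gpus_needed_shared(requests, card_gb=80)
print(f"whole-card: {whole} GPUs, shared: {shared} GPUs")
```

The packing heuristic is deliberately naive; the point is only that the integer model cannot express the difference between a 4 GB service and a 60 GB job.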

The most notable feature in HAMi v2.9 is the HAMi-core mode for the Ascend 910C. Previously, sharing Ascend cards relied on SR-IOV hardware virtualization, which was coarse-grained and inflexible. HAMi-core takes a different approach: it uses LD_PRELOAD to intercept ACL calls in user space, enabling memory isolation at the MB level and compute throttling by percentage.

In short: it’s managed by software, not hardware slicing.
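As a rough mental model of what "managed by software" means, the sketch below shows a per-container quota check of the kind an interception layer could apply to allocation calls, plus a duty-cycle calculation for percentage-based compute throttling. This is a toy under my own assumptions: the class and function names are hypothetical, and HAMi-core's actual mechanism may differ.

```python
class DeviceQuota:
    """Toy model of a per-container device memory quota enforced in user space."""

    def __init__(self, limit_mb: int) -> None:
        self.limit_mb = limit_mb
        self.used_mb = 0

    def malloc(self, size_mb: int) -> bool:
        """Stand-in for an intercepted allocation call: refuse to exceed the quota."""
        if size_mb <= 0:
            raise ValueError("size_mb must be positive")
        if self.used_mb + size_mb > self.limit_mb:
            return False  # a real shim would surface an out-of-memory error here
        self.used_mb += size_mb
        return True

    def free(self, size_mb: int) -> None:
        """Return memory to the quota, never dropping below zero."""
        self.used_mb = max(0, self.used_mb - size_mb)


def throttle_sleep_ms(kernel_ms: float, compute_percent: int) -> float:
    """Idle time to add after a kernel so busy / (busy + idle) equals compute_percent."""
    if not 0 < compute_percent <= 100:
        raise ValueError("compute_percent must be in (0, 100]")
    return kernel_ms * (100 - compute_percent) / compute_percent
```

With a 4096 MB quota, a 3000 MB allocation succeeds and a following 2000 MB allocation is refused, all without any hardware partition being created.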

This is reminiscent of how SDN abstracted the network control plane from hardware devices—GPU partitioning is shifting from a hardware capability to a cluster control plane capability. Considering that Huawei shipped 810,000 Ascend 910C cards last year—nearly half of all domestic chips—this capability has significant real-world impact.

Kubernetes v1.34 (August 2025) officially promoted DRA (Dynamic Resource Allocation) to GA, and Red Hat OpenShift 4.21 followed suit. This is a big deal.

The Device Plugin solved “how to connect GPUs to K8s,” but not “how to express complex AI resource requirements.” Device Plugins only know how many cards are on a node, not how much VRAM you need, what topology, or what isolation level.

DRA standardizes device resource declaration, allocation, and management via ResourceClaim and DeviceClass. HAMi-DRA takes a pragmatic approach: it doesn’t require users to change how they declare resources. Instead, it uses a Mutating Webhook to automatically convert existing Device Plugin-style declarations into the DRA model. Legacy systems don’t need to change, but can still leverage new capabilities.
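The conversion idea can be sketched in a few lines. The function below reads Device Plugin-style limits from a container spec and produces a claim-shaped request. Everything here is an assumption for illustration: the `nvidia.com/gpumem` and `nvidia.com/gpucores` keys, the output field names, and the `gpu` class name are hypothetical, and the output is not the real `resource.k8s.io` schema or HAMi-DRA's actual webhook logic.

```python
from typing import Any, Dict

# Extended-resource keys in the Device Plugin style.
# The gpumem/gpucores keys are assumed names for this illustration.
GPU_COUNT = "nvidia.com/gpu"
GPU_MEM_MB = "nvidia.com/gpumem"
GPU_CORES_PCT = "nvidia.com/gpucores"


def to_claim_request(container: Dict[str, Any]) -> Dict[str, Any]:
    """Translate a container's legacy GPU limits into a claim-shaped request.

    The returned dict is a hypothetical shape, not the resource.k8s.io schema.
    """
    limits = container.get("resources", {}).get("limits", {})
    if GPU_COUNT not in limits:
        return {}  # nothing to convert; the Pod is left untouched
    request: Dict[str, Any] = {
        "deviceClass": "gpu",  # hypothetical class name
        "count": int(limits[GPU_COUNT]),
    }
    if GPU_MEM_MB in limits:
        request["memoryMB"] = int(limits[GPU_MEM_MB])
    if GPU_CORES_PCT in limits:
        request["computePercent"] = int(limits[GPU_CORES_PCT])
    return request
```

The user keeps writing the familiar limits block, and the translation happens at admission time, which is what makes the migration path gradual.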

I liken this to what CSI did for storage: it didn’t eliminate vendor differences, but allowed Kubernetes to consume different storage capabilities in a unified way. DRA does the same for AI accelerators—NVIDIA, Ascend, AMD, Vastai cards will never be identical, but the scheduling layer should speak a common language.

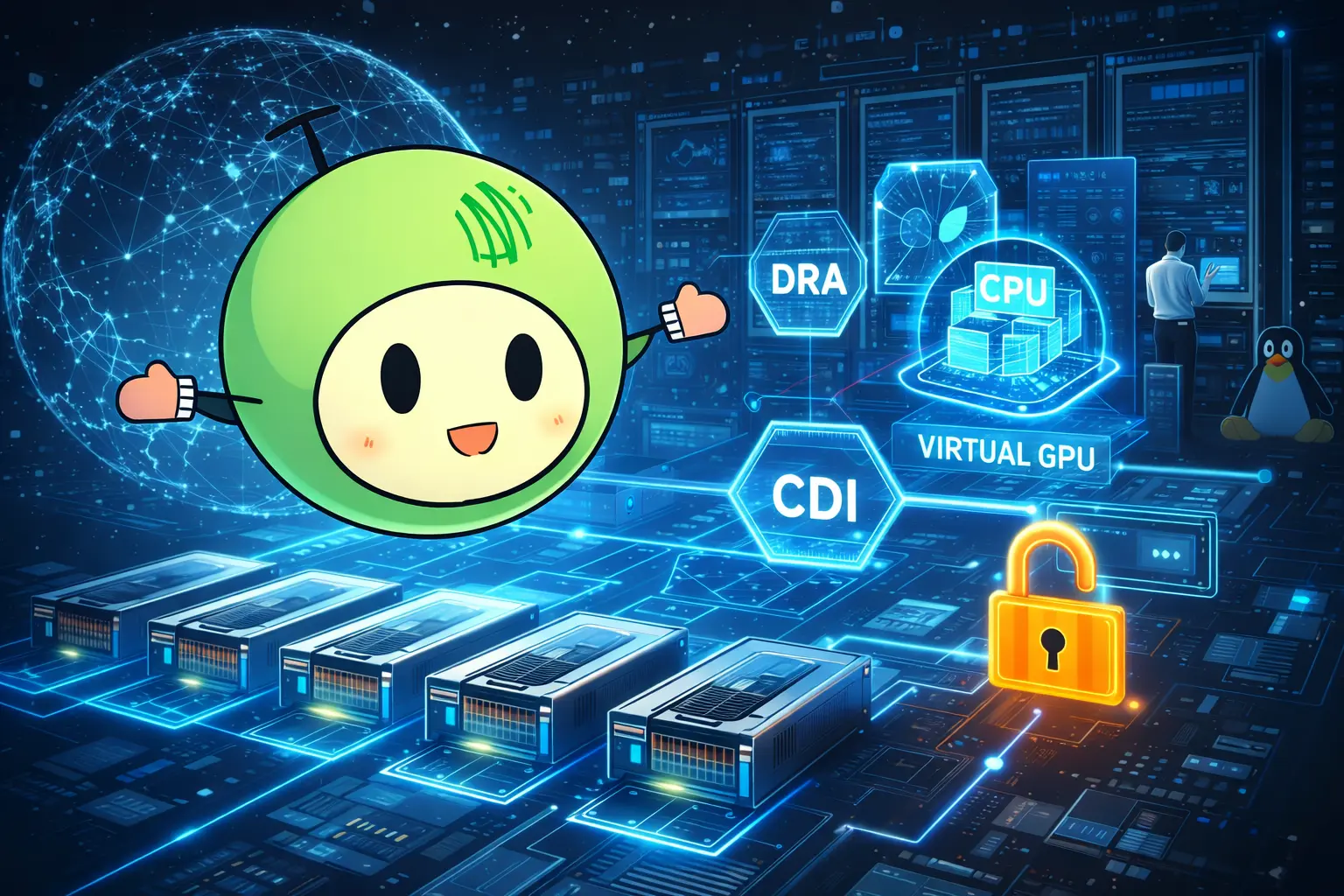

If we look beyond individual features and consider HAMi-core, DRA, CDI, and the scheduler together, they actually correspond to different layers of GPU resource management:

Connecting these layers, from top to bottom, forms the complete Kubernetes GPU Control Plane:

This is a complete control plane path. In v2.9, Volcano vGPU was upgraded to v0.19 with enhanced CDI support. While this may seem like a minor improvement in device injection, it actually completes a critical link in this chain.

The reality for domestic AI clusters: you can’t build infrastructure around just one type of GPU.

Enterprise environments often have NVIDIA, Ascend, Biren, Cambricon, Hygon, Muxi, Kunlunxin, Vastai, and other devices coexisting. Each card has different drivers, runtimes, virtualization capabilities, and monitoring methods. HAMi v2.9 adds support for Vastai, covering more than ten types of heterogeneous compute devices. Mixed training and inference, online and offline workloads, domestic and overseas GPUs, multi-team and multi-tenant resource pools—in these scenarios, unified scheduling is far more important than single-card performance.

GPU sharing will shift from a cost-saving measure to a default requirement. After the explosion of inference workloads, not every workload deserves exclusive access to an entire card. Exclusive allocation will increasingly become a luxury.

DRA is the way forward, but migration will be gradual. The Device Plugin ecosystem is too large to disappear overnight. HAMi-DRA’s compatibility layer shows the project team understands this.

Heterogeneous scheduling will become the core challenge for AI Infra. Whoever can abstract different vendor devices into a unified scheduling language will control the key position in the control plane.

Kubernetes will be reshaped by AI workloads. From scheduling semantics to resource models, AI requires much greater expressiveness than traditional web services. DRA, CDI, topology-aware scheduling—these are not isolated evolutions, but all point to one thing: Kubernetes is evolving from a container orchestrator to the control plane for AI computing.

The significance of HAMi v2.9 is not just in supporting a particular device or partitioning method, but in making one thing clear: the next generation of AI infrastructure competition is not just about model frameworks or GPU counts, but about the control plane.

GPUs are shifting from external devices on nodes to native resources within the Kubernetes control plane. Whoever defines the resource model for the AI era will define the long-term boundaries of AI Infra.

2026-04-03 13:20:28

Kubernetes hasn’t been replaced by AI, but it’s being redefined by it. Anxiety is the prelude to rebirth.

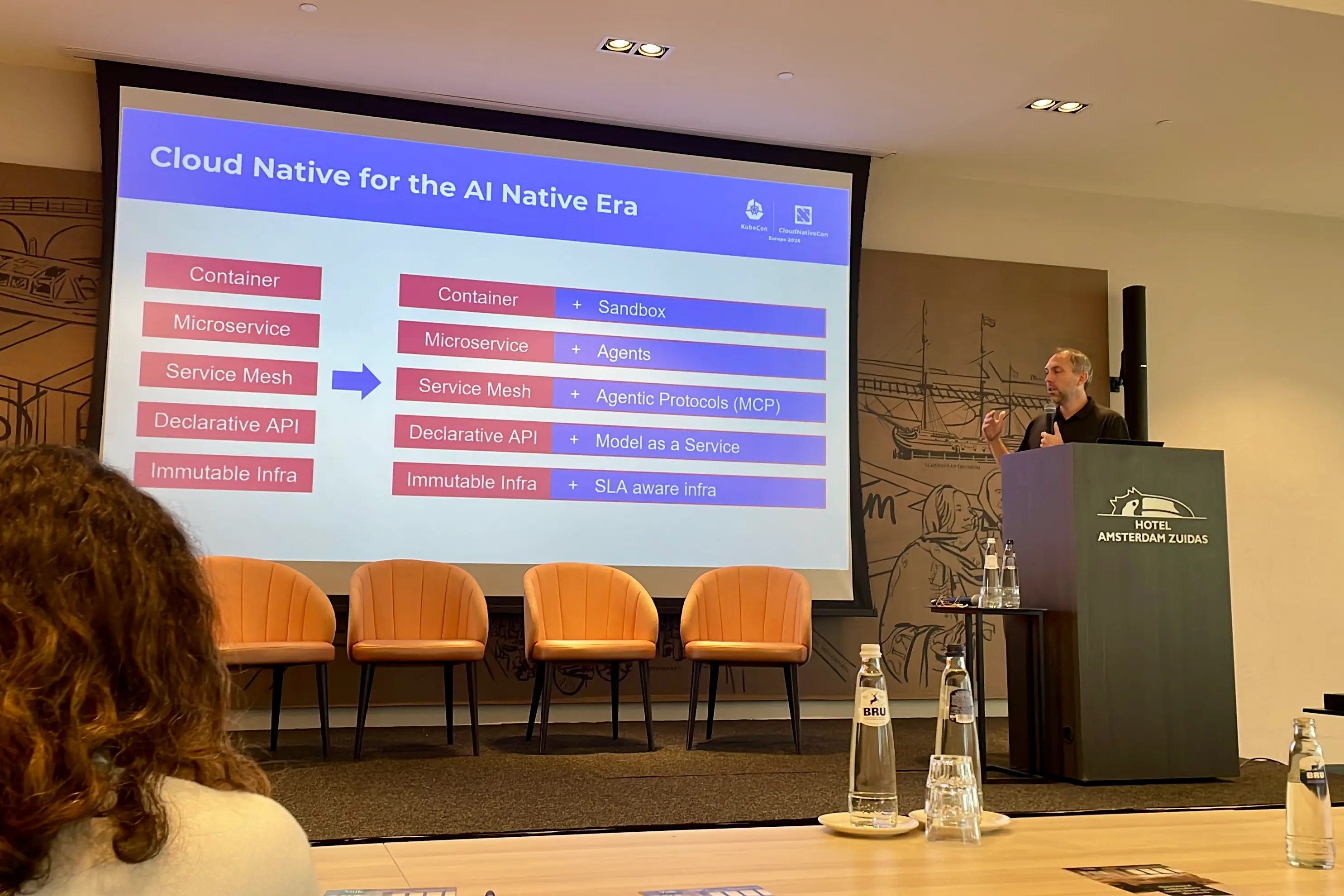

After attending KubeCon EU 2026 in Amsterdam, I’ve been pondering a key question: Kubernetes isn’t obsolete, but it’s no longer “enough”; it hasn’t been replaced by AI, but it’s being redefined by AI.

This was my third time attending KubeCon in Europe. Over the past few years, you can actually see the community’s mindset shift through the event slogans:

2024 Paris: La vie en Cloud Native

→ Cloud Native has become a “way of life,” the default state

2025 London: No slogan, just the 10th anniversary

→ Kubernetes reached a milestone, focusing on retrospection rather than moving forward

2026 Amsterdam: Keep Cloud Native Moving

→ But the question is: where is it moving?

The absence of a slogan in 2025 was a signal in itself:

When an ecosystem starts commemorating the past instead of defining the future, it’s already at an inflection point.

This article doesn’t recap the talks, but instead distills my observations at KubeCon into insights about Kubernetes’ anxiety and rebirth in the AI wave.

The biggest change at KubeCon was that AI has completely replaced traditional cloud native topics. The focus shifted from service optimization and microservices management to how to deploy and manage AI workloads on Kubernetes, especially inference tasks and GPU scheduling.

Kubernetes was once the undisputed core of the cloud native world. With the explosive growth of AI models, the open question is whether it can still serve as a “universal” platform for everything, and that question has become a new source of anxiety.

The AI boom brings real challenges: Can Kubernetes’ “universality” adapt to the complexity of AI workloads?

AI’s popularity has shifted the cloud native spotlight entirely to artificial intelligence. AI coding, OpenClaw, large language models, and generative models have all drawn widespread attention. AI has become the core computing demand in the real world.

This surge in demand raises the question: Can Kubernetes continue to serve as the infrastructure platform for complex tasks? Especially with issues like GPU sharing, inference model scheduling, VRAM allocation, and device attribute selection, is the traditional Kubernetes resource model sufficient?

In the past, Kubernetes handled compute, storage, and networking as foundational infrastructure. But with the rapid development of AI, its “universality” is being challenged. Particularly for inference tasks, Kubernetes’ model appears thin.

OpenStack once aimed to be a complete open-source cloud platform, but ultimately failed to sustain growth due to complexity and a lack of flexibility in adapting to new technologies.

Will Kubernetes follow the same path? I believe Kubernetes has different strengths: as a container and microservices orchestration platform, it’s widely adopted and has strong community and vendor support. It doesn’t try to replace all cloud provider capabilities but serves as an infrastructure control plane to help users manage resources.

However, as AI workloads become mainstream, Kubernetes must find a new position to avoid being replaced by “AI-optimized platforms.”

At KubeCon, NVIDIA announced the donation of the GPU DRA (Dynamic Resource Allocation) driver to the CNCF, marking the upstreaming of GPU resource management. GPU sharing and scheduling have become urgent issues for Kubernetes.

Traditionally, Kubernetes relied on the Device Plugin model to schedule GPUs, only supporting allocation by device count (e.g., nvidia.com/gpu: 1). But for AI inference tasks, more information is needed for resource scheduling, such as VRAM size, GPU topology, and sharing strategies. NVIDIA DRA makes GPU resource management more flexible and intelligent, gradually easing the “GPU resource crunch” in AI workloads.

This shift means Kubernetes is no longer just a “container orchestration platform,” but is becoming the infrastructure layer for AI-specific resource scheduling.

Against this backdrop, both the community and industry are exploring finer-grained GPU resource abstraction and scheduling mechanisms. For example, the open-source project HAMi is building a GPU resource management layer for AI workloads on top of Kubernetes, supporting GPU sharing, VRAM-level allocation, and heterogeneous device scheduling.

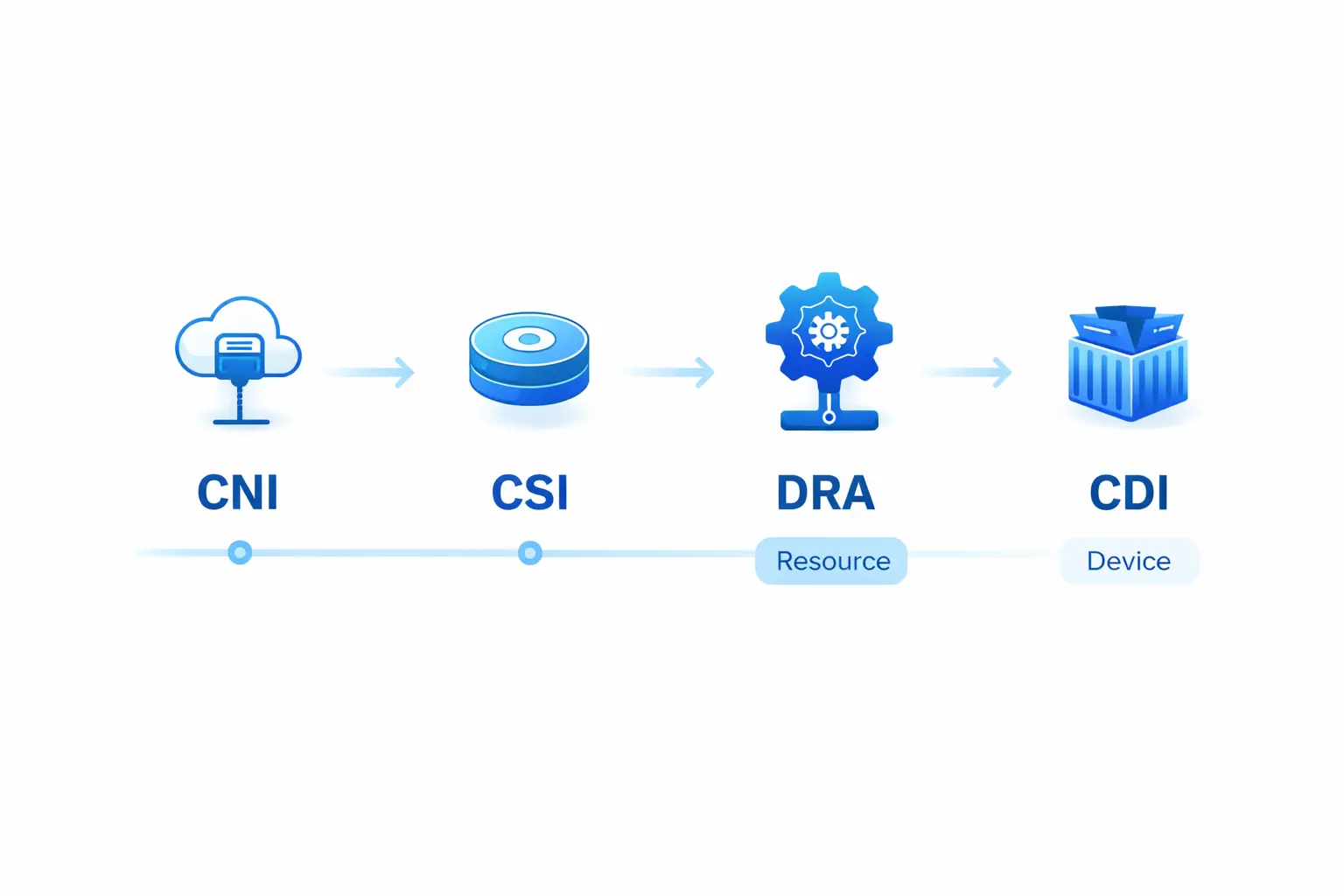

These efforts are not about replacing Kubernetes, but about filling the resource model gaps for the AI era. In the long run, this layer may evolve into a “GPU Abstraction Layer” similar to CNI/CSI, becoming a key part of AI-native infrastructure.

A common post-event summary was: Many PoCs, but “everyday production deployments” are still rare. Pulumi summarized it as:

lots of working demos, very few production setups people trust

This shows that while many AI workload solutions succeed in technical demos, the transition from experimentation to production remains difficult. Whether it’s GPU resource sharing or inference request scheduling, whether Kubernetes as the foundation can support this transformation is still an open question.

Another major event at this KubeCon was llm-d being contributed to the CNCF as a Sandbox project.

If GPU DRA represents the upstreaming of device resource models, then llm-d represents another critical evolution: Distributed LLM inference capabilities are moving from proprietary engineering implementations to standardized, community-driven collaboration in cloud native.

This is significant not just because it’s another open-source project, but because it shows that Kubernetes’ challenges in the AI era are no longer just about “how to schedule GPUs,” but also “how to host inference systems themselves.” As prefill/decode separation, request routing, KV cache management, and throughput optimization move into the infrastructure layer, Kubernetes’ boundaries are being redefined.

Traditionally, the Kubernetes scheduler focused on Pod scheduling. But in AI inference scenarios, scheduling is not just about picking a node—it’s about selecting the most suitable inference instance based on request characteristics. Factors like model state, request queue depth, and cache hit rate all need to be considered. This process is increasingly managed by inference runtimes, forming new “request-level scheduling” systems.
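To show how request-level scheduling differs from picking a node, here is a minimal routing sketch. It filters out instances that do not have the model loaded, then trades cache hit rate against queue depth. The `Replica` fields, the linear score, and the `queue_weight` default are my own assumptions, not the routing logic of llm-d, vLLM, or any other project.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Replica:
    name: str
    queue_depth: int        # requests currently waiting on this instance
    cache_hit_rate: float   # estimated KV cache hit rate for this request, 0..1
    model_loaded: bool      # whether the requested model is resident


def pick_replica(replicas: List[Replica], queue_weight: float = 0.1) -> Replica:
    """Choose the instance with the best cache-versus-queue trade-off."""
    candidates = [r for r in replicas if r.model_loaded]
    if not candidates:
        raise LookupError("no replica has the model loaded")
    # Higher cache hit rate is better; each queued request costs queue_weight.
    return max(candidates, key=lambda r: r.cache_hit_rate - queue_weight * r.queue_depth)
```

None of these inputs exist in the Pod scheduler's view of the world, which is why this decision ends up inside the inference runtime or a gateway in front of it.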

This leads to an overlap between the Kubernetes scheduler and inference systems, forcing Kubernetes to rethink its role: should it keep expanding, or collaborate with inference systems?

At the AI Native Summit, the real needs for AI-native infrastructure were especially clear. The focus was no longer “can it run on Kubernetes,” but how to make AI workloads routine, stable, and production-ready on Kubernetes.

The core challenge is delivery. Unlike traditional apps, AI model weights are often huge—tens of GB or even TB—making model delivery and data management extremely complex. Traditional container delivery systems (like image layers) struggle with such massive data and complex versioning.

A key direction for Kubernetes is to standardize model weight and data delivery, using ImageVolume and OCI artifacts to solve AI model delivery and version management on Kubernetes. This not only reduces “cold start” times but also provides infrastructure support for multi-tenancy and compliance.

Kubernetes won’t be replaced by AI, but it’s being reshaped as the core of infrastructure. This anxiety is the force driving its evolution—it’s moving from a “general-purpose infrastructure platform” to an “AI-powered multifunctional base”. Some even call it the AI operating system.

In the future, Kubernetes’ core competitiveness will no longer be just container management, but how effectively it can schedule and manage AI workloads, and how it can make AI a routine part of operations. This was my biggest takeaway from the AI Native Summit and KubeCon, and it’s what I look forward to in the Kubernetes ecosystem over the next few years.

2026-03-23 04:41:19

Today was my first day at KubeCon Europe 2026, and one impression stands out: the world is big, but this community is really small.

At the Maintainer Summit, I met many familiar faces—

Colleagues from Ant Group, friends from Tetrate, and some people I’ve known for nearly a decade. Together, we’ve journeyed from the early days of Kubernetes, Service Mesh, and cloud native infrastructure to today.

In a sense, this generation has fully experienced:

This isn’t about “new people entering the field,” but rather—

The same group stepping into a new technology cycle.

If you ask:

What is the Kubernetes community most concerned about right now?

Today’s answer is very clear:

👉 How to run AI workloads better on Kubernetes

Many topics at the Maintainer Summit revolved around:

In other words:

Kubernetes hasn’t been replaced by AI; it’s actively “absorbing” AI.

Today, I had an in-depth discussion with CNCF TOC, Red Hat, and the vLLM community.

The core question was:

How should GPUs be “platformized”?

Some consensus is already clear:

At the Maintainer Summit in Amsterdam, we had deep discussions with CNCF TOC, Red Hat, and the vLLM community about GPU resource management and LLM Serving integration in Kubernetes scenarios, and explored potential collaboration between vLLM and HAMi.

Behind this is a major paradigm shift:

| Past | Now |

|---|---|

| GPU = Node resource | GPU = Infrastructure layer |

| Exclusive use | Multi-tenant sharing |

| Static binding | Dynamic scheduling |

| Managed within frameworks | Unified management at the platform layer |

This is exactly what we’ve been working on in HAMi.

Another interesting change today:

HAMi is no longer just a “community project”—it’s becoming:

A reference implementation (reference pattern) for AI Infra

This is reflected in several ways:

Especially in conversations with Red Hat and vLLM, a clear trend emerged:

GPU resource management and LLM serving are becoming coupled

That is:

A new “interface layer” is gradually forming.

This is a direction worth betting on.

At the same time, I have a somewhat “counterintuitive” observation:

We haven’t yet seen a large wave of AI Infra (K8s-focused) startups.

Most companies I saw today:

But those truly focused on:

“Making AI workloads run better on Kubernetes”

There are actually not many startups at this layer.

This could mean two things:

Currently, most activity is at:

But not at:

Because at its core, this is:

The intersection of Cloud Native × GPU × AI workload

It’s not just “wrapping AI,” but a fundamental re-architecture at the infrastructure level.

If we break down the AI technology stack:

Agent / Application

↓

LLM Serving (vLLM, etc.)

↓

AI Runtime / Scheduling

↓

GPU Resource Layer

↓

Hardware

Most innovation today is concentrated in:

But the real long-term moat lies in:

And Kubernetes is very likely to remain:

The default platform for this middle layer

Today’s takeaway:

Kubernetes is not obsolete; it’s being redefined.

And our generation is shifting from:

“Cloud Native Builders”

to:

“AI Infrastructure Builders”

More to come tomorrow.

2026-03-17 08:55:52

This redesign is more than a style update—it’s a step toward clearer technical communication and better user experience. Try the new HAMi website at https://project-hami.io and submit issues here.

Over the past two months, I conducted a thorough refactor of the documentation website (see GitHub). Externally, it looks like a “visual redesign”, but from the perspective of community maintainers and content builders, it’s a comprehensive upgrade of information architecture, content system, and frontend experience.

This article aims to systematically explain three things: why we did this refactor, what exactly changed, and what these changes mean for the HAMi community.

HAMi is a CNCF-hosted open source project initiated and contributed by Dynamia, with growing influence in GPU virtualization, heterogeneous compute scheduling, and AI infrastructure. The community content is expanding, and user types are becoming more diverse: from first-time visitors to engineers and enterprise users seeking deployment docs, architecture diagrams, case studies, and ecosystem information.

The original site was functional, but as content grew, several issues became apparent:

For a fast-evolving open source community, the website is not just a “place for docs”, but the public interface of the community. It needs to serve as project introduction, knowledge gateway, adoption proof, community connector, and brand expression.

So the goal of this refactor was clear: not just superficial beautification, but to truly upgrade the website into HAMi’s systematic community entry point.

This update was not a single-point change, but a series of systematic improvements.

The most obvious change is the homepage.

We redesigned the homepage structure, moving away from simply stacking content blocks, and instead organizing the page around the main narrative: “Project Positioning → Core Capabilities → Ecosystem Entry → Content Accumulation → Community Trust”.

Specifically, the homepage received several key upgrades:

These changes include Hero animations and atmosphere layers, research/story sections, new resource entry sections, refreshed CTAs, unified background design, and ongoing reduction of visual noise. Together, they solve a core problem: enabling visitors to understand what HAMi is and why it’s worth exploring further within seconds.

Key diagrams were redrawn for clearer technical communication. This helps users grasp HAMi’s role in AI infrastructure.

For HAMi, this change is critical. The community faces not just a single feature, but a set of system-level challenges involving Kubernetes, schedulers, GPU Operators, heterogeneous devices, and enterprise platforms. Improved diagrams make the website a better technical entry point.

Another important direction was strengthening the “community proof” layer.

Many open source project sites fall into the trap of having complete docs, but users can’t tell if the project is truly adopted, if the community is active, or if the ecosystem is expanding. The HAMi website redesign consciously addresses this.

Blog cards, lists, and metadata were unified for easier reading and sharing. Blogs are now a core communication layer.

Navigation, card layouts, footer, and search were improved for smoother mobile browsing.

Footer layout was enhanced for better navigation and credibility. Built-in search replaced unreliable external solutions, improving content accessibility.

From screenshots, it looks like “the website looks better”. But from a community-building perspective, its significance is deeper.

First, HAMi’s external expression is more systematic.

The website is no longer just a collection of scattered pages, but is forming a complete narrative chain: users can understand project value from the homepage, capability details from docs, practical paths from blogs, and community impact from ecosystem modules.

Second, community content assets are reorganized.

Previously, valuable articles, diagrams, and explanations existed but were hard to find. Now, through homepage sections, navigation, and search refactor, these contents are more effectively connected.

Third, HAMi’s community image is more mature.

A mature open source project needs not just an active code repository, but clear, stable, and sustainable website expression. Structure, style, and usability are part of the community’s engineering capability.

Fourth, this lays the foundation for expanding case studies, adopters, contributors, and ecosystem content.

With the framework sorted, adding more case studies, collaboration entry points, or showcasing more adopters and partners will be more natural and easier for users to understand.

In summary, I believe this refactor got three things right:

These may not be as flashy as launching a new feature, but they directly impact content dissemination, user comprehension, and the project’s long-term image.

For infrastructure projects like HAMi, technical capability is fundamental, but clearly communicating, organizing, and continuously presenting that capability is also a form of infrastructure.

This HAMi documentation and website refactor is essentially an upgrade to the community’s “expression layer” infrastructure.

It improves visual and reading experience, reorganizes content, homepage narrative, search paths, mobile access, and community signal display. Homepage redesign, architecture diagram redraw, unified blog style, mobile optimization, enhanced footer, and switching from external to built-in search together constitute a true “refactor”.

Externally, it helps more people quickly understand HAMi; internally, it provides a stable platform for the community to accumulate case studies, expand the ecosystem, and serve adopters and contributors.

The website is not an accessory to the open source community, but part of its long-term influence. HAMi’s redesign is about taking this seriously.


2026-03-15 11:34:06

AI is quietly reshaping the infrastructure landscape, and GTC 2026 may become a key node in this transformation.

Next week, one of the most important technology conferences in the AI industry, NVIDIA GTC 2026, will be held in San Jose, USA.

For many people, GTC is just a GPU technology conference. But if you follow the development of the AI industry over the past few years, you’ll find an interesting phenomenon:

Many important narratives about AI infrastructure are gradually taking shape at GTC.

From CUDA, DGX, to AI Factory, and most recently Jensen Huang’s proposed AI Five-Layer Cake, NVIDIA is constantly attempting to redefine the computing infrastructure of the AI era.

This is why many people call GTC:

AI’s “Woodstock.”

This year’s GTC (March 16-19) is expected to cover various levels of the AI stack, including:

According to NVIDIA’s official blog, this year’s keynote will focus on the complete AI stack from chips to applications.

If we put these signals together, we can actually see a larger trend:

AI is transforming from an “applied technology” into “infrastructure.”

From a longer time scale, the technological revolutions in human history are essentially infrastructure revolutions.

We usually divide the industrial revolutions into four stages.

In the table below, you can see the infrastructure corresponding to each industrial revolution:

| Industrial Revolution | Infrastructure |

|---|---|

| Steam Revolution | Steam Engine |

| Electrical Revolution | Power Grid |

| Digital Revolution | Computer |

| Internet Era | Network |

The steam engine allowed humans to utilize mechanical power on a large scale for the first time. Production no longer relied on human or animal power, but on machines.

Electricity changed not only the source of power, but also the organization of production. Assembly lines, large-scale manufacturing, and modern industrial systems are all built on the foundation of the power grid.

Computers allowed information to be processed digitally. Software became a production tool.

The internet connects all computers together. Cloud computing transforms computing resources into infrastructure. And AI gives machines a certain degree of “cognitive ability.”

If we observe these industrial revolutions, we discover a pattern:

Each industrial revolution produces a new General Purpose Infrastructure.

And AI is likely to become the next-generation infrastructure.

NVIDIA even directly stated in a recent article:

AI is essential infrastructure, like electricity and the internet.

In other words:

AI is no longer just an applied technology, but a new factor of production.

Recently, Jensen Huang proposed a very interesting concept: AI Five-Layer Cake.

AI is broken down into five layers:

This model actually illustrates one thing:

AI is a complete industrial system.

Jensen Huang even described AI at Davos as:

“One of the largest-scale infrastructure constructions in human history.”

This year’s GTC is expected to release several important directions.

The focus of AI in the past was training. But the main load of AI in the future is likely to be Inference.

Analysts expect that by 2030, 75% of computing demand in the AI data center market will come from inference.

The past AI model was:

User → Model → Answer

The Agent model is more complex:

User → Agent → Tools → Model → Action

The flowchart below shows the main interaction paths in the Agent model:

AI is no longer just answering questions, but executing tasks.

Recent media reports suggest that NVIDIA may launch a new Agent platform: NemoClaw, aimed at helping enterprises deploy AI Agents.

If this project is truly released, it means NVIDIA’s stack will become the following structure:

This is actually a complete AI stack.

The emergence of Agents brings new computing workload issues.

Past AI workloads were mainly:

But Agents bring a third type of workload:

Agent Workloads

The figure below shows the diverse workload types related to Agents:

This type of workload is highly fragmented. GPUs are no longer occupied for long stretches; instead, they face many small requests. This poses new challenges for infrastructure.

For the past few years, I’ve been thinking about a question:

What is AI-native infrastructure?

It is clearly not just “Kubernetes with GPUs.” I’m more inclined to believe it needs to possess several characteristics.

In the cloud computing era, the CPU was the core resource. In the AI era, the GPU is the core resource.

Real-world AI chips are not limited to NVIDIA:

Future AI infrastructure must be able to manage heterogeneous computing.

GPUs are very expensive. If they cannot be shared, utilization stays low. This is why GPU virtualization and slicing are becoming increasingly important.

AI scheduling includes not only traditional CPU and Memory, but also:

GPU

VRAM

Topology

Bandwidth

Combining the above trends, the future AI stack may present the following structure:

This structure is very close to NVIDIA’s Five-Layer Cake.

Combining signals from GTC, AI Factory, Agents, and AI Five-Layer Cake, we can see a very obvious trend:

AI is rewriting computing infrastructure.

Future competition may not just be “who has the best model,” but:

Who has the best AI Infrastructure.

Just like the past few decades:

The future may be:

AI Infrastructure determines intelligence capability.

If we stretch the time scale a bit longer, we may be in a new historical stage.

AI is no longer just a technological tool. It is becoming new infrastructure.

Just like:

And AI-native infrastructure is likely to become one of the most important technology directions for the next decade.

2026-02-13 22:32:46

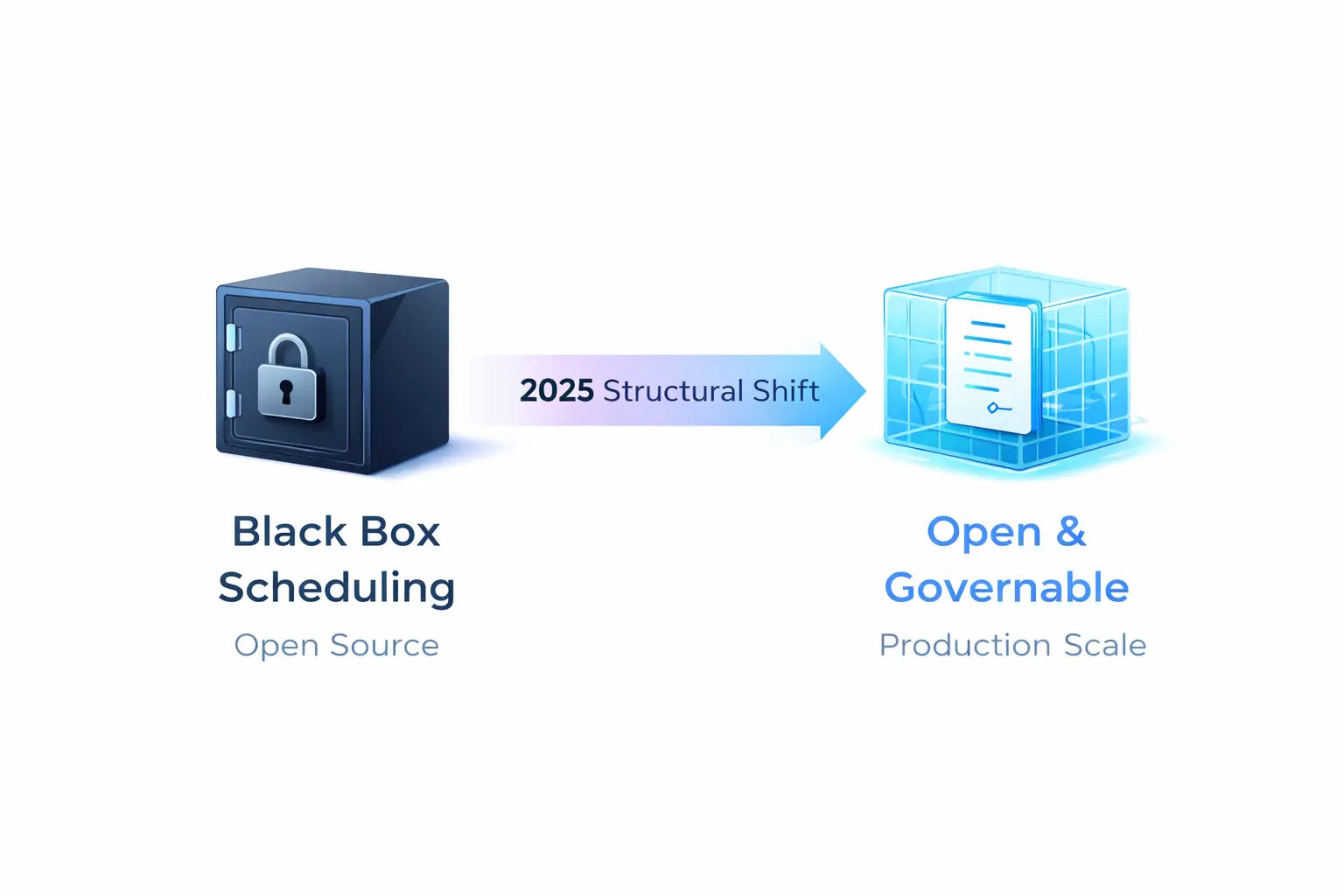

The future of GPU scheduling isn’t about whose implementation is more “black-box”—it’s about who can standardize device resource contracts into something governable.

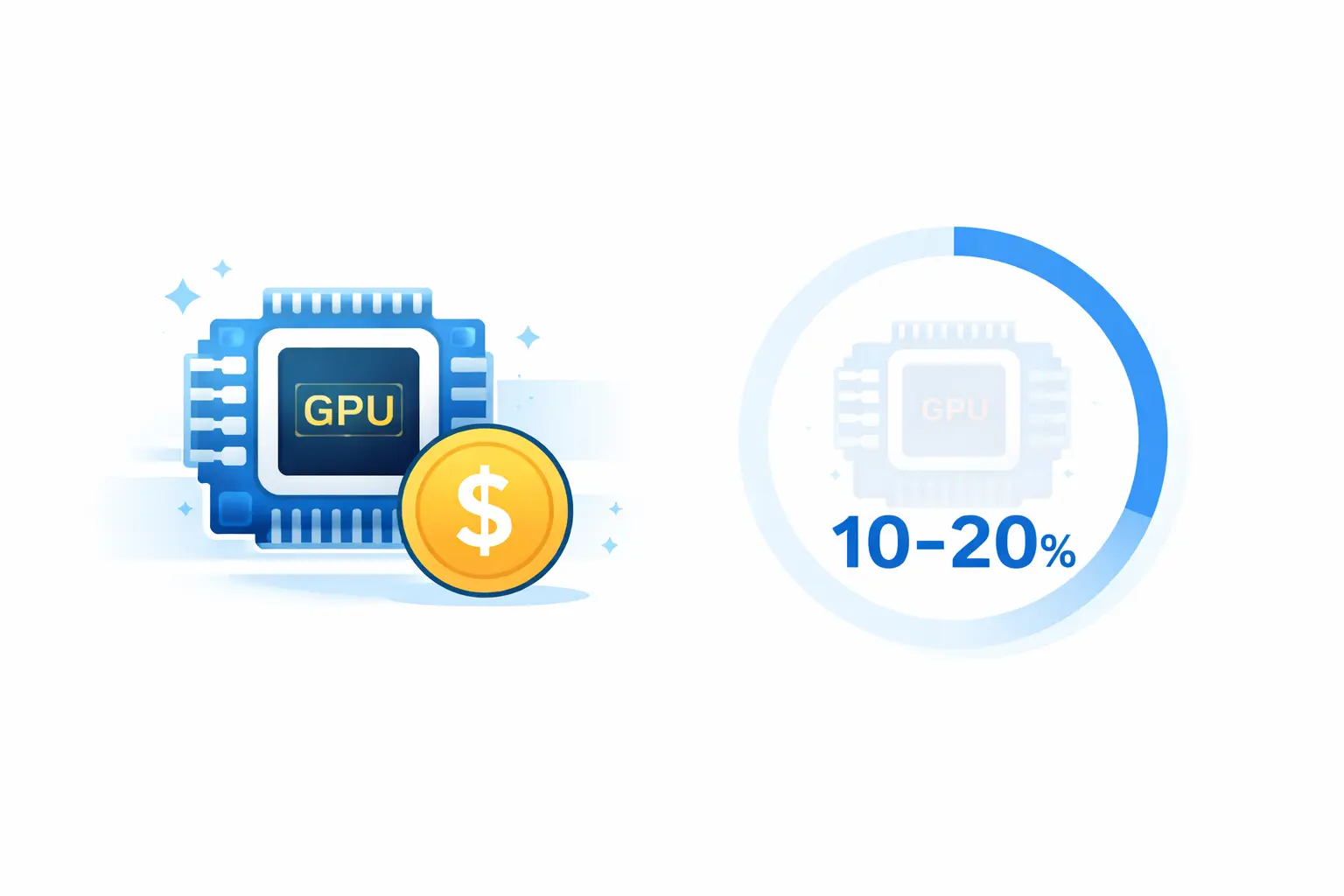

Have you ever wondered: why are GPUs so expensive, yet overall utilization often hovers around 10–20%?

This isn’t a problem you solve with “better scheduling algorithms.” It’s a structural problem: GPU scheduling is undergoing a shift from “proprietary implementation” to “open scheduling,” similar to how networking converged on CNI and storage converged on CSI.

In the HAMi 2025 Annual Review, we noted: “HAMi 2025 is no longer just about GPU sharing tools—it’s a more structural signal: GPUs are moving toward open scheduling.”

In 2025, the signals of this shift became visible: Kubernetes Dynamic Resource Allocation (DRA) graduated to GA and became enabled by default, NVIDIA GPU Operator started defaulting to CDI (Container Device Interface), and HAMi’s production-grade case studies under CNCF began moving “GPU sharing” from an experimental capability to routine operations.

This post analyzes this structural shift from an AI Native Infrastructure perspective, and what it means for Dynamia and the industry.

In multi-cloud and hybrid cloud environments, GPU model diversity significantly amplifies operational costs. One large internet company’s platform spans H200/H100/A100/V100/4090 GPUs across five clusters. If you can only allocate “whole GPUs,” resource misalignment becomes inevitable.

“Open scheduling” isn’t a slogan—it’s a set of engineering contracts being solidified into the mainstream stack.

Before: GPUs were extended resources. The scheduler didn’t understand if they represented memory, compute, or device types.

Now: Kubernetes DRA provides objects like DeviceClass, ResourceClaim, and ResourceSlice. This lets drivers and cluster administrators define device categories and selection logic (including CEL-based selectors), while Kubernetes handles the full loop: match devices → bind claims → place Pods onto nodes with access to allocated devices.
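The "match devices, bind claims, place Pods" loop can be pictured with a small sketch. The `Device` and `Claim` classes below are simplified stand-ins I made up for illustration; they are not the real ResourceSlice or ResourceClaim types, and the Python callable only plays the role that a CEL selector plays in DRA.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Device:
    name: str
    node: str
    device_class: str
    attributes: Dict[str, int]
    allocated_to: Optional[str] = None  # name of the claim holding this device


@dataclass
class Claim:
    name: str
    device_class: str
    selector: Callable[[Device], bool]  # stands in for a CEL expression
    bound_device: Optional[Device] = None


def allocate(claim: Claim, devices: List[Device]) -> Optional[str]:
    """Match a free device, bind the claim to it, and return the node for the Pod."""
    for dev in devices:
        if dev.allocated_to is not None:
            continue  # already bound to another claim
        if dev.device_class != claim.device_class:
            continue
        if not claim.selector(dev):
            continue
        dev.allocated_to = claim.name
        claim.bound_device = dev
        return dev.node
    return None  # no match: the Pod stays pending
```

A claim that asks for at least 40 GB, for example, would use `lambda d: d.attributes["memory_gb"] >= 40` as its selector and land on whichever node exposes such a device.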

Even more importantly, with Kubernetes 1.34 the core APIs in the resource.k8s.io group graduated to GA, DRA became stable and enabled by default, and the community committed to avoiding breaking changes going forward. This means the ecosystem can invest with confidence in a stable, standard API.

Before: Device injection relied on vendor-specific hooks and runtime class patterns.

Now: The Container Device Interface (CDI) abstracts device injection into an open specification. NVIDIA’s Container Toolkit explicitly describes CDI as an open specification for container runtimes, and NVIDIA GPU Operator 25.10.0 defaults to enabling CDI on install/upgrade—directly leveraging runtime-native CDI support (containerd, CRI-O, etc.) for GPU injection.

This means “devices into containers” is also moving toward replaceable, standardized interfaces.
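To make the idea concrete, here is a minimal sketch of a CDI spec file of the kind a runtime reads to inject a device. The vendor name, device name, device node path, and environment variable are all hypothetical; real specs are generated by the vendor's tooling and are considerably longer.

```yaml
# Hypothetical CDI spec; a runtime with CDI support would resolve the
# fully qualified name "vendor.example.com/gpu=gpu0" against this file.
cdiVersion: "0.6.0"
kind: vendor.example.com/gpu
devices:
- name: gpu0
  containerEdits:
    # Device nodes to expose inside the container (placeholder path).
    deviceNodes:
    - path: /dev/vendor-gpu0
    # Environment variables to set in the container (placeholder).
    env:
    - EXAMPLE_VISIBLE_DEVICES=0
```

Because the edits are declared as data rather than performed by a vendor-specific hook, any CDI-aware runtime can apply them.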

On this standardization path, HAMi’s role needs redefinition: it’s not about replacing Kubernetes—it’s about turning GPU virtualization and slicing into a declarative, schedulable, governable data plane.

HAMi’s core contribution expands the allocatable unit from “whole GPU integers” to finer-grained shares (memory and compute), forming a complete allocation chain:

This transforms “sharing” from ad-hoc “it runs” experimentation into an engineering capability that can be declared in YAML, scheduled by policy, and validated by metrics.
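A minimal sketch of what such a declaration can look like, assuming HAMi's default NVIDIA resource names (`nvidia.com/gpumem` in MiB and `nvidia.com/gpucores` as a percentage of one card). The Pod name and image are placeholders, and the resource names can be changed per deployment, so check them against your installation.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: small-inference            # placeholder name
spec:
  containers:
  - name: app
    image: registry.example.com/inference:latest  # placeholder image
    resources:
      limits:
        nvidia.com/gpu: 1          # one vGPU slice, not a whole card
        nvidia.com/gpumem: 4096    # device memory for the slice, in MiB
        nvidia.com/gpucores: 30    # share of the card's compute, in percent
```

Compared with a bare `nvidia.com/gpu: 1`, the request now states how much memory and compute the workload needs, which is what lets several small models share one card.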

HAMi’s scheduling doesn’t replace Kubernetes—it uses a Scheduler Extender pattern to let the native scheduler understand vGPU resource models:

This architecture positions HAMi naturally as an execution layer under higher-level “AI control planes” (queuing, quotas, priorities)—working alongside Volcano, Kueue, Koordinator, and others.
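The extender pattern itself is standard kube-scheduler configuration. The fragment below is a sketch of that pattern, not HAMi's shipped configuration: the URL, timeout, and resource list are illustrative assumptions.

```yaml
apiVersion: kubescheduler.config.k8s.io/v1
kind: KubeSchedulerConfiguration
extenders:
- urlPrefix: "https://127.0.0.1:443"   # assumed address of the extender service
  filterVerb: filter                   # extender filters nodes that cannot fit the vGPU request
  bindVerb: bind                       # extender performs the final bind
  enableHTTPS: true
  nodeCacheCapable: true
  weight: 1
  httpTimeout: 30s
  managedResources:
  # Resources the extender is responsible for; the native scheduler
  # skips its own fit check for them.
  - name: nvidia.com/gpu
    ignoredByScheduler: true
  - name: nvidia.com/gpumem
    ignoredByScheduler: true
  - name: nvidia.com/gpucores
    ignoredByScheduler: true
```

The native scheduler keeps handling CPU, memory, affinity, and the rest; only the listed device resources are delegated, which is why the approach composes with queueing and quota layers above it.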

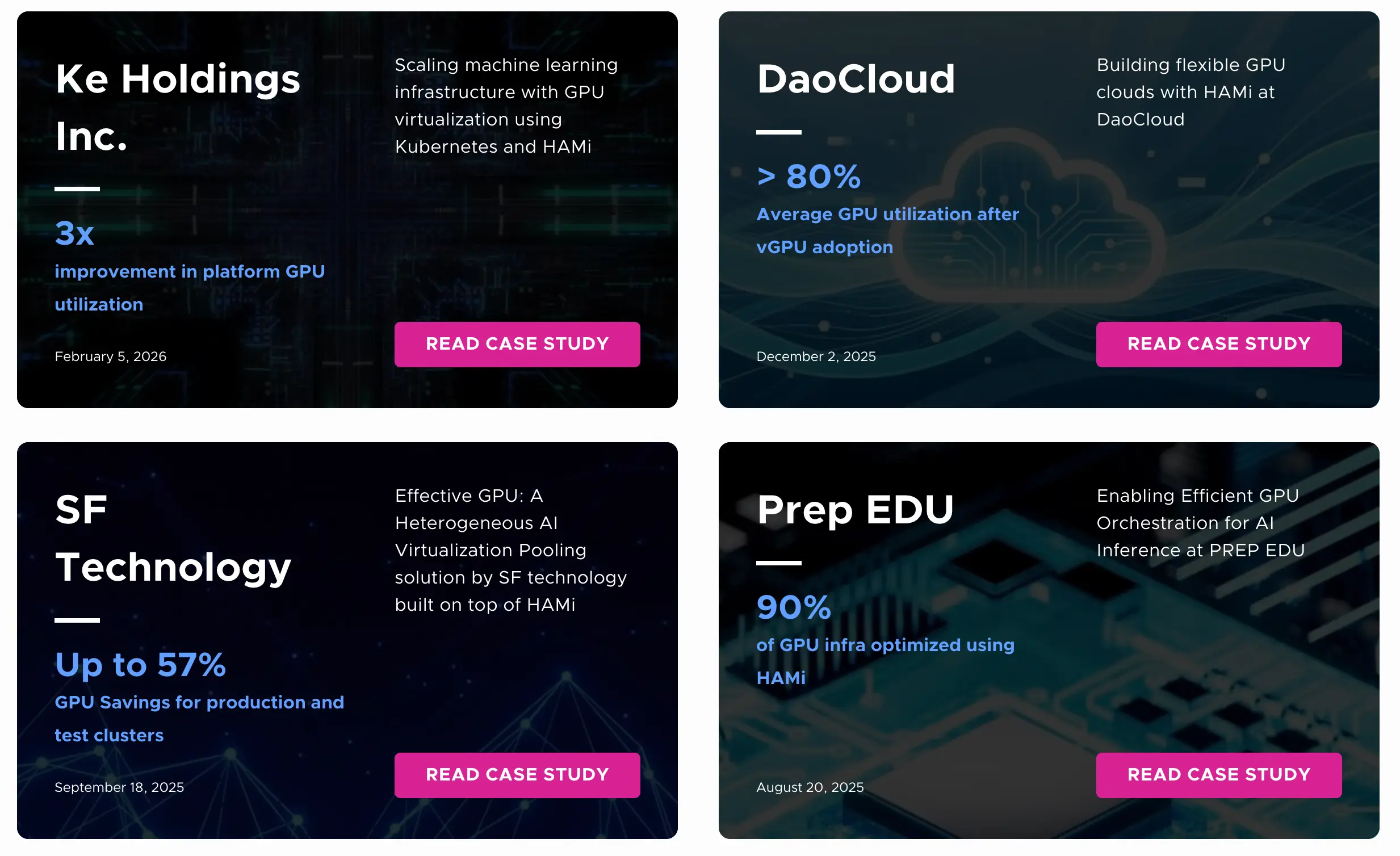

CNCF public case studies provide concrete answers: in a hybrid, multi-cloud platform built on Kubernetes and HAMi, 10,000+ Pods run concurrently, and GPU utilization improves from 13% to 37% (nearly 3×).

Here are highlights from several cases:

These cases demonstrate a consistent pattern: GPU virtualization becomes economically meaningful only when it participates in a governable contract—where utilization, isolation, and policy can be expressed, measured, and improved over time.

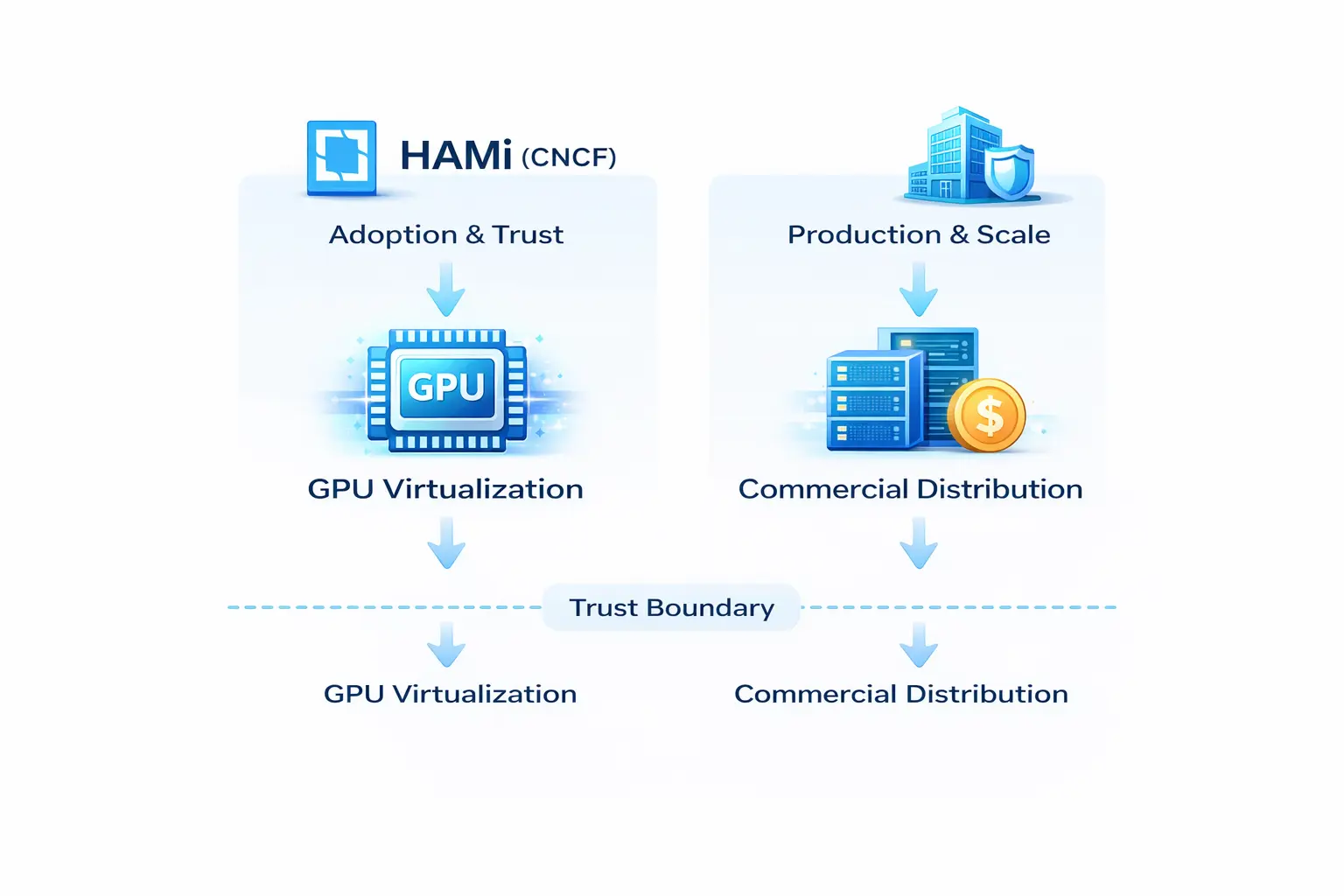

From Dynamia’s perspective (and from mine as VP of Open Source Ecosystem), the strategic value of HAMi becomes clear:

This boundary is the foundation for long-term trust—project and company offerings remain separate, with commercial distributions and services built on the open source project.

The internal alignment memo recommends a bilingual approach:

First layer: Lead globally with “GPU virtualization / sharing / utilization.” Chinese messaging can directly use “GPU virtualization and heterogeneous scheduling,” but the English first layer should avoid “heterogeneous” as a headline.

Second layer: When users discuss mixed GPUs or workload diversity, introduce “heterogeneous” to confirm capability boundaries—never as the opening hook

Core anchor: Maintain “HAMi (project and community) ≠ company products” as the non-negotiable baseline for long-term positioning

DaoCloud’s case study already set vendor-agnostic and CNCF toolchain compatibility as hard constraints, framing vendor dependency reduction as a business and operational benefit—not just a technical detail. Project-HAMi’s official documentation lists “avoid vendor lock” as a core value proposition.

In this context, the right commercialization landing isn’t “closed-source scheduling”—it’s productizing capabilities around real enterprise complexity:

My strong judgment: over the next 2–3 years, GPU scheduling competition will shift from “whose implementation is more black-box” to “whose contract is more open.”

The reasons are practical:

These signals suggest that heterogeneity will grow: mixed accelerators, mixed clouds, mixed workload types.

Low-latency inference tiers (beyond just GPUs) will force resource scheduling toward “multi-accelerator, multi-layer cache, multi-class node” architectural design—scheduling must inherently be heterogeneous.

In this world, “open scheduling” isn’t idealism; it’s risk management. The sustainable path for AI Native Infrastructure looks like a “control plane + data plane” combination built around DRA, CDI, and other solidifying open interfaces: one that is pluggable, governable across tenants, and able to co-evolve with the ecosystem.

The next battleground isn’t “whose scheduling is smarter”—it’s “who can standardize device resource contracts into something governable.”

When you place HAMi 2025 back in the broader AI Native Infrastructure context, it’s no longer just the year of “GPU sharing tools”—it’s a more structural signal: GPUs are moving toward open scheduling.

The driving forces come from both ends:

For Dynamia, HAMi’s significance has gone beyond “GPU sharing tool”: it turns GPU virtualization and slicing into a declarative, schedulable, measurable data plane, letting queues, quotas, priorities, and multi-tenancy actually close the governance loop.